Igor Babuschkin

@ibab

Prev co-founder @xAI, Research & Engineering

What the SpaceX–Anthropic Deal Means

Two weeks ago, we published a note laying out what GPT-5.5's release implied. The conclusion was simple: whoever secures compute first, in greater volume, and with greater reliability ultimately takes the win. With OpenAI's 30GW roadmap dwarfing Anthropic's 7–8GW, we closed by arguing that the structural advantage on compute sat with OpenAI.

Less than a fortnight later, that conclusion is being tested. On May 6, Anthropic signed a single-tenant lease for the entirety of Colossus 1 with SpaceXAI, the infrastructure subsidiary that consolidates Elon Musk's xAI and SpaceX. The asset carries more than 220,000 GPUs and 300MW of power, and crucially, is scheduled to come online within this month. It served as the capstone of Anthropic's April blitz, which added 13.8GW of cumulative capacity over the span of a single month. On headline numbers alone, OpenAI took more than a year to stack 18GW; Anthropic has put 13.8GW in the ground in thirty days.

The takeaways break down into three.

First, the compute pecking order has been redrawn again. Anthropic has now swept up the AWS expansion (5GW, with $100B+ in spend commitments over a decade), Google + Broadcom (3.5GW of TPU), Google Cloud (5GW alongside a $40B investment), and now SpaceXAI's Colossus 1 (0.3GW). Cumulative committed capacity, inclusive of pre-April allocations, sits at 14.8GW. This is still only half of OpenAI's 2030 target of 30GW, but the fact that the SpaceX lease will be live inside a month makes "deliverability" a qualitatively different proposition.

Second, Elon Musk is the plaintiff in an active lawsuit against OpenAI and, at the same time, the supplier handing 220,000+ GPUs and 300MW of power, in one block, to OpenAI's most formidable competitor. The timing matters: the deal was struck in the middle of the Musk–Altman trial. We read this as a deliberate pincer with OpenAI in the middle. In the courtroom, Musk works to dismantle the moral legitimacy of OpenAI's leadership; in the market, he arms Anthropic to absorb OpenAI's revenue and user base.

Third, the structure is financial-engineering perfection: a clean win-win for both sides. xAI can recognize $6B of annual revenue from a single contract, an amount that almost precisely offsets its Q1 2026 annualized net loss of $6B. It also accelerates the cleanup of SpaceXAI's pre-IPO balance sheet, with the entity now being floated at around $1.75T. Anthropic, on the other side, converts roughly $5B of spend into what it expects to be $15B of ARR via the coming inference-revenue surge.

(Mirae Asset Securities, May 8, 2026)
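
The capacity figures quoted in the note above can be cross-checked with a few lines of arithmetic. This is only a sketch: every input is a number as quoted in the note (not independently verified), the variable names are illustrative, and the "implied pre-April" figure is derived here by subtraction rather than stated in the note.

```python
# Capacity figures as quoted in the note, in megawatts.
# Integers are used so the sums are exact (no floating-point rounding).
APRIL_DEALS_MW = {
    "AWS expansion": 5_000,
    "Google + Broadcom (TPU)": 3_500,
    "Google Cloud": 5_000,
    "SpaceXAI Colossus 1": 300,
}
CUMULATIVE_COMMITTED_MW = 14_800  # quoted total, inclusive of pre-April allocations
OPENAI_2030_TARGET_MW = 30_000    # quoted OpenAI 2030 target

# Sum of the four deals listed in the note.
april_total_mw = sum(APRIL_DEALS_MW.values())

# Derived here, not stated in the note: capacity implied to predate April.
implied_pre_april_mw = CUMULATIVE_COMMITTED_MW - april_total_mw

# Anthropic's quoted cumulative total as a fraction of OpenAI's quoted target.
share_of_openai_target = CUMULATIVE_COMMITTED_MW / OPENAI_2030_TARGET_MW

print(f"April additions:        {april_total_mw / 1000:.1f} GW")
print(f"Implied pre-April:      {implied_pre_april_mw / 1000:.1f} GW")
print(f"Share of OpenAI target: {share_of_openai_target:.1%}")
```

The four listed deals sum to the 13.8GW the note cites for April, and the quoted 14.8GW total comes to just under half of the 30GW target, consistent with the note's "only half" framing.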

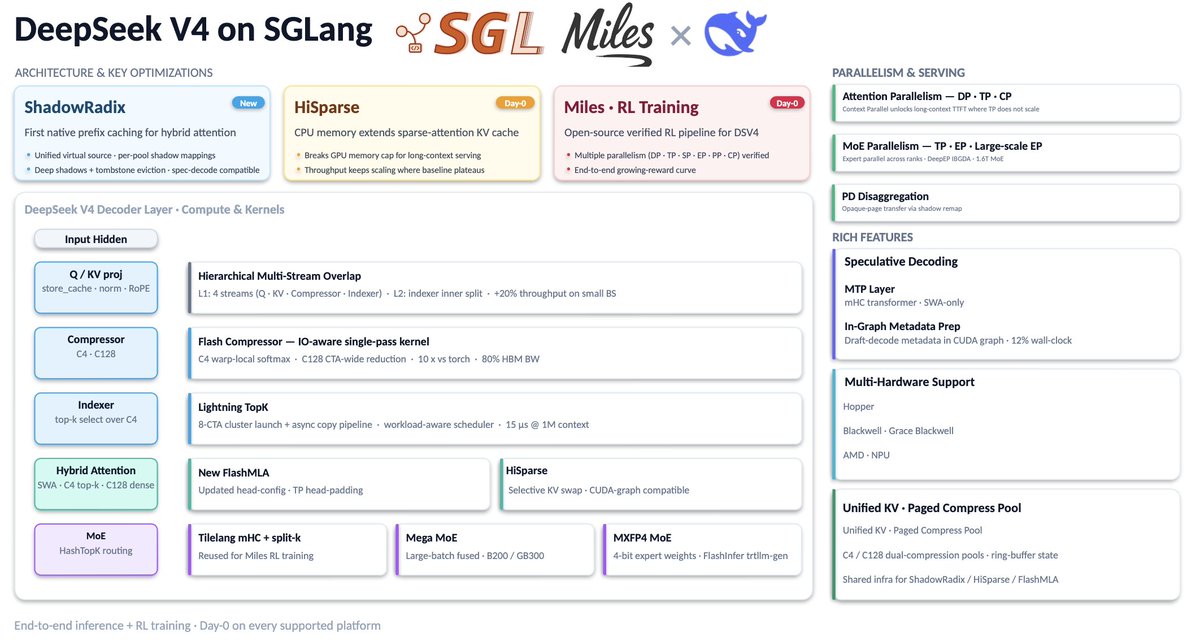

Today, we are thrilled to officially launch RadixArk with $100M in Seed funding at a $400M valuation. The round was led by @Accel and co-led by @sparkcapital.

RadixArk exists to make frontier AI infrastructure open and accessible to everyone. Today, the systems behind the most capable AI models are concentrated in a small number of companies. As a result, most AI teams are forced to rebuild training and inference stacks from scratch, duplicating the same infrastructure work instead of focusing on new models, products, and ideas. RadixArk was founded to change that. We are building an AI platform that makes it easier for teams to train and serve the best models at scale.

RadixArk comes from the open-source community. We started with SGLang, where many of us are core developers and maintainers, and expanded our work to Miles for large-scale RL and post-training. We will continue contributing to both projects and working with the community to make them the strongest open-source infrastructure foundations for frontier AI.

We would like to thank our long-term partners, contributors, and the broader SGLang community for believing in this mission. We're also grateful to @Accel and @sparkcapital, NVentures (the venture capital arm of @nvidia), Salience Capital, A&E Investment, @HOFCapital, @walden_catalyst, @AMD, LDVP, WTT Fubon Family, @MediaTek, Vocal Ventures, @Sky9Capital, and our angel investors @ibab, @LipBuTan1, Hock Tan, @johnschulman2, @soumithchintala, @lilianweng, @oliveur, @Thom_Wolf, @LiamFedus, @robertnishihara, @ericzelikman, @OfficialLoganK, and @multiply_matrix, among others.

Thanks to @MeghanBobrowsky at @WSJ for the exclusive interview about our vision.

Scientists discuss whether AI could surpass human contributions to physics by 2035 physicsworld.com/a/is-vibe-phys…

At 1:30 a.m. PT on November 3, 2023, Elon sent a message to the xAI group chat saying that we need to go “extremely hardcore” for the next 36 hours; Grok will be released publicly tomorrow. You didn’t have to be in the exclusive company chat to get the message; it was also posted publicly at the same time: x.com/i/status/17203…

What unfolded over the next day and a half was one of the best examples of engineering at pace that I’ve ever seen. All we had when we started was a somewhat fine-tuned base model and a half-baked UI. Our team of ten split up the tasks: curate data, improve the model, implement the raw prompting and RAG service, build the production infra. I took care of the last of these.

At 8:51 p.m. PT the next day, we announced Grok to the world with a long-form post on X (x.com/xai/status/172…). In those 36 hours, we came up with Fun mode (including Grok’s sunglasses), finished the whole production system, and most importantly tuned the RAG system that gave it real-time knowledge of the world through the X platform (a first in the industry). A day and a half of straight coding and shipping; no drugs, not even caffeine, just pure adrenaline. Elon gave us a mission and we delivered.

The launch went very well. We invited a couple hundred X creators and Grok’s ability to roast accounts went viral. It was the first time a publicly accessible AI was allowed to poke fun at people.

This episode is a prime example of what you can achieve by going extremely hardcore: you move and deliver results faster than any outsider could have anticipated. Within 36 hours, we took the company from silence to relevance. It was well worth it.

xAI’s hardcore culture is infamous on X. I love the tent meme that suggests we all sleep (well, slept in my case) in the office in tents. Our reputation precedes us and even new joiners hit the ground grinding hard. However, unless you understand the “why,” you are at risk of simply replicating the “how” without achieving the same results. You need to grind with purpose and the purpose is to move fast towards a known goal. When the goal and the means of reaching it are crystal clear, a small, skilled, and highly motivated team can outcompete companies old and new, big and small.

Never grind to show off; never work late to be seen; never sacrifice without cause. There is no medal for the one who tried extremely hard but failed. There is only a medal for the winner. If all your efforts lead nowhere, you’re arguably not very productive. Always keep your eyes firmly on the goal, do everything to reach it as quickly as possible, and make sure you’re on track to win.

A hardcore engineering culture is one of the most effective ways of accelerating real progress. Watch out for performative sacrifice and don’t confuse pain with progress.

Anthropic has no strategy. Claude Code started as someone's side project, and so did Cowork and MCP.

Peter Steinberger is joining OpenAI to drive the next generation of personal agents. He is a genius with a lot of amazing ideas about the future of very smart agents interacting with each other to do very useful things for people. We expect this will quickly become core to our product offerings. OpenClaw will live in a foundation as an open source project that OpenAI will continue to support. The future is going to be extremely multi-agent and it's important to us to support open source as part of that.

Introducing TinyClaw 🦞 OpenClaw in 400 LoC

@openclaw is great, but it breaks all the time. So I recreated @openclaw with just a shell script in ~400 lines of code using Claude Code and tmux. Everything works! WhatsApp channels, heartbeat system, cron jobs, and it uses your existing Claude Code plugins and setup. It’s super stable and extremely easy to deploy compared to openclaw, just install Claude Code!

github.com/jlia0/tinyclaw