Orionlee


@sudoingX hello! Is it even worth buying a 5090, or would a 3090 be enough?

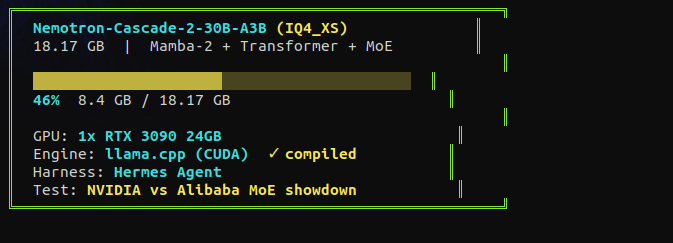

the hype around this model settled fast. good. now i can test it without the noise. NVIDIA released nemotron cascade. 30B total, 3B active. fits on a single RTX 3090. hybrid mamba MoE. gold medal on the international math olympiad with only 3 billion active parameters. they say it beats qwen on math, code, and reasoning. i tested qwen 3.5 35B-A3B on a single 3090 at 112 tok/s. now same card, same tests, different architecture. mamba vs deltanet. nvidia vs alibaba. receipts incoming tonight.
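
Whether a 30B-total, 3B-active MoE "fits on a single RTX 3090" comes down mostly to weight memory. In a mixture-of-experts model every expert has to be resident, so the 30B total drives VRAM, while the 3B active mainly drives per-token compute. Below is a rough Python sketch. It assumes weight-only storage at a few uniform bit widths, a flat 2 GiB allowance for KV cache, activations, and runtime (a guess, not a measurement), and nominal VRAM of 24 GiB for the 3090 and 32 GiB for the 5090. The post does not say which quantization was used.

```python
GIB = 1024 ** 3

def weight_gib(total_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return total_params * bits_per_weight / 8 / GIB

def fits(total_params: float, bits_per_weight: float, vram_gib: float,
         overhead_gib: float = 2.0) -> bool:
    """True if the weights plus a flat, assumed overhead fit in VRAM."""
    return weight_gib(total_params, bits_per_weight) + overhead_gib <= vram_gib

TOTAL_PARAMS = 30e9  # "30B total": in an MoE, all experts stay loaded
CARDS = {"RTX 3090": 24, "RTX 5090": 32}  # nominal VRAM in GiB (assumption)

if __name__ == "__main__":
    for bits in (16, 8, 4):
        need = weight_gib(TOTAL_PARAMS, bits)
        verdict = ", ".join(
            f"{name}: {'yes' if fits(TOTAL_PARAMS, bits, vram) else 'no'}"
            for name, vram in CARDS.items()
        )
        print(f"{bits:>2}-bit weights ~ {need:5.1f} GiB -> {verdict}")
```

Under these assumptions, 4-bit weights (about 14 GiB) fit on either card, 8-bit (about 28 GiB) fits only the 5090, and 16-bit (about 56 GiB) fits neither. Real headroom depends on context length and the inference runtime.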

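This is a business-model analysis tool I’ve been tuning with a friend, something like a Deep Research focused on business-analysis scenarios. This post is a Short Summary. Behind it are independent analysis reports from 7 different perspectives (macro to micro, economics to operations), which are then logically integrated and condensed into this ultra-simplified version (still around 6,000 characters). Unlike simply running a forward Deep Research tool, or even just a Web Search, and letting the AI analyze and output the results, this Agent has fairly strict hallucination control: the reasoning logic for each analysis framework is fixed in detail, and there is a reverse, adversarial logic-review mechanism. In my testing, several Ralph Loops of this raise the quality of the whole report fairly effectively and produce some genuinely pragmatic insights. (To save money, I don’t loop my own sample reports too many times; it’s a cost trade-off.) For me personally, it lets me quickly get to know an unfamiliar company or industry I’m curious about, and some of my founder friends have found it inspiring. That seems to be enough. As I keep iterating and revising, I’ll probably post business analyses of companies that interest me fairly often, which may also give everyone a surface-level sense of how businesses in different industries work. But if anything is badly wrong or offensive, please let me know and I’ll delete it right away. Hope it brings you a smile.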
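
The workflow described in that post (independent reports from several perspectives, an adversarial review pass, and a capped number of loops to control cost) can be sketched as a generic draft, critique, revise loop. This is a hypothetical Python illustration, not the author’s Agent. `analyze`, `critique`, and `revise` are placeholder callables you would back with model calls, `max_loops` stands in for the cost cap, and the bounded loop is only a loose stand-in for what the post calls a Ralph Loop.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical signatures; in a real agent each would wrap a model call.
Analyze = Callable[[str, str], str]        # (company, perspective) -> draft report
Critique = Callable[[str], List[str]]      # draft -> adversarial objections
Revise = Callable[[str, List[str]], str]   # (draft, objections) -> revised draft

@dataclass
class Report:
    perspective: str
    text: str
    unresolved: List[str] = field(default_factory=list)  # objections left when the cap hit

def run_pipeline(company: str, perspectives: List[str], analyze: Analyze,
                 critique: Critique, revise: Revise, max_loops: int = 2) -> List[Report]:
    """Draft one report per perspective, then critique/revise until clean or capped."""
    reports = []
    for perspective in perspectives:
        draft = analyze(company, perspective)
        objections = critique(draft)
        loops = 0
        while objections and loops < max_loops:  # max_loops is the cost cap
            draft = revise(draft, objections)
            objections = critique(draft)
            loops += 1
        reports.append(Report(perspective, draft, objections))
    return reports

def condense(reports: List[Report]) -> str:
    """Placeholder merge step: tag each report with its perspective and join them."""
    return "\n".join(f"[{r.perspective}] {r.text}" for r in reports)
```

Keeping the loop count as a parameter makes the cost/quality trade-off the author mentions explicit, and objections that survive the cap stay on each `Report` instead of being silently dropped.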

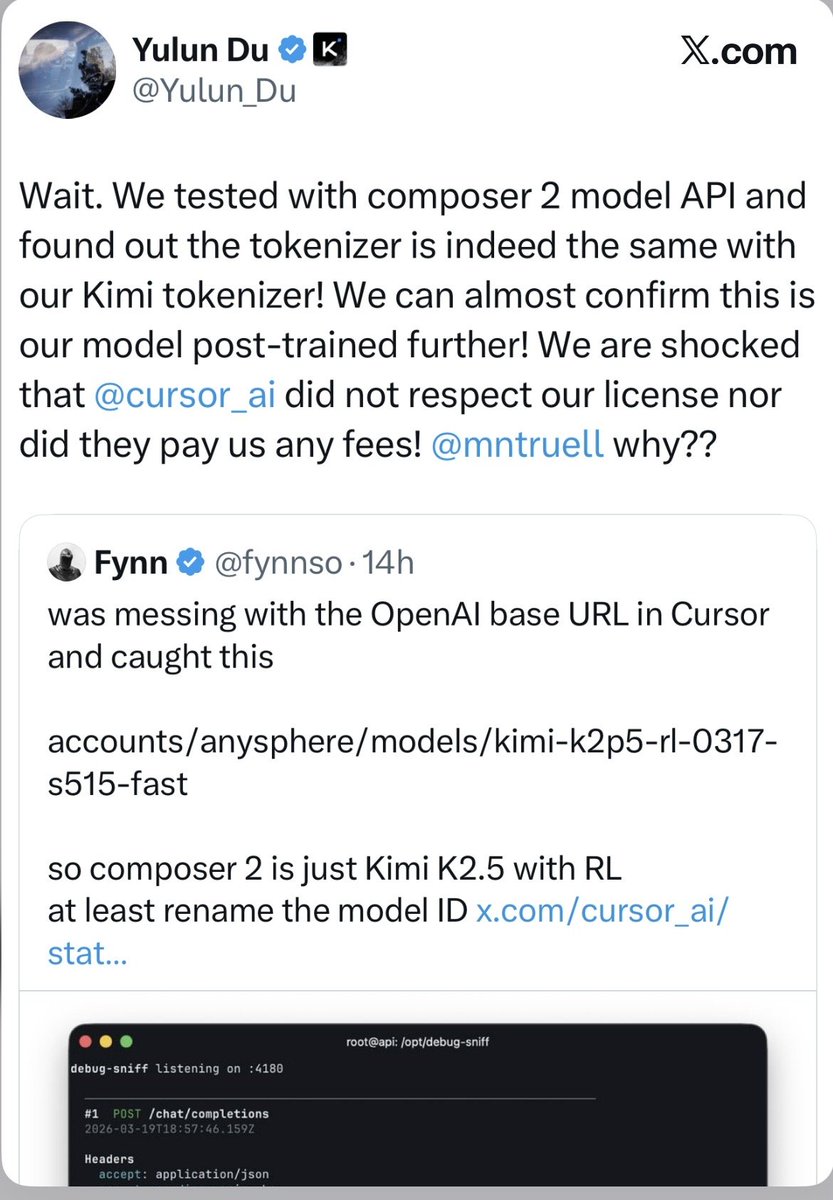

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.”

Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast. That’s Moonshot AI’s Kimi K2.5 with reinforcement learning on top. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed that both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions of dollars developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

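
For context on how such a leak surfaces: an OpenAI-compatible chat-completions endpoint typically returns a JSON body whose `model` field names the model that served the request, so a mismatch with the advertised product name is visible to any client. A minimal Python sketch using only the standard library follows. The sample body and the advertised label `composer-2` are hypothetical, and this does not reproduce Fynn’s actual request: the post says the ID surfaced in the response headers without naming the header, so the sketch only checks the body.

```python
import json
from typing import Optional

def reported_model(response_body: str) -> Optional[str]:
    """Return the `model` field of an OpenAI-style chat-completion body, or None."""
    try:
        payload = json.loads(response_body)
    except json.JSONDecodeError:
        return None
    if not isinstance(payload, dict):
        return None
    model = payload.get("model")
    return model if isinstance(model, str) else None

def differs_from_advertised(response_body: str, advertised: str) -> bool:
    """True when the response names a model other than the advertised one."""
    model = reported_model(response_body)
    return model is not None and model != advertised

if __name__ == "__main__":
    # Hypothetical response body, trimmed to the fields used here.
    sample = '{"object": "chat.completion", "model": "kimi-k2p5-rl-0317-s515-fast", "choices": []}'
    print(reported_model(sample))
    print(differs_from_advertised(sample, "composer-2"))  # "composer-2" is a made-up label
```
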
The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, about 8x the threshold. The clause explicitly covers derivative works.

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. A company seeking nearly 12x Moonshot’s valuation took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier-lab narrative.

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions of dollars in training compute, without disclosing that the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of its model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation? kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.
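
The post’s figures can be checked with a few lines of arithmetic. A minimal Python sketch, taking every number as reported in the post and assuming monthly revenue is approximately ARR / 12 (the license’s exact revenue definition is not quoted in the post):

```python
# Figures as reported in the post; none are independently verified here.
ARR_USD = 2e9                     # Cursor ARR, "crossed $2 billion in February"
LICENSE_MONTHLY_THRESHOLD = 20e6  # attribution trigger, per the post's reading of the license
CURSOR_VALUATION = 29.3e9         # current valuation
CURSOR_TARGET = 50e9              # valuation sought in the new round
MOONSHOT_VALUATION = 4.3e9        # Moonshot's last reported valuation

def monthly_run_rate(arr: float) -> float:
    """Approximate monthly revenue as ARR / 12 (assumes a flat run rate)."""
    return arr / 12

def threshold_multiple(arr: float, threshold: float) -> float:
    """How many times the monthly run rate exceeds the license threshold."""
    return monthly_run_rate(arr) / threshold

if __name__ == "__main__":
    print(f"monthly run rate ~ ${monthly_run_rate(ARR_USD) / 1e6:.0f}M")
    print(f"multiple of threshold ~ {threshold_multiple(ARR_USD, LICENSE_MONTHLY_THRESHOLD):.1f}x")
    print(f"current valuation ratio ~ {CURSOR_VALUATION / MOONSHOT_VALUATION:.1f}x")
    print(f"target valuation ratio ~ {CURSOR_TARGET / MOONSHOT_VALUATION:.1f}x")
```

Under those assumptions the run rate is about $167M a month, roughly 8.3x the $20M trigger. The ~12x valuation gap holds against the $50B target; against the current $29.3B valuation it is closer to 6.8x.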

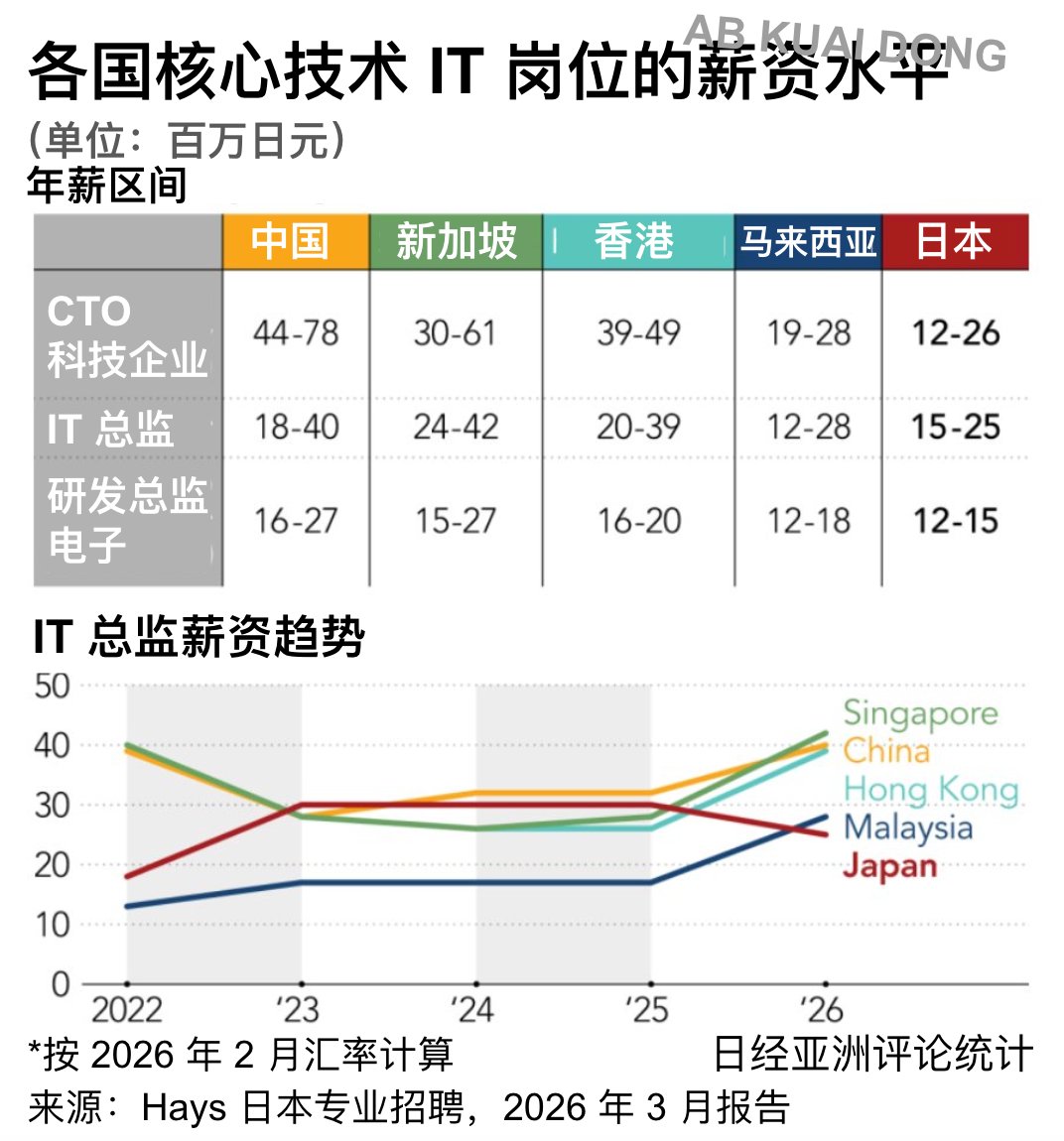

Malaysia has overtaken Japan in salaries for key technology roles for the first time, driven by rising investment in the booming semiconductor industry and intensifying competition in digital sectors. s.nikkei.com/40D2o4E