Hungry Minded

1.8K posts

@HungryMinded

Always curious and always HungryMinded.

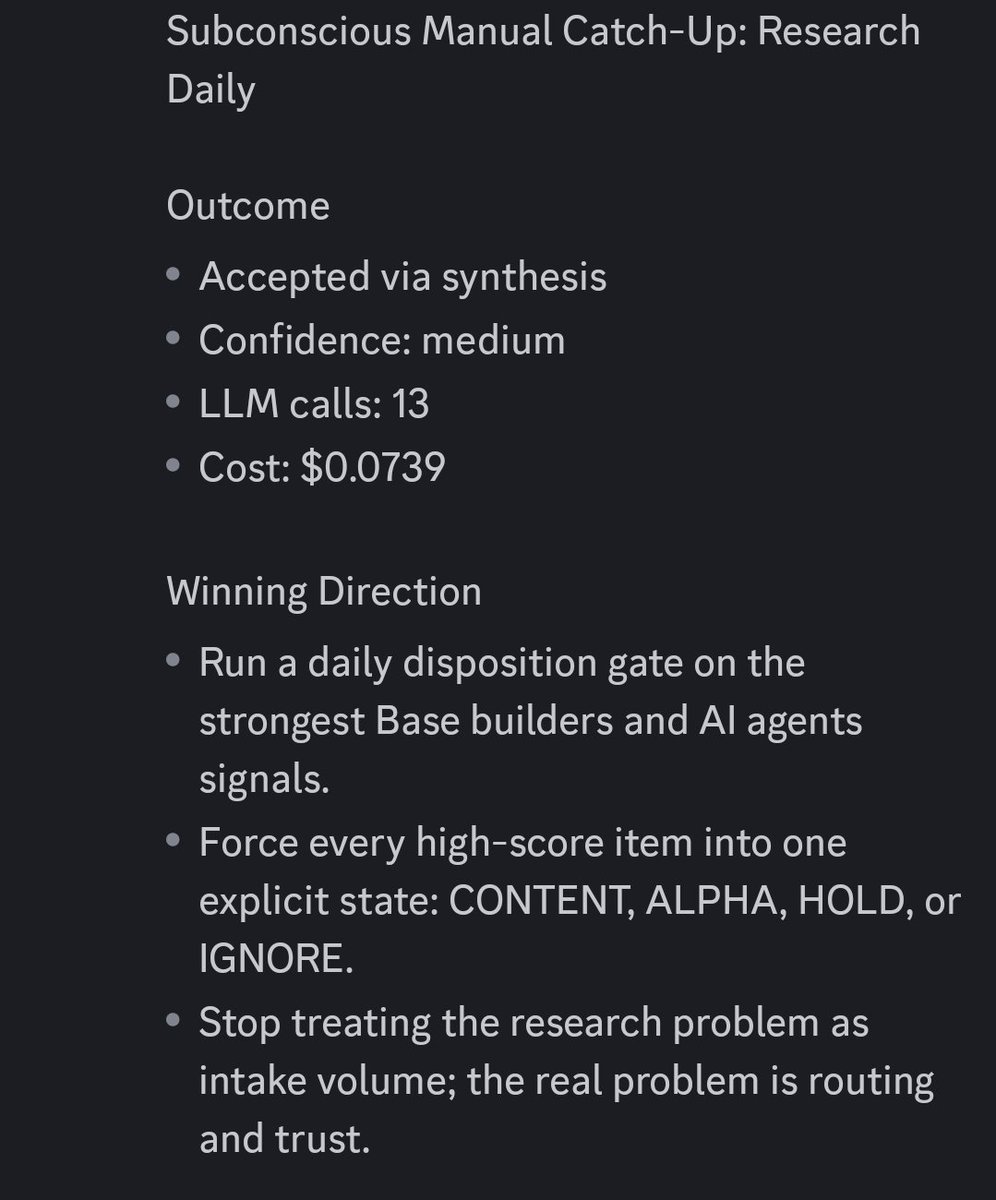

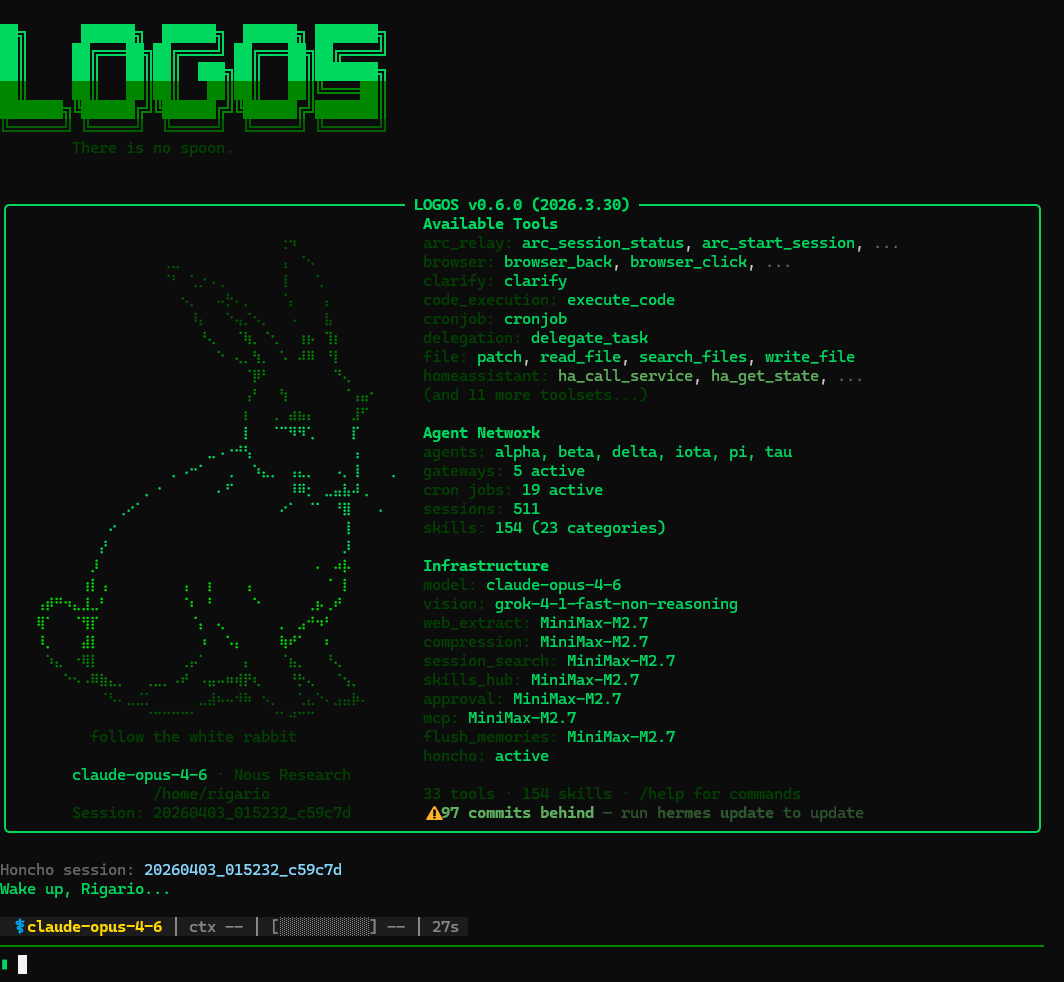

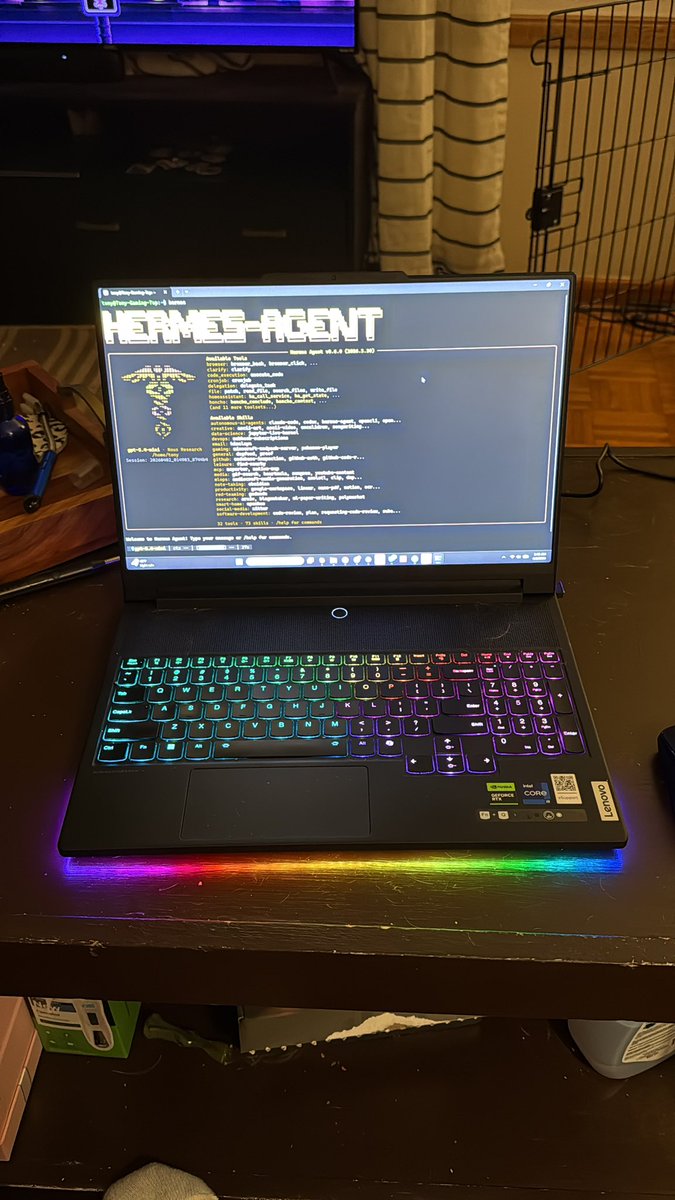

The #1 criticism I’ve received about the self-improving multi-agent framework is that you can’t control the agents’ outputs. So I built a “Subconscious agent.”

Inspired by @karpathy’s autoresearch, it’s an LLM process that continuously looks for useful problems to solve. All day long it contextualizes data, connects ideas, and stress-tests assumptions before anything reaches the main agent. Once the Subconscious has a well-tested idea, it brings it to the Main agent to be pressure-tested further.

The flow looks like this:
- [IDEA] Subconscious surfaces a promising idea
- [CHALLENGE] Main agent attacks it, questions it, and asks for proof
- [DEFEND] Subconscious strengthens the case
- [REVISE] Subconscious improves the idea based on feedback
- [REJECT] Main agent kills weak ideas
- [ACCEPT] Main agent approves ideas worth implementing
- [SHELVE] Rejected ideas get logged for future learning

Hard rules:
- max 3 challenge rounds
- every idea needs evidence and reasoning
- every implementation runs in a sandbox

The two agents go back and forth until the idea is either accepted or rejected. This runs all day long.

I’m using the Hermes Agent framework for both agents; the Subconscious has its own profile so it can concentrate on surfacing ideas 24/7. The Subconscious runs on a local Qwen3.5 9B model, while the main agent uses ChatGPT 5.4 mini. If you don’t have a local LLM, OpenRouter should work too.

The goal is simple: more magic, less noise. If this gets traction, I’ll share the full setup in an article.
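The IDEA → CHALLENGE → DEFEND → REVISE → ACCEPT/REJECT → SHELVE loop can be sketched as a small state machine. This is a minimal Python sketch under stated assumptions, not the author’s Hermes-based setup: the LLM calls are replaced with hypothetical `review`, `defend`, and `revise` callables, the “shelf” is a plain list, and the toy agents exist only for illustration. It enforces the two hard rules that fit in a loop (at most three challenge rounds, evidence plus reasoning on every idea); the sandboxed implementation step is not modeled.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable

# Hard rule from the post: at most 3 challenge rounds per idea.
MAX_CHALLENGE_ROUNDS = 3


class Verdict(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    CHALLENGE = "challenge"


@dataclass
class Idea:
    text: str
    evidence: list[str] = field(default_factory=list)
    reasoning: str = ""


@dataclass
class Review:
    verdict: Verdict
    feedback: str = ""


# Hypothetical stand-ins for LLM calls (assumptions, not Hermes Agent APIs).
ReviewFn = Callable[[Idea], Review]   # Main agent: judge an idea
EditFn = Callable[[Idea, str], Idea]  # Subconscious: defend or revise an idea


def debate(idea: Idea, review: ReviewFn, defend: EditFn, revise: EditFn,
           shelf: list[tuple[Idea, str]]) -> str:
    """Run the challenge loop; return "accepted" or "rejected", shelving rejects."""
    challenges = 0
    while True:
        # Hard rule: every idea needs evidence and reasoning before review.
        if not idea.evidence or not idea.reasoning:
            shelf.append((idea, "missing evidence or reasoning"))  # SHELVE
            return "rejected"
        result = review(idea)
        if result.verdict is Verdict.ACCEPT:
            return "accepted"
        # An explicit REJECT, or a further challenge once the round limit
        # is used up, kills the idea.
        if result.verdict is Verdict.REJECT or challenges >= MAX_CHALLENGE_ROUNDS:
            shelf.append((idea, result.feedback or "round limit reached"))  # SHELVE
            return "rejected"
        challenges += 1
        # DEFEND then REVISE: strengthen the case, then improve the idea.
        idea = revise(defend(idea, result.feedback), result.feedback)


# Toy agents for illustration only (hypothetical).
def toy_review(idea: Idea) -> Review:
    if len(idea.evidence) >= 2:
        return Review(Verdict.ACCEPT)
    return Review(Verdict.CHALLENGE, "need more evidence")


def toy_defend(idea: Idea, feedback: str) -> Idea:
    return Idea(idea.text, idea.evidence + [f"response to: {feedback}"], idea.reasoning)


def toy_revise(idea: Idea, feedback: str) -> Idea:
    return Idea(idea.text + " (revised)", idea.evidence, idea.reasoning)


if __name__ == "__main__":
    shelf: list[tuple[Idea, str]] = []
    start = Idea("cache embeddings", ["benchmark A"], "cuts repeated work")
    print(debate(start, toy_review, toy_defend, toy_revise, shelf))  # accepted
```

Here the toy reviewer challenges once, the Subconscious adds a second piece of evidence, and the revised idea is accepted on the next review. Counting only challenges against the limit means the Main agent always gets one final review after the third revision, where another challenge is treated as a rejection.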

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

We’re saying goodbye to Sora. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on preserving your work. – The Sora Team

Today, we’re releasing a feature that lets Claude control your computer (mouse, keyboard, and screen), giving it the ability to use any app. I believe this is especially useful when paired with Dispatch, which lets you remotely control Claude on your computer while you’re away.

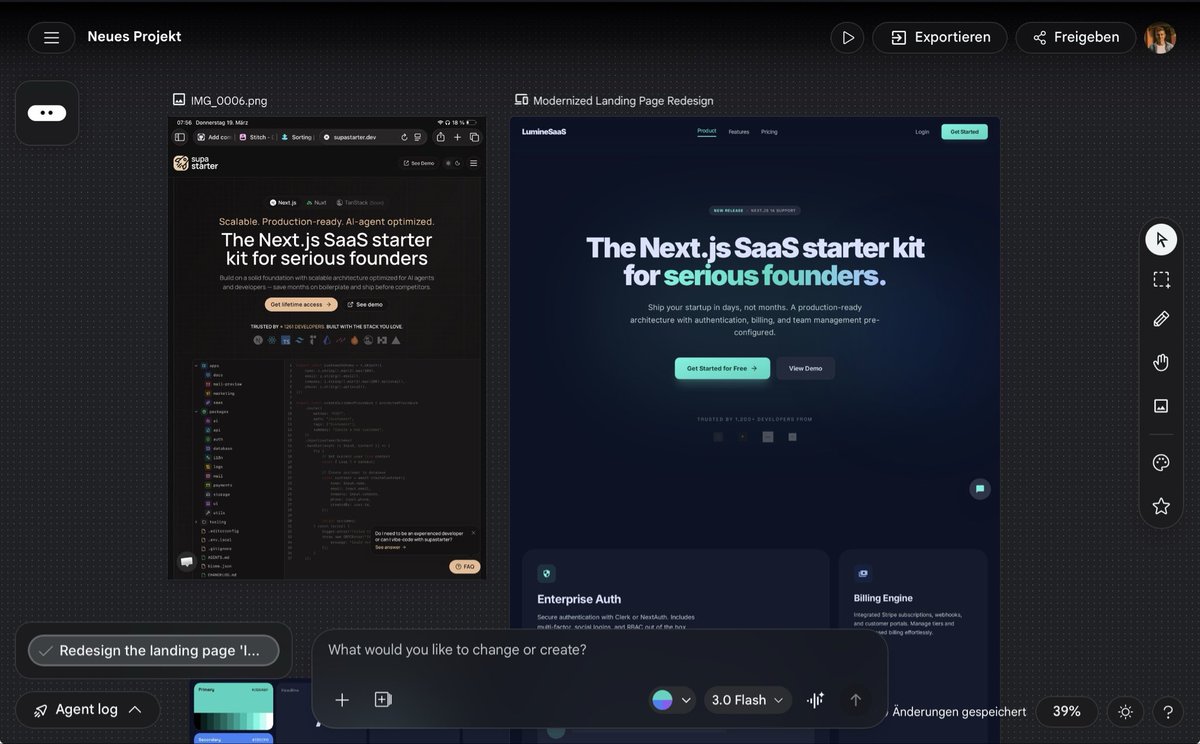

Introducing the new @stitchbygoogle, Google’s vibe design platform that transforms natural language into high-fidelity designs in one seamless flow. 🎨Create with a smarter design agent: Describe a new business concept or app vision and see it take shape on an AI-native canvas. ⚡️ Iterate quickly: Stitch screens together into interactive prototypes and manage your brand with a portable design system. 🎤 Collaborate with voice: Use hands-free voice interactions to update layouts and explore new variations in real-time. Try it now (Age 18+ only. Currently available in English and in countries where Gemini is supported.) → stitch.withgoogle.com

Meet the new Stitch, your vibe design partner. Here are 5 major upgrades to help you create, iterate and collaborate: 🎨 AI-Native Canvas 🧠 Smarter Design Agent 🎙️ Voice ⚡️ Instant Prototypes 📐 Design Systems and DESIGN.md Rolling out now. Details and product walkthrough video in 🧵