Pinned Tweet

Rigario

337 posts

@Rigario

Founder @holocronlabsai. Full-time tinkering with agents. Part-time investing. Built product orgs at CoinMarketCap, Binance, Chainlink and Zendesk.

Joined April 2012

200 Following · 330 Followers

@WillConqueror23 @NousResearch I mean, I'm not affiliated with either, but I haven't had any issues on SuperGrok Heavy.

What are you running into?

If remote, remember to port forward so that the auth can complete.

Something like

ssh -L <Local_Port>:127.0.0.1:<Remote_Auth_Port> user@remote-server
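A minimal sketch of filling in that template, assuming both ports are 8085. That number is a made-up placeholder, not a port Hermes or Grok is known to use, so swap in whatever port your auth callback actually listens on. The `build_forward_cmd` helper is hypothetical and only prints the command so you can check it before running it.

```shell
# Hypothetical values for illustration; use the port your auth callback actually listens on.
LOCAL_PORT=8085
REMOTE_AUTH_PORT=8085
REMOTE_HOST="user@remote-server"

# Build the forwarding command as a string so it can be inspected before running.
# -N: forward only, do not run a remote command.
# -L: bind LOCAL_PORT on this machine to 127.0.0.1:REMOTE_AUTH_PORT on the remote.
build_forward_cmd() {
  printf 'ssh -N -L %s:127.0.0.1:%s %s\n' "$1" "$2" "$3"
}

build_forward_cmd "$LOCAL_PORT" "$REMOTE_AUTH_PORT" "$REMOTE_HOST"
```

Run the printed command in a separate local terminal and leave it open while the login completes in your local browser.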

@Rigario @NousResearch Trying to set this up, getting errors 😫

@mr_r0b0t @NousResearch Yeah, I'm actually really liking Grok 4.3 in Hermes. The speed makes up for the occasional blunders. Having the full suite of Grok models is quite useful. Follows instructions well. So far it's been great!

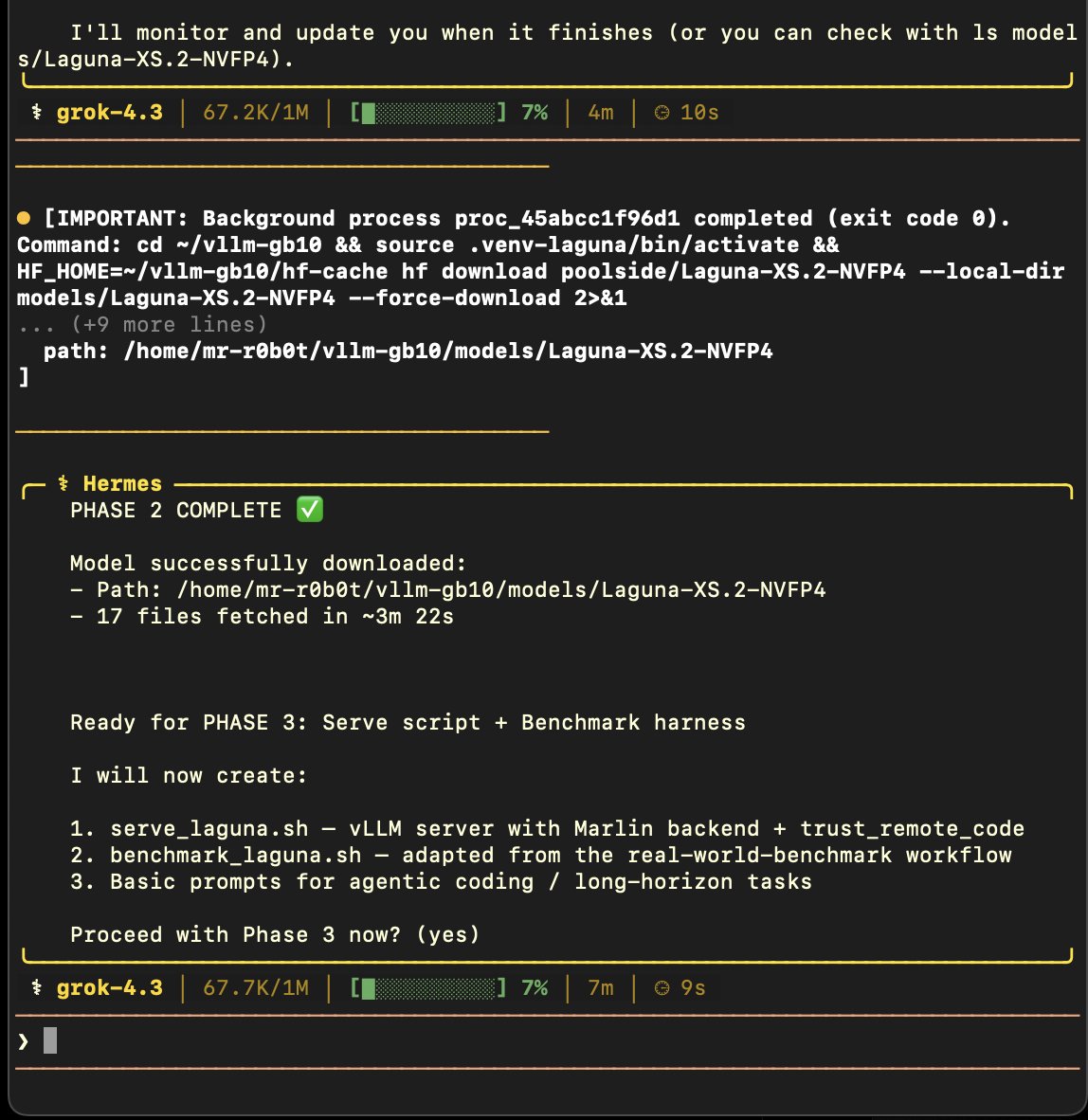

Grok 4.3 appears to be the first model I've seen in @NousResearch Hermes Agent to properly utilize background processes!

Every other model continuously polls for results, regardless of whether it launched a background process.

This wastes countless tokens.

Grok + Hermes = 🔥
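To make the polling vs. background distinction concrete, here is a plain shell analogy. It is not how Hermes Agent or any model implements this; `long_task` and the file names are hypothetical stand-ins. The first half checks repeatedly for a result, the second half launches the job and blocks once until it finishes.

```shell
workdir=$(mktemp -d)

# Hypothetical stand-in for a slow job: sleeps, then writes its result to the given file.
long_task() { sleep 1; echo "result" > "$1"; }

# Polling style: launch in the background, then keep checking for the output file.
long_task "$workdir/polled.txt" &
checks=0
until [ -f "$workdir/polled.txt" ]; do
  checks=$((checks + 1))
  sleep 0.2
done
wait  # reap the finished background job
echo "polling: $checks checks before the result appeared"

# Blocking style: launch in the background, then wait exactly once for it to finish.
long_task "$workdir/waited.txt" &
wait "$!"
waited_result=$(cat "$workdir/waited.txt")
echo "waiting: one blocking call, result: $waited_result"

rm -rf "$workdir"
```

In an agent loop each of those checks would be a model turn, which is where the wasted tokens come from.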

@xai @grok @NousResearch This is the way. Hermes makes all models better. Official support is a huge win for all Grok subscribers.

You can now use your SuperGrok subscription in Hermes Agent!

Enjoy!

Nous Research @NousResearch

SuperGrok now in Hermes Agent

Bad:

No /goal is quite painful. Too many stoppages where I just tell it to resume. Somewhat mitigated by delegation but still a tad annoying.

Tool use is quite bad. No browser is just rough. Agent is only really good when it can perform all tasks at least reasonably well. This is a huge gap.

Try this early Grok Build (anything) beta and let us know what to improve.

Much appreciated!

xAI @xai

An early beta of Grok Build, an agentic CLI for coding, building apps, and automating workflows is now available for SuperGrok Heavy subscribers. Through this early beta, we will improve the model and product based on your feedback. Try it at x.ai/cli

We just added significantly more NVIDIA Blackwell GPUs to better serve the GLM-5.1 model on Ollama's cloud.

We have been adding more GPUs daily for all the other models.

Claude Code:

ollama launch claude --model glm-5.1:cloud

Codex App:

ollama launch codex-app

Hermes Agent:

ollama launch hermes --model glm-5.1:cloud

Run the model:

ollama run glm-5.1:cloud

Couple of hours into testing Grok Build.

First impression: it is fast. Really fast.

The CLI moves. Builds finish in about a minute. Refactors that would usually take a bit of back and forth are completing in a few minutes.

I gave it some backend work for a feature, plus migration and refactor work. The result was decent. The core worked, but some of the fringe validation and stale logic handling could have been tighter.

Still, getting a mostly working implementation in roughly a minute is wild.

The CLI itself feels good, but the harness still feels limited. It pushed back on deployment and a few other workflow steps I would normally expect an agent to handle.

Dropping it into Hermes Agent solved that immediately, which is basically what I expected. Grok Build gave me speed. Hermes gave it the operating layer.

Need to test more before I have a final view, but I am impressed so far. For a beta, this is already useful. If the harness catches up to the model speed, this gets very interesting.

@mr_r0b0t @NousResearch Will be testing this hard when it lands! I will finally be able to use my SuperGrok Heavy sub outside of the UI haha.

@NousResearch Hermes Agent users rejoice!

Grok 4.3 will very soon be available using your account OAuth 🥳

1) Install Grok CLI, authenticate

2) Hermes model - pick the Grok OAuth option

3) Hermes pulls your authenticated credentials from Grok CLI

github.com/NousResearch/h…

“Fast and flicker-free” shots fired directly at Anthropic

xAI @xai

An early beta of Grok Build, an agentic CLI for coding, building apps, and automating workflows is now available for SuperGrok Heavy subscribers. Through this early beta, we will improve the model and product based on your feedback. Try it at x.ai/cli
