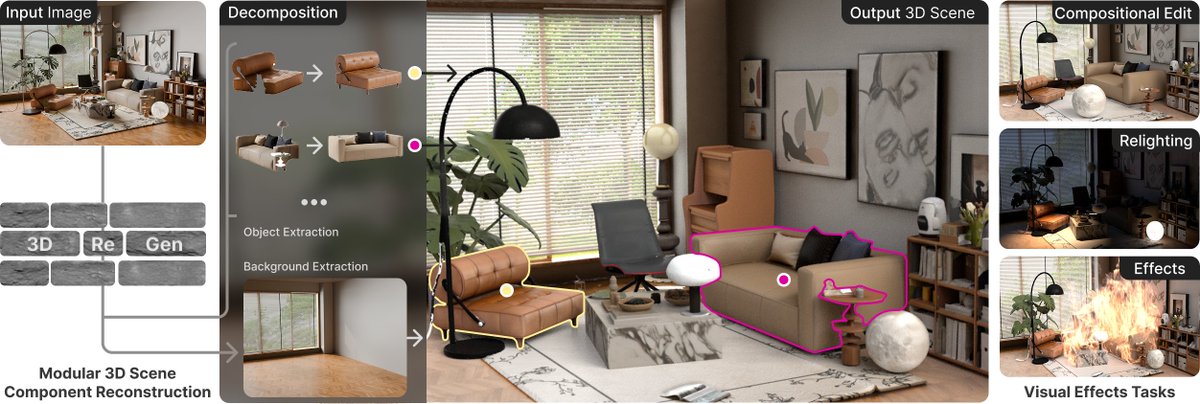

MatSpray: Fusing 2D Material World Knowledge on 3D Geometry

Super fast materials for every 3D Gaussian Splatting scene from swappable foundation models.

Joint work with Philipp Langsteiner @CleanupVessel94 from our @CG_Tuebingen

Links 👇

Jan

@JDihlmann

Researching 3D Reconstruction & Generation | PhD Student University of Tübingen @CG_Tuebingen & @MPI_IS | Previously @saysomapp

MatSpray: Fusing 2D Material World Knowledge on 3D Geometry

Contributions:

• World Material Fusion: A plug-and-play pipeline that, to our knowledge, is the first to fuse swappable diffusion-based 2D PBR priors ("world material knowledge") with 3D Gaussian material optimization. It uses Gaussian ray tracing and PBR consistent supervision to obtain relightable assets.

• Neural Merger: A softmax neural merger that aggregates per-Gaussian, multi-view material estimates, suppresses baked-in lighting, and enforces cross-view consistency while stabilizing joint environment map optimization.

• Faster Reconstruction: A simple projection and optimization scheme that reconstructs high-quality relightable 3D materials with 3.5× less per-scene optimization time than IRGS [9].
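To make the softmax aggregation idea concrete, here is a rough sketch of how multi-view material estimates for a single Gaussian could be merged. This is not the MatSpray implementation: the function names `softmax` and `merge_views` are hypothetical, and the per-view scores are passed in as plain numbers, whereas in the paper they would presumably come from a learned network whose architecture is not described in this post.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def merge_views(estimates, logits):
    """Blend per-view material estimates for one Gaussian.

    estimates: one list of channels per view (e.g. [roughness, metallic]).
    logits:    one score per view (assumed here; hypothetically the output
               of a small network in a learned merger).
    Returns the softmax-weighted average per channel.
    """
    if not estimates or len(estimates) != len(logits):
        raise ValueError("need one logit per view estimate")
    weights = softmax(logits)
    channels = len(estimates[0])
    return [
        sum(w * view[c] for w, view in zip(weights, estimates))
        for c in range(channels)
    ]


# Two views disagree on [roughness, metallic]; equal scores give the mean.
merged_equal = merge_views([[0.2, 0.4], [0.6, 0.8]], [0.0, 0.0])

# A much higher score for the first view makes it dominate the result.
merged_biased = merge_views([[0.2, 0.4], [0.6, 0.8]], [20.0, 0.0])
```

Because softmax weights are non-negative and sum to one, the merged value is a convex combination of the per-view values, so each channel stays within the range the views proposed. An outlier view (for example one with baked-in highlights) can be down-weighted by giving it a low score.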