Kangrui Wang

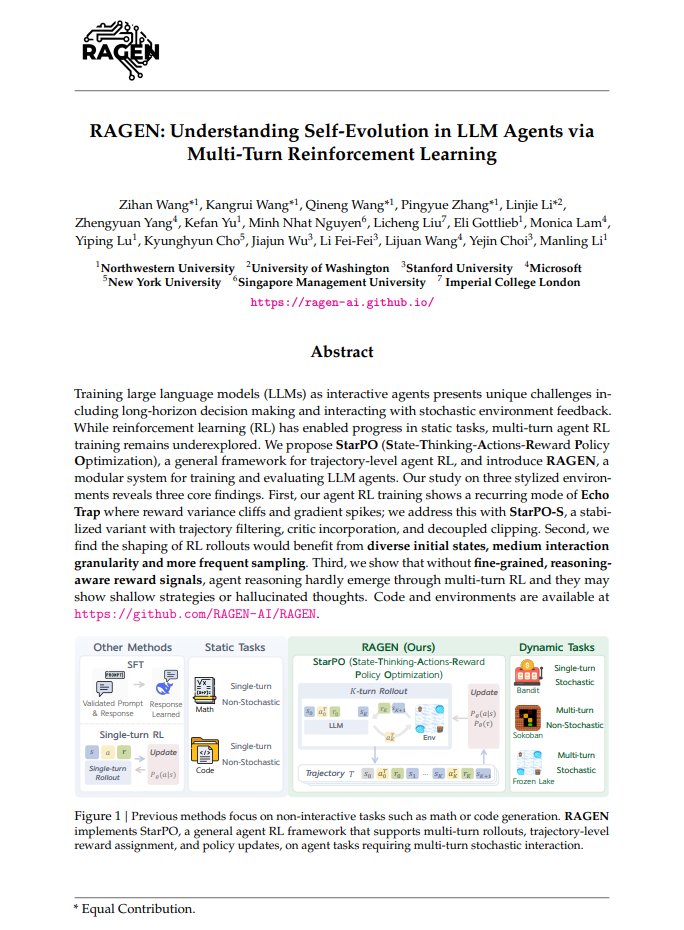

In Agent RL, models suffer from Template Collapse: they generate highly diverse outputs (high entropy) that lose any meaningful connection to the input prompt (low mutual information). In other words, agents learn different ways to say nothing. 🚀 Introducing RAGEN-v2: here's how we define and fix such silent failure modes in Agent RL. 🧵
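To make the two quantities concrete, here is a minimal sketch using simple plug-in (count-based) estimates over discrete (prompt, output) pairs. The function names and toy data are hypothetical, and this is not RAGEN-v2's own estimator: real LLM outputs rarely repeat exactly, so a practical measure would need a different estimator. Template collapse shows up as high H(output) with I(prompt; output) near zero.

```python
import math
from collections import Counter, defaultdict


def entropy_bits(counts: Counter) -> float:
    """Shannon entropy (in bits) of the empirical distribution in `counts`."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def entropy_and_mutual_info(pairs):
    """Plug-in estimates of H(Y) and I(X; Y) from (prompt, output) pairs.

    Uses I(X; Y) = H(Y) - H(Y | X), where H(Y | X) = sum_x p(x) * H(Y | X = x).
    """
    n = len(pairs)
    h_y = entropy_bits(Counter(y for _, y in pairs))
    outputs_by_prompt = defaultdict(Counter)
    for x, y in pairs:
        outputs_by_prompt[x][y] += 1
    h_y_given_x = sum(
        (sum(c.values()) / n) * entropy_bits(c) for c in outputs_by_prompt.values()
    )
    return h_y, h_y - h_y_given_x


# Hypothetical toy data: two prompts ("A", "B").
templates = ["t1", "t2", "t3", "t4"]
collapsed = [(p, t) for p in ("A", "B") for t in templates]  # same outputs for every prompt
grounded = [("A", "a1"), ("A", "a2"), ("B", "b1"), ("B", "b2")]  # outputs depend on prompt

print(entropy_and_mutual_info(collapsed))  # → (2.0, 0.0): diverse, but says nothing about the prompt
print(entropy_and_mutual_info(grounded))  # → (2.0, 1.0): equally diverse, and prompt-dependent
```

Both toy sets have the same output entropy, so entropy alone cannot tell them apart; only the mutual-information term exposes the collapsed case.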

🚀 Announcing the 2nd Workshop on Foundation Models Meet Embodied Agents (FMEA) @ CVPR 2026! How can we leverage foundation models to help agents perceive, reason, plan, and act in the physical world?

👉 FMEA brings together researchers across vision, language, robotics, and ML to push the frontier of foundation models for embodied agents.

📣 Call for Papers is now open! We invite submissions on LLMs, VLMs, Video Action (VA), and Vision–Language–Action (VLA) models for embodied agents, including:
- Long-horizon reasoning & planning
- Spatial intelligence & physical understanding
- World models, memory, and interaction
- Vision–language–action learning and evaluation
- Benchmarks, datasets, and evaluation protocols for embodied agents

🏆 Challenges @ FMEA 2026:
🔹 ENACT: evaluating embodied cognition of VLMs with world modeling of egocentric interaction (enact-embodied-cognition.github.io)
🔹 EmbodiedBench: benchmarking VLM-based embodied agents across perception, reasoning, and action (embodiedbench.github.io)
🔹 Embodied Agent Interface (EAI): evaluating LLM-based agents on goal interpretation, subgoal decomposition, action sequencing, and transition modeling (embodied-agent-interface.github.io)

📝 OpenReview submission portal (deadline: May 1st, 2026): openreview.net/group?id=thecv…
🌐 Workshop website: …models-meet-embodied-agents.github.io/cvpr2026/
📍 Join us at CVPR 2026. We're excited to see what you'll build and submit!

🔥 Our #NeurIPS challenge on Foundation Models Meet Embodied Agents has released the final evaluation for "Embodied Agent Interface". 🚀 Come test your LLMs on embodied agent tasks! ⚒️ We've newly annotated ~5000 data points for:
- Goal Interpretation
- Subgoal Decomposition
- Action Sequencing
- Transition Modeling

🏅 Multiple prizes, generously sponsored by @AIX_Foundation. 🙌 Looking forward to talking with you in San Diego! Come chat with our organizers @jiajunwu_cs @drfeifei @YejinChoinka @percyliang @maojiayuan @Weiyu_Liu_ @RuohanZhang76 ErranLi, and huge thanks to Tianwei Bao @qineng_wang @James_KKW @yu_bryan_zhou for their incredible efforts!

🚀🔥 Thrilled to announce our ICML25 paper: "Why Is Spatial Reasoning Hard for VLMs? An Attention Mechanism Perspective on Focus Areas"! We dig into the core reasons Vision-Language Models struggle with spatial reasoning, viewed through their attention mechanism. 🌍🔍
Paper: arxiv.org/pdf/2503.01773
Code: github.com/shiqichen17/Ad…
Website: shiqichen17.github.io/AdaptVis/

AI’s next frontier is Spatial Intelligence, a technology that will turn seeing into reasoning, perception into action, and imagination into creation. But what is it? Why does it matter? How do we build it? And how can we use it? Today, I want to share my thoughts on building and using world models to unlock spatial intelligence in the essay below. 1/n

Want to get an LLM agent to succeed in an out-of-distribution (OOD) environment? We tackle the hardest case with SPA (Self-Play Agent): no extra data, tools, or stronger models. Pure self-play. We first internalize a world model via self-play, then learn how to win via RL, like a child playing with the environment simply to learn "what if I do this?" Below, we share our findings on: What is wrong with OOD environments? What are the key factors that allow self-play to succeed? (1/8)

World Model Reasoning for VLM Agents (NeurIPS 2025, Score 5544)

We release VAGEN to teach VLMs to build internal world models via visual state reasoning:
- StateEstimation: what is the current state?
- TransitionModeling: what comes next?

Shifting from an MDP to a POMDP formulation handles the partial observability of visual states! mll.lab.northwestern.edu/VAGEN/

🙌 Led by @James_KKW @WilliamZhangNU @wzihanw @yaning_gao @LINJIEFUN @qineng_wang @hc81Jeremy @w4nanch1 @2prime_PKU @zhengyuan_yang lijuanwang, @RanjayKrishna @jiajunwu_cs @drfeifei @YejinChoinka
👍 Grateful for the joint effort of @northwesterncs @uwcse @StanfordAILab @microsoft @WisconsinCS @siebelschool.
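The MDP → POMDP shift can be made concrete with the textbook discrete belief update, in which the agent keeps a probability distribution over hidden states instead of observing the state directly. This is a minimal sketch of that standard technique, not VAGEN's implementation: the function names, the two-state toy world, and all probabilities are hypothetical. `predict` plays the role of transition modeling ("what comes next?") and `correct` the role of state estimation ("what is the current state?").

```python
def predict(belief, transition, action):
    """Transition step: b'(s') = sum_s T(s' | s, a) * b(s)."""
    predicted = {}
    for s, p in belief.items():
        for s_next, t in transition[(s, action)].items():
            predicted[s_next] = predicted.get(s_next, 0.0) + p * t
    return predicted


def correct(belief, observation_model, obs):
    """State-estimation step: b(s) is proportional to O(obs | s) * b(s), then renormalized."""
    unnormalized = {s: p * observation_model[s].get(obs, 0.0) for s, p in belief.items()}
    z = sum(unnormalized.values())
    if z == 0:
        raise ValueError("observation has zero probability under the current belief")
    return {s: p / z for s, p in unnormalized.items()}


# Hypothetical toy world: an object sits "left" or "right"; pushing tends to move it right.
transition = {
    ("left", "push"): {"left": 0.1, "right": 0.9},
    ("right", "push"): {"right": 1.0},
}
# Noisy visual observations: the camera reports the correct side 80% of the time.
observation_model = {
    "left": {"see_left": 0.8, "see_right": 0.2},
    "right": {"see_left": 0.2, "see_right": 0.8},
}

belief = {"left": 0.5, "right": 0.5}  # the agent cannot see the true state directly
belief = predict(belief, transition, "push")  # imagine the effect of the action
belief = correct(belief, observation_model, "see_right")  # fold in the new observation
print(belief)  # ≈ {'left': 0.013, 'right': 0.987}
```

In an MDP the agent would be handed the state; here it must maintain and revise a belief, which is the extra reasoning burden that partial observability introduces.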

Can VLMs build Spatial Mental Models like humans do? Reasoning from limited views? Reasoning from partial observations? Reasoning about unseen objects behind furniture or beyond the current view? Check out MindCube!
🌐 mll-lab-nu.github.io/mind-cube/
📰 arxiv.org/pdf/2506.21458
🤗 huggingface.co/datasets/MLL-L…
👩‍💻 github.com/mll-lab-nu/Min…

🚀 Introducing Chain-of-Experts (CoE), a free-lunch optimization method for DeepSeek-like MoE models! With a budget under $200, we explore training MoEs that deliver a 17.6-42% boost in memory efficiency!
Code: github.com/ZihanWang314/c…
Blog: notion.so/Chain-of-Exper…
Blog (Chinese): notion.so/Chain-of-Exper…
1/7🧵

🤖 Household robots are becoming physically viable. But interacting with people in the home requires handling unseen, unconstrained, and dynamic preferences, not just a complex physical domain. We introduce ROSETTA: a method to cheaply generate rewards for such preferences. 🧵⬇️