Jinghan Zhang


@jinghan23

CSE PhD student @hkust in her second year, advised by @junxian_he. Machine learning, NLP. Bluesky here: https://t.co/ECxlKtKTxz

Want to get an LLM agent to succeed in an OOD environment? We tackle the hardest case with SPA (Self-Play Agent). No extra data, tools, or stronger models. Pure self-play. We first internalize a world model via Self-Play, then we learn how to win by RL. Like a child playing with the env to simply learn about “what if I do this?” Below, we show our findings on: What is wrong with OOD environments? What are the key factors that allow self-play to succeed? (1/8)

🚀🔥 Thrilled to announce our ICML25 paper: "Why Is Spatial Reasoning Hard for VLMs? An Attention Mechanism Perspective on Focus Areas"! We dive into the core reasons behind spatial reasoning difficulties for Vision-Language Models from an attention mechanism view. 🌍🔍 Paper: arxiv.org/pdf/2503.01773 Code: github.com/shiqichen17/Ad… Website: shiqichen17.github.io/AdaptVis/

Sharing another #ICML25 paper of ours: “Bring Reason to Vision: Understanding Perception and Reasoning through Model Merging”! (1/5) We use model merging to enhance VLMs' reasoning by integrating math-focused LLMs, bringing textual reasoning into multi-modal models. Surprisingly, this avoids catastrophic forgetting and yields strong performance gains as a free lunch! 🍱 We further leverage merging as an interpretability tool and uncover a key insight: perception and chain-of-thought reasoning are naturally decomposed in the parameter space! 🌍🔍 Paper: arxiv.org/pdf/2505.05464 Code: github.com/shiqichen17/VL…
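For readers new to model merging, here is a minimal sketch of one common scheme, linear interpolation of parameters that two models share. It is an illustration only and not necessarily the method used in the paper; the function name `merge_linear`, the parameter names, and the toy weights are all hypothetical, and plain Python lists stand in for real tensors.

```python
from typing import Dict, List

Weights = Dict[str, List[float]]


def merge_linear(base: Weights, donor: Weights, alpha: float) -> Weights:
    """Return (1 - alpha) * base + alpha * donor for parameters both models share.

    Parameters that the donor lacks (or whose shapes differ) are copied
    from the base model unchanged.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    merged: Weights = {}
    for name, base_w in base.items():
        donor_w = donor.get(name)
        if donor_w is None or len(donor_w) != len(base_w):
            # Hypothetical case: e.g. a vision projector with no text-only counterpart.
            merged[name] = list(base_w)
            continue
        merged[name] = [(1.0 - alpha) * b + alpha * d for b, d in zip(base_w, donor_w)]
    return merged


# Toy stand-ins for a VLM and a math-focused LLM (names and values are made up).
vlm = {"llm.layer0": [1.0, 2.0], "vision.proj": [0.5]}
math_llm = {"llm.layer0": [3.0, 4.0]}
merged = merge_linear(vlm, math_llm, alpha=0.5)
```

In this sketch `alpha` controls how much of the donor's weights flow into the shared language-model parameters, while anything the donor does not have is left untouched.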

[1/4] RSA has been accepted to the #EMNLP2024 main track 🥳 - Enhance any protein understanding model with lightning-fast retrieval. - 373x faster than MSA, with on-the-fly computation and comparable performance. Preprint link: biorxiv.org/content/10.110… Code: github.com/HKUNLP/RSA

🎉Adapters 1.0 is here!🚀 Our open-source library for modular and parameter-efficient fine-tuning got a major upgrade! v1.0 is packed with new features (ReFT, Adapter Merging, QLoRA, ...), new models & improvements! Blog: adapterhub.ml/blog/2024/08/a… Highlights in the thread! 🧵👇

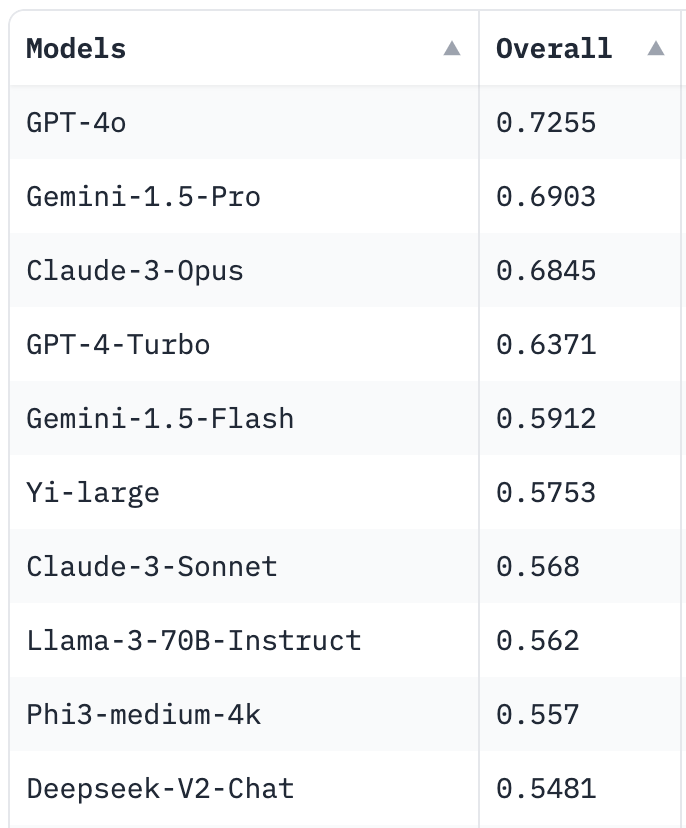

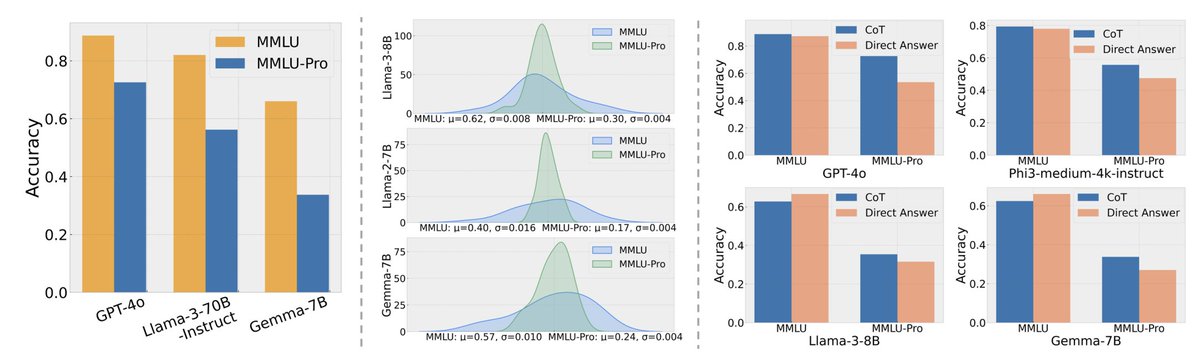

MMLU-Pro: A More Robust and Challenging Multi-Task Language Understanding Benchmark. In the age of large-scale language models, benchmarks like Massive Multitask Language Understanding (MMLU) have been pivotal in pushing the boundaries of what AI can achieve

🚨 Model Merging competition @NeurIPSConf! 🚀 Can you revolutionize model selection and merging? Let's create the best LLMs! 🧠✨ 💻 Come for science 💰 Stay for $8K 💬 Discord: discord.gg/dPBHEVnV 🔗 Sign up: llm-merging.github.io Sponsors: @huggingface @SakanaAILabs @arcee_ai

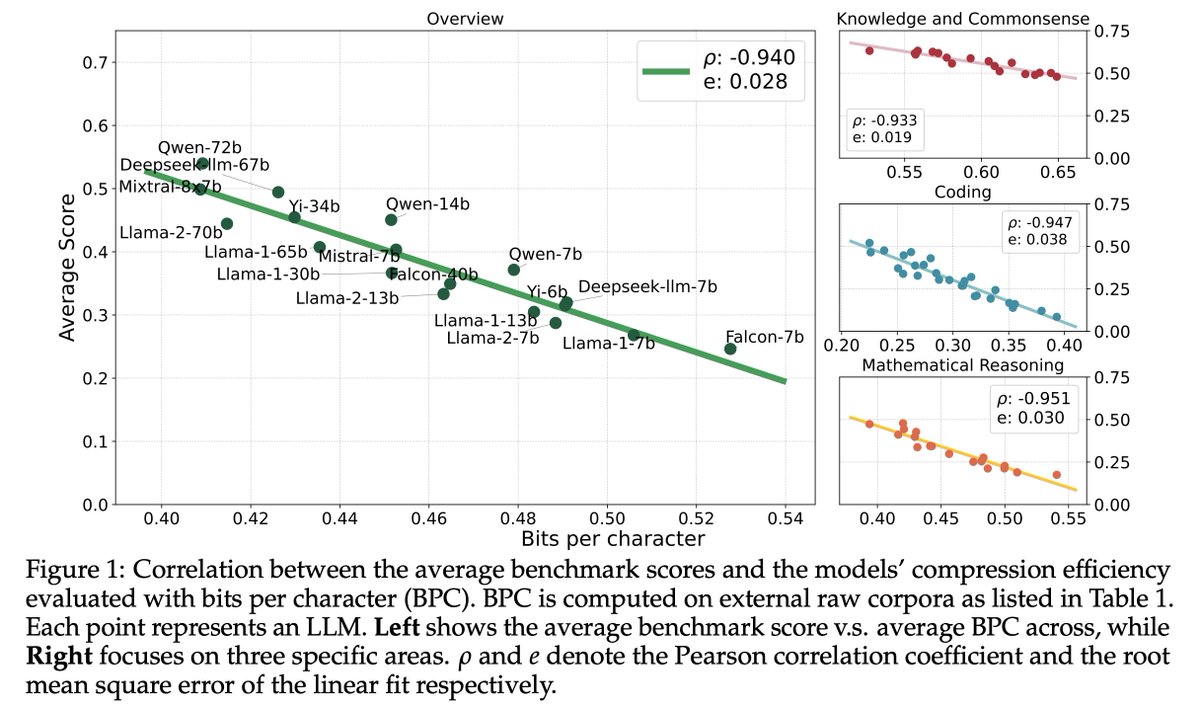

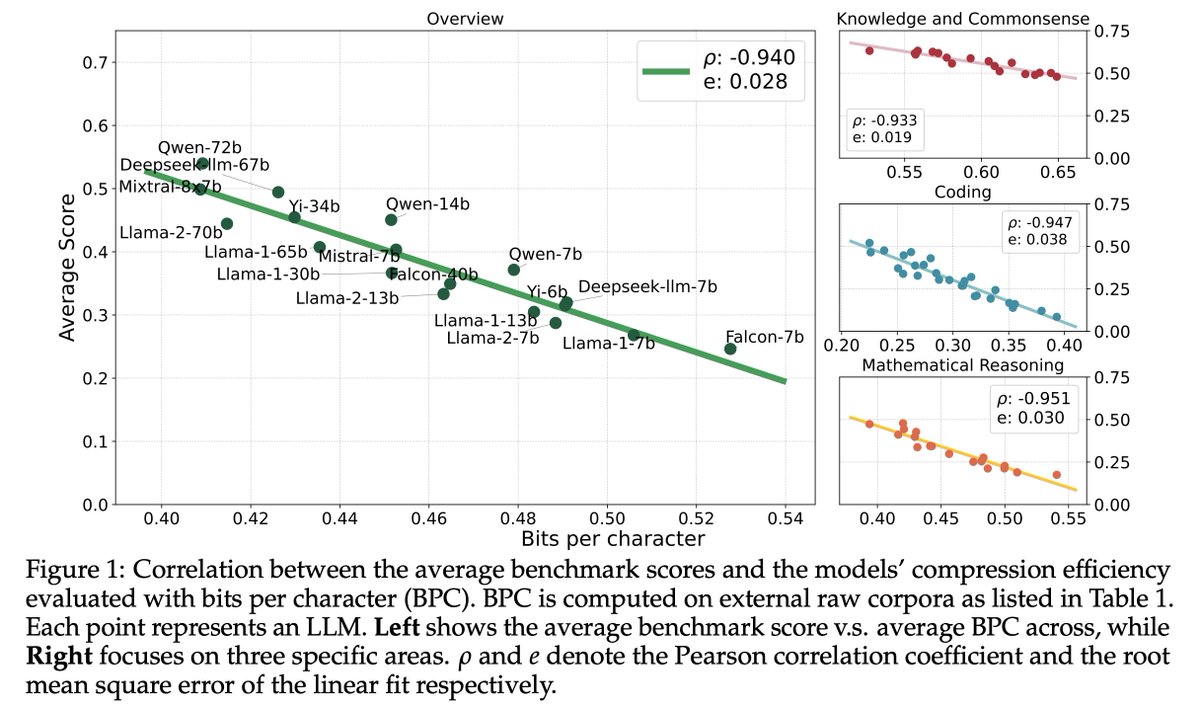

📢 Excited to finally be releasing my NeurIPS 2024 submission! Is Chinchilla universal? No! We find that: 1. language model scaling laws depend on data complexity 2. gzip effectively predicts scaling properties from training data As compressibility 📉, data preference 📈. 🧵⬇️
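To make "compressibility" concrete, here is a minimal sketch that measures it with Python's standard `gzip` module. It assumes compressibility is defined as compressed size divided by original size (lower means easier to compress); that definition, the function name `gzip_compressibility`, and the sample strings are illustrative assumptions rather than the author's exact setup.

```python
import gzip
import random


def gzip_compressibility(text: str) -> float:
    """Ratio of gzip-compressed size to raw size in bytes (assumed definition)."""
    raw = text.encode("utf-8")
    if not raw:
        raise ValueError("text must be non-empty")
    return len(gzip.compress(raw)) / len(raw)


# Highly repetitive text compresses well, so its ratio is low.
repetitive = "the cat sat on the mat. " * 200

# Random characters of the same length compress poorly, so the ratio is higher.
random.seed(0)
alphabet = "abcdefghijklmnopqrstuvwxyz "
noisy = "".join(random.choice(alphabet) for _ in range(len(repetitive)))

print(f"repetitive: {gzip_compressibility(repetitive):.3f}")
print(f"noisy:      {gzip_compressibility(noisy):.3f}")
```

The ratio gives a cheap, model-free proxy for how complex a dataset is, which is the kind of quantity the post relates to scaling behavior.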