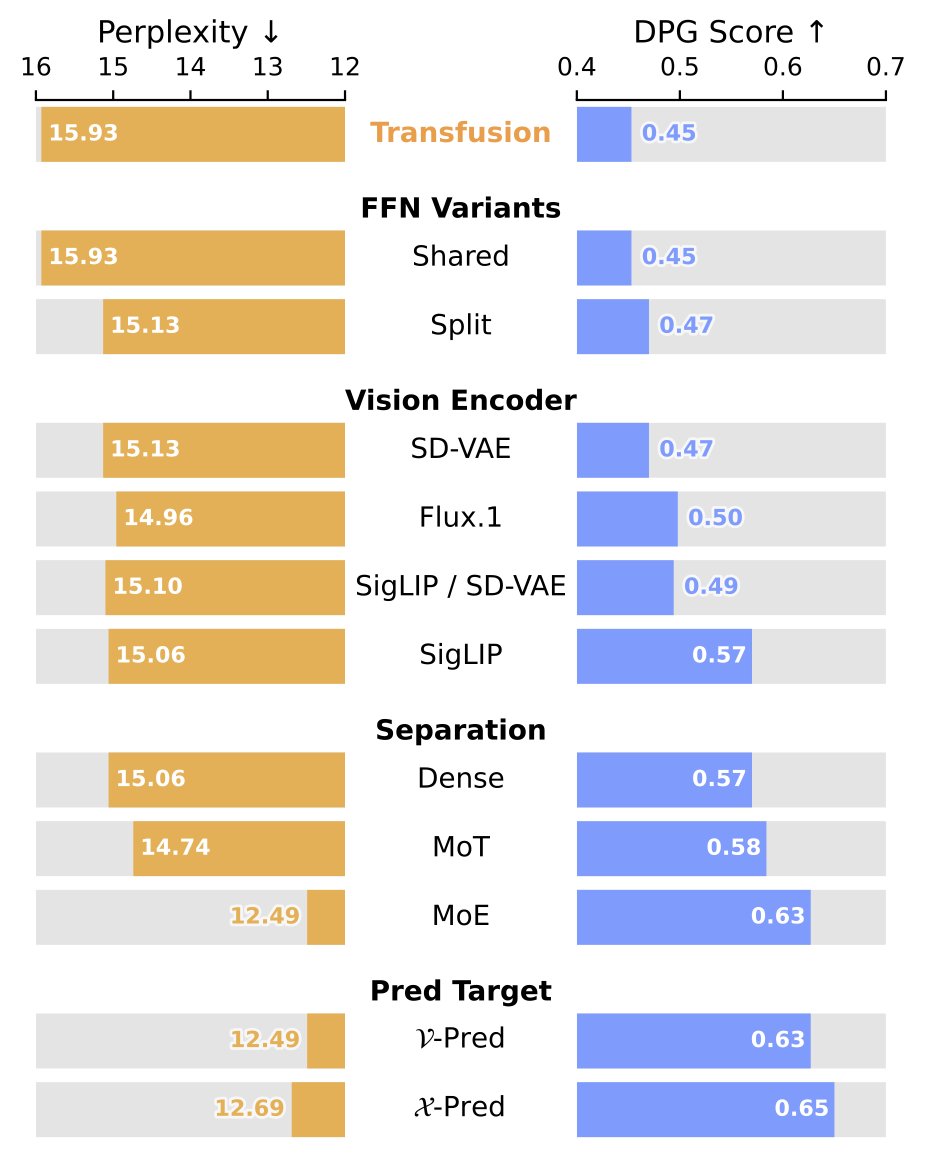

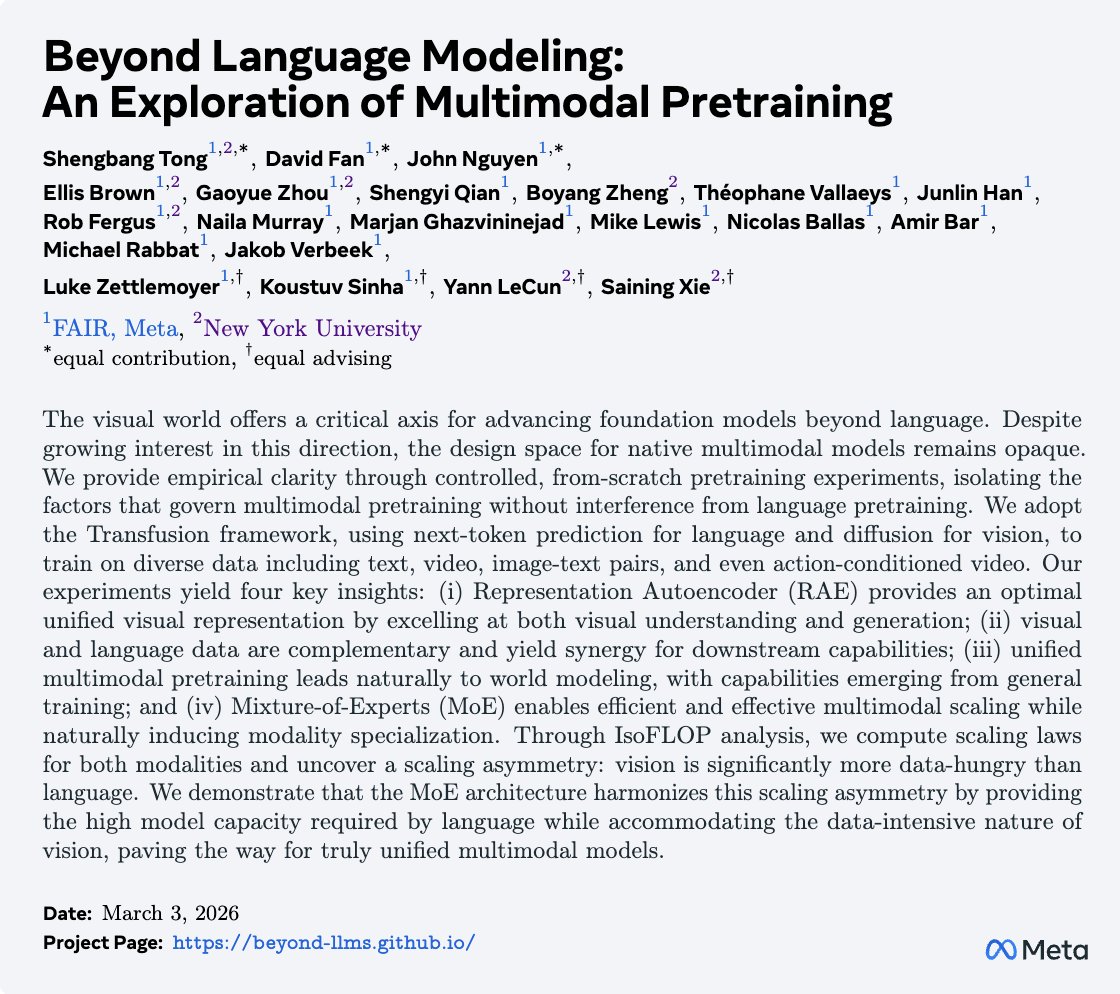

[1/9] What happens when you treat vision as a first-class citizen during multimodal pretraining? To find out, we studied the design space of training Transfusion-style models that input and output all modalities, from scratch. Here is what we learned about visual representations, data, world modeling, architecture, and scaling behavior! Paper: arxiv.org/abs/2603.03276 Website: beyond-llms.github.io @TongPetersb, @DavidJFan, @__JohnNguyen__, @ellisbrown, @GaoyueZhou, @JasonQSY, @boyangzheng, @webalorn, @han_junlin, @rob_fergus, @NailaMurray, @gh_marjan, @ml_perception, Nicolas Ballas, @_amirbar, Michael Rabbat, Jakob Verbeek, @LukeZettlemoyer, @koustuvsinha, @ylecun, @sainingxie