Mike Lewis

@ml_perception

Llama3 pre-training lead. Partially to blame for things like the Cicero Diplomacy bot, BART, RoBERTa, attention sinks, kNN-LM, top-k sampling & Deal Or No Deal.

Beginnings are very special. Today is an important day for @adaptionlabs. Today, a handful of one-size-fits-all models are optimized for the average use case. Averages erase the exceptional. Everything intelligent adapts. So should AI.

new post. there's a lot in it. i suggest you check it out

Want to learn about Llama's pre-training? Mike Lewis will be giving a Keynote at NAACL 2025 in Albuquerque, NM on May 1. 2025.naacl.org @naaclmeeting

Today I’m launching @reflection_ai with my friend and co-founder @real_ioannis. Our team pioneered major advances in RL and LLMs, including AlphaGo and Gemini. At Reflection, we're building superintelligent autonomous systems. Starting with autonomous coding.

One reason I'm excited about the Llama 3 paper is we finally have a description of what you need to do to get an LLM to reason sort-of well (human supervision, symbolic methods in post-training). GPT-4 and co. do not show that reasoning "emerges from distributional learning".

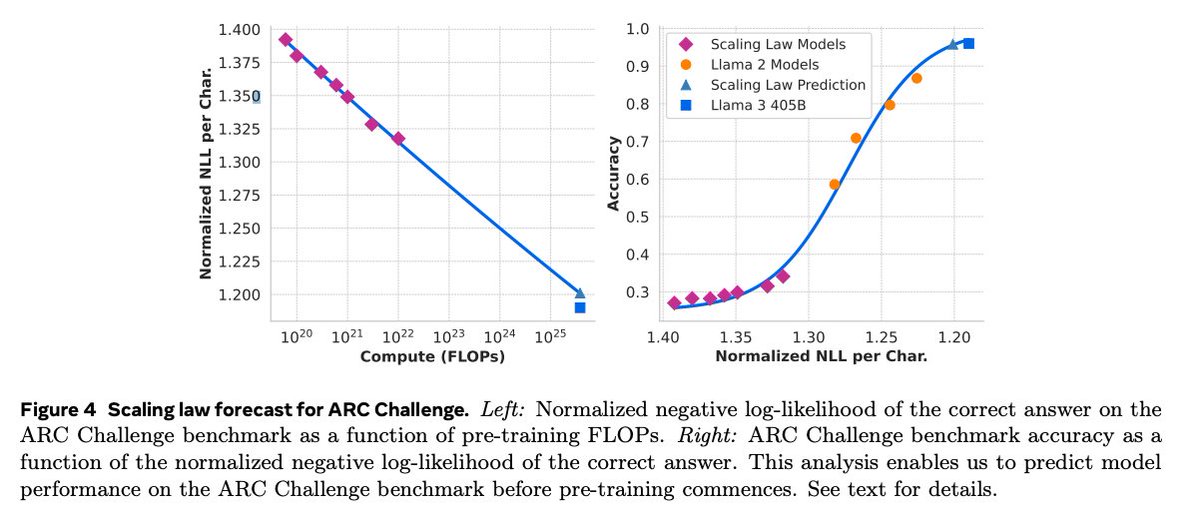

So excited for the open release of Llama 3.1 405B - with MMLU > 87, it's a really strong model and I can't wait to see what you all build with it! llama.meta.com. Also check out the paper here, with lots of details on how this was made: tinyurl.com/2z2cpj8m