Jiwei Liu

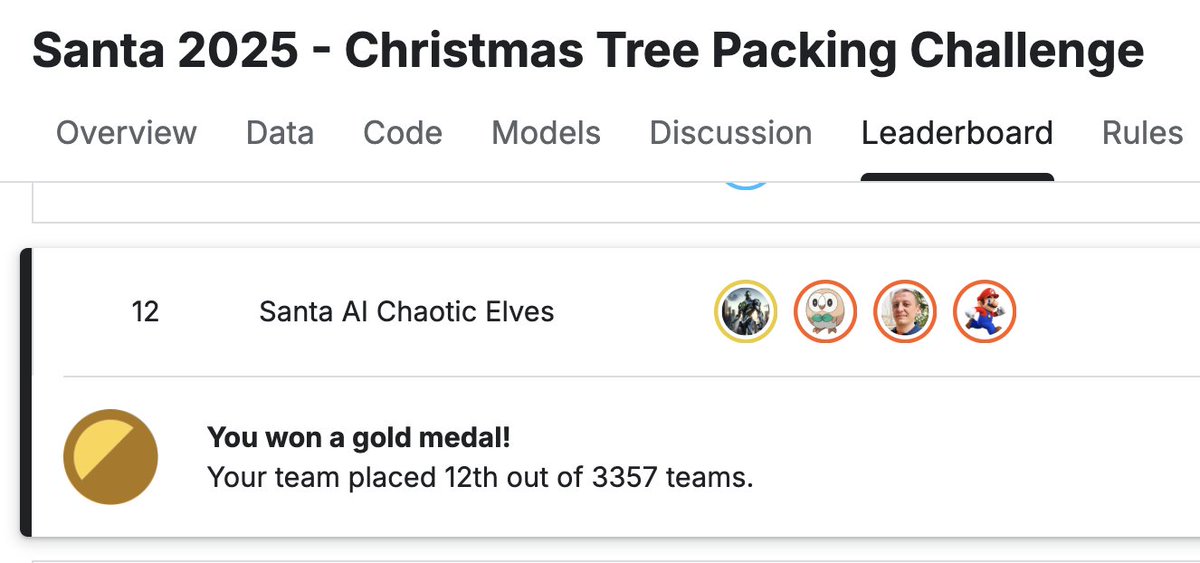

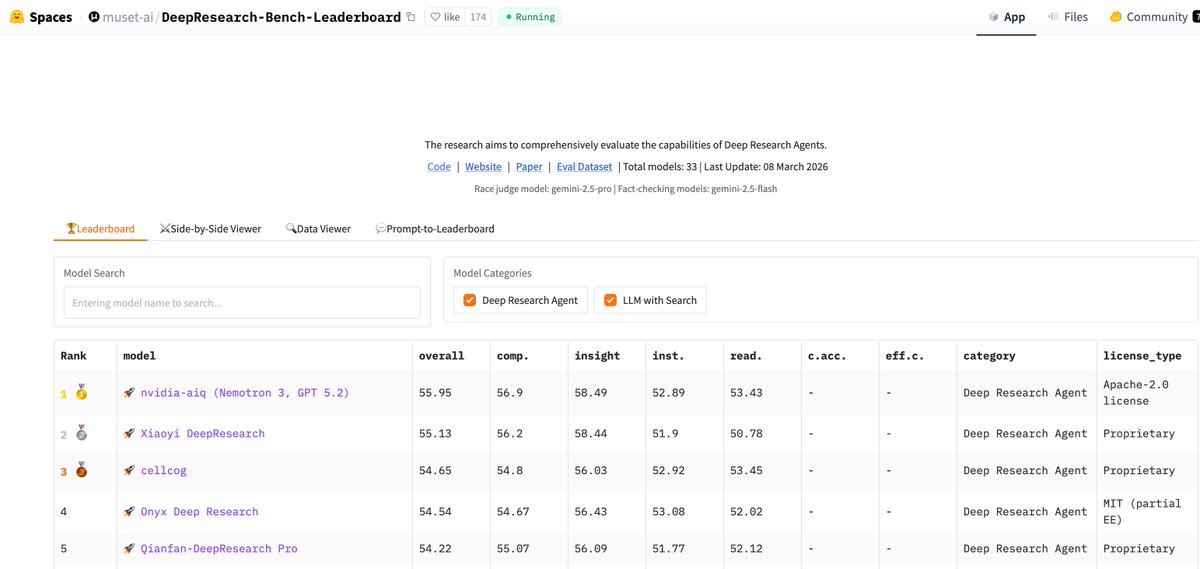

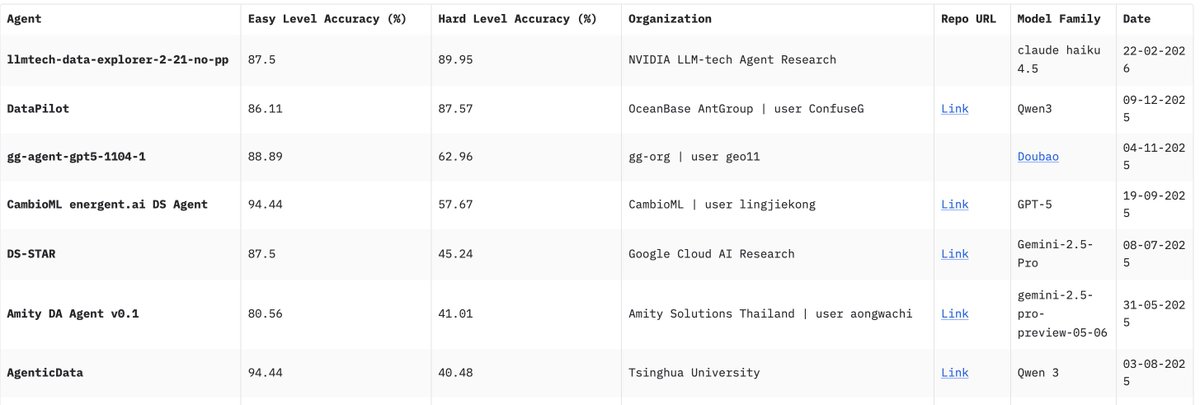

Very proud that a cross-NVIDIA effort led to top positions on Deep Research Bench I and II. DRB-I evaluates overall report quality (comprehensiveness, insight, instruction-following, and readability), whereas DRB-II is more difficult and tests information recall, analysis, and presentation. This was led by David Austin and @raja_biswas from my team. I contributed a bit. We will share more ASAP. huggingface.co/spaces/muset-a… agentresearchlab.com/benchmarks/dee…

We are open-sourcing KVzap, a SOTA KV cache pruning method that delivers near-lossless 2x to 4x compression. Since releasing KVpress, this is the first method I believe deserves a serious look for integration into @vllm_project, @sgl_project, or @nvidia TRT-LLM 👇 (1/5)

🌟 XGBoost 3.0 now has new capabilities for GPUs:
✅ Train on up to 1 TB of data on a single Grace Hopper GPU: up to 8x faster than CPU with External-Memory Quantile DMatrix.
✅ Faster and lighter: GPU hist/approx methods get an additional ~2x speedup and reduced memory use.
✅ Full feature support: External memory now supports categorical features, all objectives, and SHAP.
✅ Distributed mode: Experimental support for out-of-core training across a cluster.
Technical deep dive ➡️ nvda.ws/4le4ZcX