Big thanks to everyone on the team and our mentors 🌟

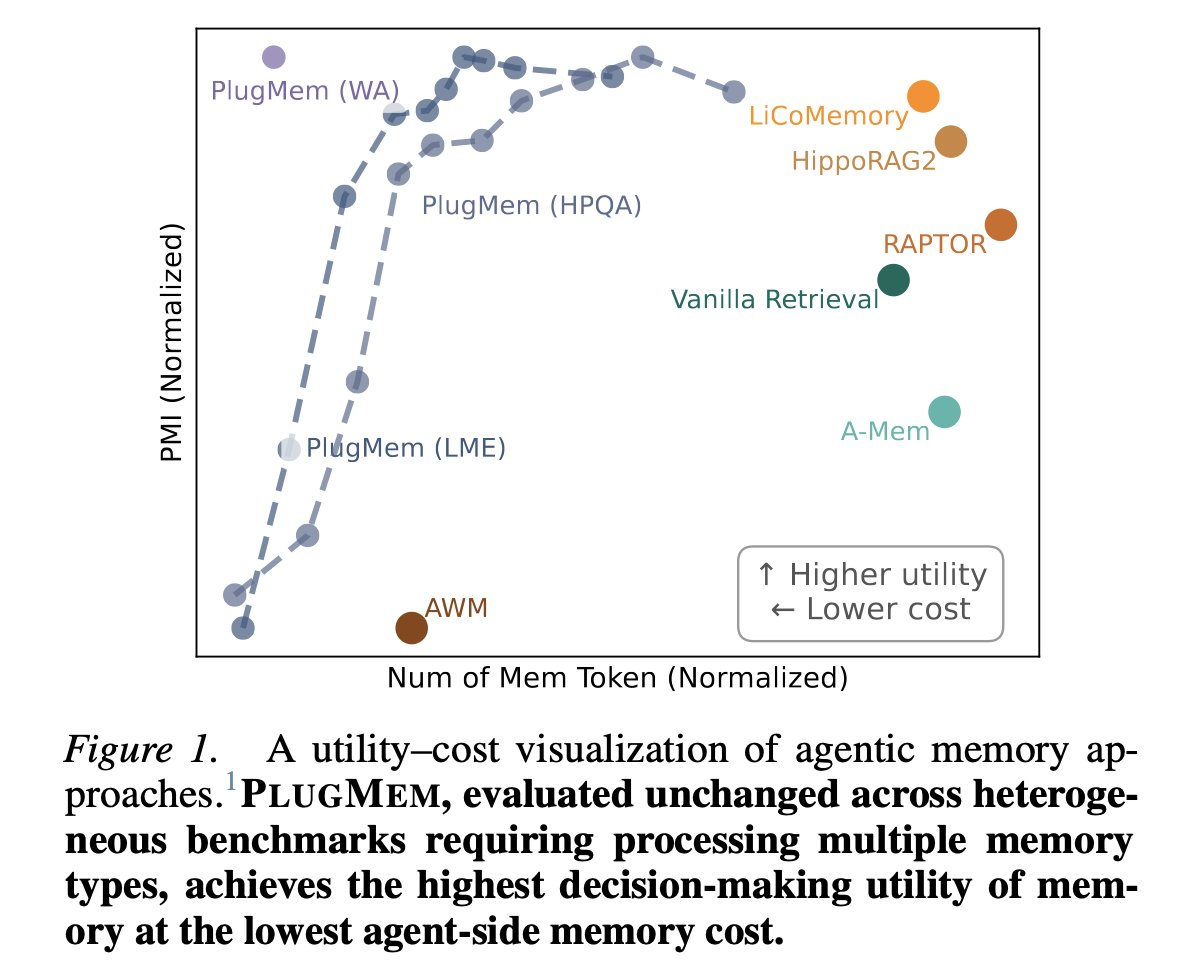

I’m thrilled that PlugMem has been accepted to ICML 2026. This is a big milestone for our work on memory for evolving agents.

What excites me just as much is that we are turning PlugMem into something people can actually build with: a truly plug-and-play memory module that works across real agent runtimes and is interpretable through visualization interfaces.

Making research accessible is part of pushing the frontier 🎆

Ke Yang (@EmpathYang)

Big PlugMem update 🧠

A plug-and-play memory module for LLM agents: turns raw trajectories into a knowledge graph your agent actually reasons over.

🎉 Accepted to ICML 2026
🔌 Drop it into OpenClaw 🦞, Claude Code, and other agent runtimes
🔍 Visualize memory · test retrieval · replay sessions
🥇 SOTA backbone on LongMemEval & HotpotQA, general enough to build on

Paper: arxiv.org/abs/2603.03296
Code: github.com/TIMAN-group/Pl…

#ICML2026 #LLM #Agents
