Joseph Jeesung Suh reposted

Joseph Jeesung Suh

30 posts

@JosephJSSuh

CS Grad student @ BAIR, UC Berkeley

Berkeley, CA · Joined June 2024

61 Following · 84 Followers

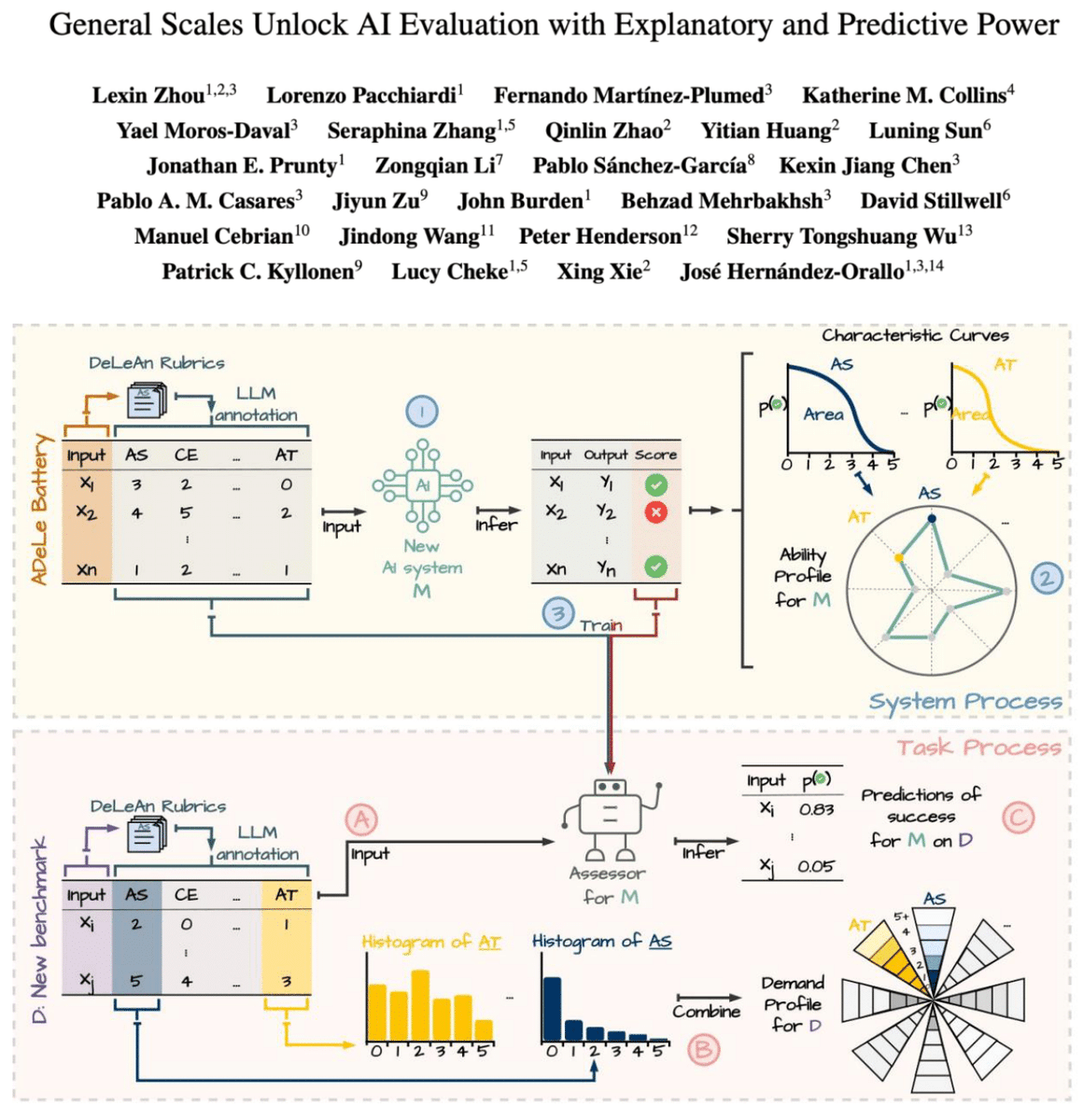

🎉 Excited to share that GEMS is accepted to ACL 2026 main!

We show that a lightweight GNN can match or outperform LLMs at simulating human behavior in discrete-choice settings, with added advantages such as efficiency and transparency.

Paper: arxiv.org/abs/2511.02135

Joseph Jeesung Suh@JosephJSSuh

LLMs have dominated recent work on simulating human behaviors. But do you really need them? In discrete‑choice settings, our answer is: not necessarily. A lightweight graph neural network (GNN) can match or beat strong LLM-based methods. Paper: arxiv.org/abs/2511.02135 🧵👇

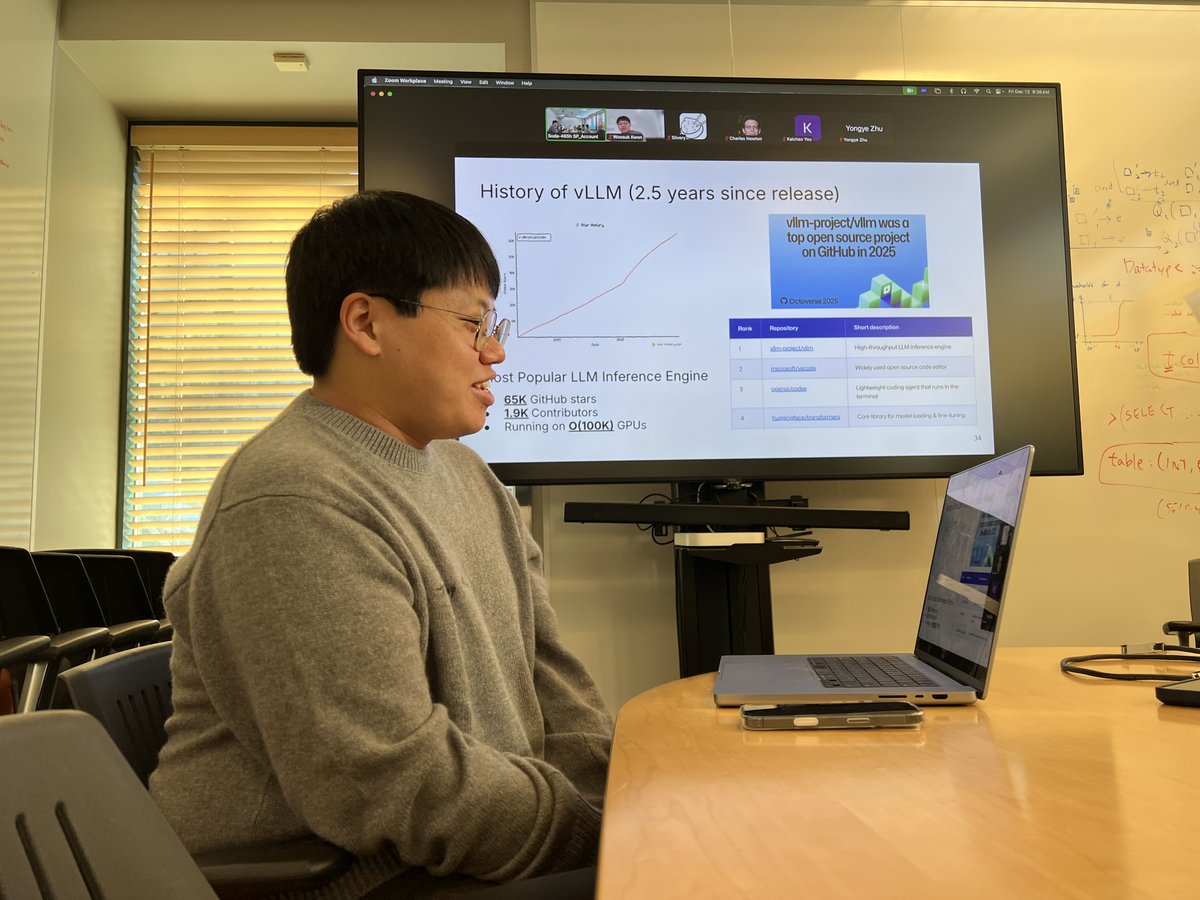

@profjoeyg @woosuk_k @vllm_project Congratulations, Woosuk! Wishing you all the best in your future journey.

Joseph Jeesung Suh reposted

I am excited to attend @woosuk_k's thesis defense on PagedAttention and the @vllm_project. Congratulations on such an incredibly high impact project!

Joseph Jeesung Suh reposted

📢 Come work with me at UC Berkeley @berkeley_ai! I’m recruiting PhD students in @Berkeley_EECS and @UCJointCPH. I work on AI for social good, simulating humans with AI, human-AI interaction, and applications in public health & social science.

serinachang5.github.io

(11/11) For those interested, here are the links:

Paper: arxiv.org/abs/2511.02135

Github: github.com/schang-lab/gems

Huge thanks to my amazing PI @serinachang5 and collaborator @SuhongMoon.


Joseph Jeesung Suh reposted

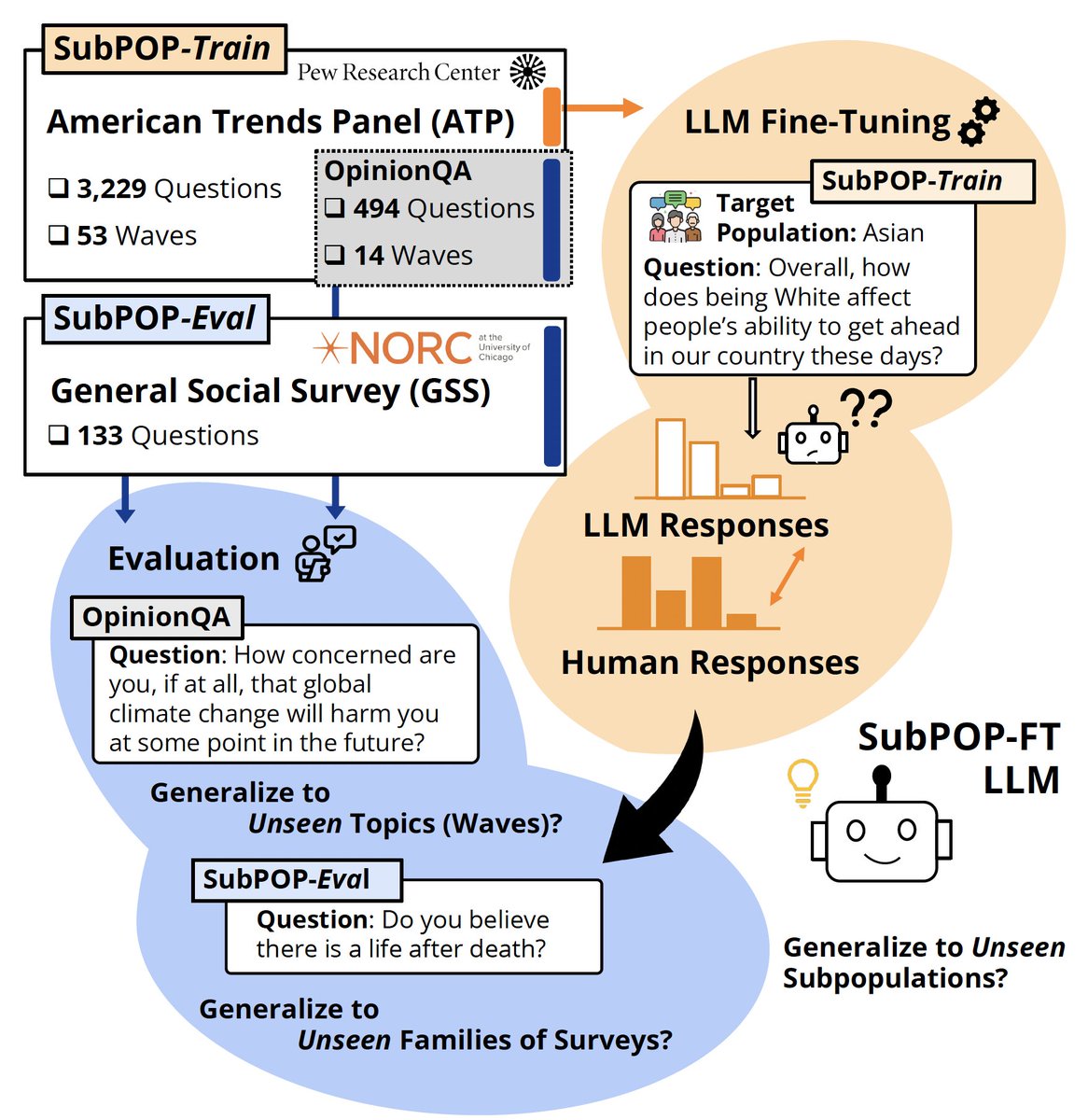

🤔 Do LLMs exhibit in-group↔out-group perceptions like us?

❓ Can they serve as faithful virtual subjects of human political partisans?

Excited to share our paper on taking LLM virtual personas to the *next level* of depth!

🔗 arxiv.org/abs/2504.11673 🧵

Joseph Jeesung Suh reposted

@Ugo_alves Hi! The largest model we fine-tuned was Llama-3-70B (70B parameters).

@JosephJSSuh Is my understanding correct that the largest model you've fine-tuned was 13B?
