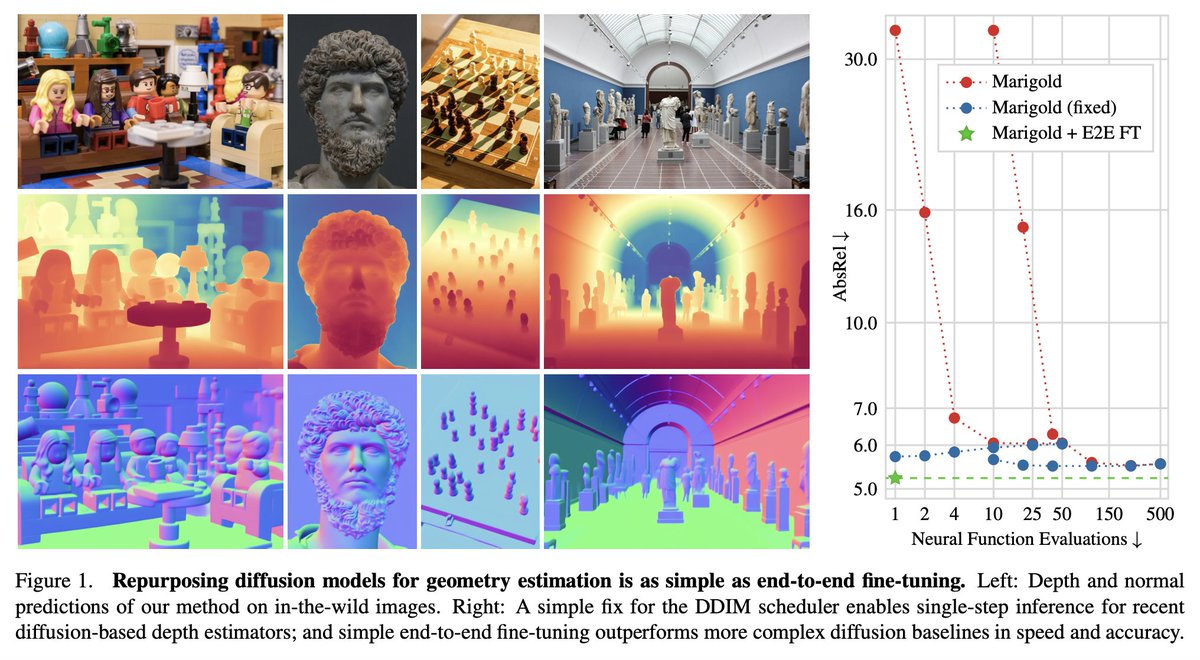

Introducing Marigold 🌼 - a universal monocular depth estimator, delivering incredibly sharp predictions in the wild! Based on Stable Diffusion, it is trained with synthetic depth data only and excels in zero-shot adaptation to real-world imagery.

Check it out:
🌐 Website: marigoldmonodepth.github.io
🤗 Hugging Face Space: huggingface.co/spaces/toshas/…
📄 Paper: arxiv.org/abs/2312.02145
👾 Code: github.com/prs-eth/marigo…

The team: Bingxin Ke (@KBingxin), yours truly (@AntonObukhov1), Shengyu Huang (@ShengyHuang), Nando Metzger (@NandoMetzger), Rodrigo Caye Daudt (@rcdaudt), and Konrad Schindler.

#ComputerVision #PRS #ETHZurich