Ghost

@KaihoNow @Eric_Scruffy Not going to happen. Next week is going to be a down week.

@gadgetzmo @TopStockAlerts1 Yes, it will go slightly down on open. But will shoot to 40 today

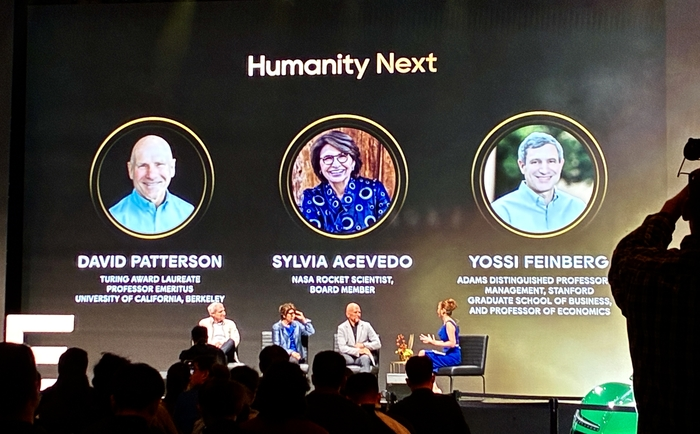

P.S. I didn’t know this before, but David A. Patterson is actually a huge figure.

- He coined the term “RISC” itself.

- At a time when CISC architectures had complex instructions and complex hardware, he argued that a simpler instruction set executed faster could be more efficient.

- This idea later had a major influence on architectures such as ARM, MIPS, and SPARC, and became one of the core design philosophies behind today’s smartphone, embedded, and server CPUs. In 2017, he also received the Turing Award together with John Hennessy for their contributions to the development of RISC architecture.

- And RISC is not the only thing he is famous for. Patterson, together with Randy Katz and Garth Gibson, was also one of the key figures behind the concept of RAID, or Redundant Array of Inexpensive/Independent Disks.

"The Next Bottleneck After HBM Is HBF"... A Computing Pioneer's Prediction

"I have been consistently paying close attention to High Bandwidth Flash (HBF). I'm also collaborating with semiconductor companies on this. HBF is highly likely to stand at the center of the next bottleneck — a surge in demand."

David Patterson, professor at UC Berkeley, Turing Award laureate, and widely recognized as the architect of RISC (Reduced Instruction Set Computing — an approach that simplifies instructions to improve processing efficiency), made these remarks on April 30 (local time) when he met with reporters in San Francisco immediately after delivering a keynote at the Dreamy Next event.

Asked about what comes after HBM (High Bandwidth Memory), which is currently in a supply-constrained bottleneck, Professor Patterson answered that HBF will emerge as the next focus. Specifically, he said, "Although a number of technical challenges still remain, the HBF being developed by companies such as SK hynix and SanDisk is a meaningful alternative in that it can deliver large capacity with low power consumption," adding, "Going forward, how efficiently data can be stored and delivered will become the critical variable." This past March, SK hynix announced that it had joined hands with U.S. flash memory company SanDisk to drive the global standardization of HBF.

Unlike HBM, which stacks DRAM, HBF is built by stacking NAND flash, a non-volatile memory. Their roles are also distinct: HBM acts as a fast aid to computation, while HBF focuses on storing, at high capacity, the vast amounts of data that AI processes.

HBF is drawing attention as the AI inference market grows. The AI market is broadly divided into training and inference. Training is the process of feeding massive amounts of data to teach an AI model. Inference is the stage in which the trained model produces results from new inputs.

In AI inference, the ability to continuously store and retrieve vast amounts of intermediate data, such as prior conversations, judgment outcomes, and task context, is crucial. This is because AI carries out reasoning by remembering context and building upon it. The problem is that all of this data is difficult to fit into HBM. Since HBM is optimized for handling data that is used immediately, its capacity is inherently limited. Moreover, given its high price, processing the enormous amounts of context data generated during inference using HBM alone would impose a significant cost burden. As a result, an environment has formed in which both HBM and HBF are needed at the same time, in a kind of division of labor.

Domestic experts in Korea also anticipate that the importance of HBF will grow going forward. At an HBF research and technology development strategy briefing held this past February, Kim Jung-ho, professor in the School of Electrical and Electronic Engineering at KAIST, stated, "If the central processing unit (CPU) was the core in the PC era and low-power technology was the core in the smartphone era, memory will be the core of the AI era," adding, "What determines speed is HBM, and what determines capacity is HBF." He further predicted, "From 2038 onward, demand for HBF will surpass that of HBM."

@jdemer64 @Eric_Scruffy Thanks for your input, I’m happy to take the other side of your trade. A down day or an up day doesn’t matter to me. I just needed an entry at the lows. I expect this to be above 40 in the immediate future. $APLD

@Eric_Scruffy @KaihoNow Tomorrow looks to be a down day unfortunately. Shorts are doubling down. Since it's Friday options expiration, expect minimal institutional support.

@citrini @ContrarianCurse Oh please. No one is shorting $NVDA on a long term basis. Now, pumping and dumping $NVDA, taking quick profits and running, yes.

Money is starting to flow downhill - a critical observation for the next few years.

The Mags may make all the money but they are spending it faster than they can make it.

Their free cash flows are cratering.

Best to follow the money and see who’s getting it. In this case, it’s following the AI trade and, more specifically, the data center and power economies.

Make money from capital-light business models like ads and software, then redirect it to asset-heavy, traditional infrastructure companies.

A complete reversal from the last 20 years is underway.

Chamath Palihapitiya@chamath

Blockbuster earnings from the Mags…BUT the equal weight is massively outperforming the weighted index today. 🤔

@robot_maniac 2) They are very transparent, unlike many other DCs. This can be easily seen in the docuseries they are making on the build via YouTube. It's quite a smart idea, as hyperscalers need reliability to grow. Money is no object at the moment with the current unreal demand.

@robot_maniac It's a clear long. There are not a lot of data centres that are even ready to build. $APLD is one of the first movers. They are already building and in my opinion will dominate market share (DC builds are delayed due to bottlenecks). They already have a contract with $MSFT.

Ghost retweeted

Data centres anyone? $APLD $IREN

Joseph Carlson@joecarlsonshow

This is so crazy it literally looks fake.

@aakashgupta And the ones that have started already and first movers will dominate the market. $APLD

A 5-year backlog on grid transformers just killed half of America's 2026 AI data centers.

Sightline Climate tracked 16 GW of 2026 US data center capacity announced across 140 projects. Only 5 GW is actually under construction. 11 GW sits in the "announced" stage with no physical progress despite typical build times of 12-18 months. 25% of those projects haven't disclosed a power strategy at all.

That last number is the tell. A quarter of "planned 2026 AI capacity" has no sourced answer to where the electrons come from. Call those projects what they are: vapor capex with a press release attached.

Nvidia is shipping. The gating constraint is high-voltage transformers, switchgear, and grid-tie batteries. Pre-2020 lead time on a high-power transformer was 24-30 months. Today it stretches to 5 years. Electrical equipment is under 10% of total data center cost and 100% of the bottleneck.

This breaks the standard analyst model. When a hyperscaler announces $50B of capex, the Street treats it as compute coming online in 18 months. If the transformer order wasn't placed in 2022, that money sits as commitment without capacity. You cannot pay for a transformer that doesn't exist yet.

The winners under this regime are whoever locked in power purchase agreements and electrical equipment orders 3-4 years ago, before anyone was modeling hundreds of megawatts of inference load. Everyone else is waiting in line behind them.

Second order is uglier. $50B of GPUs sitting unpowered depreciates against Nvidia's annual cadence while hyperscalers pay carrying costs on empty data center shells. Every quarter dark is a quarter of compounding waste.

The "we're 6 months from running out of compute" panic just became "we're 5 years from running out of transformers." Capital fixes one. Capital cannot manufacture a transformer.
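As a rough check on the thread's arithmetic, here is a minimal Python sketch. It uses only the 5 GW (under construction) and 11 GW (announced only) figures and the lead times quoted in the thread. The helper `earliest_delivery_year` and the example order year are hypothetical, and the sketch assumes equipment lead time is the only constraint, which is a simplification.

```python
# Figures quoted in the thread (GW of 2026 US data center capacity).
under_construction_gw = 5
announced_only_gw = 11

# Total implied by the two stages, and each stage's share of it.
total_gw = under_construction_gw + announced_only_gw
share_building = under_construction_gw / total_gw
share_announced = announced_only_gw / total_gw

print(f"total tracked:      {total_gw} GW")
print(f"under construction: {share_building:.0%}")
print(f"announced only:     {share_announced:.0%}")


def earliest_delivery_year(order_year: float, lead_time_months: float) -> float:
    """Hypothetical helper: earliest delivery year if lead time is the only constraint."""
    return order_year + lead_time_months / 12


# Lead times from the thread: 24-30 months pre-2020, up to 5 years (60 months) today.
# The order year 2026 is an illustrative assumption, not a figure from the thread.
for label, months in [("pre-2020, low", 24), ("pre-2020, high", 30), ("today", 60)]:
    print(f"{label:>15}: order in 2026 -> delivery around {earliest_delivery_year(2026, months)}")
```

The point of the sketch is only that the lead time, not the capex figure, sets the earliest date capacity can come online.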

Hedgeye@Hedgeye

BREAKING: Half of planned US data center builds have been delayed or canceled
