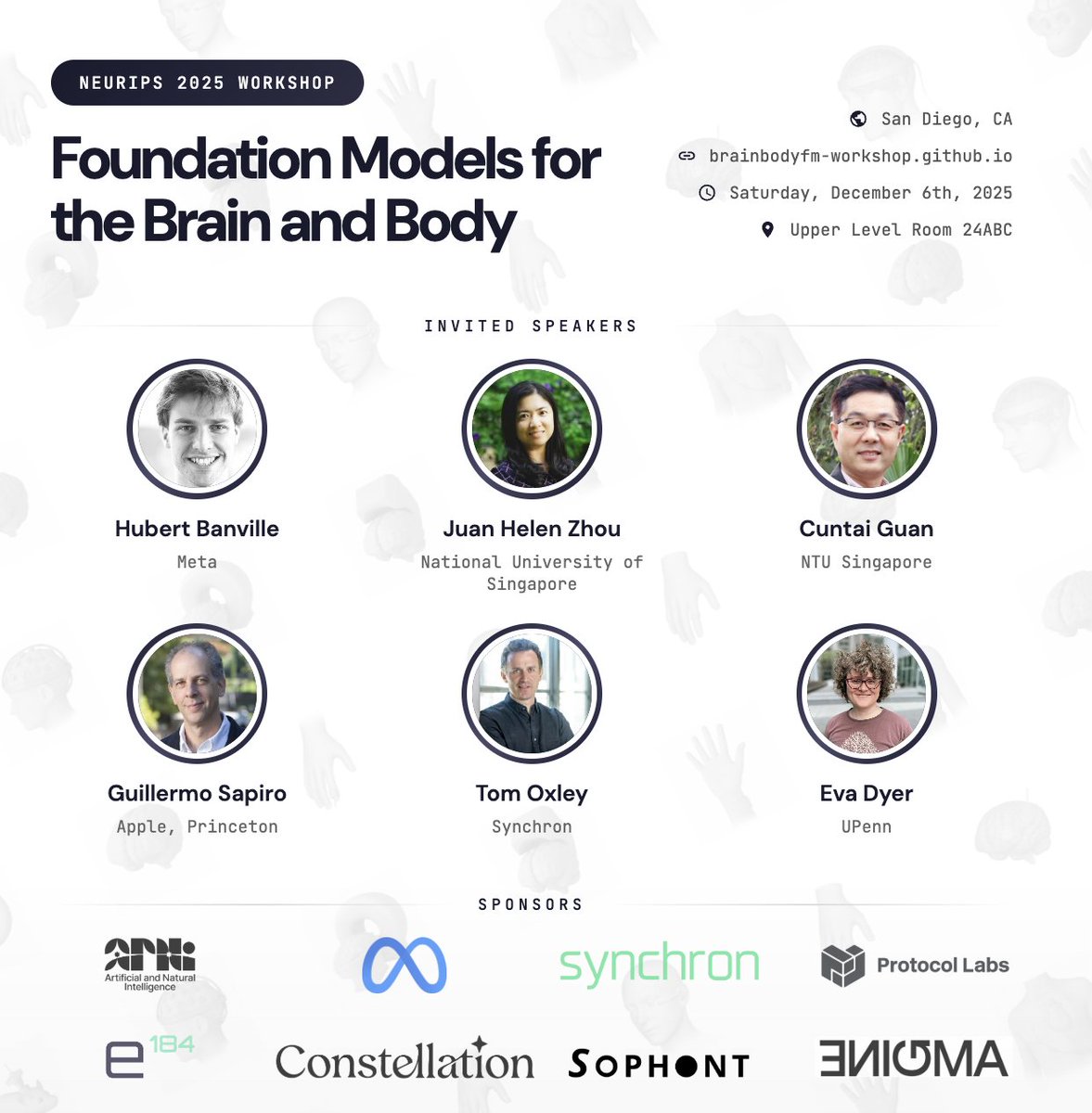

Is a universal brain decoder possible? Can we train a decoding system that easily transfers to new individuals/tasks? Check out our #NeurIPS2023 paper where we show that it’s possible to transfer from a large pretrained model to achieve SOTA 🧠! Link: poyo-brain.github.io 🧵