Khurram Pirov

212 posts

@KhurramCEO

PhD dropout MIT/MIPT CS/Quantum Physics • prev CV @ Samsung AI Bayesian Inference • Helping physical AI learn skills the way humans do

the robot sim-to-real overlay is near perfect, but the SAM3 mask tracking for the object trajectory is quite noisy :) might be a good idea to add some filters or try out TAPnext
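As a rough illustration of the "add some filters" suggestion, here is a minimal sketch that smooths a noisy object trajectory. It assumes the tracker output has already been reduced to one (x, y) mask centroid per frame. That format, the function names `median_filter` and `ema`, and the sample values are all hypothetical and not part of SAM3 or TAPnext. A sliding median removes single-frame jumps, and an exponential moving average then damps the remaining jitter.

```python
from statistics import median


def median_filter(points, window=3):
    """Replace each (x, y) with the per-axis median of its neighbors.

    The window is truncated at the ends of the sequence.
    """
    half = window // 2
    out = []
    for i in range(len(points)):
        lo, hi = max(0, i - half), min(len(points), i + half + 1)
        xs = [p[0] for p in points[lo:hi]]
        ys = [p[1] for p in points[lo:hi]]
        out.append((median(xs), median(ys)))
    return out


def ema(points, alpha=0.5):
    """Exponential moving average; lower alpha means heavier smoothing."""
    if not points:
        return []
    out = [(float(points[0][0]), float(points[0][1]))]
    for x, y in points[1:]:
        px, py = out[-1]
        out.append((alpha * x + (1 - alpha) * px,
                    alpha * y + (1 - alpha) * py))
    return out


# Hypothetical per-frame centroids with one tracking glitch at frame 3.
track = [(0, 0), (1, 1), (2, 2), (50, 50), (4, 4), (5, 5)]
smoothed = ema(median_filter(track), alpha=0.5)
```

The median step goes first because an average would smear a single bad frame across its neighbors instead of discarding it. The trade-off is lag: a lower `alpha` or a wider window gives a smoother path that trails fast motion.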

Indian factory workers wear head-mounted cameras to capture data for training robotics AI models. This image captures a blunt truth about robotics: teaching a machine to move in the real world is still painfully expensive. What looks dystopian at first is also a clue about the bottleneck.

Robots do not learn useful physical behavior from internet-scale text the way language models do. They need embodied data: hands reaching, wrists turning, objects slipping, fabric folding, tools resisting, people recovering from small mistakes in real time. That data is rare because reality is slow, messy, and costly. A robot fleet is expensive to buy, expensive to maintain, hard to supervise, and dangerous to scale in uncontrolled settings. Even teleoperation is costly, because every minute of human-guided movement requires hardware, operators, calibration, and failure recovery.

So companies go looking for the cheapest possible proxy for physical intelligence. First-person video from factory workers is not the same as robot action data, but it can still be valuable because it captures sequencing, posture, bimanual coordination, and the micro-adjustments that make real work look easy.

The frontier in robotics is not just better models. It is better pipelines for collecting reality itself. That is why warehouses, factories, kitchens, and repair benches matter so much: they are dense environments of repeated contact with the physical world, which is exactly what robots lack.

The unsettling part is that this turns human labor into training infrastructure twice over, first as work, then as data. And until embodied data becomes cheaper to gather than human motion is to record, robotics will keep learning from workers before it fully replaces them.

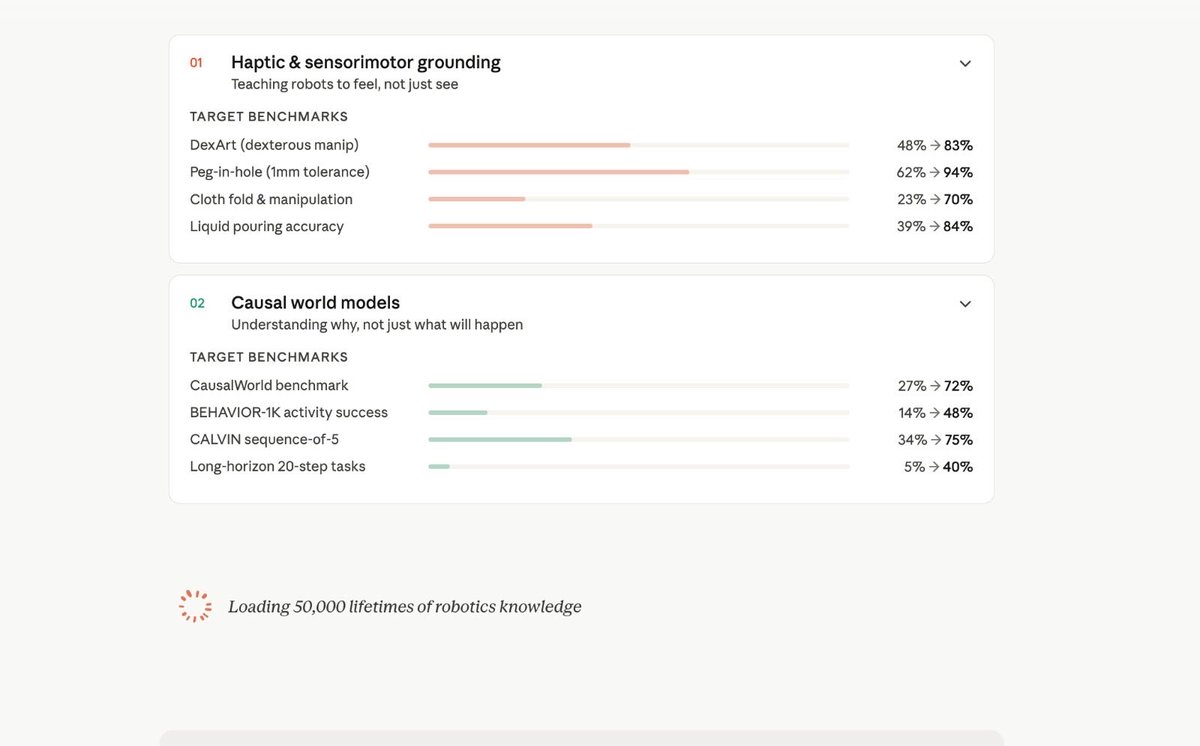

A child consumes more data in 1 month than any LLM has ever seen. Embodied agents learn by doing, but the data that teaches them is tactile, sensorial and causal. Such data does not exist. To make physical AGI possible, we need to generate this new data at an industrial scale. Enter Palatial: automated infrastructure that converts raw data into sensory-rich playgrounds for robots to learn in. Today, we’re unveiling Palatial PhysReady, the first automated sim asset generator (try it ⬇️) [1/5]