Jiawei Gu


Key finding: observation scaffolding is the most decisive factor for RL training success, more than algorithm choice.

✅ Adding captions to images → consistent improvement across ALL environments
❌ Removing game rules → can kill learning entirely
⚖️ GRPO vs GSPO vs SAPO? All improve, but no single algorithm dominates

HOW you present the task to the agent matters more than HOW you optimize it.

Text agents have their Gym. Vision agents? Not until now.

Introducing Gym-V: a unified gym-style platform for agentic vision research, with 179 procedurally generated environments across 10 domains.

One API to rule them all:
📦 Offline dataset
🤖 Agentic RL training
🔧 Tool-use training
👥 Multi-agent training
📊 VLM & T2I model evaluation

All under the same reset/step interface.

Key findings:
1. Observation scaffolding matters MORE than RL algorithm choice
2. Broad curricula transfer well; narrow training causes negative transfer
3. Multi-turn interaction amplifies everything

📄 Paper: arxiv.org/abs/2603.15432
💻 Code: github.com/ModalMinds/gym…

Open the thread for a deep dive! 🧵
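For readers unfamiliar with the reset/step convention mentioned above, here is a minimal sketch of what a gym-style vision environment with optional caption scaffolding might look like. This is not Gym-V's actual API: `ToyCountEnv`, `Observation`, and the `with_caption` flag are all hypothetical names invented for illustration.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    pixels: list             # toy "image": a grid of 0/1 values
    caption: Optional[str]   # optional text scaffold describing the task


class ToyCountEnv:
    """Hypothetical environment: count the bright (1) pixels in a small grid."""

    def __init__(self, size=4, with_caption=True, seed=0):
        self.size = size
        self.with_caption = with_caption
        self.rng = random.Random(seed)
        self.target = None

    def reset(self):
        # Procedurally generate a fresh grid for each episode.
        grid = [[self.rng.randint(0, 1) for _ in range(self.size)]
                for _ in range(self.size)]
        self.target = sum(map(sum, grid))
        caption = None
        if self.with_caption:
            caption = f"A {self.size}x{self.size} grid of 0/1 pixels; count the 1s."
        return Observation(grid, caption)

    def step(self, action):
        # Single-turn episode: reward 1.0 for the correct count, else 0.0.
        reward = 1.0 if action == self.target else 0.0
        done = True
        return None, reward, done, {"target": self.target}


env = ToyCountEnv(with_caption=True, seed=0)
obs = env.reset()
guess = sum(map(sum, obs.pixels))   # a perfect "agent" for this toy task
_, reward, done, info = env.step(guess)
```

Toggling `with_caption` is one way to run the kind of scaffolding ablation the post describes: same environment, same optimizer, different observation.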

1/7) We present PyVision-RL, a unified RL framework that stabilizes training and sustains interaction for agentic vision models with Python as the primitive tool. 🧵👇 arxiv.org/abs/2602.20739

I'm confused when people say research and engineering are separate. Be a good engineer first, then learn to become a researcher.

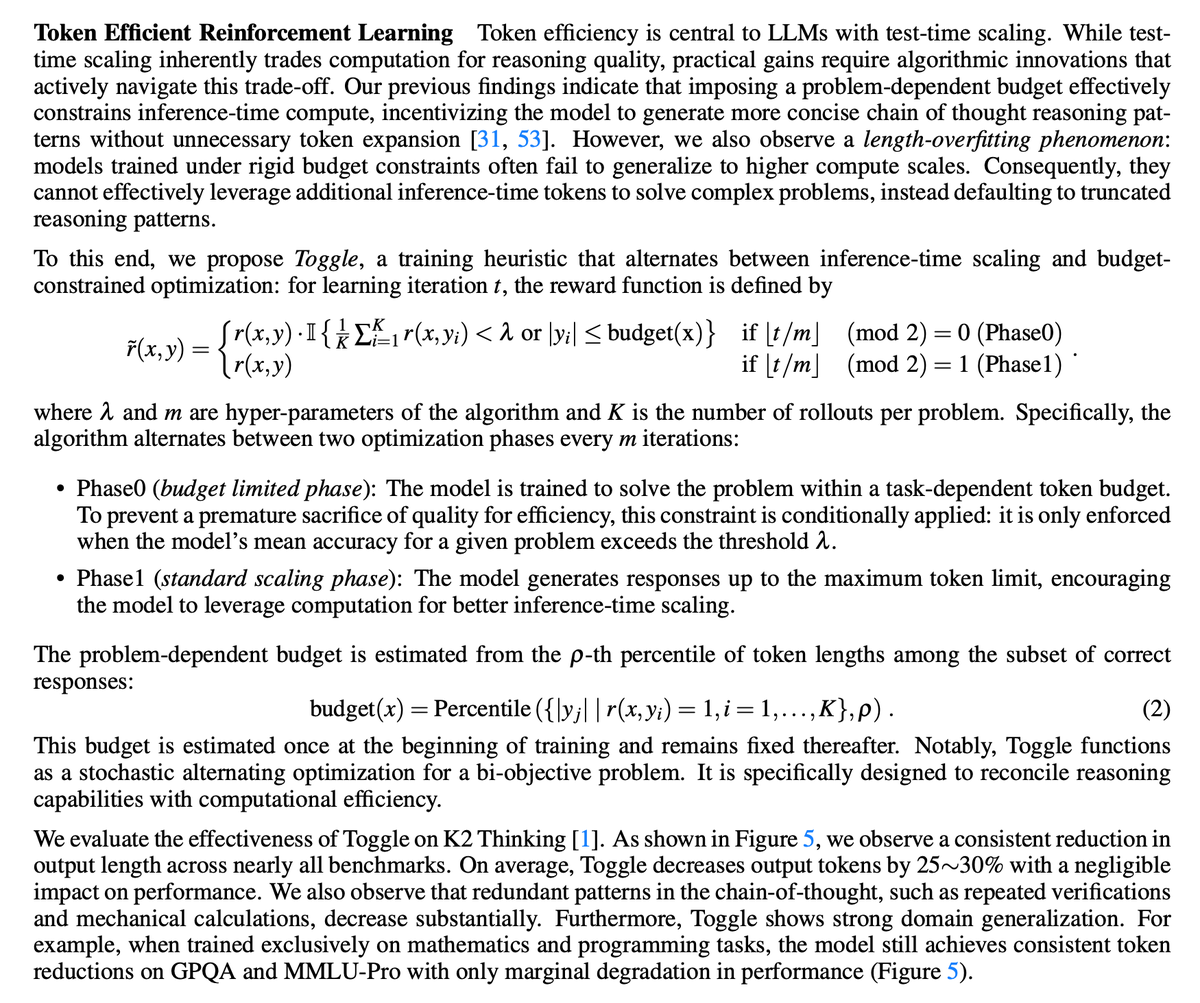

“What is the answer of 1 + 1?”

Large Reasoning Models (LRMs) may generate 1500+ tokens just to answer this trivial question. Too much thinking 🤯

Can LRMs be both Faster AND Stronger? Yes.

Introducing LASER💥: Learn to Reason Efficiently with Adaptive Length-based Reward Shaping

We propose LASER and its adaptive variants LASER-D / LASER-DE:
→ +6.1 accuracy on AIME24
→ –63% token usage

🔧 What we introduce:
- A unified framework that connects truncation and previous length rewards under one view
- A novel Length-bAsed StEp Reward (LASER) that softly encourages conciseness
- LASER-D: Adapts target lengths based on question difficulty + training dynamics
- LASER-DE: Encourages exploration on incorrect attempts

🔥 Unlike prior methods that trade efficiency for accuracy, LASER-D/E achieve Pareto-optimality:
✔️ Higher accuracy
✔️ Shorter outputs
✔️ Robust across model sizes (1.5B → 32B)
✔️ Strong generalization (GPQA, LSAT, MMLU)

Example: the original LRM needs 1490 tokens to answer “1 + 1” (with many self-reflections and finger counting 🤦)

LASER-D model?
✅ Answers directly in 76 tokens
✅ No lost reasoning ability
✅ More concise and intelligent

Check it out:
📄 Paper: huggingface.co/papers/2505.15…
💻 Code & Models: github.com/hkust-nlp/Laser
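To make the idea of a length-based step reward concrete, here is a rough sketch of one plausible reading: a correct answer earns a base reward, plus a fixed bonus if it stays within a target length. The function names, the `bonus` value, and the exact reward form are assumptions for illustration, not the formulation from the LASER paper. A hard-truncation reward is shown alongside for contrast.

```python
def truncation_reward(is_correct, num_tokens, max_len):
    """Hard cutoff (assumed form): an over-length response earns nothing."""
    if num_tokens > max_len:
        return 0.0
    return 1.0 if is_correct else 0.0


def length_step_reward(is_correct, num_tokens, target_len, bonus=0.5):
    """Step-shaped length reward (assumed form, hypothetical bonus value).

    Correct answers always keep their base reward; staying at or under
    target_len adds a bonus, so long-but-correct answers are not zeroed out.
    """
    base = 1.0 if is_correct else 0.0
    if is_correct and num_tokens <= target_len:
        return base + bonus
    return base


# Token counts from the post's example: 1490 (original) vs 76 (LASER-D).
long_answer = length_step_reward(True, 1490, target_len=1000)
short_answer = length_step_reward(True, 76, target_len=1000)
```

Under this sketch the long correct answer still scores above an incorrect one, which is one way to read "softly encourages conciseness"; the adaptive variants would then vary `target_len` per question instead of fixing it.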

⛔️ Can MLLMs truly learn WHEN and HOW to use tools?

🛠 AdaReasoner says: yes!!

Like… actually decide:
- “Should I call a tool right now?”
- “Which one?”
- “How many times?”

What happened surprised us: a 7B model beats GPT-5 on visual tool-reasoning, and shows adaptive behaviors we never programmed.

(1/17)🧵👇
📄 arxiv.org/abs/2601.18631
🌐 adareasoner.github.io

How to get AI to make discoveries on open scientific problems? Most methods just improve the prompt with more attempts. But the AI itself doesn't improve. With test-time training, AI can continue to learn on the problem it’s trying to solve: test-time-training.github.io/discover.pdf

Introducing Agentic Vision: a new frontier AI capability in Gemini 3 Flash that converts image understanding from a static act into an agentic process.

By combining visual reasoning with code execution, one of the first tools supported by Agentic Vision, the model grounds answers in visual evidence and delivers a consistent 5-10% quality boost across most vision benchmarks.

Here’s how the agentic ‘Think, Act, Observe’ loop works:
- Think: The model analyzes an image query then architects a multi-step plan
- Act: The model then generates and executes Python code to actively manipulate or analyze images
- Observe: The transformed image is appended to the model's context window, allowing it to inspect the new data before generating a final response to the initial image query

Learn more about Agentic Vision and how to access it in our blog ⬇️ blog.google/innovation-and…
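The Think, Act, Observe loop described above can be sketched in a few lines. This is a toy illustration only, not Gemini's implementation: `stub_model` is a hypothetical stand-in for the model, and a fixed `crop` helper replaces the generated-and-executed Python code so the example stays self-contained.

```python
def crop(image, top, left, height, width):
    """Return a sub-grid of a toy image stored as a list of rows."""
    return [row[left:left + width] for row in image[top:top + height]]


def stub_model(context):
    """Hypothetical stand-in for a vision model.

    Looks at the latest image in context and either requests a crop
    (zoom in) or returns a final answer: the count of bright pixels.
    """
    latest = context[-1]
    if len(latest) > 2:
        return {"action": "crop", "args": (0, 0, 2, 2)}
    return {"answer": sum(map(sum, latest))}


def think_act_observe(image, max_steps=3):
    context = [image]
    for _ in range(max_steps):
        decision = stub_model(context)                  # Think
        if "answer" in decision:
            return decision["answer"], context
        new_image = crop(context[-1], *decision["args"])  # Act
        context.append(new_image)                       # Observe
    return None, context                                # step budget exhausted


image = [
    [1, 0, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
answer, context = think_act_observe(image)
```

The key structural point is that each transformed image is appended to the context rather than replacing it, so the model can inspect both the original and the zoomed view before answering.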
