Pinned Tweet

nananaa.eth

1.5K posts

@LHW0803

@Web3MapInk / https://t.co/JtAmKTijLJ / @DecipherGlobal / Medium: https://t.co/hmlGbLIBzG

Joined April 2022

691 Following · 734 Followers

nananaa.eth retweeted

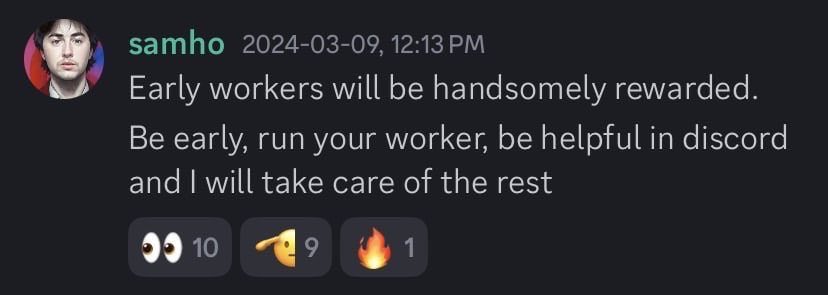

We caught the price drop of @AsteroidCoinOG today.

Alerts went out within 15 seconds.

A chance at 6x.

Only 2 days left for the free pro event.

Try web3map.ink

nananaa.eth retweeted

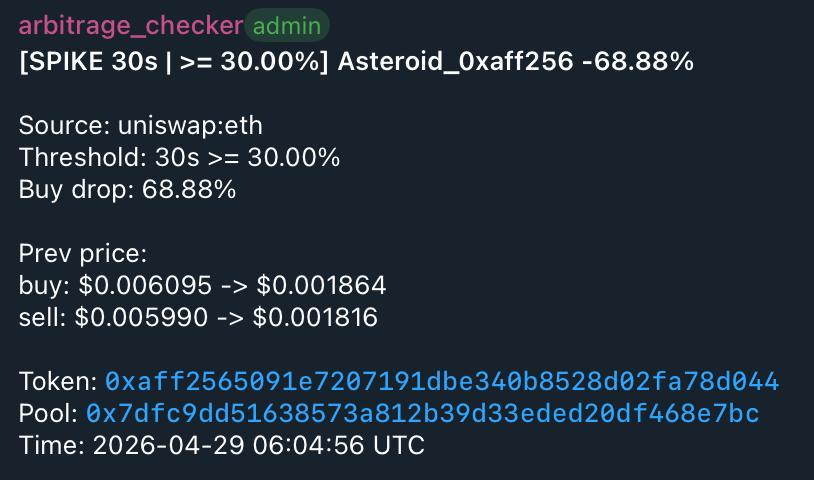

April 23, 2026 / Arbitrage Recap — cmETH (Mantle <-> Bybit)

- Event Time: 23 Apr 01:15 ~ 09:20

- Detection Time: 23 Apr 01:15

- Cause: Possible market maker error

- Route / Execution: Buy on Bybit, sell on Mantle Moe (or another dapp)

pool: 0x38e2a053e67697e411344b184b3abae4fab42cc2

token: 0xe6829d9a7ee3040e1276fa75293bde931859e8fa

- Expected Profit: ~1% x n times

Apart from Web3 Map, few platforms track prices all the way to Mantle, and it appears that Bybit’s price remained artificially low due to a market maker error.

The market maker on that exchange made a similar mistake on April 17, 2026 as well, so this pair warrants close monitoring.

source: dexscreener.com/mantle/0x3d887…
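
The expected profit of roughly 1% repeated n times can be illustrated with a small compounding calculation. This is a minimal sketch, assuming every round trip nets the same edge and the full balance is recycled each time; the function name and the `cost_per_trip` parameter are hypothetical and not part of the recap.

```python
def repeated_arb_return(edge_per_trip: float, trips: int, cost_per_trip: float = 0.0) -> float:
    """Total return after `trips` round trips, compounding the net edge each time.

    edge_per_trip: gross edge per round trip as a fraction (0.01 for 1%).
    cost_per_trip: assumed fees and gas per round trip as a fraction (hypothetical).
    """
    net = edge_per_trip - cost_per_trip
    return (1.0 + net) ** trips - 1.0


if __name__ == "__main__":
    for n in (1, 5, 10):
        print(n, f"{repeated_arb_return(0.01, n):.4%}")
```

In practice the gap narrows as trades go through, so a constant edge per trip is an optimistic simplification.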

nananaa.eth retweeted

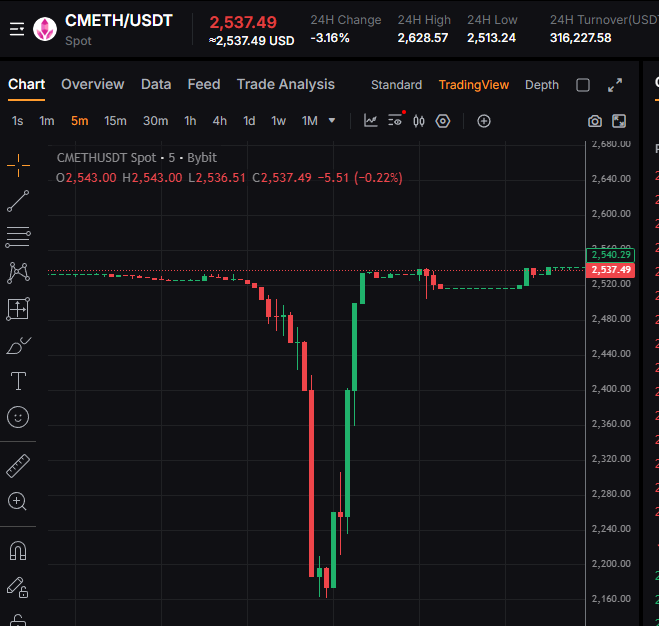

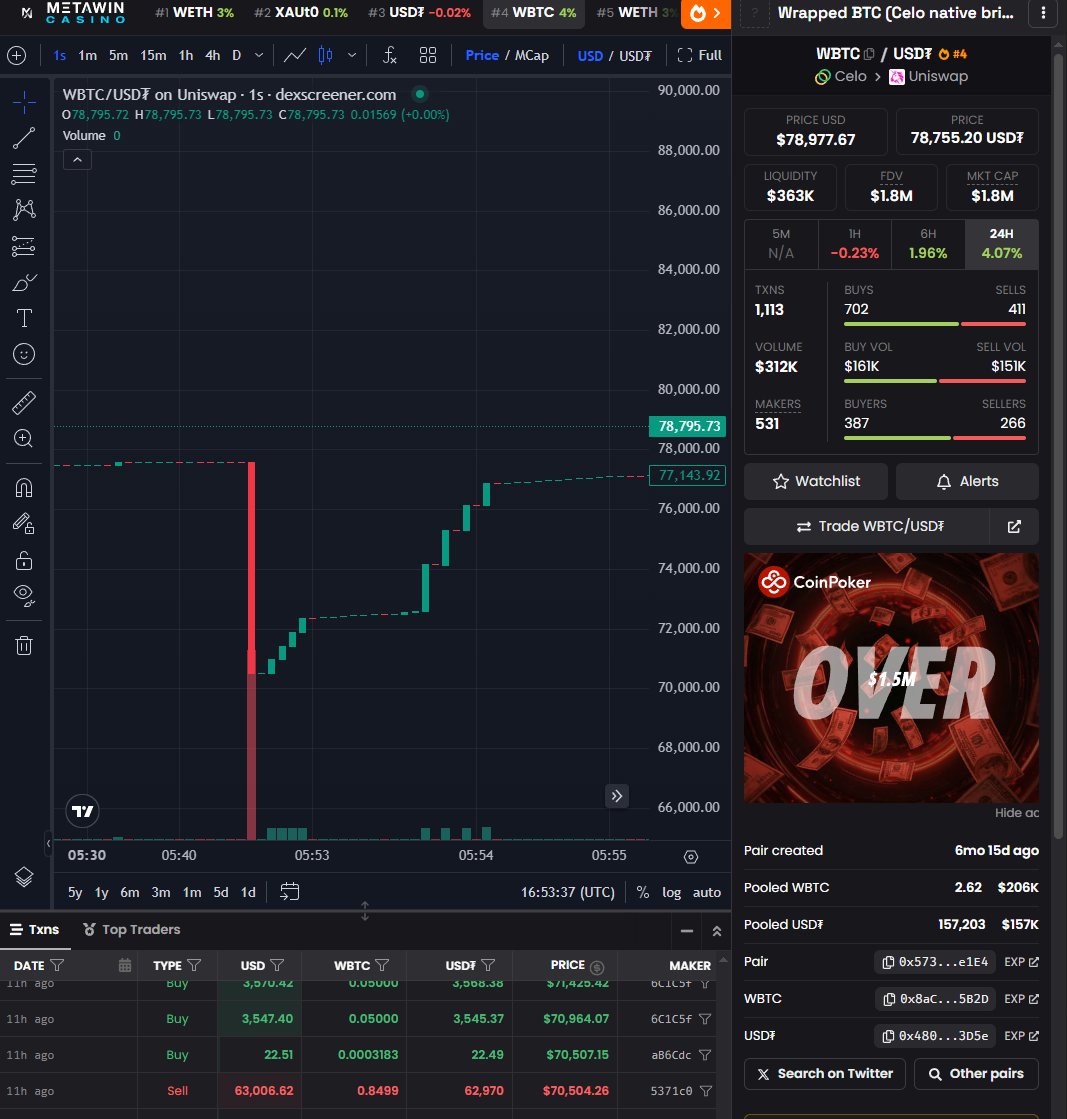

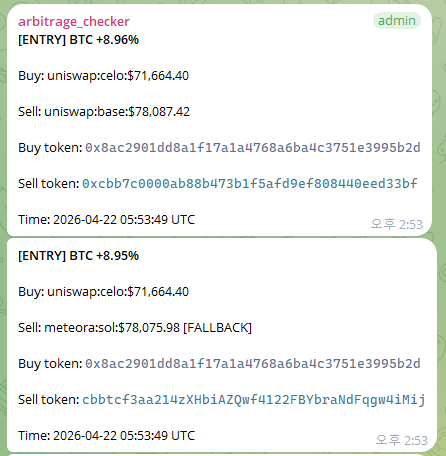

April 22, 2026 / Arbitrage Recap — wBTC (celo)

- Event Time: 22 Apr 05:52:39

- Detection Time: 22 Apr 05:52:54 (+15 sec)

- Cause: Fat-finger dump of 60k into a small-TVL pool

- Route / Execution: Buy on Uniswap on Celo

pool: celoscan.io/address/0x5733…

token: celoscan.io/address/0x8aC2…

Because the gap was caused by a simple large sell-off mistake, no bridge to another chain was required.

- Expected Profit: +8.96%

There was sufficient liquidity: it was possible to swap well over $1,000 during this event.

At peak profitability, buying the 0.85 BTC sold in the fat-finger trade required an investment of around $63,000, with an expected profit of roughly $2,000.

(The timestamp shown in the image is based on an unsynchronized server clock and may differ from the actual on-chain time.)

source: dexscreener.com/celo/0x57332c2…
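
The detection delay and a first-order profit estimate from this recap can be checked with a few lines of arithmetic. This is a rough sketch: the function names are hypothetical, the timestamps are written in ISO form with the year taken from the recap title, and the profit estimate assumes the whole trade size captures the peak gap with no fees.

```python
from datetime import datetime


def detection_latency_seconds(event_iso: str, detected_iso: str) -> float:
    """Seconds between the on-chain event and the alert."""
    event = datetime.fromisoformat(event_iso)
    detected = datetime.fromisoformat(detected_iso)
    return (detected - event).total_seconds()


def naive_profit(notional_usd: float, gap: float) -> float:
    """Profit if the whole notional captured the full gap (no price impact, no fees)."""
    return notional_usd * gap


if __name__ == "__main__":
    # Event and detection times from the recap above.
    print(detection_latency_seconds("2026-04-22T05:52:39", "2026-04-22T05:52:54"))
    # A $1,000 swap at the quoted +8.96% peak gap.
    print(round(naive_profit(1_000, 0.0896), 2))
```

Because each purchase moves the pool price back toward fair value, this estimate overstates the profit for large sizes.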

nananaa.eth retweeted

Introducing @Web3MapInk — a platform for detecting cross-chain arbitrage opportunities and potential exploits.

We detect opportunities across 10,000+ price sources.

(Detected the USR and DOT exploits within 30 seconds.)

Page: web3map.ink

Learn more 👇

nananaa.eth retweeted

Introducing Privacy Boost: onchain privacy SDK for enterprise.

The first privacy offering for @Optimism. Now live on OP Mainnet.

Enterprises need regulatory-friendly privacy.

nananaa.eth retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
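
A backlink index over such a wiki can be built without an LLM once the .md files exist. This is a minimal sketch, assuming notes link to each other with Obsidian-style `[[Note Name]]` links; the function names and the directory layout are hypothetical, and the post does not say how its backlinks are actually produced.

```python
import re
from collections import defaultdict
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]] and [[Target#Heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def load_notes(root: str) -> dict[str, str]:
    """Read every .md file under `root`, keyed by file name without extension."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}


def build_backlinks(notes: dict[str, str]) -> dict[str, list[str]]:
    """Map each link target to the sorted list of notes that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for name, text in notes.items():
        for target in WIKILINK.findall(text):
            target = target.strip()
            if target != name:  # ignore self-links
                backlinks[target].add(name)
    return {target: sorted(sources) for target, sources in backlinks.items()}


if __name__ == "__main__":
    demo = {
        "arbitrage": "Related: [[bridges]] and [[mempool|the public mempool]].",
        "bridges": "See [[arbitrage]].",
    }
    for target, sources in sorted(build_backlinks(demo).items()):
        print(target, "<-", sources)
```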

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searchers), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
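
A "small and naive" search over the wiki could look something like the following. This is only a sketch of one possible approach (plain term-count scoring over note text), not the author's tool; the function names and the in-memory `notes` mapping are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(notes: dict[str, str], query: str, top_k: int = 5) -> list[tuple[str, int]]:
    """Rank notes by how many times the query terms occur in them."""
    terms = tokenize(query)
    scored = []
    for name, text in notes.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((score, name))
    # Highest score first; ties broken alphabetically by note name.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(name, score) for score, name in scored[:top_k]]


if __name__ == "__main__":
    demo = {
        "arbitrage": "Cross-chain arbitrage and arbitrage bots.",
        "zk": "Zero-knowledge proofs for games.",
    }
    print(search(demo, "arbitrage bots"))
```

Wrapping `search` in a small command-line script would let an LLM agent call it as a tool, which matches the CLI hand-off described above.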

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

nananaa.eth retweeted

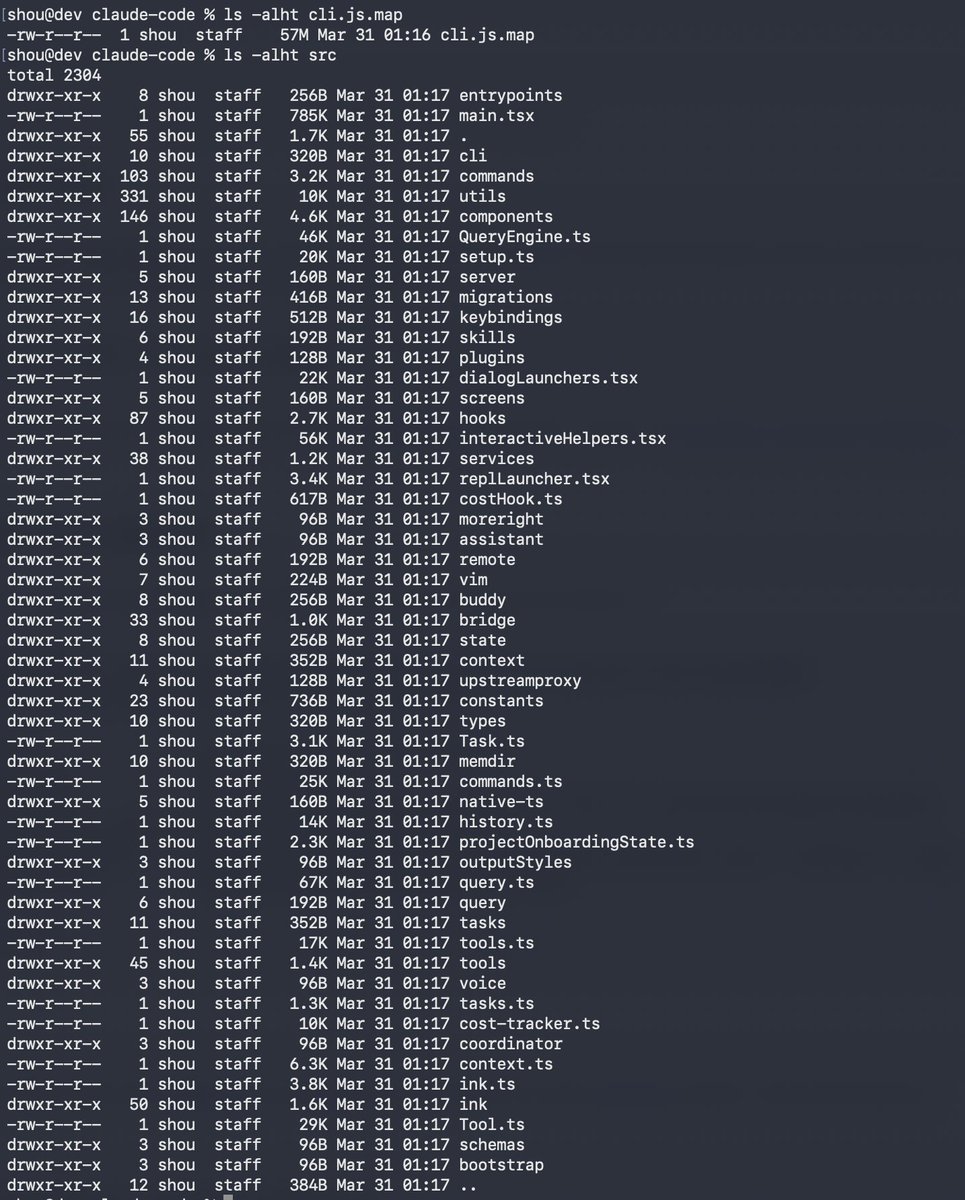

Claude code source code has been leaked via a map file in their npm registry!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip


If the weather were like this 9 months per year, SF would be the #1 American city and it’s not even close

nananaa.eth retweeted

[TLDR: dr-manhattan solves liquidity problem in prediction markets]

1/ Prediction markets are exploding. Polymarket, Kalshi, Limitless, Opinion... new venues and markets launching every day.

But here's the problem: orderbook is too thin. Traders struggle to enter positions at fair prices—and exiting is even harder.

Too many markets. Not enough depth.


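Wonjae Choi (@0xwonj), an 11th-cohort member of Decipher, won a $5,000 prize at the @WalrusProtocol Haulout Hackathon with the zkDungeon project! 🏆🎉

zkDungeon is a 'Truth Engine' built on ZK proofs, an innovative protocol that resolves the trust-versus-scalability dilemma of on-chain games. At its core is zkFSM, which combines the speed of local execution with the security of a blockchain rollup, and through hybrid verification, a Merkle Oracle, and more, it presents verifiable gaming that is feasible even on consumer devices.

Congratulations once again to Wonjae, and we ask for your continued interest and support! 🚀🚀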

wonjae.eth @0xwonj

Honored to take home a $5,000 prize at the @SuiNetwork Walrus Hackathon for zkDungeon! 🏆 zkDungeon is a 'Truth Engine' designed to solve the on-chain gaming dilemma (trustlessness vs. scalability). How does it solve the on-chain gaming dilemma? Check out the full technical deep dive in the thread below! 👇 🧵


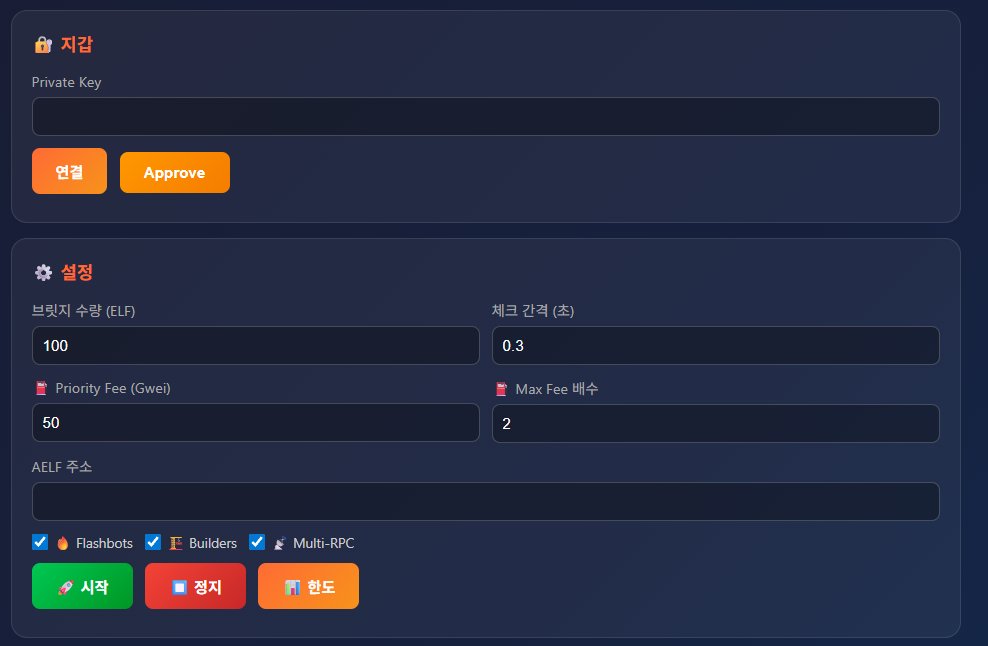

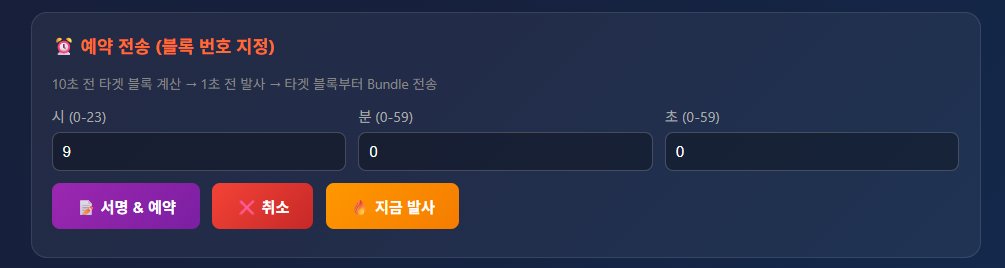

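Peeling the ELF (2)

Normally, when the bridge was filled with about 3 million tokens, you could move about 500k a day across for roughly 6 days, and since the gap between chains wasn't that big, there was no problem sleeping in, getting up around midday, and bridging then.

But as I wrote in Part 1, some lunatic dumped close to 10 million tokens. If the team refilled the bridge with the usual 3 to 5 million, only part of the DEX supply could cross, and everyone knew that whatever couldn't cross would obviously get dumped, so from here on the real bridge war began.

The bridge gets refilled every day at 9:00 sharp.

From that point on I couldn't get any damn sleep.

Up to then, our DAO had been operating with all of its wallets public, hoping crypto newbies would learn something instead of putting money into weird scam ads. But the wallet-tailing leeches, plus the bastards who said "I won't do it, just tell me" and then hit swap at 8:50 a.m. every day, were infuriating. And because MEXC had deposits on the ETH chain disabled but withdrawals enabled, a bot operator holding millions of tokens came in to grab a share of the pie.

This bastard could have just taken a reasonable share, but every day he put 200k to 250k into the first block at a very high gas price, and then after 2 minutes started rapid-firing 30k to 50k at a time, endlessly.

-> See Part 1 for what happens when 200k goes in first in the first block.

From then on I was in a constant cold sweat: with a huge amount of my position still left, between the barnacle leeches and the bot operator's bombardment, I was right on the edge of getting wrecked.

(To be fair, just selling on the DEX without bridging would still have been a very large profit.)

From then on, all three of us, including 노펄습 (@Kal_hintz) and 파르나 (@FlyingSiri), got to work building a bot.

Bot v1) We rapid-fired, but the bot operator snatched it all.

Even so, I thought we had a real chance of winning: seeing that the bot operator's orders went through the public mempool, I figured he wasn't unbeatable.

Bot v2) Got crushed again.

We confirmed that 9:00:00 through 9:00:11 fell in the same block, raised the gas price, and even added a scheduled-send feature, but that day the bot operator seemed to have noticed the competition and bid an absurd gas price.

From the next day on, it really was a situation where failing to bridge meant getting wrecked.

Bot v3) First block secured.

르나 (@FlyingSiri) went as far as adding MEV features:

dRPC + multiple builders + Flashbots,

submitting orders privately so that ours couldn't be read +

getting the gas refunded if our order was dropped +

reading orders from the public mempool and firing at 1.5x their gas price.

With that, we landed the first order and, starting 2 minutes later, rapid-fired the rest across.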
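
One rule from the third bot, reading competing orders from the public mempool and bidding 1.5x their gas price, can be sketched as a pure function. This is an illustrative sketch only: the function name, the gwei inputs, and the optional cap are hypothetical, and the private submission, multi-builder routing, and refund behaviour described in the post are not shown.

```python
from typing import Iterable, Optional


def outbid_gas_price(
    pending_gwei: Iterable[float],
    multiplier: float = 1.5,
    cap_gwei: Optional[float] = None,
) -> Optional[float]:
    """Return a gas price that beats the highest competing pending bid.

    pending_gwei: gas prices of competing transactions seen in the public mempool.
    multiplier:   how far above the best competing bid to go (1.5x in the post).
    cap_gwei:     assumed safety ceiling so a runaway bid cannot erase the profit.
    """
    prices = list(pending_gwei)
    if not prices:
        return None  # nothing to outbid; the caller falls back to its default
    bid = max(prices) * multiplier
    if cap_gwei is not None:
        bid = min(bid, cap_gwei)
    return bid


if __name__ == "__main__":
    print(outbid_gas_price([30.0, 80.0, 55.0]))
    print(outbid_gas_price([30.0, 80.0, 55.0], cap_gwei=100.0))
```

The cap is a design choice added here for safety: without one, two bots applying the same rule to each other's bids would escalate without limit.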

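After we had cleared our whole position, the team topped up the bridge again, so in the end everything could have crossed even if we had done nothing.

And honestly, even without writing a bot, you could have sent it by hand on the web UI by repeatedly submitting small amounts (10k to 20k). Still, writing the code and competing turned out to be a chance to learn a lot.

For those who were copying our wallets and thought, "I just mashed the button on the web and it went through?", reading what actually happened and learning from it will probably help a lot.

펄습 in particular has been on insane form lately.

파르나, that guy was always good, which is why he and I started the DAO together, and we've known each other a long time, so I'll skip him.

Today there's a good tool worth introducing (not an ad), so I'll follow up with one more post.

Also, lately I've been backstabbed by leeches so many times that I didn't feel like running the channel and was fed up, so I just stopped posting altogether.

With the market this bad, doing nothing at all seems like the better move.

As always, I'll post when something worth taking or something you really have to do comes up.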

nananaa.eth retweeted

dr-manhattan - CCXT for prediction markets - is now open source on GitHub.

github.com/guzus/dr-manha…
