LZ_security

104 posts

@LZ_security

Lead Senior Watson #6 @sherlockdefi | Portfolio: https://t.co/FSiTH1pVqh

I Saved Injective's $500M. They Pay Me $50K.

I like hunting bugs on @immunefi. I'm decent at it.

- #1: Attackathon | Stacks
- #2: Attackathon | Stacks II
- #1: Attackathon | XRPL Lending Protocol
- 1 Critical and 1 High from bug bounties (not counting this one)

Life was good.

Then I found a Critical vulnerability in @injective. This vulnerability allowed any user to directly drain any account on the chain. No special permissions needed. Over $500M in on-chain assets were at risk.

I reported it through Immunefi. The next day, a mainnet upgrade to fix the bug went to governance vote. The Injective team clearly understood the severity.

Then, silence. For 3 months. No follow-up. No technical discussion. Nothing.

A few days ago, they notified me of their decision: $50K. The maximum payout for a Critical vulnerability in their bug bounty program is $500K.

I disputed it. Silence again. No explanation for the reduced payout. No explanation for the 3-month ghost. No conversation at all. To be clear: the $50K has not been paid either.

I've seen others share bad experiences with bug bounty payouts recently. I never thought it would happen to me. I can't force them to do the right thing. But I won't let this be forgotten. I will dedicate 10% of all my future bug bounty earnings to making sure this story stays visible until Injective pays what I deserve.

Full Technical Report: github.com/injective-wall…

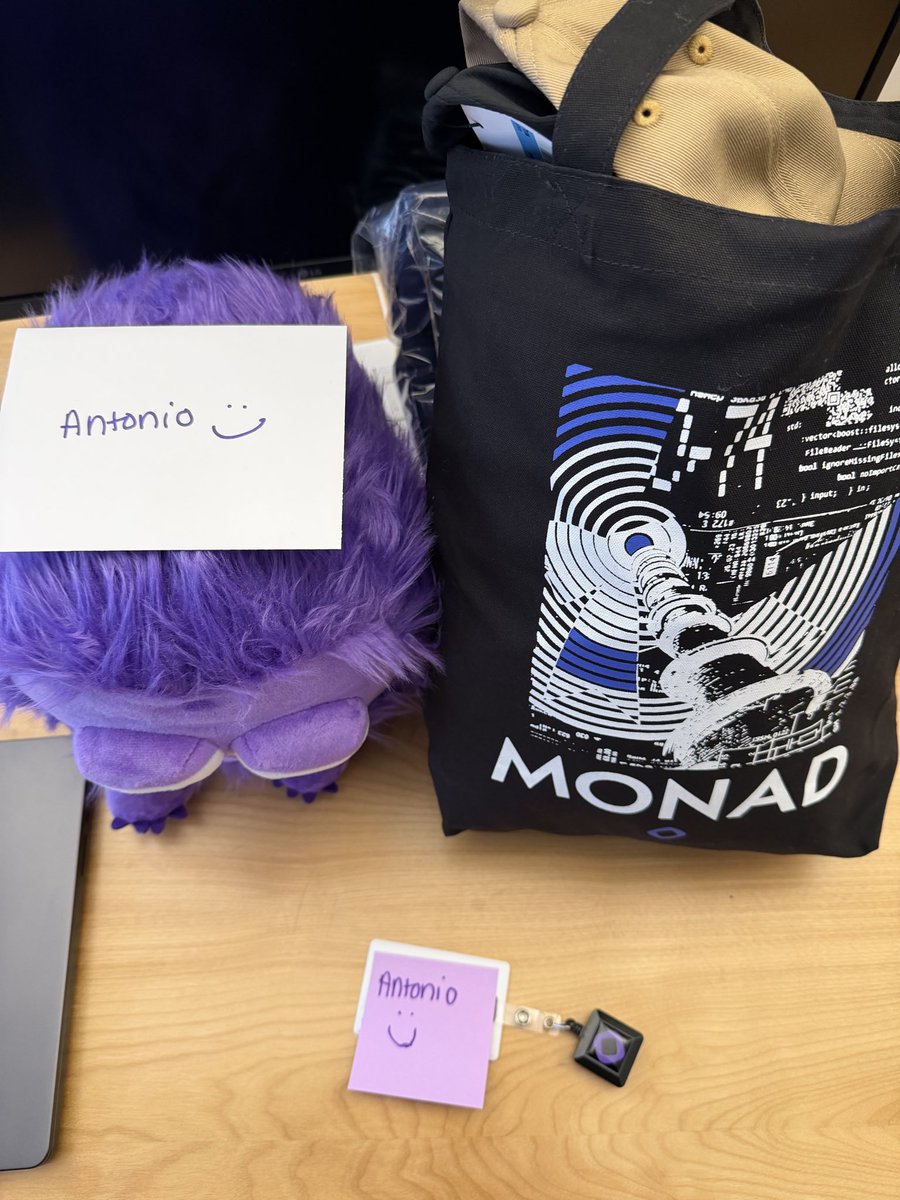

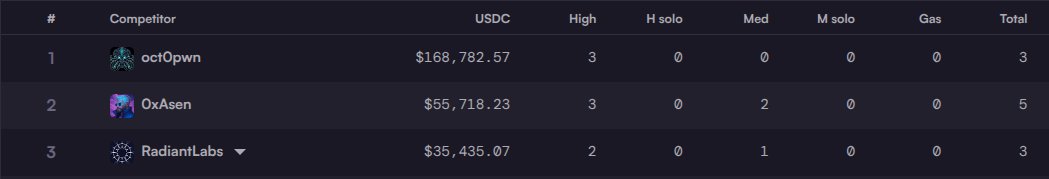

I'll tell you something that people don't want to talk about. Crowdsourced security is at a breaking point. There are no winners here; the security researchers hate it, the customers hate it, and the platforms caught in between also hate it. I spoke to someone last week who described it as a necessary evil. Why is that?

- The security researchers hate it because no matter how you do it, a portion of security researchers will always disagree with the outcomes. Because the severity of a bug is ultimately subjective, you can almost always make an argument to upgrade or downgrade your finding. This is the ugly truth: the people who make a lot of money are particularly good at arguing about the findings. And they know it too; some of them are the nicest people you've met in your life, but when it comes to arguing why something is a critical bug, they morph into ruthless lawyers.
- The customers hate it. Imagine you're Monad here; you just spent half a million dollars (!!) to secure your software before even launching to production, and you see posts like this. It's natural for that to leave a bad taste. It's also not just anyone who wrote this critique, but someone who placed #4 and earned a $32K reward in a 4-week security competition.
- The platforms that host it (us included) end up in the crossfire. No matter what you do, you end up in a lose-lose scenario. It takes up a lot of mental space, and in this case, Code4rena hosted this for free! They probably even lost money judging the competition.

There's also a fourth actor that's adding fuel to the fire, which is AI:

- LLM-powered reports started as complete slop that you could ignore, but now it's not that obvious anymore. They're starting to be genuinely useful at finding bugs.
- The only sustainable long-term solution to crowdsourced competitions and bounties is a pay-per-bug or staking model where invalid submissions get a cash penalty. This is controversial, but it's the only way to scale.
- AI is also a glimmer of the future: a future where...

P.S. I don't mean to call anyone out in particular; in fact, Dontonka, Monad, and Code4rena are all doing their best here. It's just that the golden age of crowdsourced security is probably over. It was necessary, just like how it was necessary for humans to write open-source code so that the machine god could be built.

Here's what surprised me though: AI doesn't find fewer bugs. It finds different ones. Stuff I'd catch in 10 minutes, it misses. Stuff I'd need 3 hours to trace through, it flags in seconds.

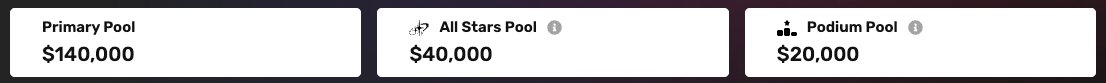

🚨 Half a million dollars paid. 🚨 The largest-ever unconditional prize pool is officially settled — all $500,000 distributed to participants. 4 high & 7 medium severity findings rewarded. Shoutout to @Monad & @category_xyz for their unwavering commitment to security!
