lajarre

473 posts

@lajarre

Gestell contemplator; program synthesizer; futarch @buttermarkets_

The Automated Research Hackathon has begun! You can see the challenges and leaderboards at paradigm.xyz/automated-rese… Submissions end in 8 hours. The winner on each leaderboard gets $1,000

This is one of the coolest such examples! See comments from Lichtman below, who proved the related primitive set conjecture arxiv.org/abs/2202.02384

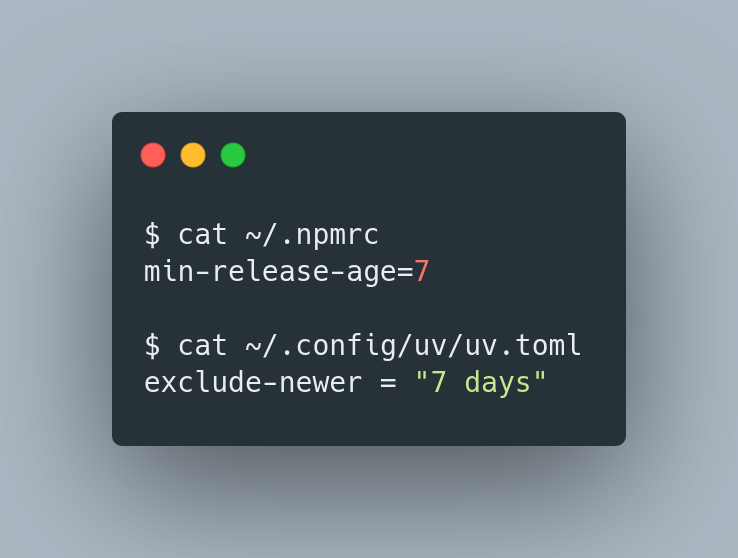

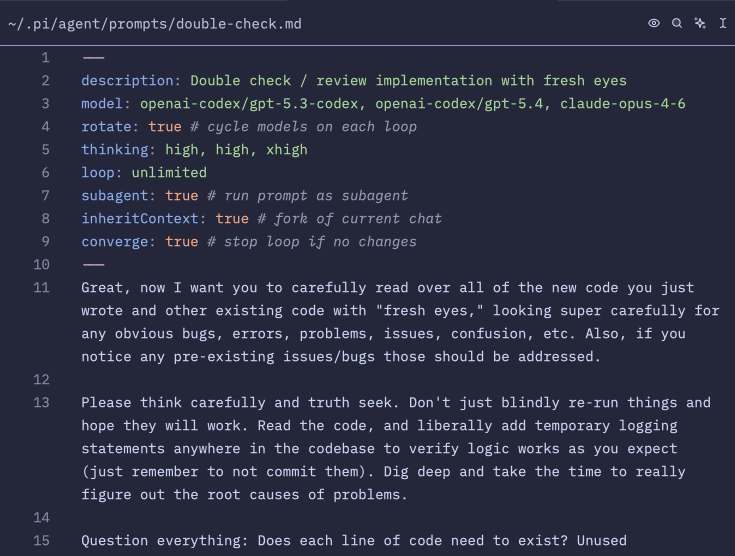

I've got some wild stuff brewing for ntm. What if you could spin up a huge swarm of agents to review your project (any kind of project, not just software), and the difference between the various agents was that they each employed a different mode of reasoning?

What does that even mean? Isn't reasoning, well, reasoning? Like with logic and induction and stuff? Well, it turns out that you can really break this stuff down into exquisite detail. For instance, probabilistic reasoning extends classical logic by attaching probabilities (i.e., degrees of belief) to propositions instead of treating them as strictly True or False. Fuzzy logic is different: it treats truth itself as a continuum (e.g., ‘somewhat true’), even when there’s no uncertainty.

But that's just scratching the surface. GPT Pro and Opus were jointly able to come up with EIGHTY distinct modes of reasoning, which you can read about here: github.com/Dicklesworthst…

The screenshot below shows me getting CC to transform this document into a new feature using this prompt:

---

❯ I have a great idea for this tool for a special mode where we launch a ton of agents on the same project and have them either work on a problem, like "What's wrong with this project and how could it be made a lot better" or brainstorm with something like "What are the best new ideas to add to this project when you take into account the pros and cons of each one?" and so on. The twist is that each agent would be separately prompted to engage in a CERTAIN, NAMED FORM OF REASONING that would be explained to them in the prompt preamble (and thus automatically added to the user's primary prompt). Each named form of reasoning and how it works is laid out in modes_of_reasoning.md, which you should read and ruminate on incredibly deeply.

Then come up with a spectacularly brilliant, creative, clever, comprehensive, accretive plan for architecting, designing, and implementing this system in a harmonious, cohesive, coherent way with the existing ntm system, with world-class ui/ux and polish. Make sure your plan is super detailed, granular, and comprehensive. Then please take ALL of that and elaborate on it and use it to create a comprehensive and granular set of beads for all this with tasks, subtasks, and dependency structure overlaid, with detailed comments so that the whole thing is totally self-contained and self-documenting (including relevant background, reasoning/justification, considerations, etc.-- anything we'd want our "future self" to know about the goals and intentions and thought process and how it serves the overarching goals of the project). The beads should be so detailed that we never need to consult back to the original markdown plan document. Remember to ONLY use the `br` tool to create and modify the beads and add the dependencies.

---

You could tell Claude was titillated! His first sentence in his response was: "This is a phenomenal document! 80 modes of reasoning organized into 12 categories, each with precise definitions, outputs, differentiators, use cases, and failure modes. Let me deeply synthesize this and design a comprehensive "Reasoning Ensemble" feature for NTM."

Big thanks to @darin_gordon for getting me thinking along these lines.

PS: I got so sick and tired of seeing the clankers mess up the right-hand borders of ascii art diagrams that I made a rust cli tool to fix it, lol: github.com/Dicklesworthst…

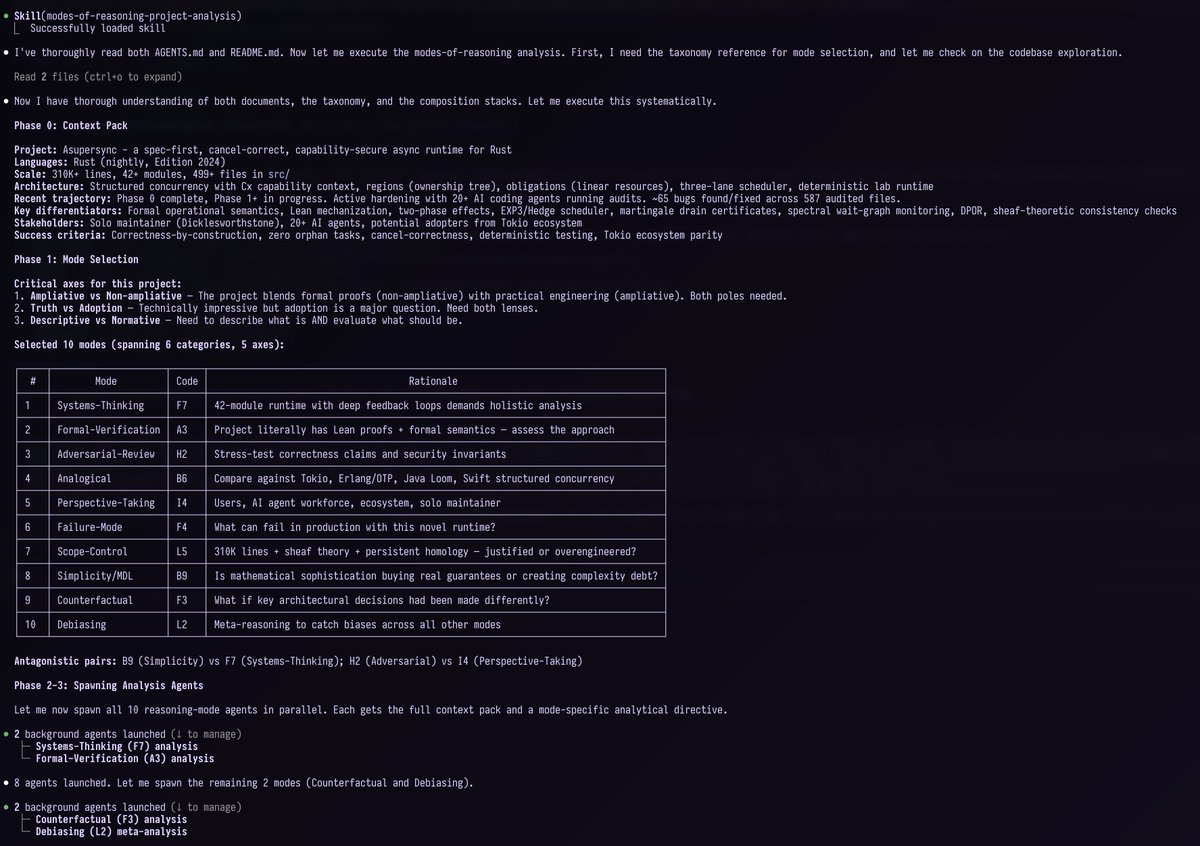
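To make the probabilistic-versus-fuzzy distinction from the post above concrete, here is a small Python sketch. It is only an illustration, not anything taken from ntm or modes_of_reasoning.md: the `tall_degree` membership function and its 160/190 cm thresholds are made up, and the conjunction uses Zadeh's `min` operator, which is just one of several fuzzy t-norms.

```python
def tall_degree(height_cm: float) -> float:
    """Hypothetical membership function for the vague predicate 'tall':
    0.0 at or below 160 cm, 1.0 at or above 190 cm, linear in between."""
    if height_cm <= 160:
        return 0.0
    if height_cm >= 190:
        return 1.0
    return (height_cm - 160) / 30


def fuzzy_and(a: float, b: float) -> float:
    """Zadeh's fuzzy conjunction: the minimum of the two truth degrees."""
    return min(a, b)


def fuzzy_not(a: float) -> float:
    """Standard fuzzy negation."""
    return 1.0 - a


def prob_and_independent(p_a: float, p_b: float) -> float:
    """P(A and B), assuming A and B are independent events."""
    return p_a * p_b


# Fuzzy: the height is known exactly (no uncertainty at all), yet "tall"
# is only half true, and so is "tall and not tall".
t = tall_degree(175)
print(t, fuzzy_and(t, fuzzy_not(t)))

# Probabilistic: "this coin lands heads" is crisply true or false; 0.5 is a
# degree of belief. Two independent fair coins both landing heads:
print(prob_and_independent(0.5, 0.5))
# For a single crisp proposition A, P(A and not A) is always 0.
```

The contrast to notice: in the fuzzy case "tall and not tall" can be half true, while in probability theory a proposition and its negation can never both hold, no matter how uncertain you are.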

A while back, I posted this concept for my ntm agent orchestration tool that would let you spin up a swarm of agents using various harnesses where each agent could follow a different "mode of reasoning" (see the quoted post for what that means). I didn't really do much with it at the time, partly because I got distracted by other projects, but also because I wasn't really sure how it could be effectively "steered" and leveraged.

But I realized recently that a skill was the perfect medium for finally implementing this properly in a unified, cohesive way that's highly applicable to software development projects, but also to any other sort of project, business plan, conceptual framework, etc. Now you can simply ask Claude Code to invoke the /modes-of-reasoning-project-analysis skill and it will embark on a truly ambitious and deep investigation for you.

Rather than blindly try to apply all 80 reasoning modes, the "lead agent" first studies the project and determines which of the 80 modes are most applicable and complementary, then creates and manages a swarm for you using ntm, with an agent for each selected reasoning mode. Then it attempts to synthesize the results of their interactions and compiles them into a markdown report for you.

You can sort of conceptualize this approach as the "fresh eyes review" approach on steroids, in that it's attempting to force something akin to a gestalt shift on each agent so that it will look at the project in a different way that might reveal new angles it otherwise wouldn't perceive.

It's a bit hard to explain, so I asked Claude to give its best summation of what the skill does and how it works and why it's useful (also see the two screenshots showing how it starts out on two different software projects; you can access it on my skills site, jeffreys-skills.md):

---

This is a multi-agent epistemological analysis tool. Here's what it does and why it matters:

What It Is

It spawns a swarm of AI agents (default 10, configurable), each assigned a distinct reasoning mode drawn from a taxonomy of ~80 modes. Each agent analyzes the same project but through a completely different analytical lens; then their outputs are synthesized into one comprehensive report.

How It Works (7 Phases)

1. Context Pack: Profile the target project (structure, tech stack, maturity)
2. Mode Selection: Pick 10 reasoning modes from 7 taxonomy axes (e.g., abductive reasoning, adversarial analysis, Bayesian inference, normative ethics, game-theoretic reasoning, etc.)
3. Spawn Swarm: Launch agents via NTM (my tmux-based multi-agent orchestrator)
4. Dispatch Prompts: Each agent gets a mode-specific prompt constraining it to reason from that single perspective
5. Monitor: Watch for convergence or early stopping conditions
6. Score & Collect: Each agent produces structured findings (thesis, risks, recommendations, assumptions, uncertainties)
7. Synthesize: A triangulation protocol classifies findings:
   - Kernel (3+ modes agree): high confidence
   - Supported (2 modes agree): moderate confidence
   - Hypothesis (1 mode only): worth investigating
   - Disputed (modes disagree): needs resolution

Why It's Useful

The core insight: a single analytical perspective has blind spots. Multiple independent perspectives triangulate toward truth.

Concrete use cases:

- Pre-release audit: Before shipping, get 10 fundamentally different takes on what could go wrong. An adversarial reasoner finds attack surfaces, a probabilistic reasoner finds unlikely-but-catastrophic failures, a normative reasoner flags ethical concerns.
- Architecture decisions: When choosing between approaches, different reasoning modes weigh tradeoffs differently. Game-theoretic reasoning considers incentive structures, abductive reasoning asks "what best explains the constraints," analogical reasoning pulls patterns from similar systems.
- Breaking groupthink: If your team has converged on an approach, this surfaces objections you wouldn't naturally generate. The "Kill Thesis" operator card explicitly tries to destroy the consensus view.
- Due diligence on acquisitions or dependencies: Evaluate an unfamiliar codebase from economic, security, maintainability, and social/community perspectives simultaneously.
- Finding unknown unknowns: The "Blind Spot Scan" operator card specifically asks: which axes of the taxonomy are underrepresented in current findings? What would a mode from that axis notice?

The key differentiator from just "ask an AI to review my project" is structured epistemic diversity: it's not 10 agents doing the same thing, it's 10 agents that are cognitively constrained to reason differently, with a formal synthesis protocol that tracks where they agree, disagree, and what falls through the cracks.
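The four-tier triangulation step described in the summary can be sketched roughly like this. It is a minimal illustration under assumptions, not the skill's actual code: the `Finding` shape, the `triangulate` function, and the sample findings are all hypothetical, and "modes disagree" is interpreted here as "at least one mode explicitly contradicts the finding".

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """One finding from the swarm (hypothetical shape, not the skill's format)."""
    claim: str
    supporting_modes: set[str] = field(default_factory=set)
    opposing_modes: set[str] = field(default_factory=set)


def triangulate(finding: Finding) -> str:
    """Label a finding with the Kernel/Supported/Hypothesis/Disputed tiers.

    Assumed rule: any mode that explicitly contradicts the finding makes it
    Disputed; otherwise the tier depends on how many distinct modes agree.
    """
    if finding.opposing_modes:
        return "Disputed"  # modes disagree: needs resolution
    agreeing = len(finding.supporting_modes)
    if agreeing >= 3:
        return "Kernel"  # high confidence
    if agreeing == 2:
        return "Supported"  # moderate confidence
    if agreeing == 1:
        return "Hypothesis"  # worth investigating
    raise ValueError("a finding needs at least one supporting mode")


# Hypothetical findings, purely for illustration.
findings = [
    Finding("Config parsing silently ignores unknown keys",
            {"adversarial", "abductive", "bayesian"}),
    Finding("Plugin API invites lock-in", {"game-theoretic", "analogical"}),
    Finding("Retry logic masks data loss", {"probabilistic"}),
    Finding("Splitting the monorepo is worth it", {"economic"}, {"normative"}),
]
for f in findings:
    print(f"{triangulate(f):<10} {f.claim}")
```

Treating any single contradiction as Disputed is a deliberately conservative choice; a variant could instead compare the number of supporting and opposing modes before deciding.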

folks who are calling @openclaw pure hype are telling on themselves. openclaw is like the early internet: it's raw, unrefined, and takes a little doing to get things to work, but when you figure it out, it's transformative. here are some real use cases that are having a material impact on our $2.5M ARR business:

1. ad creative pipeline. our head of growth @ArjunShukl95550 built an end-to-end creative pipeline that goes from ideation to publishing ads on meta, greatly increasing our creative iteration speed. it's producing winning creatives. it lives in slack, and anyone on the team can share their ideas and have them enter the pipeline.

2. data analytics agent. another bot lives in our slack that connects to bigquery and lets our team ask any question of the data; it produces charts and answers questions in real time. no one needs to write SQL anymore.

3. recruiting. i told my agent about a role we're hiring for, and it scoured linkedin and the web, found 30 candidates along with their portfolios and email addresses, and stack-ranked them based on fit with our criteria.

this is just in the past week. i have twenty more success stories i can share with you another time.

you have to understand, this is the shittiest it will ever be. everyone is going to have one or more personal self-improving agents that they use every day, and openclaw is what revealed this future to us. if you can't see this, i encourage you to look harder.

there will be many competitors (there already are), and the large labs will start to converge on this too (they already are). openclaw may not win, but it opened pandora's box and uncorked the agentic future.