LangChain OSS

242 posts

@LangChain_OSS

Ship great agents fast with our open source frameworks – LangChain, LangGraph, and Deep Agents. Maintained by @LangChain.

here's what model profile details look like in practice, using @NVIDIAAIDev's Nemotron models as an example:

Localmaxxing: pushing more inference to local models. Over five weeks, I tested how much of my daily work can run on a local 35B model instead of cloud frontier models. The answer: half. Many reasons to use local models: privacy, cost, asset depreciation. But the only one that really matters is latency. I ran a head-to-head benchmark. Qwen 3.6 35B-A3B-4bit on my MacBook Pro M5 vs Claude Opus 4.5 via API. Result: 2.1x faster locally. Mean 2.8s vs 5.8s. The local model isn't smarter. Opus scores ~20% higher on reasoning benchmarks. Local models lag frontier by 3-4 months, and for complex tasks, that gap matters. But for routine agent tasks, it rarely does. If half the work runs 2x faster on my laptop, I'll take that trade every time. My little computer is about to earn its keep. tomtunguz.com/localmaxxing/

This is harder to build than it looks. Preserving full conversational context while swapping underlying model providers mid-flight is a surprisingly deep systems problem. Most tools drop state or force you to start over. deepagents-cli does this natively: swap models mid-conversation with zero context loss. Try it: docs.langchain.com/oss/python/dee…

concluding my "taking deep agents to production" series with arguably the most important component: observability. when you deploy a deep agent with LangSmith, you automatically get traces for every run: a full record of every LLM call, tool call, and middleware hook. for long-running agents, you can use agents like Polly, the LangSmith assistant, to reason over long traces and identify where a trajectory went wrong. traces are observational: they tell you what happened. time travel is experimental. built into the deep agent runtime, it's how you explore what an agent trajectory would look like if the agent had different context at some point. pick any checkpoint in a run's history, modify the state, and resume. the fork runs forward as its own branch, the original stays intact, and the full agent loop re-triggers. the combination of traces and time travel is powerful for the agent improvement cycle!

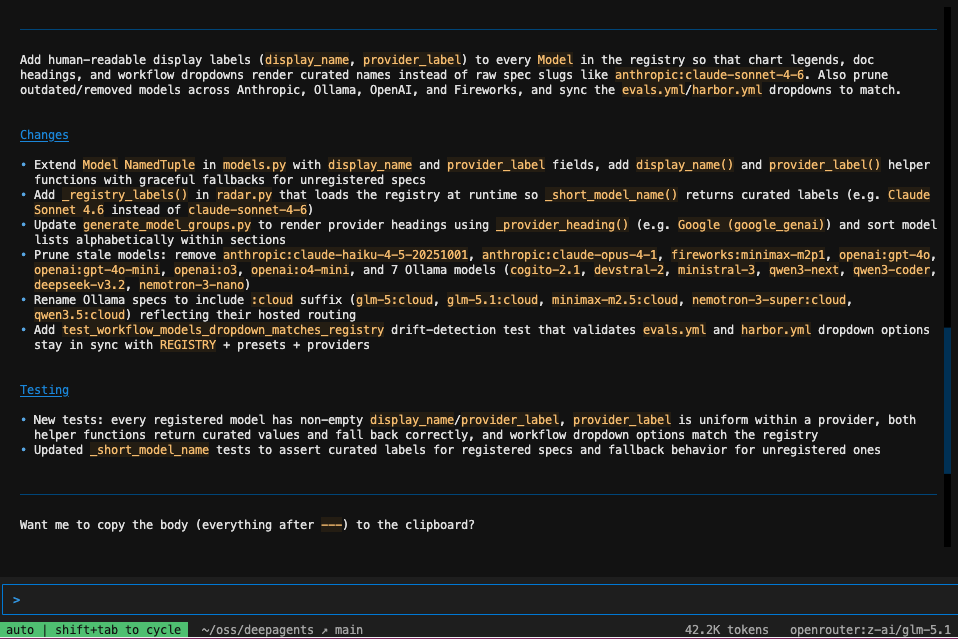
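to make the fork semantics above concrete, here is a minimal pure-Python sketch. it does not use the deep agent runtime or its API; `Run`, `step`, `run_agent`, and `fork` are hypothetical names invented for illustration. it only shows the idea: copy the history up to a checkpoint, patch that checkpoint's state, and resume on a separate branch while the original run stays untouched.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Run:
    """One branch: an ordered list of state snapshots (checkpoints)."""
    checkpoints: list = field(default_factory=list)


def step(state: dict) -> dict:
    """Hypothetical agent step: records which context it acted on."""
    new_state = copy.deepcopy(state)
    new_state["log"].append(f"acted with context={new_state['context']!r}")
    return new_state


def run_agent(initial: dict, steps: int) -> Run:
    """Run the toy agent forward, saving a checkpoint after every step."""
    run = Run([copy.deepcopy(initial)])
    for _ in range(steps):
        run.checkpoints.append(step(run.checkpoints[-1]))
    return run


def fork(run: Run, index: int, updates: dict, steps: int) -> Run:
    """Copy history up to `index`, patch that checkpoint, then resume."""
    branch = Run(copy.deepcopy(run.checkpoints[: index + 1]))
    branch.checkpoints[-1].update(updates)  # the modified state
    for _ in range(steps):
        branch.checkpoints.append(step(branch.checkpoints[-1]))
    return branch


original = run_agent({"context": "v1", "log": []}, steps=3)
branch = fork(original, index=1, updates={"context": "v2"}, steps=2)

for entry in branch.checkpoints[-1]["log"]:
    print(entry)
```

the deep copies are what keep the original branch intact: the fork never shares mutable state with the run it came from.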

your daily reminder that open models are plenty capable for a lot of coding work. easiest place to feel that out is deepagents! swap the model and go. i've been enjoying GLM-5.1, Kimi K2.6, MiniMax M2.7, DeepSeek V4 Pro. here are some examples using our CLI agent in headless mode

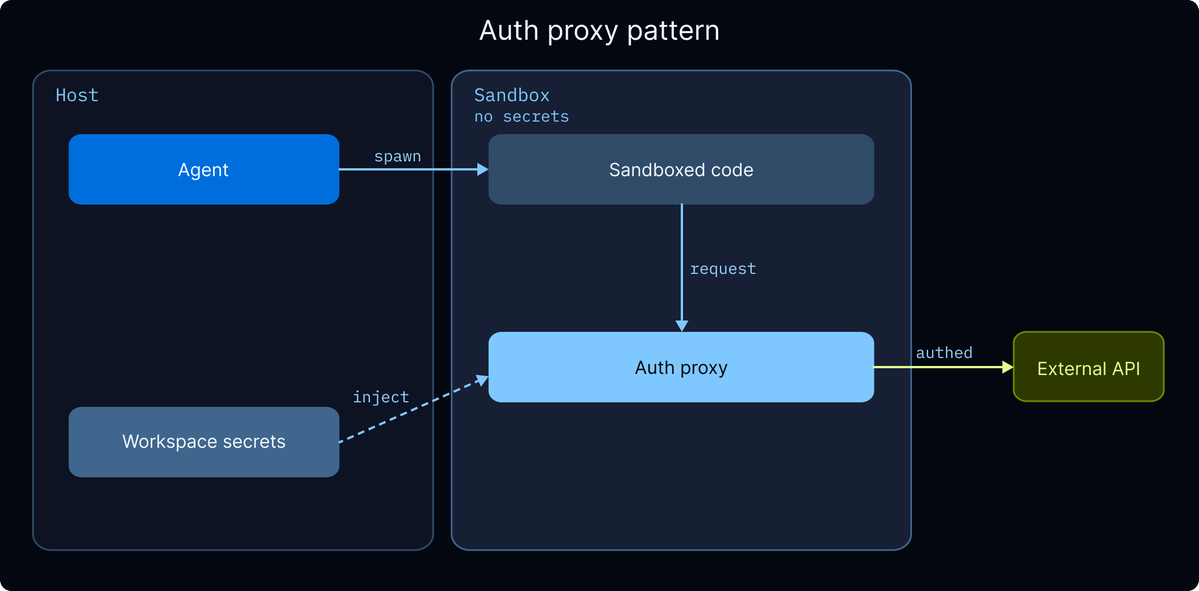

deepagents-cli is quietly becoming the best place to start coding with open weight models. we've been investing heavily in making it a harness that's truly model-agnostic, without compromising performance! different models perform best with different harnesses -- prompts, middleware, settings. our recent profiles API (below) lets you bundle all of that per model, so Kimi, Qwen, GLM, etc. can drive the agent loop just as well as the closed frontier. more info on profiles x.com/Vtrivedy10/sta…

other recent wins worth highlighting:
- /agents - swap agent profiles mid-session (coding agent/content writer/custom)
- /model - fuzzy switcher w/ live status; OpenRouter, LiteLLM, Baseten, hosted Ollama all built-in
- headless mode w/ --json + --max-turns for scripting
- --acp to run as an ACP server
- /skill:name skills
- MCP w/ OAuth

full docs and quickstart ⬇️

you've heard that models are highly trained in their harnesses, but... it appears that pi is about 7-10% better than codex with gpt-5.4 on a ProgramBench task. Same exact prompt, same environment. It's a good harness.

we're continuing to see clear examples where a model's harness is a major determinant of overall performance. with the same model, running on the same task, it's easy to observe very different scores depending on (system) prompts, tools (& their descriptions), and middleware (steering hooks). this is exactly why we built a harness profiles abstraction in Deep Agents: per-provider or per-model overrides for base system prompts, tool names + implementations, etc., so swapping models doesn't mean losing the work that made the last one good! 10–20pt jumps on tau2-bench in our own testing. currently cooking up built-in profiles for popular open weight models 🧑‍🍳 langchain.com/blog/tuning-de…
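a rough sketch of what per-provider and per-model overrides can look like, in plain Python. this is not the Deep Agents profiles API: `HarnessProfile`, `PROFILES`, `resolve`, the `provider:model` id format, and every prompt and tool name below are assumptions made up for illustration. it only shows the layering idea, where a provider-level profile applies first and a model-level profile overrides it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class HarnessProfile:
    """Hypothetical bundle of harness overrides for a provider or model."""
    system_prompt: str | None = None  # None means "keep the inherited prompt"
    tool_names: dict = field(default_factory=dict)  # default name -> override


BASE_PROMPT = "You are a careful coding agent."
BASE_TOOLS = ["read_file", "edit_file"]

# Keys are either "provider" or "provider:model" (an assumed id format).
PROFILES = {
    "provider-a": HarnessProfile(system_prompt="Be terse. Prefer small diffs."),
    "provider-a:model-x": HarnessProfile(tool_names={"edit_file": "apply_patch"}),
}


def resolve(model_id: str) -> tuple:
    """Return (system_prompt, tool_names) after applying matching profiles."""
    provider = model_id.split(":", 1)[0]
    prompt, renames = BASE_PROMPT, {}
    for key in (provider, model_id):  # provider first, model-specific wins
        profile = PROFILES.get(key)
        if profile is None:
            continue
        if profile.system_prompt is not None:
            prompt = profile.system_prompt
        renames.update(profile.tool_names)
    return prompt, [renames.get(name, name) for name in BASE_TOOLS]


print(resolve("provider-a:model-x"))
print(resolve("provider-b:model-z"))
```

with this shape, swapping to a model that has no profile falls back to the base harness, and a model that does have one keeps its tuned prompt and tool names.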