Sabitlenmiş Tweet

Second Glue Coast EP, Douglas, on all the streamers today. But catch them live! Buy a cassette! Buy a shirt! Mosh your big body! Have big feelings!

open.spotify.com/album/1tW8FVwy…...

English

Jake Linford

8K posts

@LinfordInfo

I profess the law, especially w/r/t trademarks. Husband to one, father of four. Gamer geek. Loula Fuller & Dan Myers Professor, FSU College of Law.

Oy. According to a new paper in The Lancet, the rate of made-up citations in biomedical papers has increased by more than 12x since 2023. thelancet.com/journals/lance…

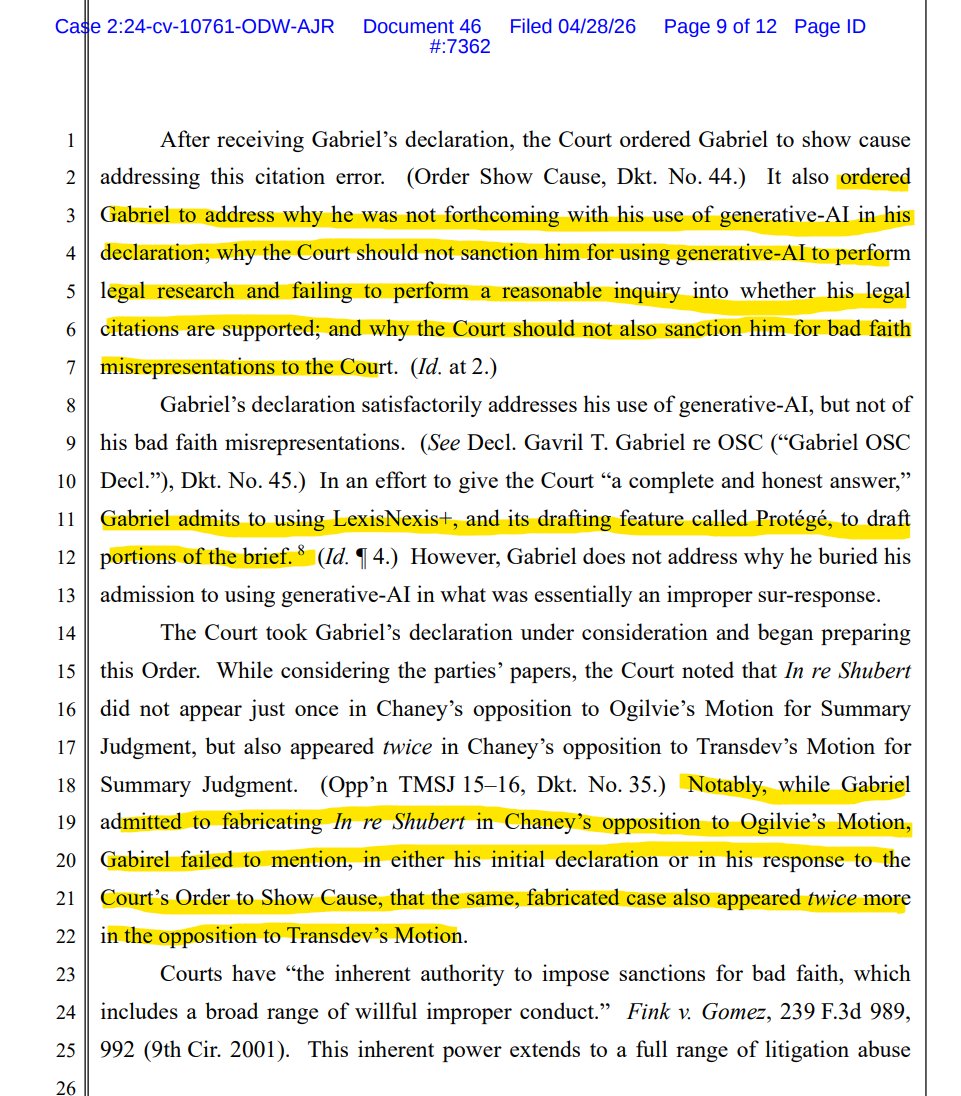

The Justice Department fired assistant US attorney Rudy Renfer the day after he said he was resigning over his error-riddled AI-generated brief at a hearing over the matter. news.bloomberglaw.com/ip-law/doj-fir…