Luc Georges

@LucSGeorges

Software & ML Engineer @huggingface 🦀

Programming was deeply satisfying work to me: working for hours or days before getting the payoff of the code running well on your machine. I now feel so much friction opening the editor to do this kind of task by hand, but I'm also increasingly depressed by the nature of work in an AI-assisted dev workflow. Back-and-forth prompting seems to eat at my soul. I need to find a balance that brings back some of the toil.

I've started a company: ggml.ai. From a fun side project just a few months ago, ggml has now become a useful library and framework for machine learning with a great open-source community.

RE: Stacked Diffs on @GitHub. After discussion with @ttaylorr_b, we can already implement stacked PRs/PR groups (in fact we kind of do with Copilot), but restacking (automatically fanning out changes from the bottom of the stack upwards) would be wildly inefficient. To do it right, we need to migrate @GitHub to use git reftables instead of packed-refs so that multi-ref updates / restacking will be O(n) instead of ngmi. This will take some time but has been greenlit.

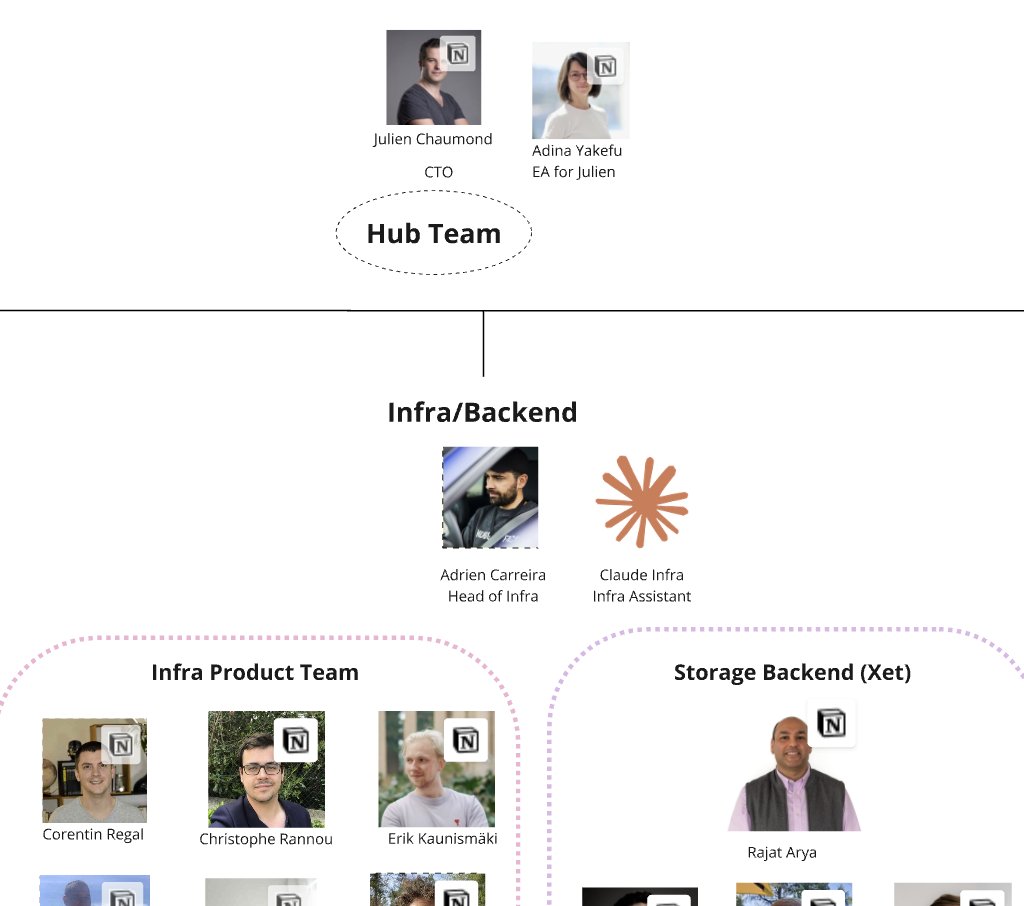

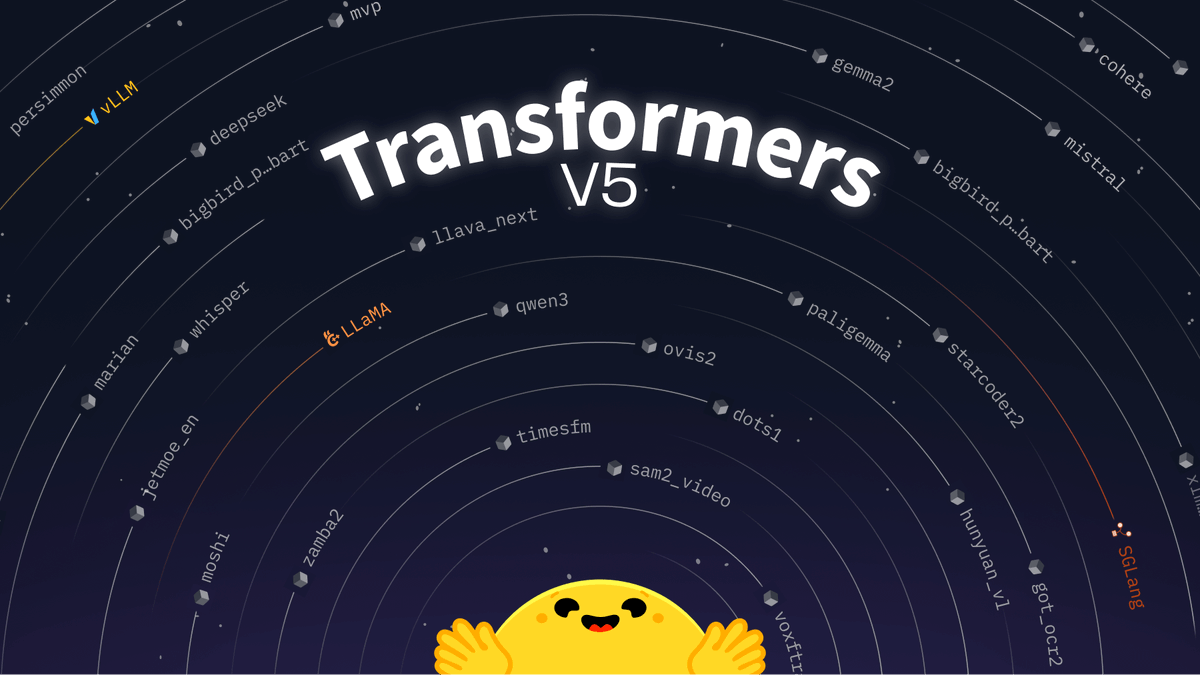

🪦 text-generation-inference is now in maintenance mode. Going forward, we will accept pull requests for minor bug fixes, documentation improvements, and lightweight maintenance tasks. TGI initiated the movement for optimized inference engines to rely on transformers model architectures. This approach is now adopted by downstream inference engines, which we contribute to and recommend using going forward: @vllm_project, @sgl_project, as well as local engines with inter-compatibility such as llama.cpp or MLX.

LESSSGOOOO kudos team, incredible work everyone 🔥🔥🔥
