Matthias Minderer


@MJLM3

Research Scientist at @GoogleResearch.

Have you ever wondered how to train an autoregressive generative transformer on text and raw pixels, without a pretrained visual tokenizer (e.g. VQ-VAE)? We have been pondering this during the summer and developed a new model: JetFormer 🌊🤖 arxiv.org/abs/2411.19722 A thread 👇 1/

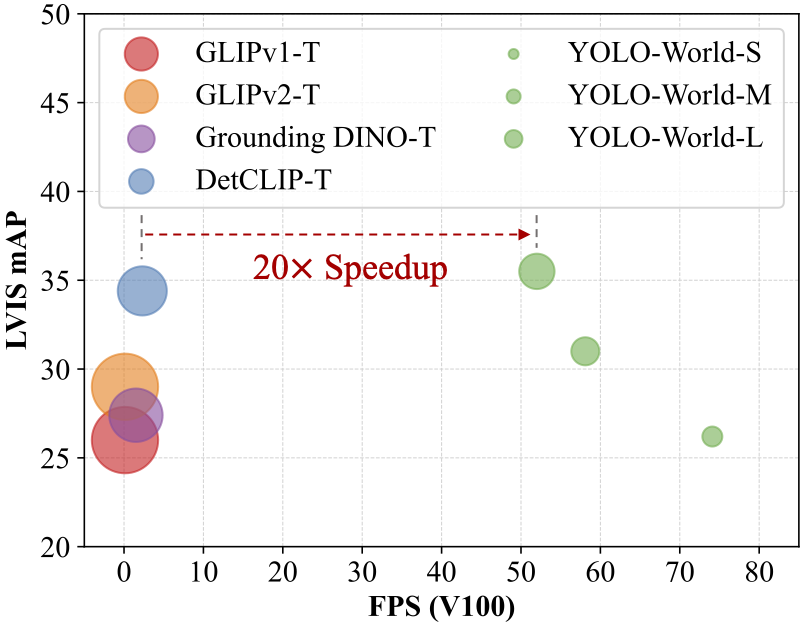

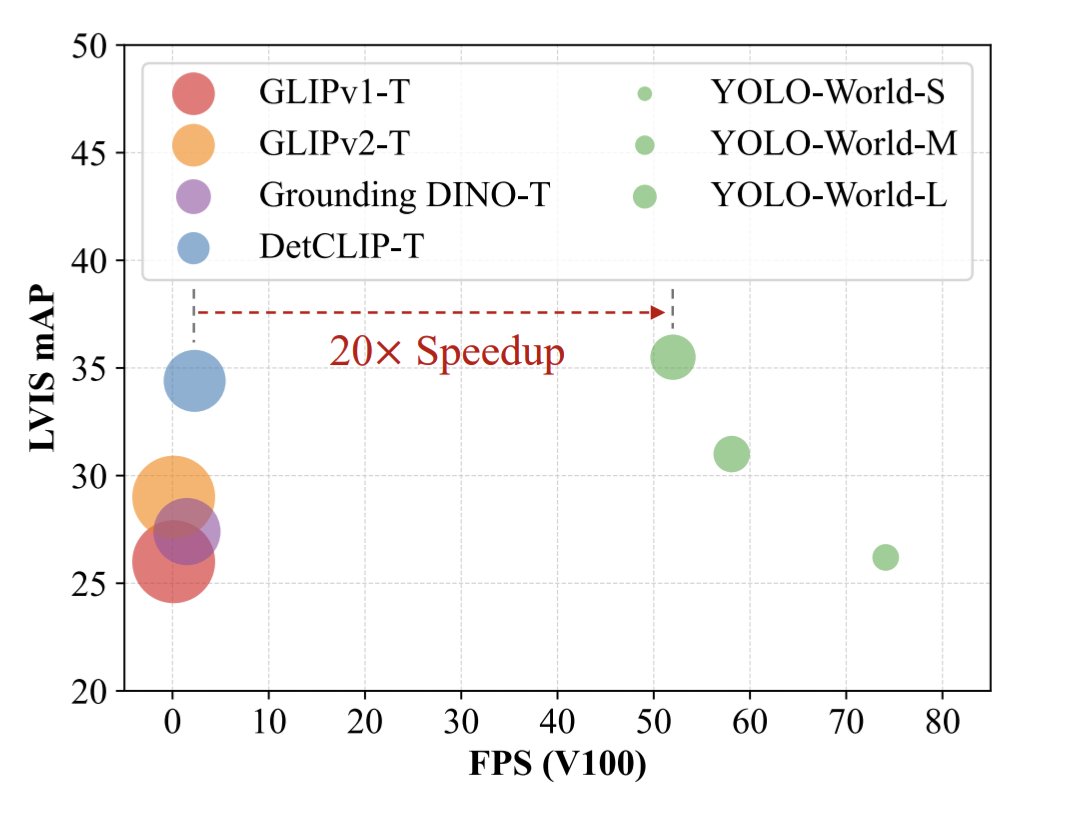

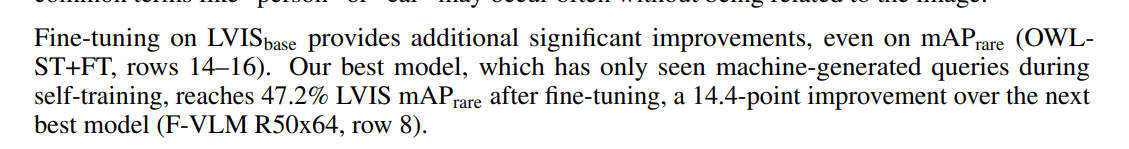

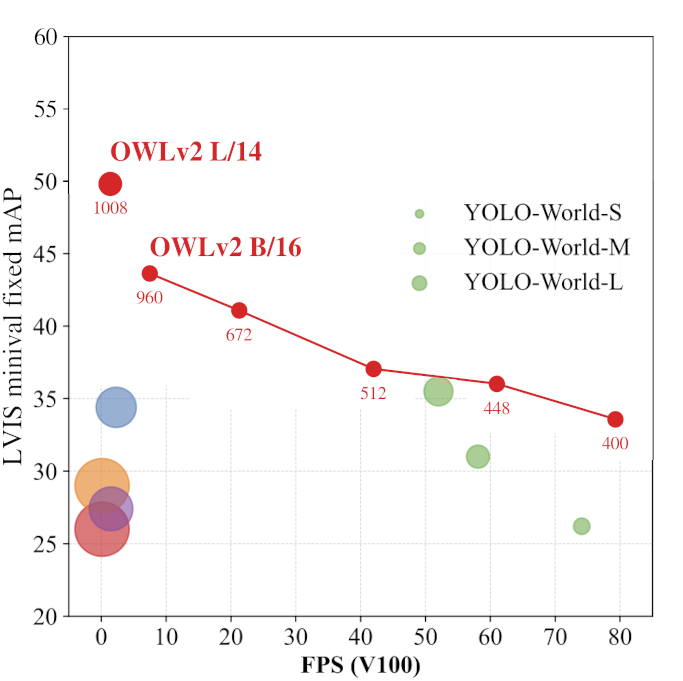

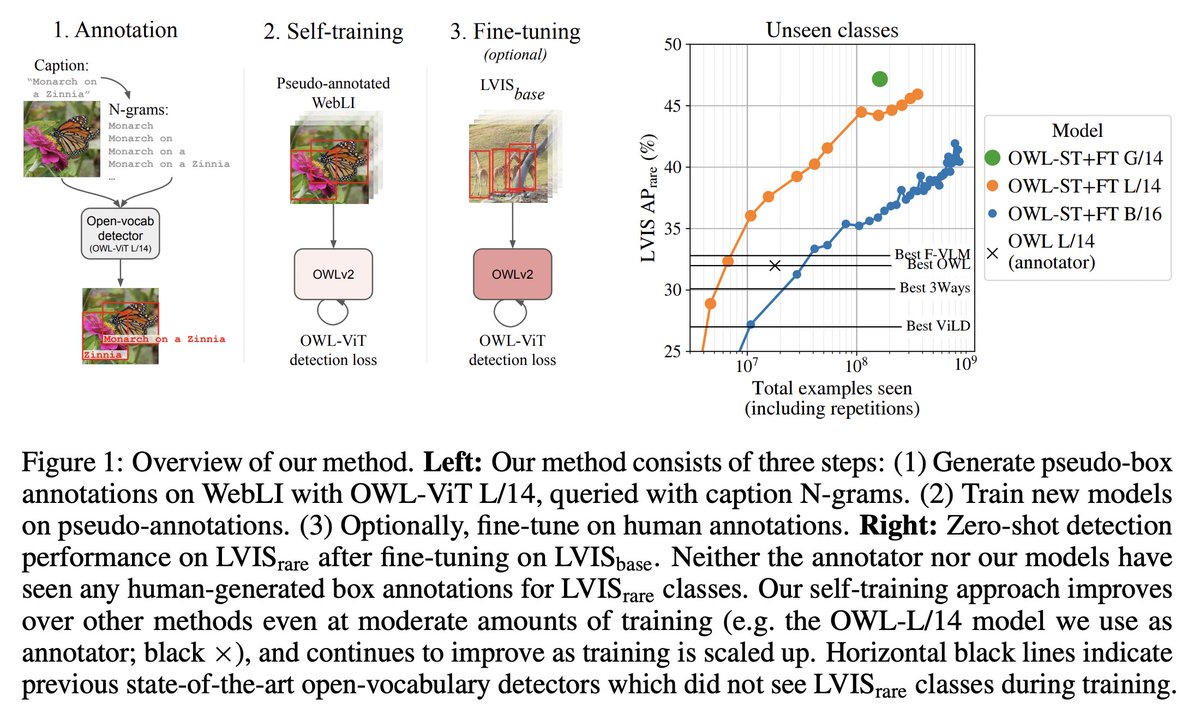

@_akhaliq I added OWL-ViT v2 to the plot. A single OWLv2 B/16 model, finetuned on O365+VG, covers all speed/accuracy combinations: Simply adjust the inference resolution to match your latency requirements. No re-training needed. arxiv.org/abs/2306.09683
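Resolution works as a latency knob because a ViT's token count, and with it most of its compute, is fixed by the input size. The snippet below is a rough illustration, not OWLv2 code. The resolutions are arbitrary examples, and it assumes the model's position embeddings can be resized to the new patch grid. It shows how token count and the quadratic self-attention term scale for a B/16 (16-pixel patch) backbone:

```python
def num_patches(resolution: int, patch_size: int = 16) -> int:
    """Tokens a ViT with square patches produces for a square input image."""
    if resolution % patch_size:
        raise ValueError("resolution must be a multiple of patch_size")
    side = resolution // patch_size
    return side * side


def relative_attention_cost(resolution: int, reference: int, patch_size: int = 16) -> float:
    """Self-attention cost grows with tokens**2; return it relative to `reference`."""
    ratio = num_patches(resolution, patch_size) / num_patches(reference, patch_size)
    return ratio ** 2


# Example resolutions (hypothetical choices for illustration only).
for res in (480, 640, 768, 960):
    print(res, num_patches(res), round(relative_attention_cost(res, 960), 3))
```

Only the attention term is quadratic. The MLP and patch-embedding costs grow linearly with token count, so real latency falls less steeply than this ratio suggests.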

Excited to announce DORSal: a 3D structured diffusion model for generation and object-level editing of 3D scenes. DORSal is “geometry-free” and learns 3D scene structure purely from data – no expensive volume rendering! 🖥️ sjoerdvansteenkiste.com/dorsal/ 📜 arxiv.org/abs/2306.08068 1/6

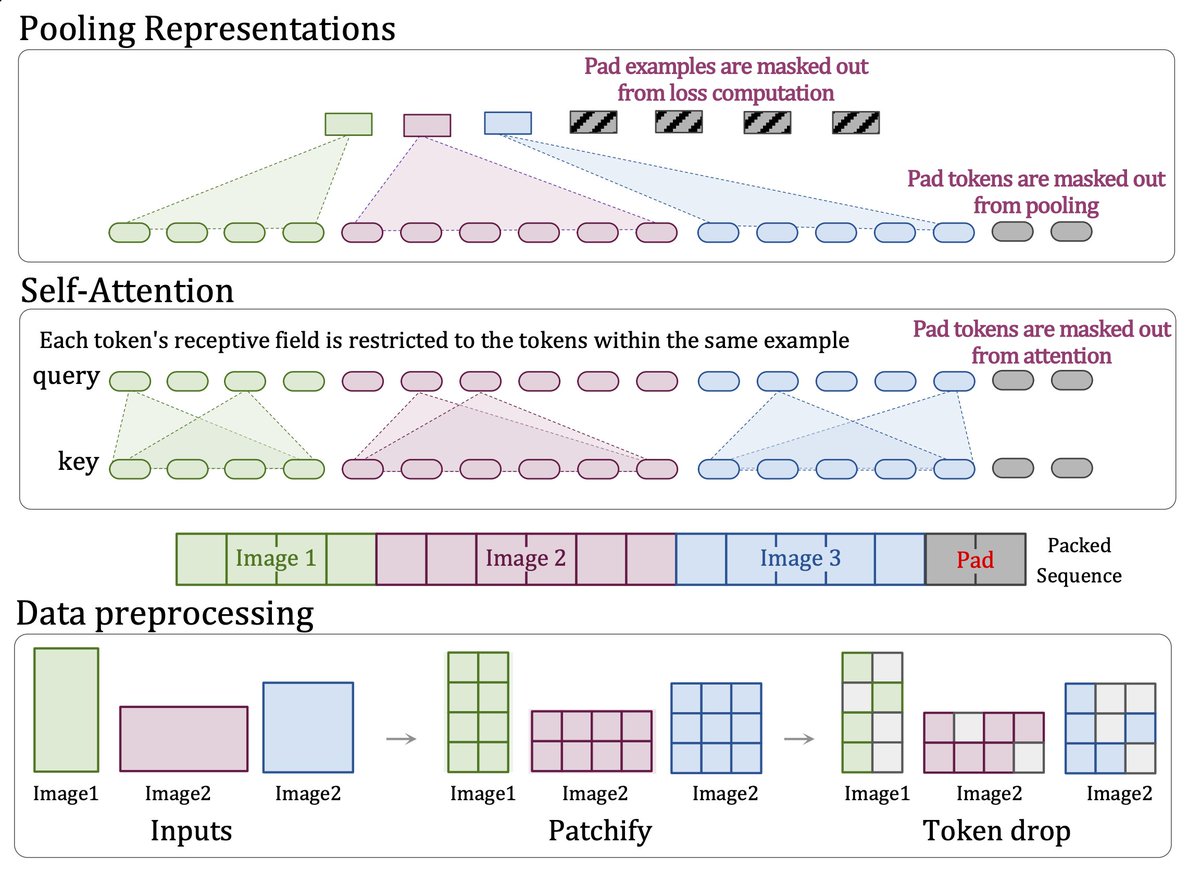

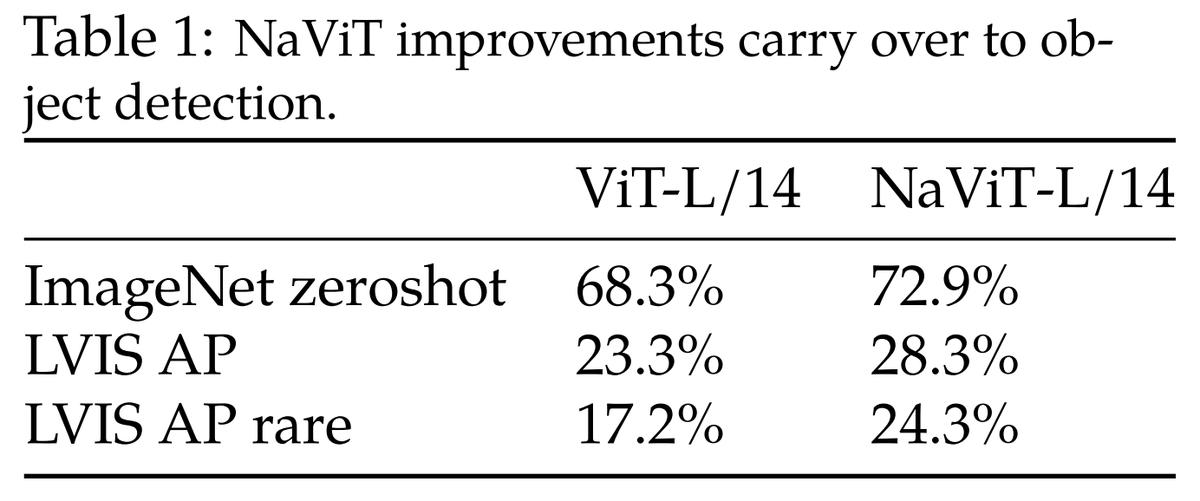

Patch n' Pack: NaViT, a Vision Transformer for any Aspect Ratio and Resolution

paper page: huggingface.co/papers/2307.06…

The ubiquitous and demonstrably suboptimal choice of resizing images to a fixed resolution before processing them with computer vision models has not yet been successfully challenged. However, models such as the Vision Transformer (ViT) offer flexible sequence-based modeling, and hence varying input sequence lengths. We take advantage of this with NaViT (Native Resolution ViT) which uses sequence packing during training to process inputs of arbitrary resolutions and aspect ratios. Alongside flexible model usage, we demonstrate improved training efficiency for large-scale supervised and contrastive image-text pretraining. NaViT can be efficiently transferred to standard tasks such as image and video classification, object detection, and semantic segmentation and leads to improved results on robustness and fairness benchmarks. At inference time, the input resolution flexibility can be used to smoothly navigate the test-time cost-performance trade-off. We believe that NaViT marks a departure from the standard, CNN-designed, input and modelling pipeline used by most computer vision models, and represents a promising direction for ViTs.
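Sequence packing means concatenating the patch tokens of several images, each kept at its native resolution and aspect ratio, into one fixed-length sequence, then masking attention so each token only sees tokens from its own image. The following is a minimal pure-Python sketch under our own assumptions: a 16-pixel patch size, a simple first-fit packing rule, and the helper names `tokens_for`, `pack_greedy`, `segment_ids` and `attention_mask`. These names are hypothetical and not taken from the NaViT paper or its code, and the paper's exact packing procedure is not described in this post.

```python
from typing import List


def tokens_for(height: int, width: int, patch: int = 16) -> int:
    # Native aspect ratio: the grid is (H / patch) x (W / patch), no resize to a square.
    # Assumption: partial patches at the border are simply dropped (floor division).
    return (height // patch) * (width // patch)


def pack_greedy(lengths: List[int], max_len: int) -> List[List[int]]:
    """First-fit packing of example indices into rows of at most max_len tokens."""
    rows: List[List[int]] = []
    used: List[int] = []
    for idx, n in enumerate(lengths):
        if n > max_len:
            raise ValueError(f"example {idx} has {n} tokens > max_len {max_len}")
        for r in range(len(rows)):
            if used[r] + n <= max_len:
                rows[r].append(idx)
                used[r] += n
                break
        else:  # no existing row had room: open a new one
            rows.append([idx])
            used.append(n)
    return rows


def segment_ids(row: List[int], lengths: List[int], max_len: int) -> List[int]:
    """Per-token example id within a packed row (1-based); 0 marks padding."""
    ids: List[int] = []
    for seg, idx in enumerate(row, start=1):
        ids.extend([seg] * lengths[idx])
    ids.extend([0] * (max_len - len(ids)))
    return ids


def attention_mask(ids: List[int]) -> List[List[bool]]:
    """Token i may attend to token j only if both belong to the same real example."""
    return [[a == b and a != 0 for b in ids] for a in ids]


# Usage: four images of different shapes packed into rows of 40 tokens.
lengths = [tokens_for(h, w) for h, w in [(64, 64), (32, 96), (48, 32), (96, 96)]]
rows = pack_greedy(lengths, max_len=40)
mask = attention_mask(segment_ids(rows[0], lengths, max_len=40))
```

Because the mask is block-diagonal, packed images never attend to each other, and padding is only needed at the end of each row rather than per image. A per-image pooling step could reuse the same segment ids to average only over each image's own tokens.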