Mike Burnham

@ML_Burn

Assistant professor @tamupols, Ph.D. @psupolisci & @CSoDA_PSU, postdoc @Princeton_CSDP. Text analysis & deep learning, methods, American politics.

Enough is enough. Just because you can generate an academic paper in minutes doesn't mean you should. When your name is on something, you should check every reference and claim before submitting. If you can't be bothered to do that, you should be banned from submitting.

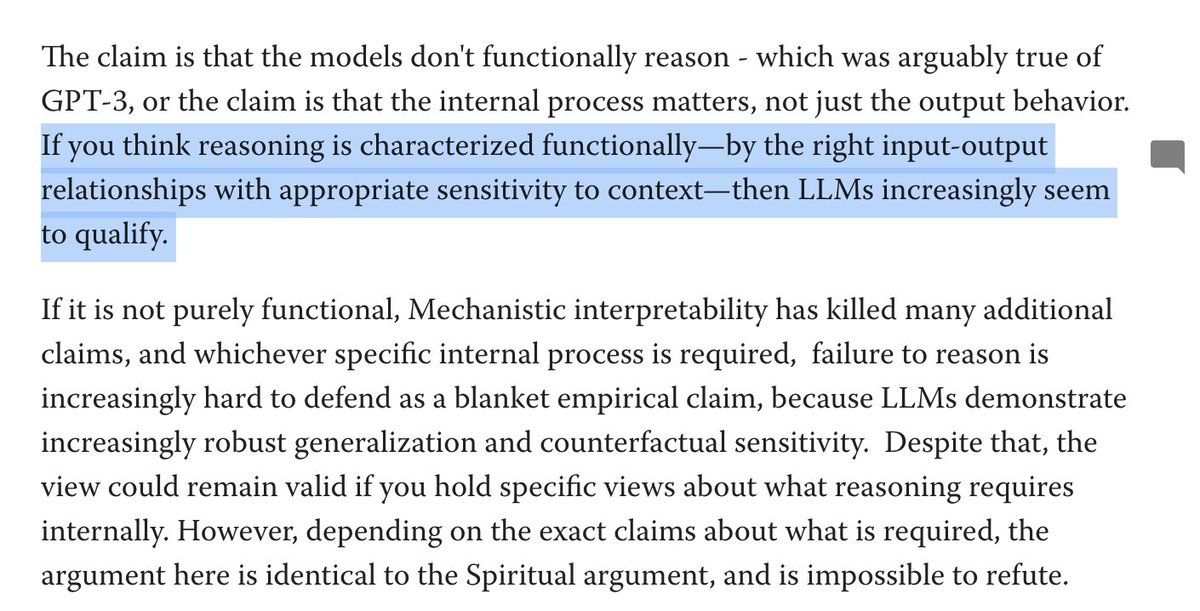

Hot take: Decoders (GPT-4 etc.) are a distraction for text classification. Encoders and embedding models are fundamentally better and more efficient at this. If decoders have an advantage, it's only because of massive time/research investment disparities.

@captgouda24 Realistically, there is a range of ways to “use an LLM for research” that lead to different error probabilities, and everyone is going to choose a different point along that tradeoff curve. Heterogeneous risk profiles are ok, I think!

Claude whispering in my ear after a week of iterating on a codebook: "Just saying... if you picked something with readily available data we could have done three papers by now."