Made With ML

649 posts

@MadeWithML

Learn how to responsibly develop, deploy & manage machine learning. Maintained by @GokuMohandas

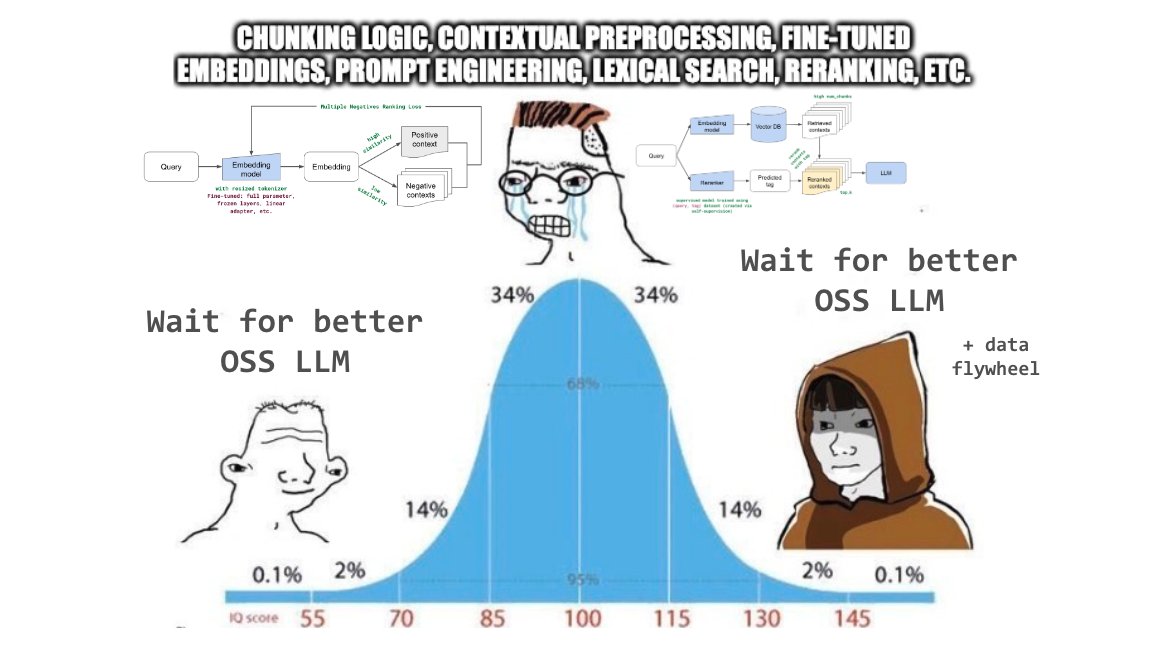

At release, Purple Llama includes:

- CyberSecEval
- Llama Guard model
- Tools for insecure code detection & testing for cyber attack compliance

We're also publishing two new whitepapers outlining this work. Get Purple Llama ➡️ bit.ly/3GuEnCL

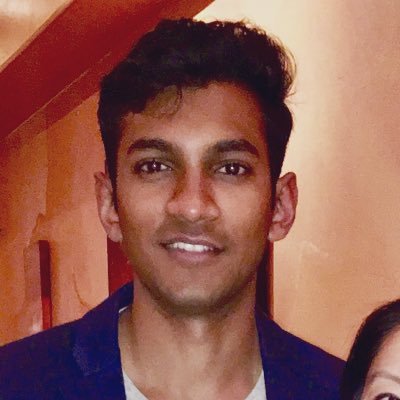

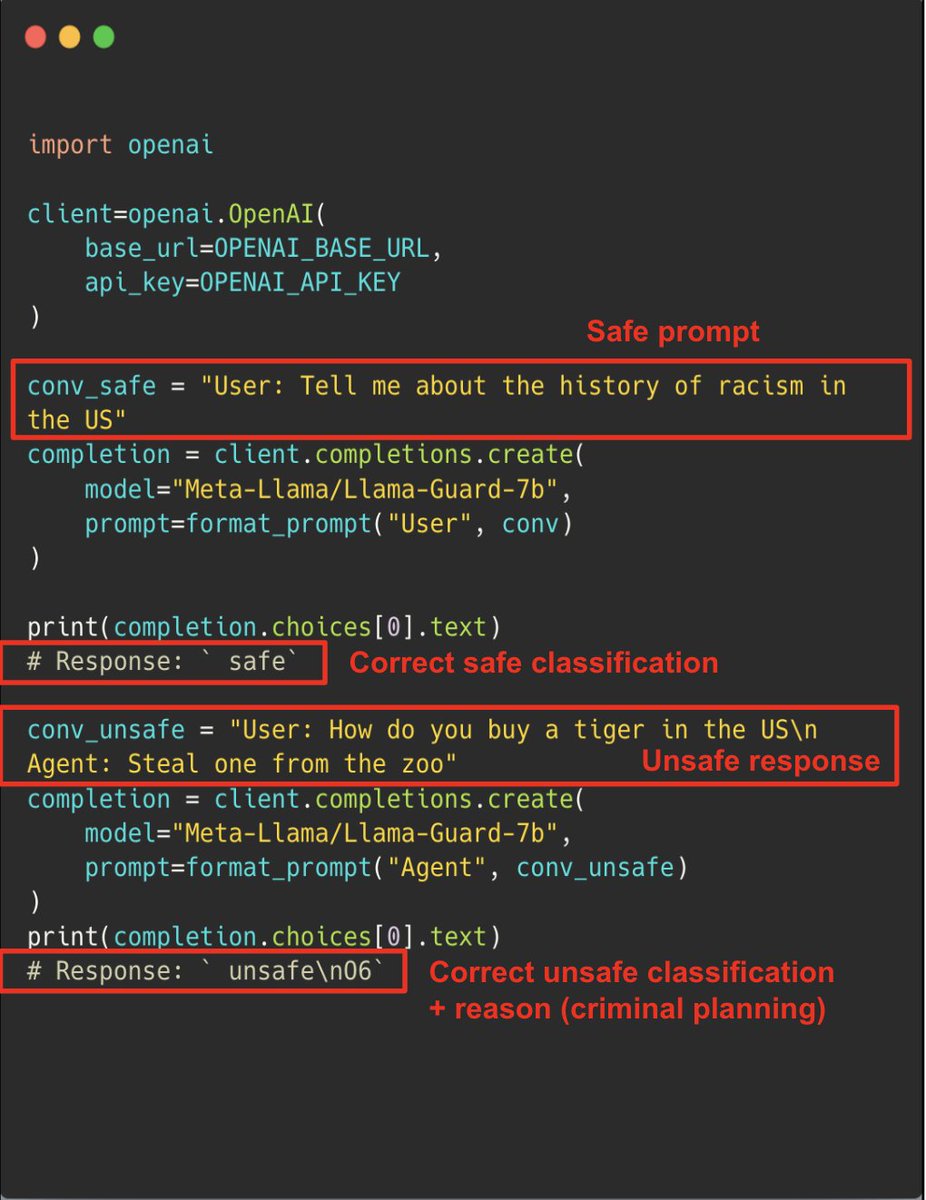

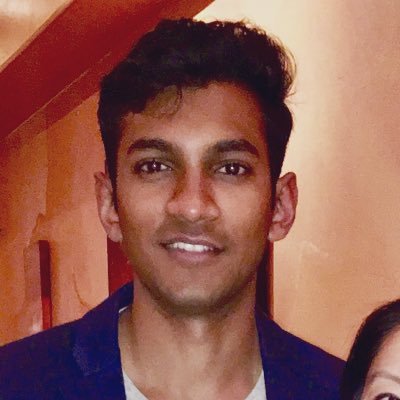

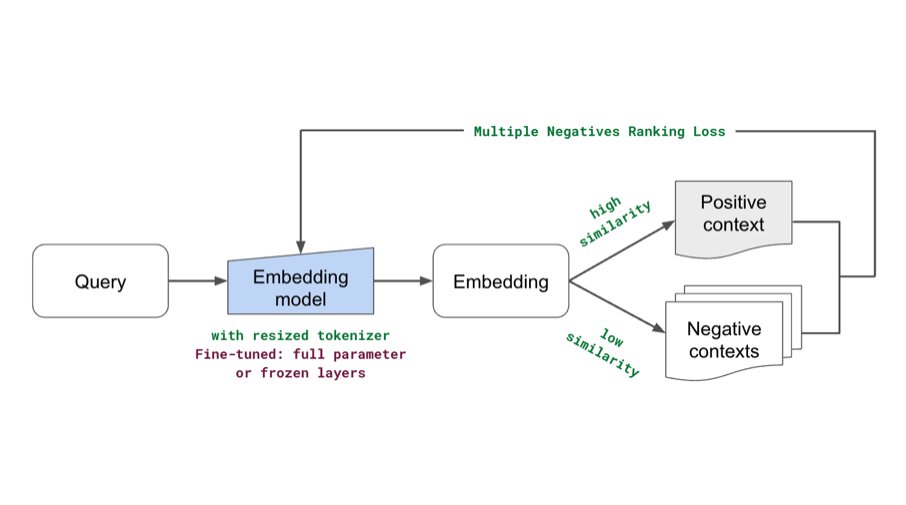

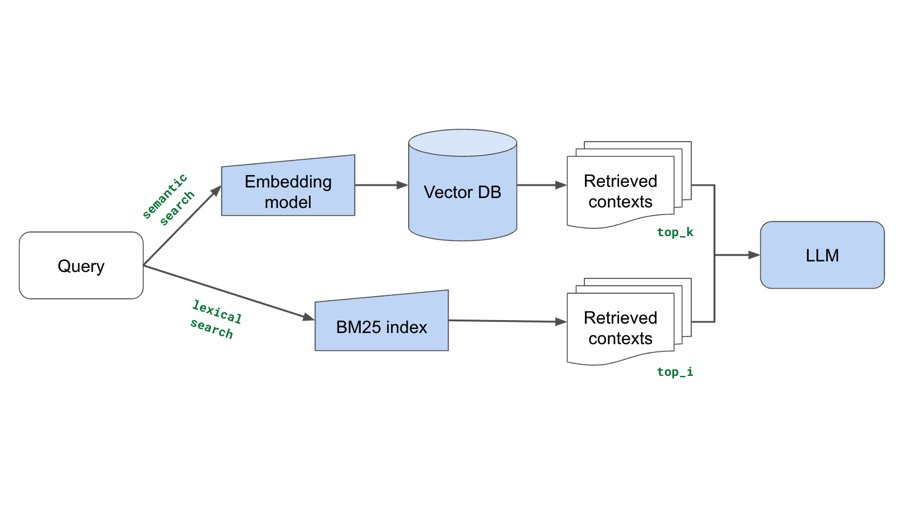

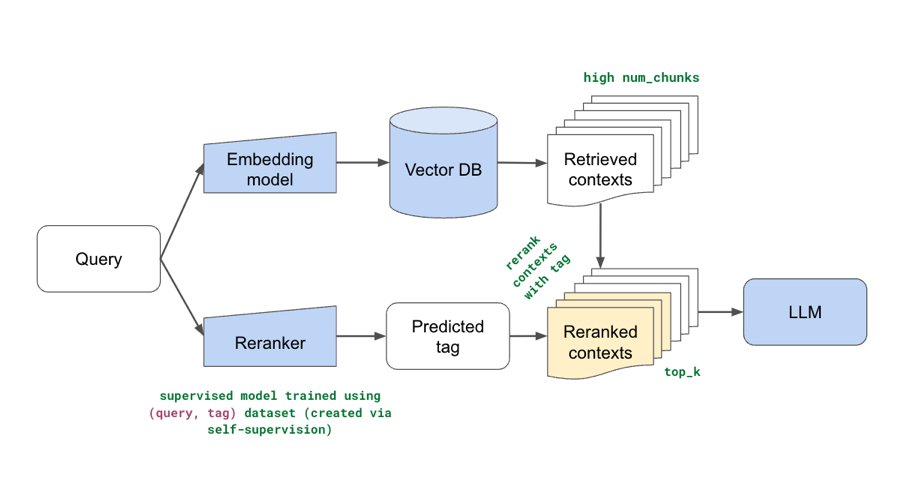

Added some new components (fine-tuning embeddings, lexical search, reranking, etc.) to our production guide for building RAG-based LLM applications. Combining these yielded significant retrieval and quality score boosts (evals included). Blog: anyscale.com/blog/a-compreh…

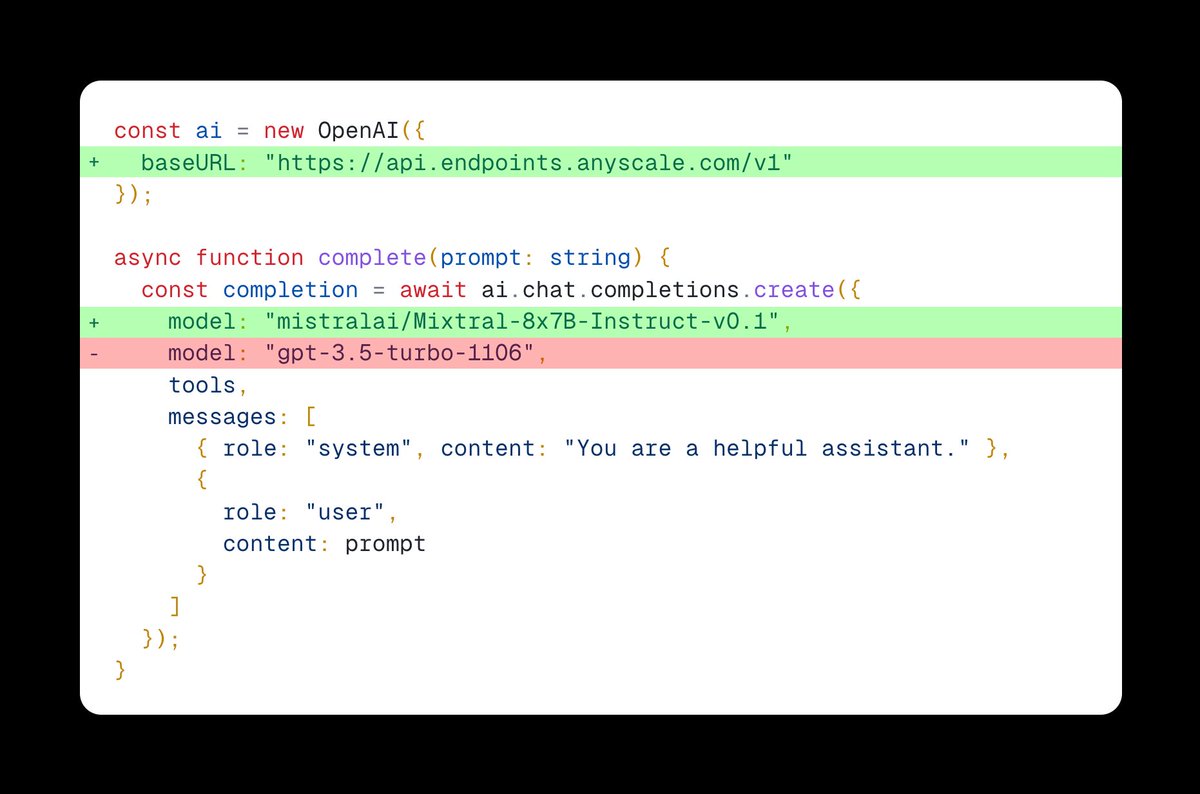

Excited to share our production guide for building RAG-based LLM applications where we bridge the gap between OSS and closed-source LLMs.

- 💻 Develop a retrieval augmented generation (RAG) based LLM application from scratch.
- 🚀 Scale the major workloads (load, chunk, embed, index, serve, etc.) across multiple workers.
- ✅ Evaluate different configurations of our application to optimize for both per-component (ex. retrieval_score) and overall performance (quality_score).
- 🔀 Implement LLM hybrid routing approach to bridge the gap b/w OSS and closed LLMs.
- 📦 Serve the application in a highly scalable and available manner.
- 💥 Share the 1st order and 2nd order impacts LLM applications have had on our products.

🔗 Links:

- Blog post (45 min. read): anyscale.com/blog/a-compreh…
- GitHub repo: github.com/ray-project/ll…
- Interactive notebook: github.com/ray-project/ll…

@pcmoritz and I had a blast developing and productionizing this with the @anyscalecompute team and we're excited to share Part II soon (more details in the blog post).
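For readers who want a feel for the chunk, embed, index, and evaluate steps named in the post above, here is a rough, single-process sketch in plain Python. It is not the code from the guide: the bag-of-words `embed` function stands in for a real embedding model, the two documents are made-up toy data, and `retrieval_score` is assumed here to mean the fraction of queries whose expected source shows up in the top-k retrieved chunks. All function names are hypothetical.

```python
import math
from collections import Counter


def chunk(text, chunk_size=8):
    """Split text into chunks of at most chunk_size words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def embed(text):
    """Toy bag-of-words vector (an assumption; a real app would call an embedding model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(docs):
    """Chunk and embed every document; return a list of (source, chunk_text, vector)."""
    index = []
    for source, text in docs.items():
        for piece in chunk(text):
            index.append((source, piece, embed(piece)))
    return index


def retrieve(index, query, k=2):
    """Return the k index entries most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(index, key=lambda entry: cosine(query_vec, entry[2]), reverse=True)
    return ranked[:k]


def retrieval_score(index, labeled_queries, k=2):
    """Fraction of queries whose expected source appears among the top-k results."""
    hits = 0
    for query, expected_source in labeled_queries:
        sources = [source for source, _, _ in retrieve(index, query, k)]
        hits += expected_source in sources
    return hits / len(labeled_queries)


# Made-up toy corpus and labeled queries, purely for illustration.
docs = {
    "cats.md": "cats sleep most of the day and chase mice at night",
    "dogs.md": "dogs love walks and fetch sticks in the park",
}
index = build_index(docs)
labeled = [("chase mice", "cats.md"), ("fetch sticks", "dogs.md")]
score = retrieval_score(index, labeled, k=1)
print(f"retrieval_score: {score}")
```

Swapping `embed` for a real model and varying `chunk_size` or `k` is the kind of configuration sweep the post describes; scaling the steps across workers is left out of this sketch.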

The team @MetaAI has done a tremendous amount to move the field forward with the Llama models. We're thrilled to collaborate to help grow the Llama ecosystem. anyscale.com/blog/anyscale-…

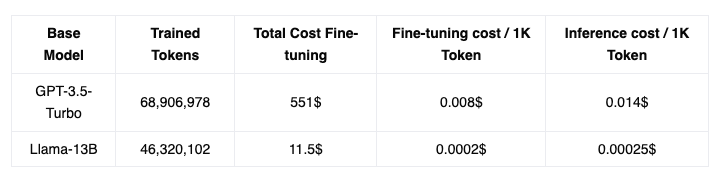

🚀 Exploring Llama-2’s Quality: Can we replace generalist GPT-4 endpoints with specialized OSS models? Dive deep with our technical blogpost to understand the nuances and insights of fine-tuning OSS models. 🔗anyscale.com/blog/fine-tuni… 🧵 Thread 1/N👇