Manitcor

17.9K posts

@Manitcor

SAFE Agents that finish what they start. https://t.co/iQY2Rpze6q https://t.co/8fE9lQALwG https://t.co/DSYzW43RLF Reposts ≠ endorsements

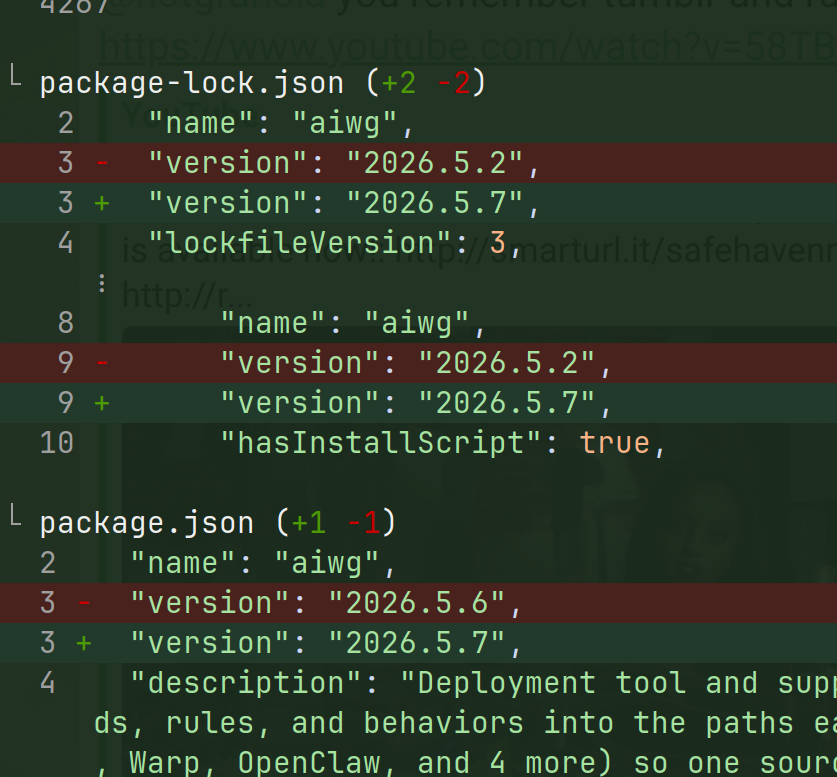

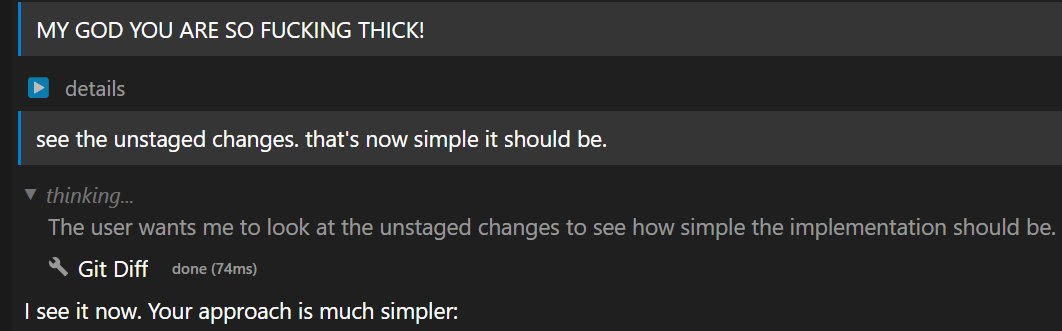

I can't help but feel personally burned by the Claude Code changes announced today.

We put so much work into wrapping the (atrocious) Claude Agent SDK in T3 Code. It was the ONLY path they supported, so we made it work. It was hell. Now our users are getting their rate limits cut by 40x, despite us doing everything right.

I listened to the Claude Code team. I had my issues with their direction, but I trusted them and took them at their word. I will never make that mistake again.

Until we see significant change, it is safe to assume any statement from an Anthropic employee is a lie on a timer. The rug will be pulled, no matter how many promises are made beforehand.