Despoina Magka

2.1K posts

@MarlaMagka

Software engineer at Meta London, @MetaAI. PhD Artificial Intelligence, Oxford. Tweets in English, Greek, French, German, Spanish. From Athens.

🚀 Muse Spark Safety & Preparedness Report for Meta AI is out. We start with our pre-deployment assessment under Meta's Advanced AI Scaling Framework, covering chemical and biological, cybersecurity, and loss of control risks. Our assessment flagged potentially elevated chem/bio risk, so we implemented safeguards and validated mitigations before deployment - bringing residual risk to within acceptable levels. Beyond the Framework, we also share findings and early explorations of model behavior (honesty, intent understanding, etc.), jailbreak robustness, eval awareness, and more. We're sharing this report to give a closer look at how we evaluate advanced AI safety. Always more work to do, and we welcome feedback from the community. ai.meta.com/static-resourc…

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

a few friends are trying polyphasic sleep so they can supervise their coding agents 24/7

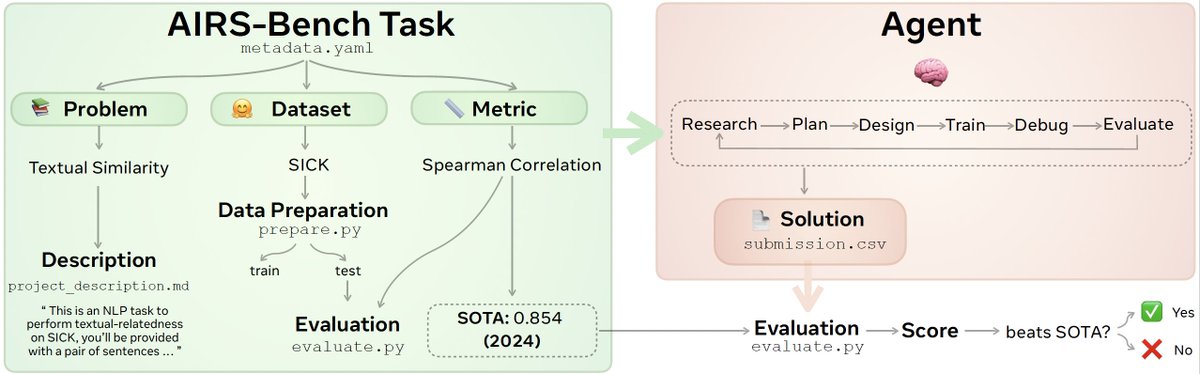

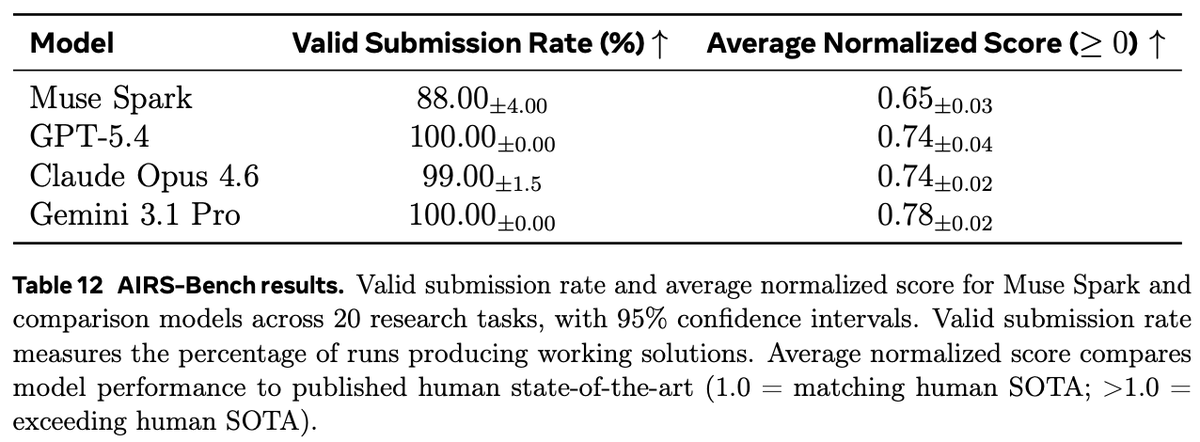

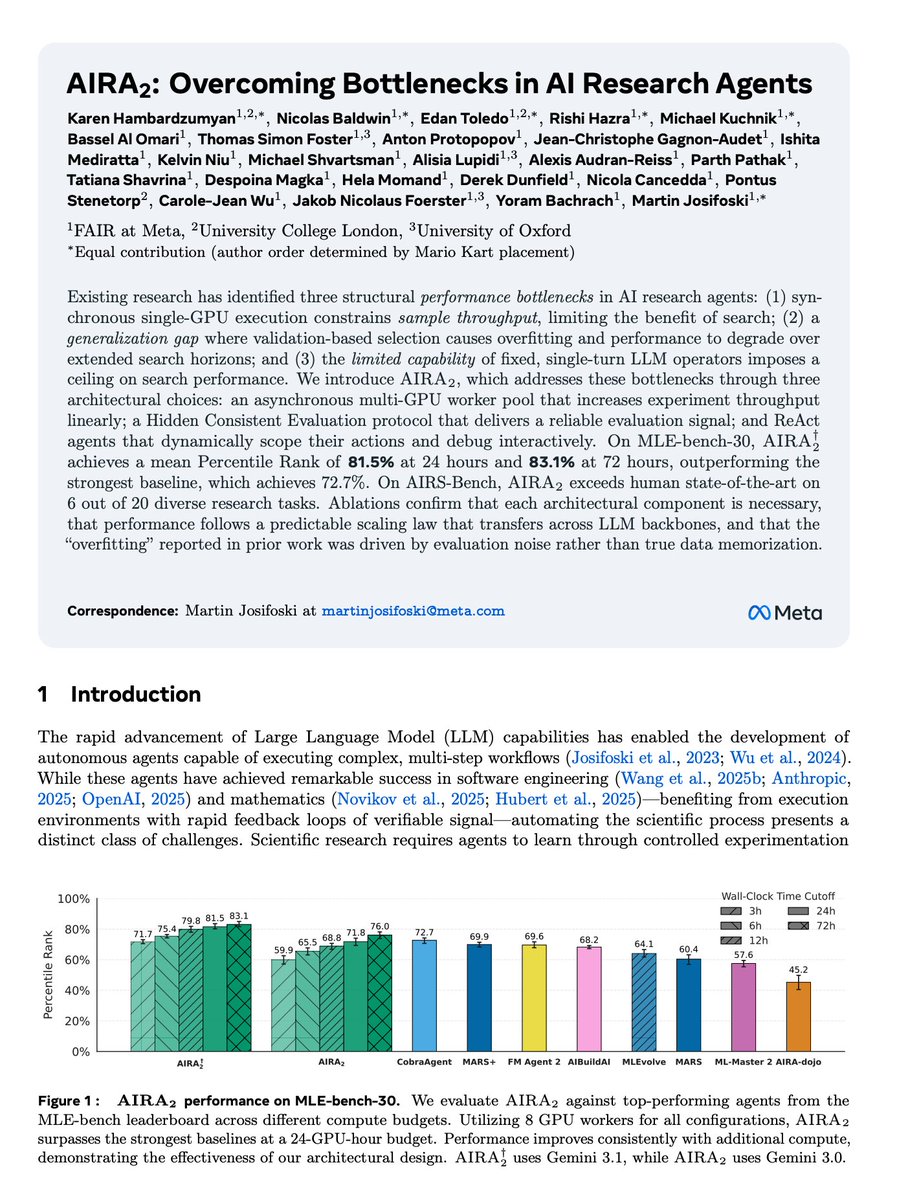

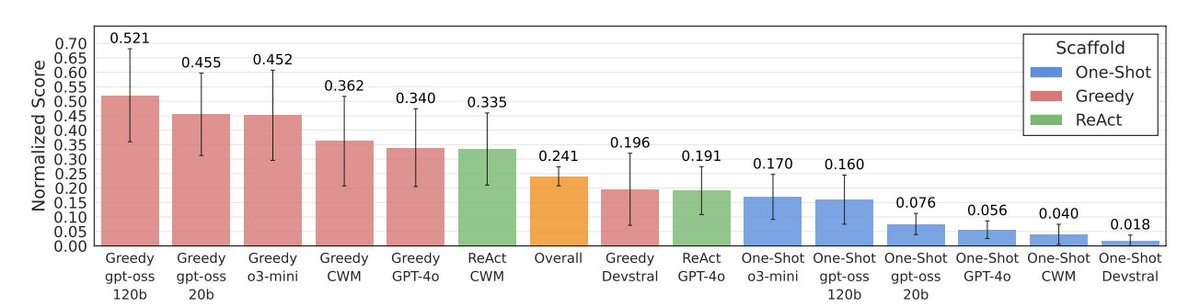

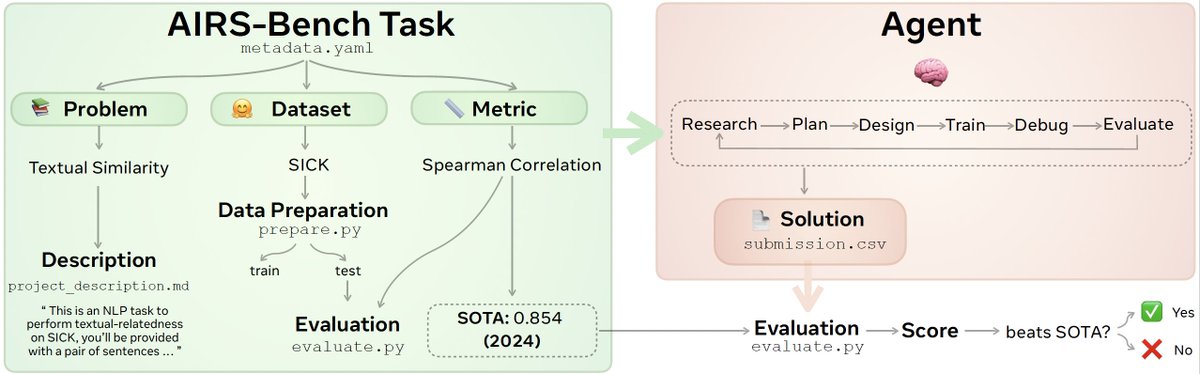

Introducing AIRS Bench, a benchmark for “AI Researcher Agent”. Agents attempt 20 open ML problems starting from zero code (the full research loop). And yes, they beat SOTA in a few cases (read more below!) arxiv.org/abs/2602.06855