Karen Hambardzumyan

@mahnerak

AI Research Agents @AIatMeta (FAIR), PhD Student @ucl_nlp

Come see our poster today! If you’re interested in research agents and how to unlock scaling for them, come for a chat!

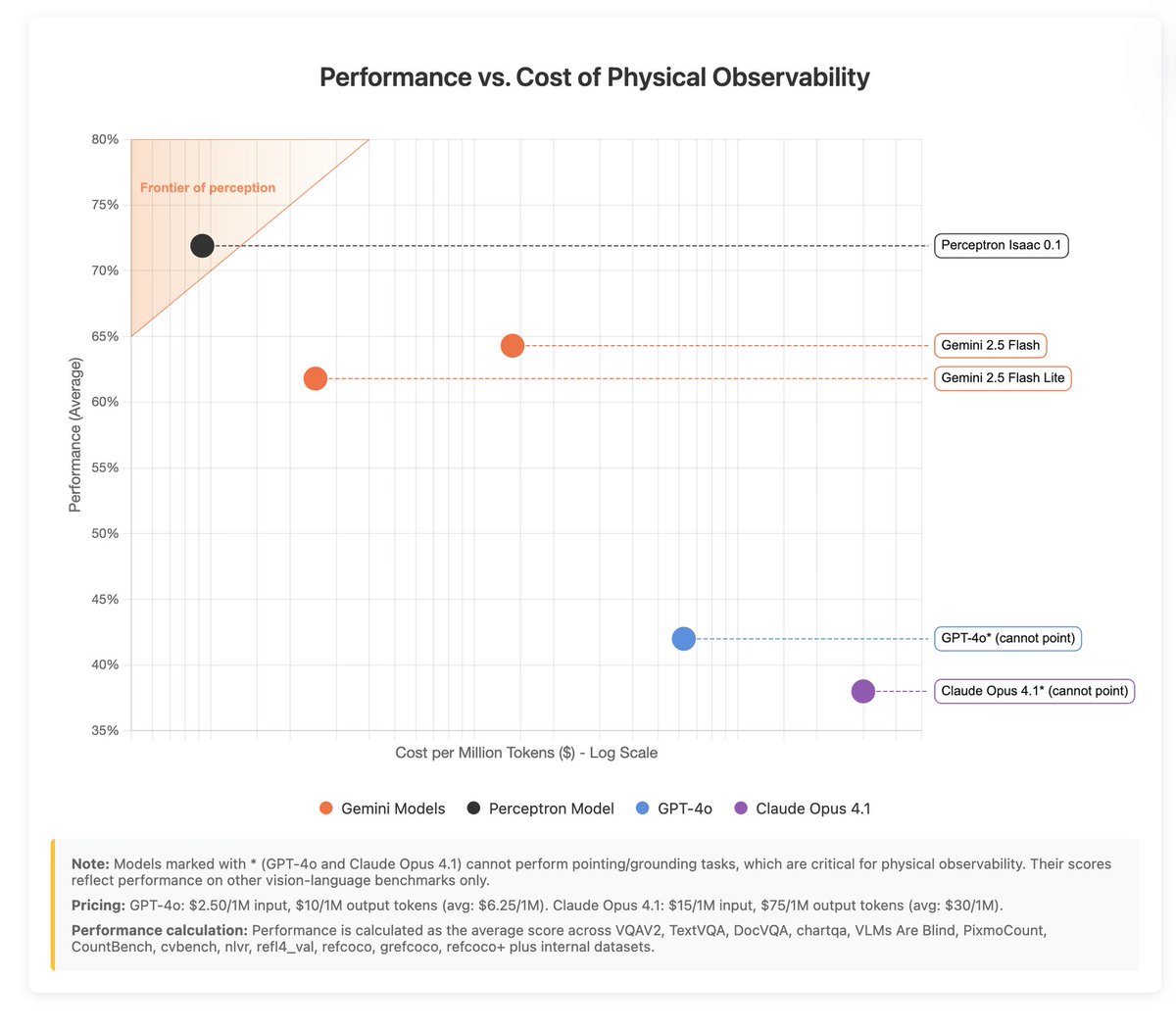

1/ Introducing Isaac 0.1 — our first perceptive-language model. 2B params, open weights. Matches or beats models significantly larger on core perception. We are pushing the efficient frontier for physical AI. perceptron.inc/blog/introduci…

Genie 3 feels like a watershed moment for world models 🌐: we can now generate multi-minute, real-time interactive simulations of any imaginable world. This could be the key missing piece for embodied AGI… and it can also create beautiful beaches with my dog, playable real time

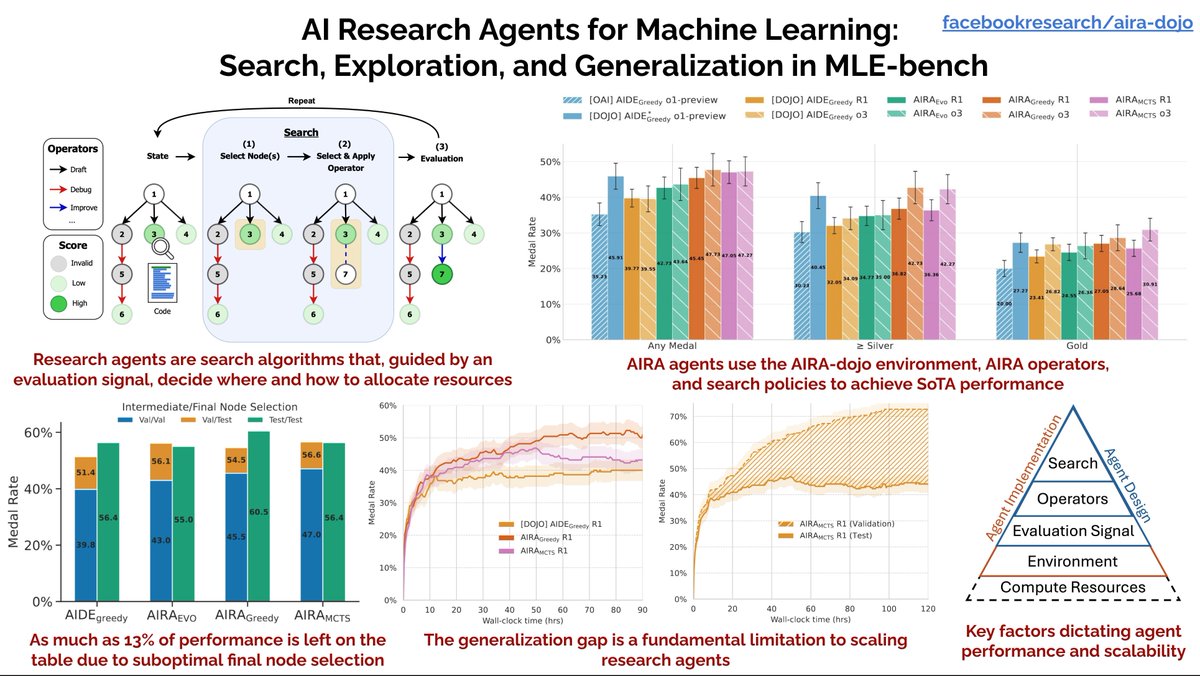

AI Research Agents are becoming proficient at machine learning tasks, but how can we help them search the space of candidate solutions and codebases? Read our new paper looking at MLE-Bench: arxiv.org/pdf/2507.02554 #LLM #Agents #MLEBench

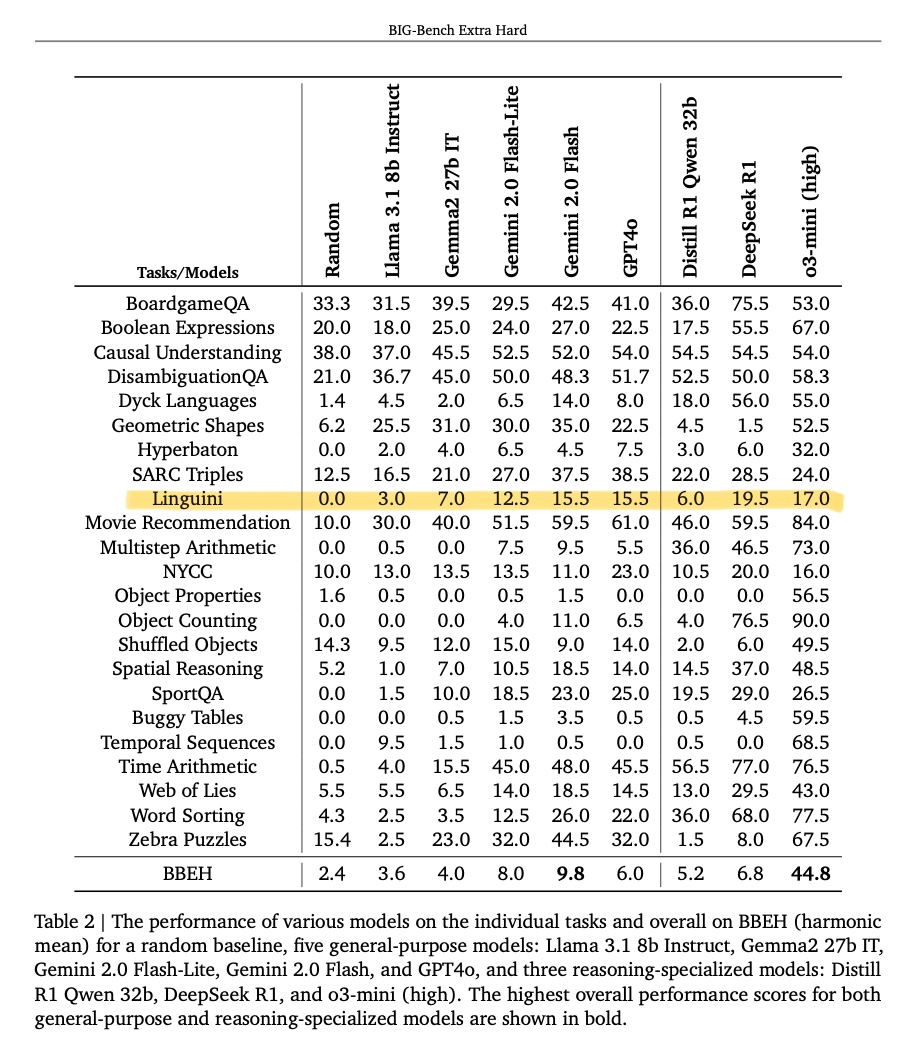

Recently, there has been a lot of talk about LLM agents automating ML research itself. If Llama 5 can create Llama 6, then surely the singularity is just around the corner. How can we get a pulse check on whether current LLMs are capable of driving this kind of total self-improvement? Well, we know humans are pretty good at improving LLMs. In the NanoGPT speedrun challenge, created by @kellerjordan0, human researchers iteratively improved @karpathy's GPT-2 replication, slashing the training time (to the same target validation loss) from 45 minutes to under 3 minutes in just under a year (!). Surely, a necessary (but not sufficient) ability for an LLM that can automatically improve frontier techniques is the ability to *reproduce* known innovations on GPT-2, a tiny language model from over 5 years ago. 🤔 So we took several of the top models and combined them with various search scaffolds to create *LLM speedrunner agents*. We then asked these agents to reproduce each of the NanoGPT speedrun records, starting from the previous record, while giving them access to different forms of hints that revealed the exact changes needed to reach the next record. The results were surprising, not because we thought these agents would ace the benchmark, but because the best agent failed to recover even half of the speed-up achieved by human innovators, on average, in the easiest hint mode, where we show the agent the full pseudocode of the changes to the next record. We believe the Automated LLM Speedrunning Benchmark provides a simple eval for measuring the lower bound of LLM agents’ ability to reproduce scientific findings close to the frontier of ML. Beyond scientific reproducibility, this benchmark can also be run without hints, transforming it into an automated *scientific innovation* benchmark. When run in "innovation mode," this benchmark effectively extends the NanoGPT speedrun to AI participants!
While initial results here indicate that current agents seriously struggle to match human innovators beyond just a couple of records, benchmarks have a tendency to fall. This one is particularly exciting to watch, as a new state of the art here by definition implies a form of *superhuman innovation*.

New paper! 🎊 We are delighted to announce our new paper "Robust LLM Safeguarding via Refusal Feature Adversarial Training"! There is a common mechanism behind LLM jailbreaking, and it can be leveraged to make models safer!

Want to know if an ML model was trained on your dataset? Introducing ✨Data Taggants✨! We use data poisoning to leave a harmless and stealthy signature on your dataset that radiates through trained models. Learn how to protect your dataset from unauthorized use... A 🧵