Pinned Tweet

Matt Dowle

1.9K posts

@NA_user_ @statquant And 43 of those 112 PRs are tagged WIP anyway. So there's 69 not 112 (out of 1,415 closed) to be prodding about on twitter. Have a look at merged PRs and compare them to open PRs to see why they aren't merged and perhaps you can help.

@NA_user_ @statquant And a better opener would have been to appreciate that 1,415 PRs have been merged leaving 112 currently open. Then if you actually look at the details of what happens in the PRs maybe discussing kindly will be helpful and fruitful.

hello #rstats #rdatatable devs, I see > 100 prs open on github.com/Rdatatable/dat… some for years (or am I mistaken). I was wondering if you had a particular policy wrt testing prs or if it is particularly hard to ensure it’s good enough

@collapse_R @ApacheArrow I am surprised that #rdatatable is not faster even in cases that are GForce optimized. @MattDowle has found issues in benchmarks in the past so tagging him in case he is interested.

Added a much requested small benchmark with @ApacheArrow for #rstats and #fastverse (github.com/SebKrantz/coll…). Short:

- grouped sum: arrow seems faster with few groups, #rcollapse with many

- slower on advanced operations, and does not support many of them

Corrections welcome.

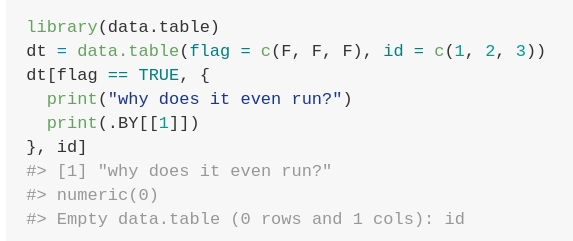

@D_O_Arantes print in j returns NULL. Try something other than NULL as the last value of {} and you'll see V1 present in the result with that type, albeit empty, for consistency. It needs to eval j even on empty to know the names and types to return in the zero-row result.

#rdatatable #rstats

Why?

library(data.table)
dt = data.table(flag = c(F, F, F), id = c(1, 2, 3))
dt[flag == TRUE, {
  print("why does it even run?")
  print(.BY[[1]])
}, id]
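
The reply above suggests putting something other than NULL as the last value of `{}`. A minimal sketch of that experiment follows, reusing the table from the question; the literal `1L` and the name `res` are illustrative choices, not part of the original thread. According to the reply, `j` is still evaluated on the empty subset and the zero-row result should carry a `V1` column of that type.

```r
library(data.table)

dt <- data.table(flag = c(FALSE, FALSE, FALSE), id = c(1, 2, 3))

# No row has flag == TRUE, so the subset is empty. The last value of {}
# is now an integer (illustrative choice) rather than NULL.
res <- dt[flag == TRUE, {
  print("j was evaluated")
  1L
}, by = id]

# Inspect the column names and types of the zero-row result
str(res)
```

Checking `str(res)` rather than relying on the printed output makes it easier to see which columns and types data.table inferred for the empty result.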

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant Perhaps then I could have replied: 'standard column names' and 'internal dataset always the same' are too vague for anyone to help about any software. If you can provide more details using verbose=TRUE we can try to understand the query and go from there.

@MattDowle @kar9222 @Bootvis @EalesJames @statquant And posting that on SO with the data.table tag would have only attracted data.table users demonstrating how to speed up data.table. That was not what I was after. And if it were, I would have done exactly that 🤷🏻‍♂️

I have a function that needs to merge a large user dataset with standard column names (up to ~10M rows) with an internal dataset (~5k rows). The internal dataset is always the same.

We currently use data.table for this. Are there any faster options?

#rstats #rlang #DataScience

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant If you'd said "I've posted this query to S.O. <link> and it seems it is the fastest way in data.table. Is there any other software that can do this task faster?" then that would have come across a lot better.

@MattDowle @kar9222 @Bootvis @EalesJames @statquant If you’d have said you were trying to learn to improve your own software, then that would have been informative and created an equal playing field for discussion. But it certainly didn’t come across that way

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant In other words, you're misquoting me. That's not on.

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant That's unfair for the reason it followed after I had asked you for verbose=TRUE output and you said you couldn't, and, you're restating it as if it was the first response, and you've dropped words from the quote that are important in context.

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant And this is where you're mistaken. We know data.table has flaws, and there are known slowdowns. I was even thinking of accelerating efforts to fix them, if you had just sent me the details I asked for. Instead it's you that got offended and called me patronising.

@MattDowle @kar9222 @Bootvis @EalesJames @statquant And, admittedly that bottleneck is fractions of a second, but it is still the slowest part of my process. If I can outsource that delay, then great. But it does seem like data.table is still my best option

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant I'm not intending to be patronising. But I'm looking, again, at your original question, and you seem to be looking for a faster solution than data.table for a vague join task. Responding to check details seems utterly reasonable.

@MattDowle @kar9222 @Bootvis @EalesJames @statquant If I wanted to know that, I would have asked that. This was a question about technologies available, not how to use data.table with patronising responses.

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant Again, and revised, so even if we could tell you that you were doing something inefficiently that you didn't know, you didn't want to know that?

@MattDowle @kar9222 @Bootvis @EalesJames @statquant If I were hitting a bug or a problem then I would post my question on SO. Or if it were a known bug, I'd probably have found the solution before writing my own post. I asked on Twitter because I wanted a broader look at what other options there were 😅

@MyKo101AB @kar9222 @Bootvis @EalesJames @statquant So even if you were hitting a known bug, known slowdown, or you didn't know you were doing something inefficiently, you didn't want to know that? (Again: I provided the names of two packages that are faster at joins in some cases, anyway, too.)

@kar9222 @Bootvis @EalesJames @statquant @MattDowle Thanks for the benchmarks. I'll be sure to check them out. But again, the point of the original tweet has been missed by almost everyone. I was asking for alternatives and not how to speed up data.table, so there would be no use in an SO or GH post.

@0xeinar @MyKo101AB So often there is something wrong that we can address: an index that can be added, or a different type. That's what I was trying to get at. Even so, I pointed him to our benchmarks which show 2 other packages that are faster on join in some cases. I give up.

Lesson learned. Don't ask.

Dr. Michael Barrowman@MyKo101AB

@Bootvis @EalesJames @statquant @MattDowle The point I've made repeatedly during this debate (which seems to have turned very defensive by the data.table crowd) is that I wasn't sure if there was something faster than data.table out there. Clearly I've touched a nerve with fans and developers by even asking the question.

@MyKo101AB @Bootvis @EalesJames @statquant I wasn't offended before, but now I am. I pointed you to our benchmarks twice which show 2 other packages that are faster in some cases on join, and you didn't even acknowledge that.

@MyKo101AB @statquant @rstatstweet 'because of the preset column names' is a strange phrase to use. Well, anyway, if you find a way to post obfuscated verbose output (many people have) then I'm happy to look. What have you got to lose? And I pointed you to Polars and cuDF already too.

@MattDowle @statquant @rstatstweet I've set appropriate keys, I've tried different data types and I'm fairly sure I've sped data.table to its limits without doing something niche & bespoke (because of the preset column names). Judging by other benchmarks, it's probably the fastest it's going to be.

@MyKo101AB @statquant @rstatstweet And so absolutely nothing useful from verbose=TRUE? Are you merging character, or factor columns, low or high cardinality, numeric, integer? You're just giving us nothing to go on.

You could try Polars and cuDF. See our benchmarks: h2oai.github.io/db-benchmark/.
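
For readers unfamiliar with the `verbose=TRUE` request, a minimal sketch of what it looks like on a join follows. The tables, column names, and sizes here are hypothetical stand-ins for the datasets described in the thread, scaled down so the example runs quickly; the real data was never posted.

```r
library(data.table)

set.seed(1)

# Hypothetical stand-ins: a larger "user" table and a small fixed lookup table
user_dt <- data.table(
  code  = sample(5000L, 1e5, replace = TRUE),
  value = rnorm(1e5)
)
internal_dt <- data.table(
  code  = 1:5000,
  label = paste0("item_", 1:5000)
)

# Column types matter for joins, so check them first
str(user_dt)
str(internal_dt)

# verbose = TRUE asks data.table to report what it does during the join
joined <- internal_dt[user_dt, on = "code", verbose = TRUE]
```

The printed diagnostics, together with the column types and cardinality, are the kind of detail being asked for here; `options(datatable.verbose = TRUE)` switches the same reporting on for the whole session.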

@MattDowle @statquant @rstatstweet I never said it was slow due to data.table. It's merging large files so it's bound to be slow. I'm sorry if I've offended you by using the word "slow" but no harm was meant. Just investigating options. Yes I've turned verbose on, obviously.

@MyKo101AB @statquant @rstatstweet That would be possible. But there are more amazing things to do before that surely. Currently the queue of PRs from other people who have quietly contributed is the priority.

@MattDowle @statquant @rstatstweet Also, is there any way to turn on a progress bar, similar to what `fread()` has, but for merging? That would be amazing!

@MyKo101AB @statquant @rstatstweet If an SQL query was slow, would you ask the same question in the same way? I hope not. You should look at the query plan, see if indexes are there and being used, etc. Have you even turned on verbose=TRUE and looked yourself?

@MattDowle @statquant @rstatstweet It's not really a query that's SO suitable. Just wondering whether data.table really is the fastest option. Other than figuring out a way to hardcode the merge and manually searching, I think data.table is the fastest option. My Python colleagues will be disappointed
