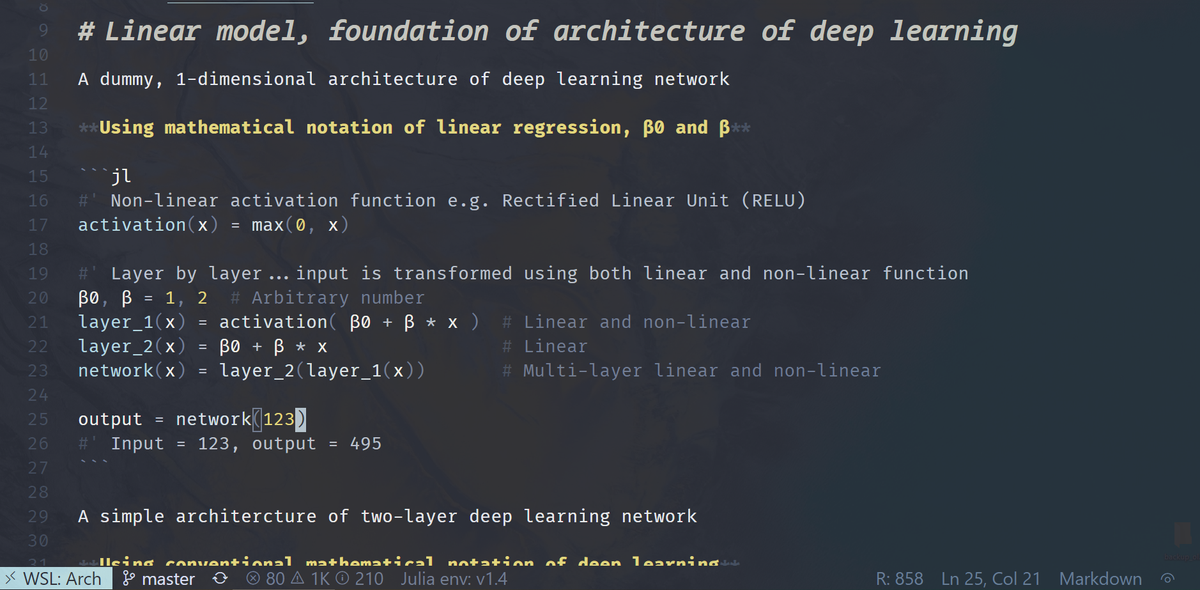

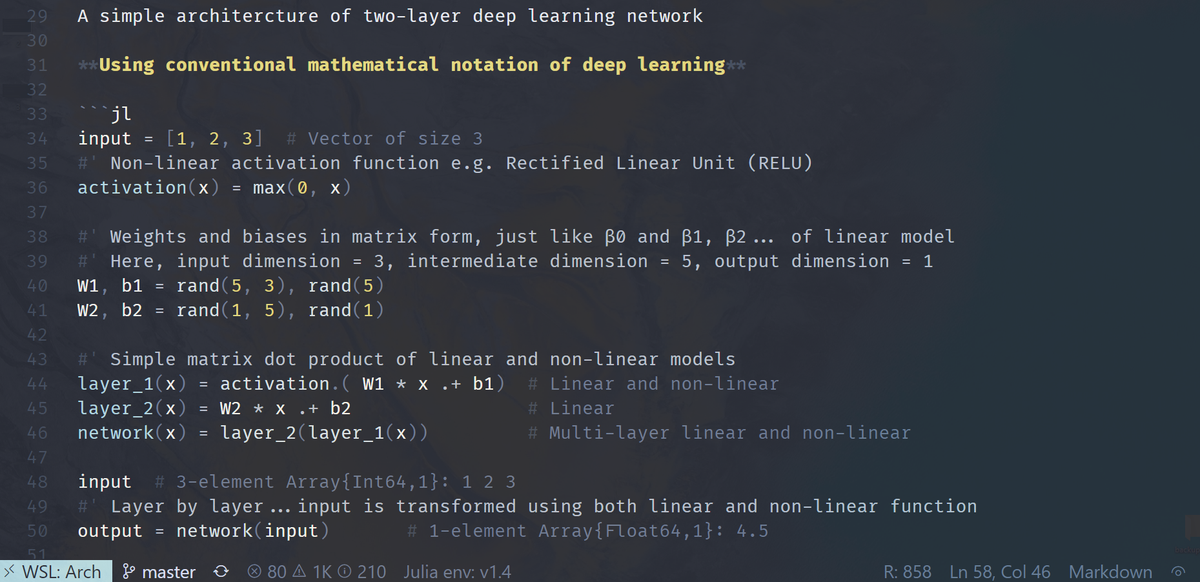

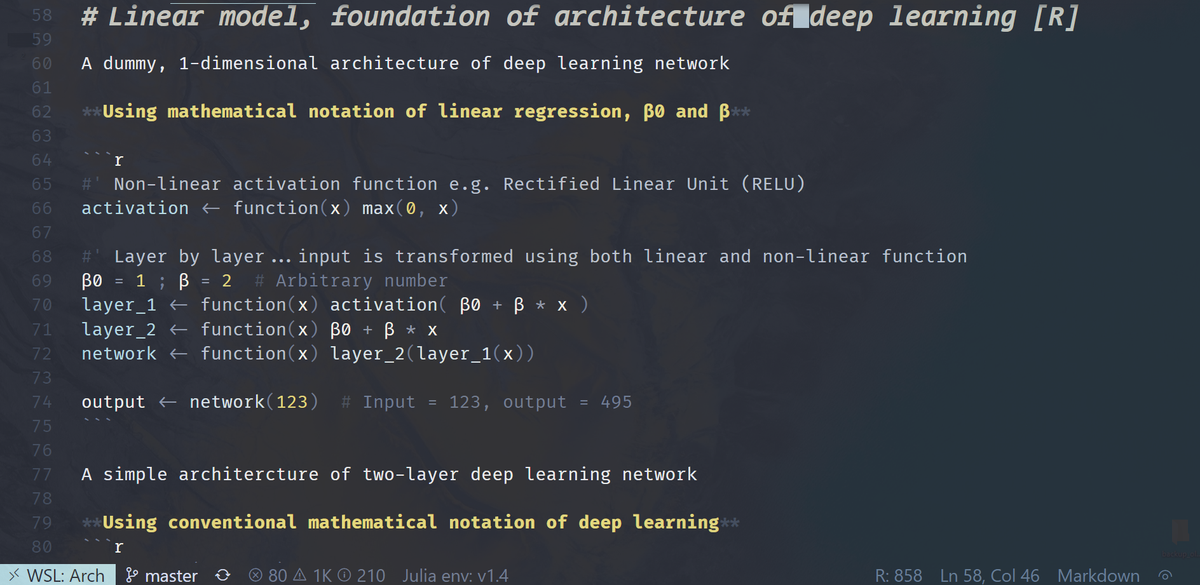

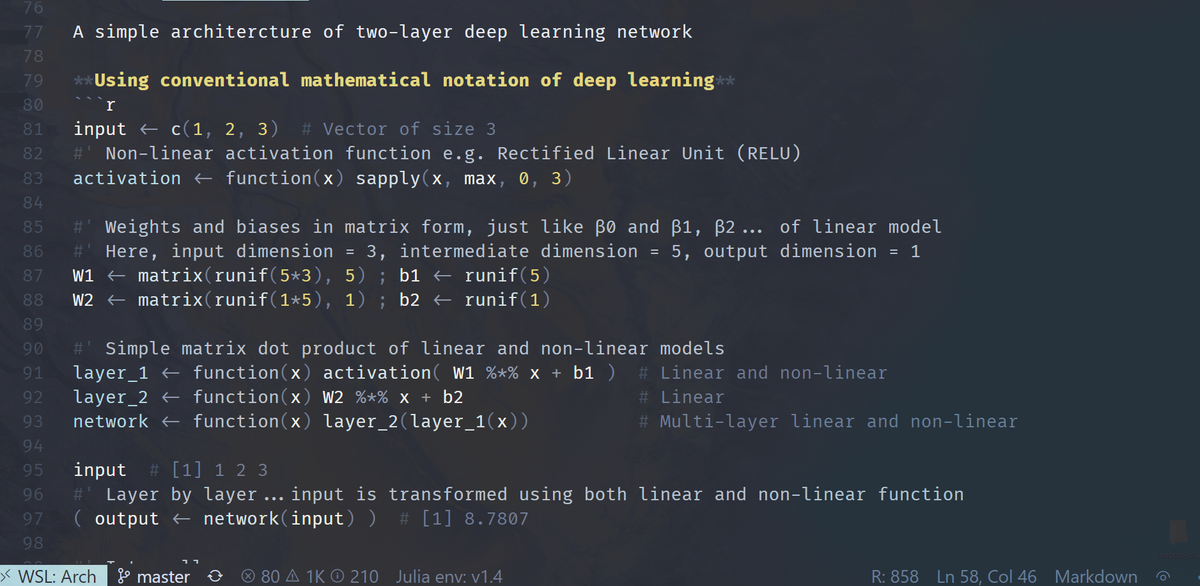

How about deep learning? Super non-linear, right? Well, as a function of the non-linear activations in the last hidden layer, IJALM. You can put lipstick on a linear model, but it's still a linear model. Fit it w/ least squares … w/ bells & whistles like dropout, SGD, & regularization. 11/
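
To make the "linear in the activations" point concrete, here's a rough sketch, not how deep nets are trained in practice. Assumptions: toy sine data, a single `tanh` hidden layer whose weights `W` and `b` are random and frozen (a real network would learn them too, typically w/ SGD), and an output layer fit by plain least squares. All names are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy 1-D regression problem (made-up data, for illustration only).
x = np.linspace(-3, 3, 200).reshape(-1, 1)
y = np.sin(x).ravel()

# Hidden layer with fixed random weights.
# Assumption: frozen here for simplicity; a real network would train these.
W = rng.normal(size=(1, 50))
b = rng.normal(size=50)
H = np.tanh(x @ W + b)  # non-linear activations, shape (200, 50)

# Output layer: linear in H. Append a column of ones for the intercept
# and solve for the coefficients by ordinary least squares.
H1 = np.column_stack([H, np.ones(len(H))])
beta, *_ = np.linalg.lstsq(H1, y, rcond=None)

y_hat = H1 @ beta  # prediction is a linear combination of the activations
mse = np.mean((y_hat - y) ** 2)
baseline = np.mean((y - y.mean()) ** 2)  # error of the constant (mean) predictor

print(f"least-squares MSE on activations: {mse:.3g}")
print(f"constant-predictor MSE:           {baseline:.3g}")
```

The fit is non-linear in `x` but linear in `H`: once the activations are computed, the last step is ordinary regression on 50 derived features plus an intercept.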