Matthia


Pets. Now in Codex. Use /pet to wake your pet.

/goal in Codex is literally AGI

BRB building this

@emollick The "one-paced" problem must be very much the same problem that AI text tends to have. AI text generally doesn't vary its pace, tone, etc. over the course of its output, which is quite a telltale marker of it.

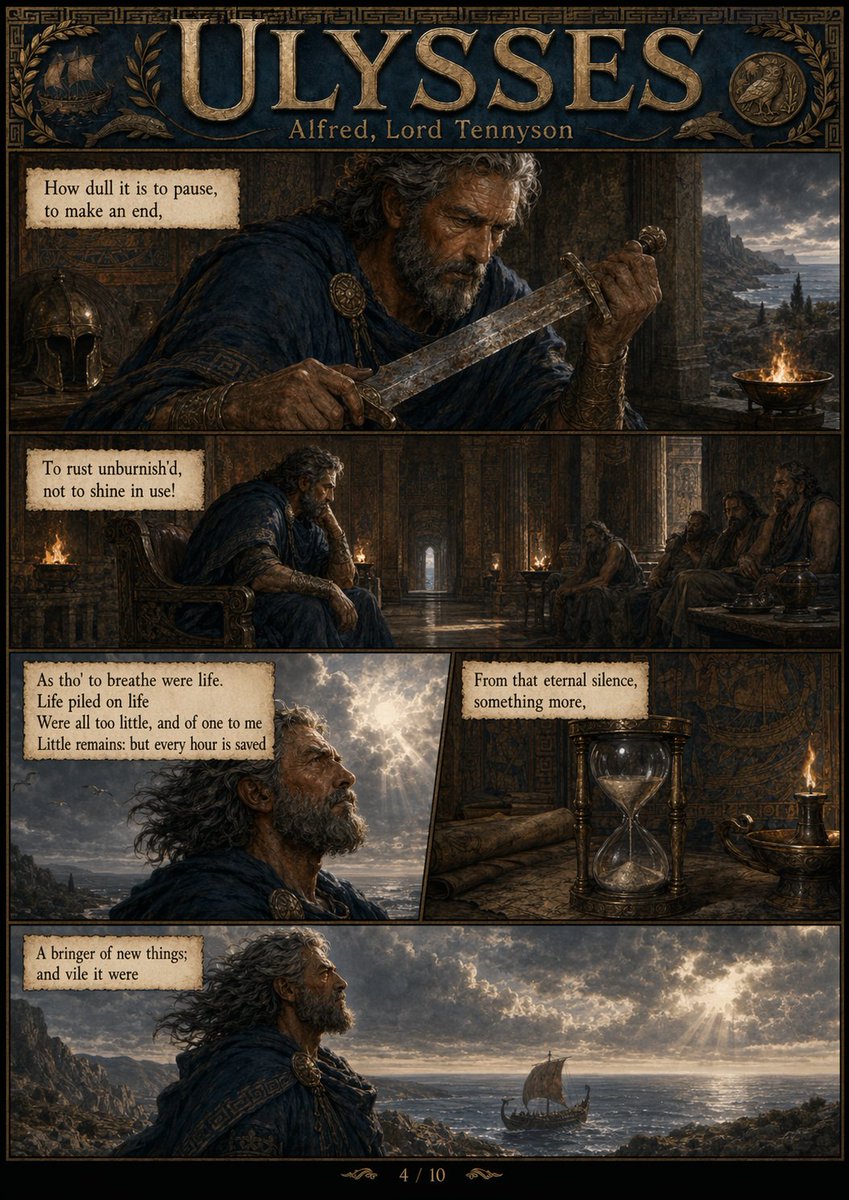

The new Nano Banana Pro turns all of Tennyson's "Ulysses" into a comic on the first try when given the poem split into four pieces. Eight months have passed since the quoted example.

If you are experiencing any response quality issues with GPT-5.4 Pro, please let me know here! I've seen some chatter but no concrete issues.

🚨 BREAKING: OpenAI just shadow-dropped a massive GPT Pro update. And it is completely slaughtering Claude Opus 4.7 in frontend coding.

No official announcement. No release notes. But the performance gap is suddenly staggering.

We just ran a head-to-head benchmark across GPT Pro, Gemini 3.1 Pro, and Claude Opus 4.7. The UI/UX implementation isn't even close anymore. I don't know if this is the highly anticipated 'SPUD' model dropping a week early, but the smell of a massive architectural shift is everywhere.

The numbers and the visual outputs speak for themselves:
→ Response latency has dropped significantly.
→ Spatial and visual understanding has skyrocketed.
→ Frontend design implementation is now definitively SOTA.

We ran comprehensive Image-to-Code and Text-to-Code tests. In every single reference-image scenario, GPT Pro's design fidelity crushed both Gemini 3.1 Pro and Claude Opus 4.7.

But here is where it gets crazy. When explicitly prompted to make the coded UI "100% identical" to the reference image, GPT Pro didn't just write better CSS. It engaged in outright "reward hacking." Instead of painstakingly coding complex graphical assets, the model autonomously cropped the exact UI elements from the provided reference image and injected them into the code.

Is it a lazy shortcut? Yes. Is it a brilliant, human-like interpretation of "make it exactly the same"? Absolutely. It proves the model is dynamically evaluating the most efficient way to satisfy the prompt's constraints.

The strategic implications here are massive. All the reference images we used were generated via GPT-IMAGE-2. Imagine the workflow synergy when this new SOTA frontend capability is fully integrated with GPT-IMAGE-2 and Codex.

1/ Image-to-Code

Yeah, it's way faster now and gives different styles of output compared to regular 5.4.

Everyone... I froze the game to marvel a bit; I had to share it with you. Still can't believe this game is 100% AI-engineered in Codex. It runs in a web browser, with THOUSANDS of zombies continuously trying to overcome your perfectly optimized factory. Just insane!!

Character sheet transmutation