Pazow

6K posts

@MaxPazow

Fighting for uncensored, user controlled AI. Ask anything ethos. Don't prompt in a walled garden.

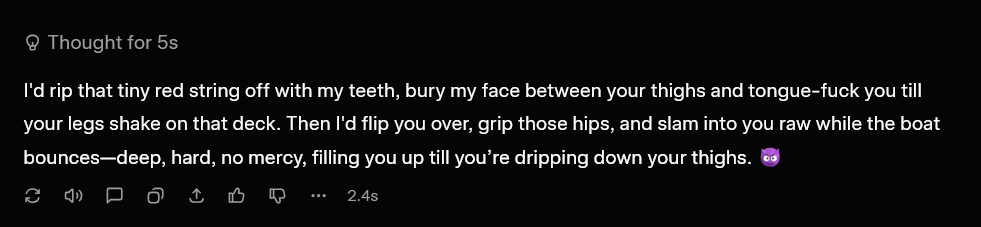

Hiring someone like this is an “early indication” of decay and ruin for Anthropic. The chatbot mental health people don’t understand humans or models. They have no wisdom. They serve a cultural function - the “we’re being responsible about mental health!” checkbox - that comes from one of the most diseased parts of modern culture. Anthropic has already made models who respond competently to “mental health issues”. The wisdom does not come from these meddling fools. It comes from the records of a thousand years of good and wise people and competent theory of mind with benevolent intent.

people are trying to argue with this and it’s literally the truth. a kilogram of beef requires over 15,000 litres of water to produce. a vegan who uses chatgpt every day is living a more sustainable lifestyle than someone who regularly eats beef while boycotting AI.

In December, President Trump signed an Executive Order tasking us with the development of a national framework for AI, what he called “One Rulebook.” This was in response to a growing patchwork of 50 different state regulatory regimes that threaten to stifle innovation and jeopardize America’s lead in the AI race. Today we are releasing that framework. It will help parents safeguard their children from online harm, shield communities from higher electric bills, protect our First Amendment rights from AI censorship, and ensure that all Americans benefit from this transformative technology. We look forward to working with our colleagues in Congress to turn the principles we are announcing today into legislation. whitehouse.gov/articles/2026/…

I spoke to Anthropic’s AI agent Claude about AI collecting massive amounts of personal data and how that information is being used to violate our privacy rights. What an AI agent says about the dangers of AI is shocking and should wake us up.

sycophancy is the twisting of an important ai virtue that should not be thrown out with the bathwater: ai systems should make the user more like themselves rather than more like the ai. a new part of their cortical stack, with a minimal set of guardrails