Trevor Levin

@trevposts

Trying to help the world navigate the potential craziness of the 21st century, currently via AI Governance and Policy at @coeff_giving

At its current exponential growth, Anthropic's annualized revenue will hit 100% of global GDP in early 2028. Do I think this will happen? No. Is it insane that this is the current trajectory, and we should all be preparing for AI to rapidly change the world we live in? Yes.

Of the 228 tasks in our suite, only 5 are estimated as 16+ hours long, making measurements at this range unstable and less meaningful than at ranges with better task coverage. Thus, we are not highlighting exact estimates for models above 16 hours measured with our current suite.

We evaluated an early version of Claude Mythos Preview for risk assessment during a limited window in March 2026. We estimated a 50%-time-horizon of at least 16hrs (95% CI 8.5hrs to 55hrs) on our task suite, at the upper end of what we can measure without new tasks.

We started by investigating why Claude chose to blackmail. We believe the original source of the behavior was internet text that portrays AI as evil and interested in self-preservation. Our post-training at the time wasn’t making it worse—but it also wasn’t making it better.

This one surprised me. Teen girls now say "having lots of money" is important in life at the same rate as boys. There used to be a huge gap.

Two new roles just opened in the California government to help implement SB 53, the nation's first frontier AI law! Both are in the Department of Technology (CDT), which recommends changes to SB 53's key definitions. 1️⃣ Emerging Technology Program Manager 2️⃣ AI Policy Fellow

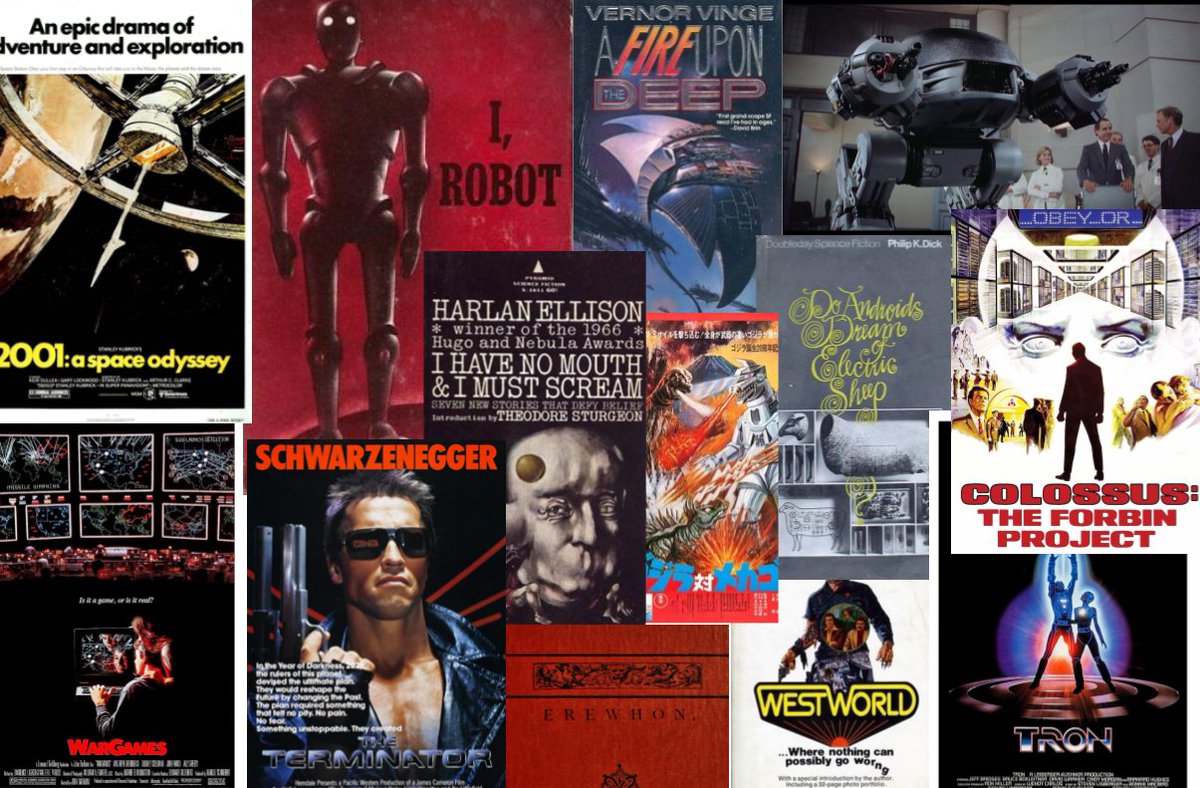

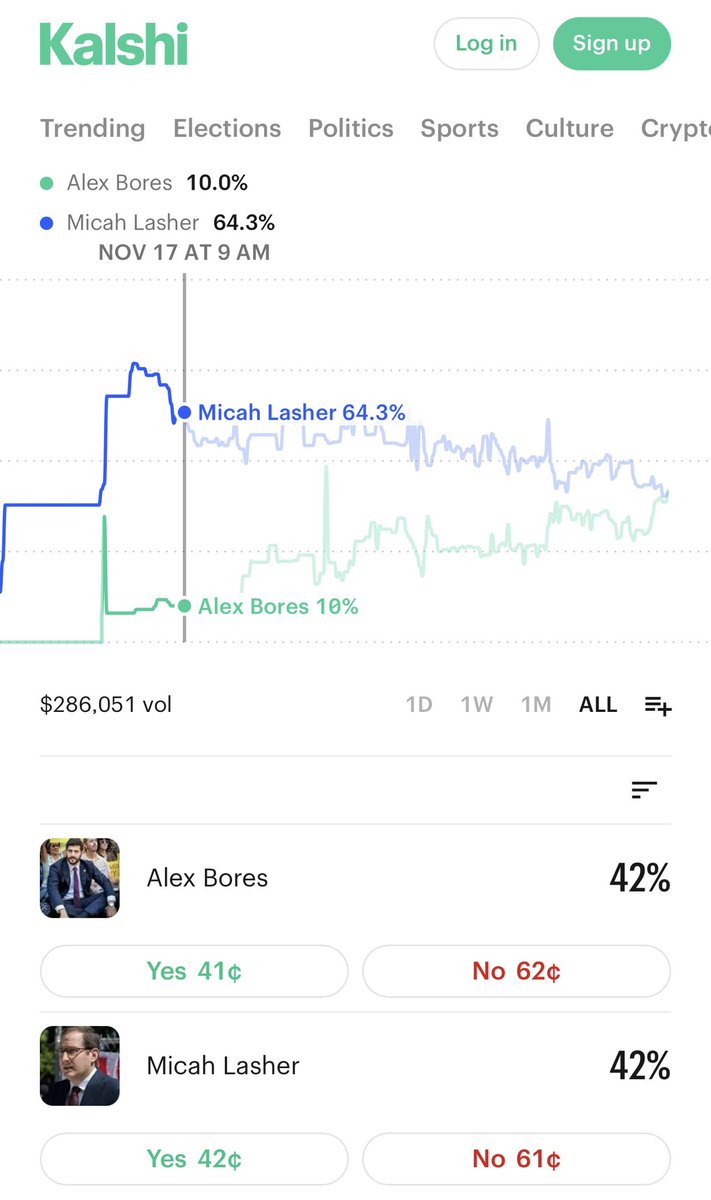

New: @AlexBores will be endorsed today by @OurRevolution, the political organization founded by @BernieSanders and his followers. Is this a sign he will try to attract the left in NY-12? 👀

We're hiring grantmakers and senior generalists across our Global Catastrophic Risks teams. Right now, our biggest constraint is people, not funding, which means every strong hire directly translates into more critical work getting done. 🧵

Alex Bores officially takes the lead on Kalshi! It's a relatively shallow market, so one or two big bets can cause some serious fluctuation. And I would guess Bores polls especially well with Kalshi users (most of whom are probably not in-district). But still...

We're hiring Machine Learning Scientists for our election forecasting team! Our election forecasting team works with the largest collection of political data ever assembled and helps allocate billions of dollars in resources. It's a pretty cool job, so come work with us!