“If your $500K engineer isn’t burning at least $250K in tokens, something is wrong.”

John C

32.5K posts

@MeJohnC

Will you fight? or will you perish like a dog? AI Automation Engineer

Jensen Huang is loving the new Dell Pro Max with GB300 at NVIDIA GTC.💙 They asked me to sign it, but I already did 😉

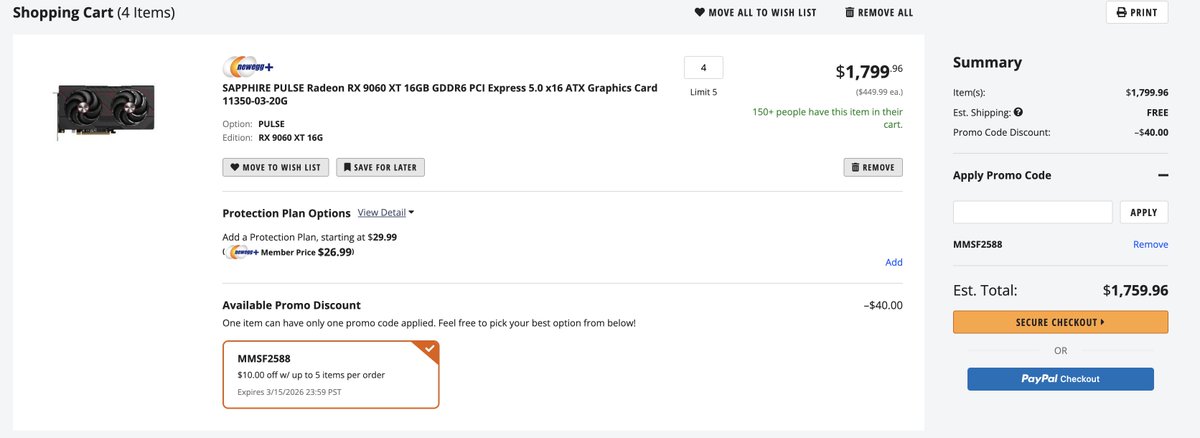

Inspired by auto-research, I made hermes-agent make itself better, infinitely (sort of). I gave hermes-agent a rented 5090 and Qwen3.5:4b and told it to make the best research-agent for hermes. The workflow proposed was:

- Run benchmark on model
- Add a QLoRA or finetune
- Load model into system memory
- Repeat

And so on. It ended up making a model that outperformed Qwen3.5:27b (and almost doubled its own performance) in DeepPlanning (17.8 to 31.2) and related benchmarks. I'm sure that with more time (this was done in 7 hours) this model could exceed 31.2 and keep iterating. This is a submission to the @NousResearch @Teknium hackathon; amazing product they have here. Below is a graphic of the improvement per finetune (image made with gpt-image-1.5).
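The benchmark, finetune, reload, repeat loop described in this post could be sketched roughly as follows. This is a minimal illustration, not the poster's actual code: `self_improve`, `benchmark`, `finetune`, and `relative_gain` are hypothetical names, the load step is assumed to happen inside the supplied callables, and keeping a candidate only when its score improves is an assumption the post does not state.

```python
from typing import Callable, List, Tuple


def self_improve(
    model: str,
    benchmark: Callable[[str], float],
    finetune: Callable[[str, int], str],
    rounds: int,
) -> Tuple[str, List[float]]:
    """Benchmark a model, fine-tune it, re-benchmark, and repeat.

    `benchmark` and `finetune` are placeholders supplied by the caller
    (hypothetical; e.g. a benchmark harness and a QLoRA training job).
    A candidate replaces the current best only if it scores higher,
    which is an assumption of this sketch.
    """
    best_model = model
    best_score = benchmark(model)
    history = [best_score]  # best score after each round
    for round_index in range(1, rounds + 1):
        candidate = finetune(best_model, round_index)
        score = benchmark(candidate)
        if score > best_score:
            best_model, best_score = candidate, score
        history.append(best_score)
    return best_model, history


def relative_gain(before: float, after: float) -> float:
    """Ratio of the final score to the starting score."""
    return after / before
```

Applied to the scores quoted in the post, `relative_gain(17.8, 31.2)` is about 1.75, which matches the "almost doubling" description.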

Damn, they actually passed it? Unlicensed operation of 3D printers and CNCs is now a felony in Washington? I get that it's fashionable to hate manufacturing in some places but how many kids and FIRST robotics teams are going to end up with criminal records because of this?

@MeJohnC This genuinely made our day. Thank you. That's the whole point: cut through the noise, show the math, protect the people who are just getting started. Appreciate you.

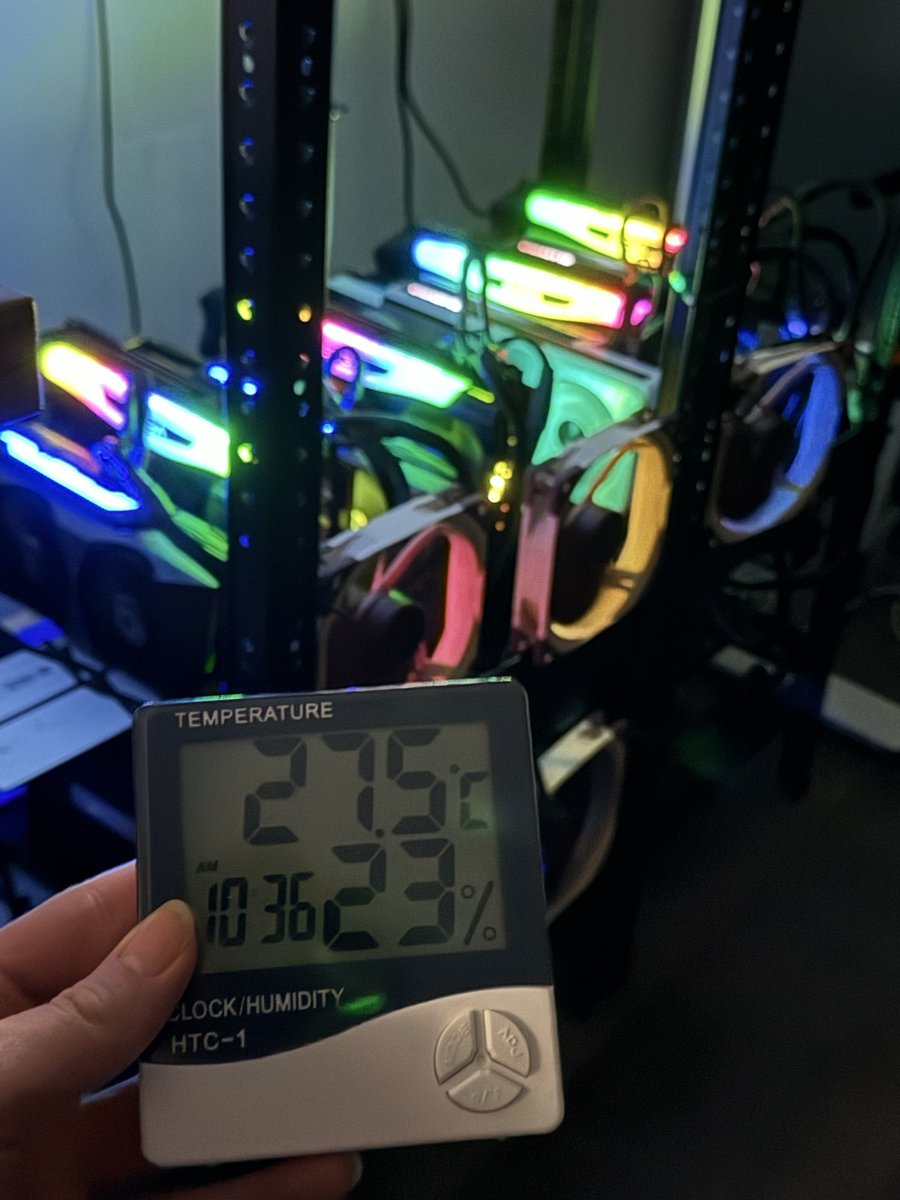

I am not one to interact with a stream, like ever, but I found Kai late last night while looking into Hermes agent stuff, and this stream ended up being a fun day full of learning, experimentation and lots of great convos. The AI community opens a lot of doors when one puts themselves out there. Kai is a real one!!