Michael Sutton

@michaelsuttonil

Computer science, graph theory, parallelism, consensus; taking Kaspa to the next level

To me the bottleneck on this "legit defi usage" is the aggregate bizdev talent in the Kaspa ecosystem. I guess we'll be able to assess how we score on that once Toccata launches with the necessary primitives and features. (And since we can't know for sure, and while others are making hopefully successful efforts to attract existing defi activity from other chains, I am attempting a parallel trajectory to bootstrap coordination markets on Kaspa. Hopefully at least one of these efforts succeeds.)

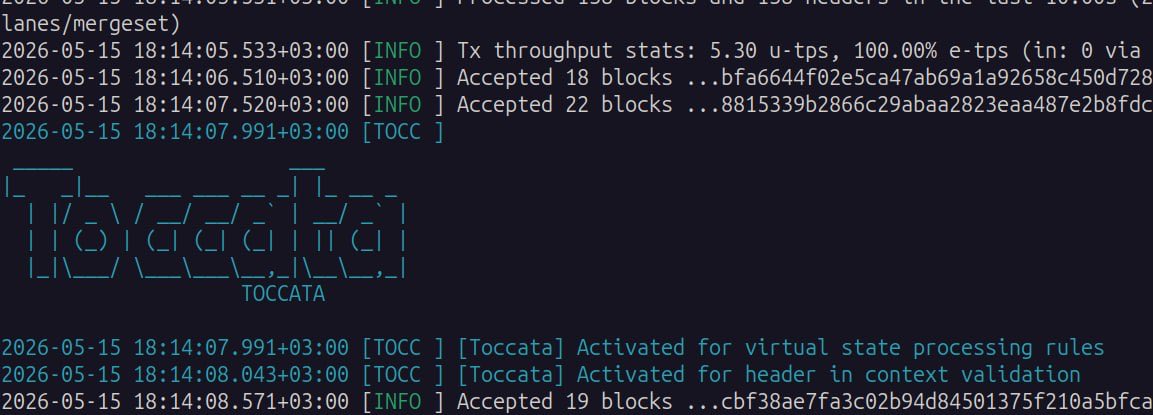

I did not follow the full convo, but a few clarifications came to mind from the parts I did read.

1. @maxibitcat’s idea of “ordering this type of transaction only at merge time, using some randomness coming from the whole round” obviously requires a consensus change. Btw, I’d apply it to all txs, in order to reduce MEV for based apps etc. as well.

2. There is a ladder here:
   - Step 1 (what I referred to in the main post): a non-consensus change that can be implemented after Toccata activation. Accept the tx to the mempool without resolving the shared-state UTXO (call this abstract entity X). When building the block template, resolve it to the most up-to-date UTXO of X. This requires indexing the UTXO set by covenant ID. Con: parallel miners can resolve different txs to the same UTXO, so some of them will not count (effectively serializing access to X to one tx per round).
   - Step 2: a future consensus change that resolves X at block merge time according to the final mergeset order. As I’ve told bitcat, this would break fundamental assumptions of the UTXO model and I highly doubt we can do this. E.g., it breaks the link between a tx ID and the UTXOs the tx spends (in graph terms, it breaks the hashable edge structure of the tx DAG).
   - Step 3 (independent of step 2): randomize the per-round order and don’t give the merging block full control. This is relevant to any MEV-hardness claim about Kaspa.

3. Interestingly, step 1 does not allow easy front-running and sandwiching. That’s because Kaspa rules forbid chained txs within the same block, so a miner can’t include both his own tx and the attacked tx in his block (the attacked tx would have to spend the output of the miner’s earlier tx as its input).
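A minimal Python sketch of step 1, the front-running argument in point 3, and one possible reading of step 3, under a deliberately simplified model. Assumptions: an outpoint is a `(txid, index)` pair, `covenant_index` is a hypothetical map from covenant ID to the current UTXO of X, and blocks in a round are processed in a given merge order where the first spender of an outpoint wins. All names (`PendingTx`, `build_template`, `has_chained_txs`, `randomized_merge_order`, `accepted_in_round`) are illustrative only, not rusty-kaspa APIs, and the seeded hash ordering for step 3 is just one way to express "don't give the merging block full control", not a proposed design.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Illustrative model only; none of these names are rusty-kaspa APIs.
# Hypothetical simplified outpoint: (id of the tx that created the output, output index).
Outpoint = Tuple[str, int]


@dataclass(frozen=True)
class PendingTx:
    """Mempool entry whose shared-state input X is left unresolved (step 1)."""
    txid: str
    covenant_id: str  # identifies the shared-state entity X the tx wants to spend


@dataclass(frozen=True)
class ResolvedTx:
    """Tx after the miner bound it to a concrete UTXO of X in its block template."""
    txid: str
    covenant_id: str
    spends: Outpoint


def build_template(mempool: List[PendingTx],
                   covenant_index: Dict[str, Outpoint]) -> List[ResolvedTx]:
    """Resolve each pending tx to the latest UTXO of its covenant at template time.

    At most one tx per covenant goes into a block: a second one would have to
    spend the output of the first, i.e. be a chained tx, which a block may not contain.
    """
    template: List[ResolvedTx] = []
    seen = set()
    for tx in mempool:
        if tx.covenant_id in seen or tx.covenant_id not in covenant_index:
            continue  # X already used in this block, or unknown covenant
        template.append(ResolvedTx(tx.txid, tx.covenant_id, covenant_index[tx.covenant_id]))
        seen.add(tx.covenant_id)
    return template


def has_chained_txs(block: List[ResolvedTx]) -> bool:
    """True if some tx spends an output created by another tx in the same block."""
    txids = {tx.txid for tx in block}
    return any(tx.spends[0] in txids for tx in block)


def randomized_merge_order(block_ids: List[str], round_seed: bytes) -> List[str]:
    """Step 3 sketch: order a round's blocks by a hash of an assumed shared random seed,
    so the merging block does not pick the order itself."""
    return sorted(block_ids, key=lambda b: hashlib.sha256(round_seed + b.encode()).digest())


def accepted_in_round(blocks_in_merge_order: List[List[ResolvedTx]]) -> List[str]:
    """Hypothetical simplification of conflict handling across parallel blocks:
    a tx spending an already-spent outpoint is dropped; the first in merge order counts."""
    spent = set()
    accepted: List[str] = []
    for block in blocks_in_merge_order:
        for tx in block:
            if tx.spends in spent:
                continue  # conflicts with an earlier tx in the merge order
            spent.add(tx.spends)
            accepted.append(tx.txid)
    return accepted


# Two miners see the same mempool in different orders; both bind to X's current UTXO.
index = {"X": ("x0", 0)}
t1 = build_template([PendingTx("a", "X"), PendingTx("b", "X")], index)
t2 = build_template([PendingTx("b", "X"), PendingTx("a", "X")], index)
print(accepted_in_round([t1, t2]))  # ['a']
```

In this toy model both parallel templates bind their tx to the same UTXO of X, so only one of them survives the round, which is the "one per round" serialization described in step 1. A block holding an attacker tx followed by a victim tx that spends its output would be flagged by `has_chained_txs`, which is why the sandwich in point 3 cannot be assembled inside a single block.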

@_fnal @michaelsuttonil Yes, this is what I had in mind and proposed in the R&D chat. It's not in the next update though; it's still just an idea up for discussion. It would allow native L1 DeFi, which would be pretty cool imo.