Minae Kwon


Tool use is now available in beta to all customers in the Anthropic Messages API, enabling Claude to interact with external tools using structured outputs.

New (2h13m 😅) lecture: "Let's build the GPT Tokenizer". Tokenizers are a completely separate stage of the LLM pipeline: they have their own training set and training algorithm (Byte Pair Encoding), and after training they implement two functions: encode() from strings to tokens, and decode() back from tokens to strings. In this lecture we build from scratch the Tokenizer used in the GPT series from OpenAI.
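To make the encode()/decode() description above concrete, here is a minimal byte-level Byte Pair Encoding sketch. It is not the code from the lecture and not the actual GPT tokenizer (which, among other things, splits text with a regex before merging); the names `train_bpe` and `merge` are hypothetical helpers chosen for illustration, and only `encode` and `decode` mirror the two functions named in the post.

```python
from collections import Counter

def merge(ids, pair, new_id):
    """Replace every non-overlapping occurrence of `pair` in `ids` with `new_id`."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out

def train_bpe(text, num_merges):
    """Learn up to `num_merges` merges over the UTF-8 bytes of `text`.

    Returns a dict {(left_id, right_id): new_id} in the order merges were learned.
    """
    ids = list(text.encode("utf-8"))
    merges = {}
    for step in range(num_merges):
        pairs = Counter(zip(ids, ids[1:]))
        if not pairs:
            break  # nothing left to merge
        pair = max(pairs, key=pairs.get)  # most frequent adjacent pair
        new_id = 256 + step               # ids 0..255 are reserved for raw bytes
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
    return merges

def encode(text, merges):
    """String -> token ids: start from bytes, then replay merges in learned order."""
    ids = list(text.encode("utf-8"))
    for pair, new_id in merges.items():
        ids = merge(ids, pair, new_id)
    return ids

def decode(ids, merges):
    """Token ids -> string: expand each id back to its bytes, then decode UTF-8."""
    vocab = {i: bytes([i]) for i in range(256)}
    for (left, right), new_id in merges.items():
        vocab[new_id] = vocab[left] + vocab[right]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")
```

Training on a small corpus and then checking that `decode(encode(s, merges), merges) == s` is a quick sanity test; the encoded sequence should also be no longer than the raw byte sequence.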

Diffusion models are slow to sample from. Many methods propose to sample using *fewer* steps, but this can hurt sample quality. We introduce ParaDiGMS, a new method to speed up diffusion models by 2-4x while using the *same* number of steps! arxiv.org/abs/2305.16317

Today we’re joined by @ShreyaR to discuss LLM safety for production applications! We explore hallucinations, RAG, evaluation & tooling for LLMs as well as Guardrails, an open-source project enforcing correctness in LMs. 🎧/🎥 Check out the episode at twimlai.com/go/647

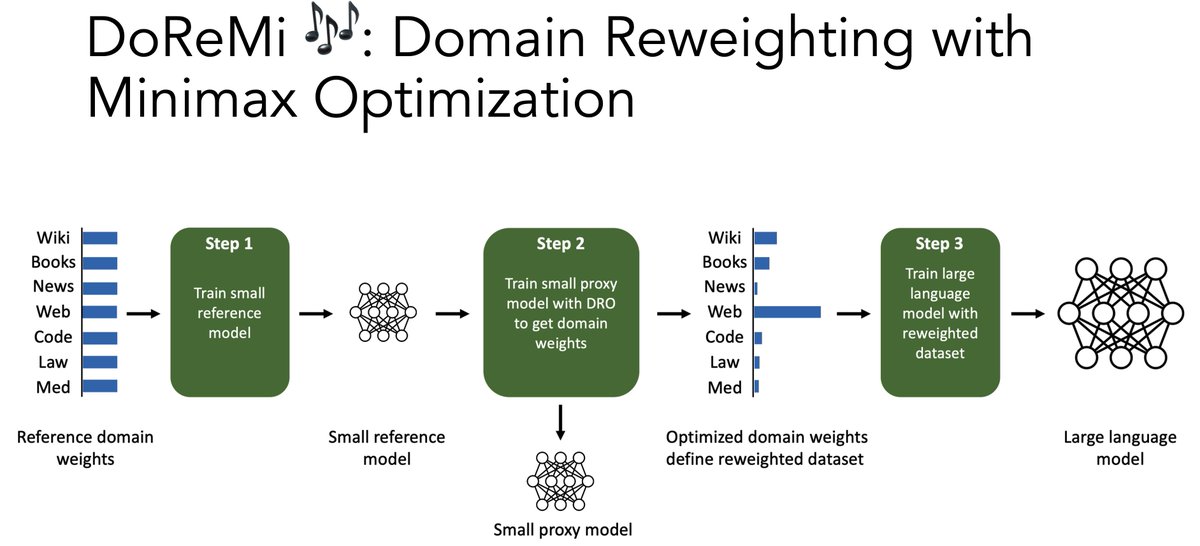

Should LMs train on more books, news, or web data? Introducing DoReMi🎶, which optimizes the data mixture with a small 280M model. Our data mixture makes 8B Pile models train 2.6x faster, get +6.5% few-shot acc, and get lower pplx on *all* domains! 🧵⬇️ arxiv.org/abs/2305.10429