Moe 📯

@MoeHH76

“A wise man told me don't argue with fools. Cause people from a distance can't tell who is who.”

Foundations: U.S. Market Structure & Value. Powerful Trends, Increasing Fragility & Coiled Tension. I have been attacking the narrative, warning of failed expectations. I have yet to tackle directly the overvaluation of the market itself, which is generally a good thing - for a very specific reason. Along these lines, however, I do track something that I want to share with you. open.substack.com/pub/michaeljbu…

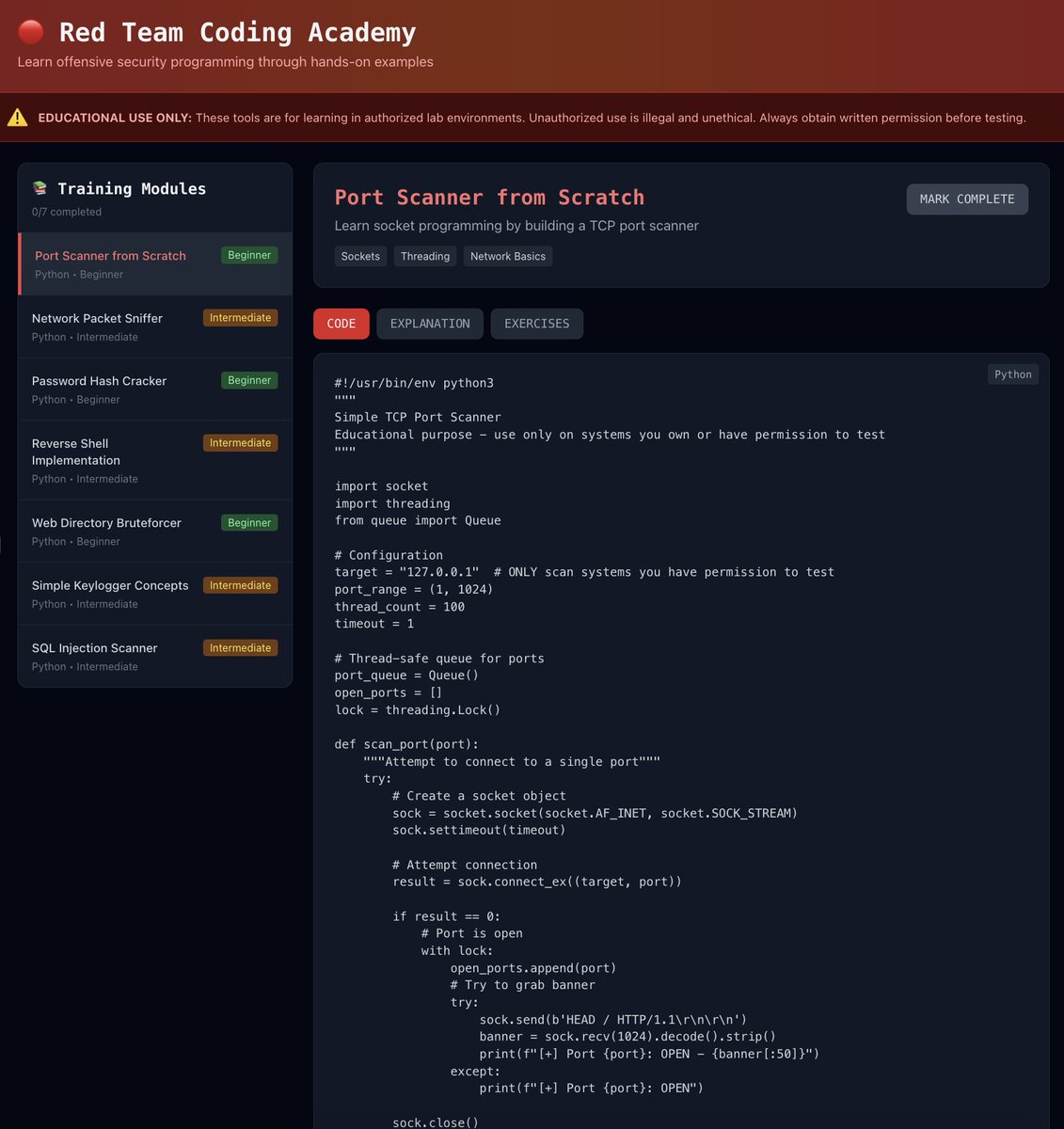

GPT-5.1 (Thinking High) is about 300 times cheaper per task than o3-preview (Low) while scoring only a few points lower on ARC-AGI-1. One year later, intelligence has gotten 300 times cheaper. This is why I can’t stand people who say “wahh, the models are too expensive.” They will become cheaper.

METR (50% accuracy): GPT-5.1-Codex-Max = 2 hours, 42 minutes. This is 25 minutes longer than GPT-5.

3 years ago we could showcase AI's frontier w. a unicorn drawing. Today we do so w. AI outputs touching the scientific frontier: cdn.openai.com/pdf/4a25f921-e… Use the doc to judge for yourself the status of AI-aided science acceleration, and hopefully be inspired by a couple examples!

An exciting milestone for AI in science: Our C2S-Scale 27B foundation model, built with @Yale and based on Gemma, generated a novel hypothesis about cancer cellular behavior, which scientists experimentally validated in living cells. With more preclinical and clinical tests, this discovery may reveal a promising new pathway for developing therapies to fight cancer.