Poetica retweeted

This model has been #1 trending for 3 weeks now.

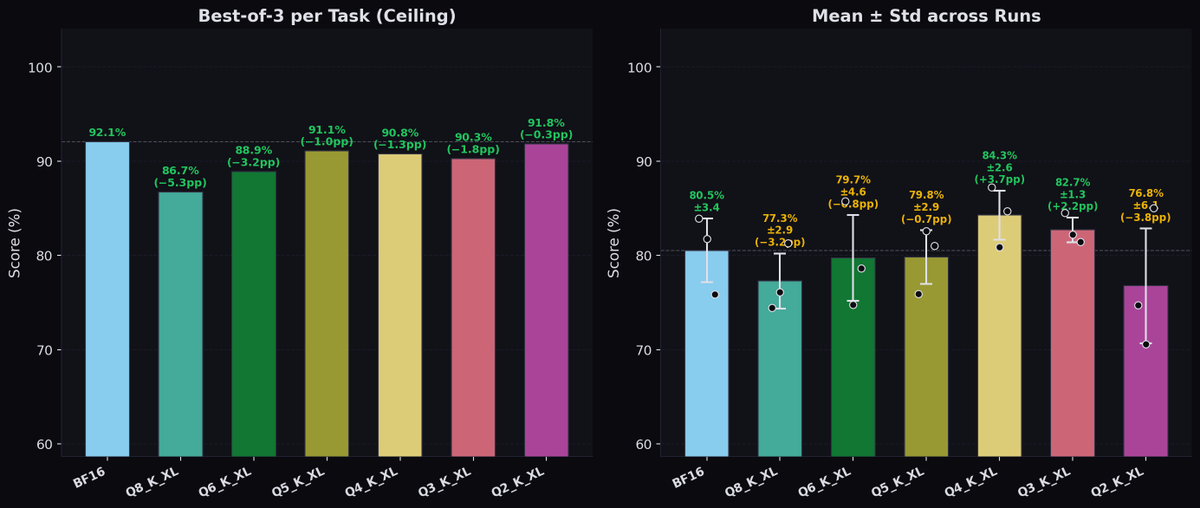

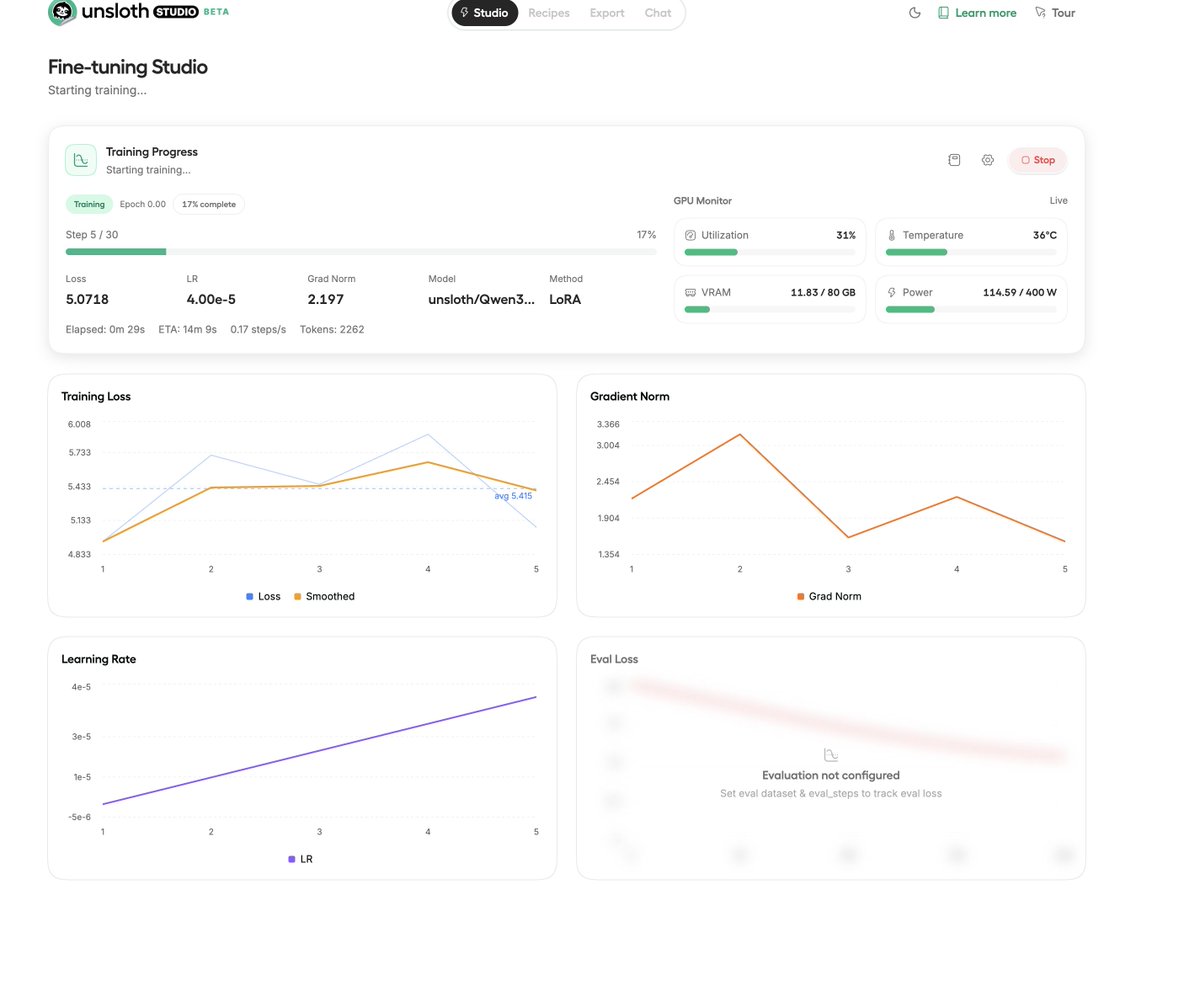

It's Qwen3.5-27B fine-tuned on reasoning data distilled from Claude-4.6-Opus. Trained with Unsloth.

Runs locally in 16GB of memory at 4-bit or 32GB at 8-bit.
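Those figures line up with a back-of-the-envelope estimate: weight memory is roughly parameter count × bits per parameter ÷ 8. The sketch below assumes the nominal 27B count from the model name and counts weights only. Real quantized files often average slightly more than 4 or 8 bits per weight, and the KV cache and runtime overhead come on top, which is what the remaining headroom in 16GB / 32GB covers.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Rough memory for model weights only, in decimal GB (1e9 bytes).

    Ignores KV cache, activations, and quantization metadata (scales etc.).
    """
    return num_params * bits_per_param / 8 / 1e9


# Assumption: nominal parameter count taken from the "27B" in the model name.
PARAMS = 27e9

for bits in (4, 8):
    print(f"{bits}-bit: ~{weight_memory_gb(PARAMS, bits):.1f} GB of weights")
```

At 4-bit that comes to about 13.5 GB of weights, and at 8-bit about 27 GB.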

Model: huggingface.co/Jackrong/Qwen3…
