Nikolas Göbel

@NikolasGoebel

Making mental models executable @RelationalAI

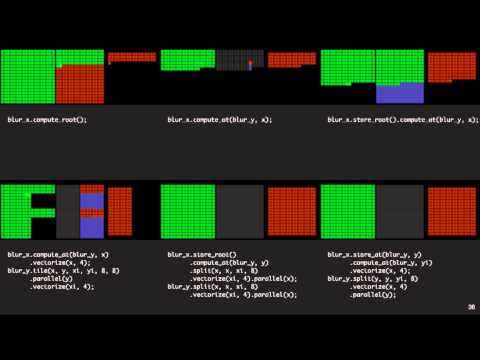

@cedar_db is incredibly cool and more people should know about it. They’re a team of PhDs in Munich building a new relational database on top of almost 10 years of academic research. It crushes existing benchmarks and maybe (finally?) gets us to the HTAP grail.

The core idea is that existing RDBMSes like MySQL and Postgres were built more than 30 years ago, on assumptions about hardware constraints that just aren’t true anymore. These ecosystems have evolved admirably but ultimately… it’s a database. It’s built not to change very much. Here are a few of the ways CedarDB is rethinking every element of the database:

1) A better query optimizer

In the last 30 years we’ve made a lot of progress on how to optimize SQL queries, to the point where an optimized query can easily outperform a poorly optimized one by a wide margin. But few query optimization improvements have made the leap from research into today’s databases. CedarDB did a few things on this front:
- Implemented the unnesting algorithm developed by Thomas Neumann (one of the leaders of the Umbra research project CedarDB came from): an improvement of more than 1000x
- Developed a novel approach to join ordering using adaptive optimization that can handle 5K+ relations
- Created a statistics subsystem that tells the optimizer things traditional databases can’t

2) What if your database was actually a compiler?

CedarDB doesn’t interpret queries; instead, it generates code. For every SQL query a user writes, CedarDB processes and optimizes it, then generates machine code that the CPU can execute directly. This has been a holy grail for a while, and they implemented it via a custom low-level language that is cheap to translate into machine code with a custom assembler.

Another way CedarDB improves performance is Adaptive Query Execution: it starts executing each query immediately with a “quick and dirty” version while working on better versions in the background.
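The compile-versus-interpret idea and adaptive execution fit together naturally. What follows is a toy Python sketch, not CedarDB’s implementation: generated Python source stands in for the machine code CedarDB emits through its own low-level language and assembler. The expression-tuple format, the function names, and `morsel_size` are all hypothetical. The query (a filter plus a summed projection) starts running immediately on a tree-walking interpreter while a background thread compiles a specialized function. Once compilation finishes, the remaining chunks switch to the compiled path.

```python
import operator
from concurrent.futures import ThreadPoolExecutor

# Hypothetical toy expression tree: ("col", i), ("const", v), or (op, lhs, rhs).
OPS = {"add": operator.add, "mul": operator.mul, "gt": operator.gt,
       "and": lambda a, b: a and b}
SYMS = {"add": "+", "mul": "*", "gt": ">", "and": "and"}

def interpret(expr, row):
    """Slow path: walk the expression tree again for every single row."""
    kind = expr[0]
    if kind == "col":
        return row[expr[1]]
    if kind == "const":
        return expr[1]
    return OPS[kind](interpret(expr[1], row), interpret(expr[2], row))

def to_source(expr):
    """Translate the tree into an equivalent Python expression string."""
    kind = expr[0]
    if kind == "col":
        return f"row[{expr[1]}]"
    if kind == "const":
        return repr(expr[1])
    return f"({to_source(expr[1])} {SYMS[kind]} {to_source(expr[2])})"

def compile_query(pred, proj):
    """Fast path: generate one specialized function for the whole query."""
    src = (
        "def run(rows):\n"
        "    total = 0\n"
        "    for row in rows:\n"
        f"        if {to_source(pred)}:\n"
        f"            total += {to_source(proj)}\n"
        "    return total\n"
    )
    namespace = {}
    exec(compile(src, "<query>", "exec"), namespace)
    return namespace["run"]

def run_interpreted(pred, proj, rows):
    return sum(interpret(proj, r) for r in rows if interpret(pred, r))

def execute_adaptive(pred, proj, rows, morsel_size=1000):
    """Start interpreting immediately; switch to compiled code once it is ready."""
    total = 0
    with ThreadPoolExecutor(max_workers=1) as pool:
        future = pool.submit(compile_query, pred, proj)  # compile in the background
        for start in range(0, len(rows), morsel_size):
            chunk = rows[start:start + morsel_size]
            if future.done():
                total += future.result()(chunk)          # compiled path
            else:
                total += run_interpreted(pred, proj, chunk)  # quick-and-dirty path
    return total
```

For example, `SELECT SUM(a * 2) WHERE b > 3` becomes `pred = ("gt", ("col", 1), ("const", 3))` and `proj = ("mul", ("col", 0), ("const", 2))`. Both paths must return the same answer. Only the point at which execution switches over depends on timing.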
3) Taking advantage of all cores / Amdahl’s law

Distributing work fairly across all available cores is notoriously difficult, and the CedarDB team would argue that most databases underutilize their hardware. Their clever approach to this problem is called morsel-driven parallelism. CedarDB breaks queries down into segments: pipelines of self-contained operations. Data is then divided into “morsels” per segment: small input chunks of roughly 100K tuples each. Worker threads pick up morsels dynamically, so a core that finishes early simply grabs the next morsel instead of sitting idle. You can read more in the original paper here: db.in.tum.de/~leis/papers/m…

4) Rethinking the buffer manager

Modern systems come equipped with massive amounts of RAM; there’s actually much more “room at the club” than database developers initially assumed. So the idea behind CedarDB’s revamped buffer manager is that you can (and should) expect variance not just in data access patterns, but also in storage speed and location, page sizes and data organization, and the memory hierarchy. CedarDB’s buffer manager is designed from the ground up to work in a heavily multi-threaded environment. It decentralizes buffer management with pointer swizzling: each pointer (memory address) knows whether its data is in memory or on disk, eliminating the global lock that throttles traditional buffer managers.

5) Building a database for change

Databases are built not to change. It’s exactly this stability that gives each generation the confidence to build their apps (no matter how different they are) on systems like Postgres. You know what you’re getting. But there’s also a clear downside to this rigidity. CedarDB’s storage class system uses pluggable interfaces, so adding a new storage type doesn’t require rewriting other components. For example, if CXL becomes the go-to storage interface at some point, you don’t need to write a whole new component; you just need another endpoint for the buffer manager.

Anyway, these are just a few of the ideas they’re bringing to the table.
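Points 4 and 5 can be combined in one small picture. This is a hypothetical, single-threaded Python sketch, not CedarDB’s code. A “swip” holds either a direct reference to a resident page (swizzled) or just a page id (unswizzled). Following a swizzled reference needs no lookup in a global page table. The buffer manager talks to storage only through an abstract `StorageBackend` interface, so a new medium (say, a CXL device) would be one more subclass. All class and method names here are made up for illustration. Real implementations also need latching, eviction policies, and page-size handling, which are omitted.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass

class StorageBackend(ABC):
    """Hypothetical pluggable interface: the buffer manager only sees these calls."""
    @abstractmethod
    def read_page(self, page_id: int) -> bytearray: ...

    @abstractmethod
    def write_page(self, page_id: int, data: bytearray) -> None: ...

class InMemoryDisk(StorageBackend):
    """Stand-in for an SSD; another medium would implement the same two calls."""
    def __init__(self):
        self.pages: dict[int, bytes] = {}
        self.reads = 0

    def read_page(self, page_id):
        self.reads += 1
        return bytearray(self.pages.get(page_id, bytes(16)))  # zero page if unknown

    def write_page(self, page_id, data):
        self.pages[page_id] = bytes(data)

@dataclass
class Page:
    page_id: int
    data: bytearray

class Swip:
    """Holds either a resident Page (swizzled) or only its page id (unswizzled)."""
    def __init__(self, page_id: int):
        self.target: Page | int = page_id

    def is_swizzled(self) -> bool:
        return isinstance(self.target, Page)

class BufferManager:
    def __init__(self, backend: StorageBackend):
        self.backend = backend

    def fix(self, swip: Swip) -> Page:
        # Hot path: follow the direct reference, no global table lookup needed.
        if swip.is_swizzled():
            return swip.target
        # Cold path: load the page, then overwrite the id with a direct reference.
        page = Page(swip.target, self.backend.read_page(swip.target))
        swip.target = page
        return page

    def evict(self, swip: Swip) -> None:
        # Unswizzle: write the page back and replace the reference with its id.
        if swip.is_swizzled():
            page = swip.target
            self.backend.write_page(page.page_id, page.data)
            swip.target = page.page_id
```

The second `fix` on the same swip returns the resident page without touching the backend. This is the property that lets hot accesses skip the shared lookup structure.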
Maybe it’s because they’re in Germany, maybe it’s because they’re just really humble, but more people should know about this team!! Check out the full post here: amplifypartners.com/blog-posts/the…
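The morsel-driven scheduling from point 3 can be sketched in a few lines of Python. This is a rough illustration, not the paper’s or CedarDB’s implementation. Workers repeatedly claim the next morsel from a shared cursor, run the whole pipeline (here just filter, then sum) on it, and keep a private partial result that is merged at the end. The function name and parameters are hypothetical; the default `morsel_size` follows the roughly 100K tuples mentioned above. Python’s GIL prevents real CPU parallelism, so this shows only the structure of the scheduling. The paper’s NUMA-aware dispatcher is not modeled.

```python
import threading

def morsel_driven_sum(data, predicate, num_workers=4, morsel_size=100_000):
    """Sum values satisfying predicate; workers pull morsels dynamically."""
    next_start = 0
    lock = threading.Lock()
    partials = [0] * num_workers  # one private partial result per worker

    def grab_morsel():
        # Claim the next morsel atomically; returns its start offset.
        nonlocal next_start
        with lock:
            start = next_start
            next_start += morsel_size
        return start

    def worker(wid):
        local = 0
        while True:
            start = grab_morsel()
            if start >= len(data):
                break  # no morsels left: this worker is done
            # Run the whole pipeline (filter -> aggregate) on one morsel.
            for value in data[start:start + morsel_size]:
                if predicate(value):
                    local += value
        partials[wid] = local

    threads = [threading.Thread(target=worker, args=(w,)) for w in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(partials)  # merge per-worker partial aggregates
```

Because morsels are claimed on demand rather than pre-assigned, a worker that hits cheap morsels simply processes more of them, which is the load-balancing property the approach relies on.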

Blog post: On the Coming Industrialisation of Exploit Generation with LLMs
sean.heelan.io/2026/01/18/on-…

TL;DR: I ran an experiment with agents based on GPT-5.2 and Opus 4.5 to generate exploits for a zero-day QuickJS bug. They’re pretty good at it.

Code: github.com/SeanHeelan/ana…