Nomad Analyst

1.1K posts

@NomadAnalyst

Roderick McKinley, CFA, FRM https://t.co/ReVv3K7te0

🚨BREAKING: Someone just open-sourced a headless browser that runs 11x faster than Chrome and uses 9x less memory. It's called Lightpanda and it's built from scratch specifically for AI agents, scraping, and automation. Not a Chromium fork. Not a hack. A completely new browser written in Zig. Here's why this changes everything for AI builders: ↓

In case you Londoners are short of events in the coming weeks: unicornmafia.ai/e

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
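The loop described in the post above (run a fixed-budget experiment, keep the change only if validation loss drops) can be sketched roughly as follows. This is a toy illustration, not code from the repo: `train_and_evaluate`, `propose_change`, and `research_loop` are hypothetical names, the "training run" is a made-up analytic function instead of a 5-minute GPU job, and the random proposer stands in for an AI agent editing the script. The `history` list plays the role of the accumulated git commits.

```python
import random


def train_and_evaluate(settings):
    """Hypothetical stand-in for one fixed-budget training run.

    Returns a fake validation loss that is lowest at lr=0.01, depth=8.
    """
    return (settings["lr"] - 0.01) ** 2 * 1e4 + (settings["depth"] - 8) ** 2 * 0.01


def propose_change(settings, rng):
    """Hypothetical stand-in for the agent editing the training script."""
    candidate = dict(settings)
    if rng.random() < 0.5:
        factor = rng.choice([0.5, 0.8, 1.25, 2.0])
        candidate["lr"] = max(1e-4, candidate["lr"] * factor)
    else:
        candidate["depth"] = max(1, candidate["depth"] + rng.choice([-1, 1]))
    return candidate


def research_loop(initial, n_runs, seed=0):
    """Keep a candidate only when it lowers the validation loss."""
    rng = random.Random(seed)
    best = dict(initial)
    best_loss = train_and_evaluate(best)
    history = [best_loss]  # one entry per accepted change (the "commits")
    for _ in range(n_runs):
        candidate = propose_change(best, rng)
        loss = train_and_evaluate(candidate)
        if loss < best_loss:  # accept strictly better settings only
            best, best_loss = candidate, loss
            history.append(loss)
    return best, best_loss, history


if __name__ == "__main__":
    best, best_loss, history = research_loop({"lr": 0.1, "depth": 2}, n_runs=200)
    print(f"accepted {len(history) - 1} changes, final loss {best_loss:.4f}")
```

Because only strict improvements are accepted, the recorded losses can only go down over time, which matches the post's description of commits accumulating as better settings are found.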

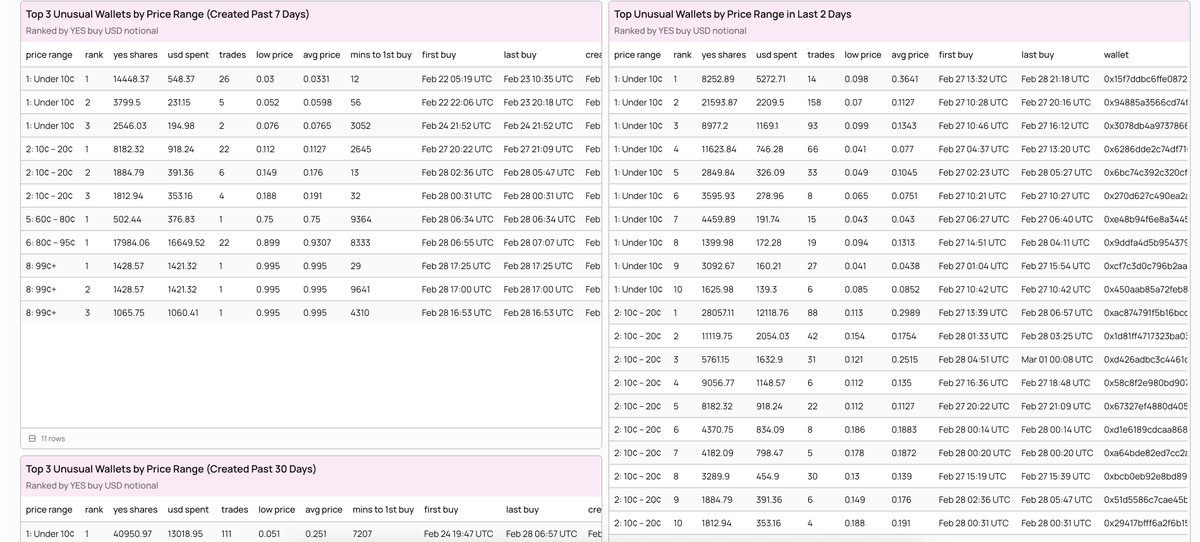

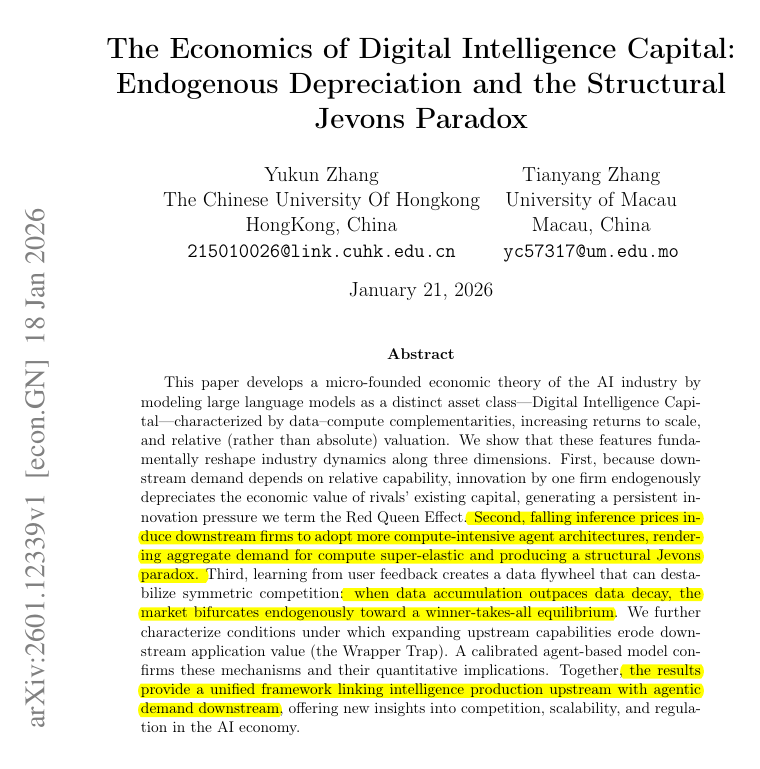

Citadel Securities published this graph showing a strange phenomenon. Job postings for software engineers are actually seeing a massive spike.

Classic example of the Jevons paradox. When AI makes coding cheaper, companies may actually need a lot more software engineers, not fewer. When software is cheaper to build, companies naturally want to build a lot more of it. Businesses are now putting software into industries and tools where it was simply too expensive before.

---

Chart from citadelsecurities.com/news-and-insights/2026-global-intelligence-crisis/