Obie Fernandez

14.9K posts

@obie

CTO @zardotapp ✤ Partner at https://t.co/vR0SkHRGPa ✤ Bestselling Author ✤ DJ/Producer (aka Kyberian, KNBI)

Worldwide · Joined December 2006

1.6K Following · 12.2K Followers

Pinned Tweet

My book is now available in all formats on Amazon!! Readers are singing its praises like “Best book about AI I’ve ever read.” Check it out yourself now at amazon.com/dp/B0DN9KK4X7 #ai

An app built on blockchain tech, but:

- It doesn't mention crypto

- It doesn't show blockchain deposits

- It doesn't call a dollar with a T or a C

- It doesn't ask for wallet signature (SIWE)

- It doesn't force you to save 12 words

- It doesn't need a gas token

- It doesn't promote a TGE

Who is building this?

OH: "He fucking sings too!??"

Yeah, man... forever the one and only @obie 😎

soundcloud.com/obie/kyberian-…

@obie If ADHD has taught me anything, it's that "some day" doesn't exist. Only now or never :-P

@obie What about the new nano-textured display on a brand spanking new MBP M5 Max with 128GB of RAM and a 4TB hdd?

@Jacobsklug Hey I'm in Barcelona this week and next! Would love to come and cowork. Can give you a peek at how we're building a fully automated fintech at @zardotapp

@jvrsanch I’m currently looking for super senior full stack Ruby on Rails product engineers at @zardotapp and can pay US salary. Preference for candidates in Spain 🇪🇸 since I’m planning to relocate there very soon. (BCN most likely)

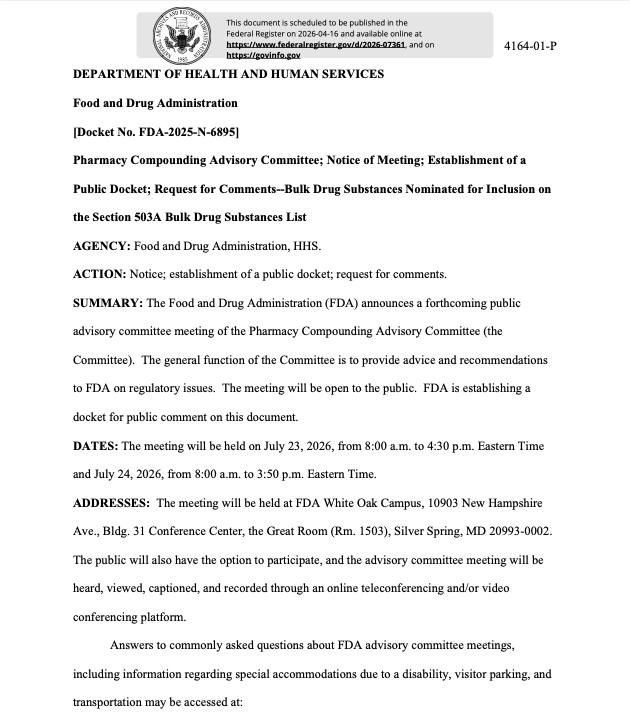

A VP of Engineering in 🇪🇸 earns the same as an entry-level engineer in 🇺🇸: ~120k

It's sad, but that's where we are

Can I earn 120k while living in Spain?

Of course! But how?

By working for a US/global company from Spain.

I did it, and it's not black magic.

~120k is the MINIMUM you get paid abroad

And you can earn much more if you're good

When they ask you in the interview what salary you expect, people name what counts as a good salary in Spain (30-40k)

And they laugh inside, because they had a 140k budget for the role

There are many reasons Spain is so uncompetitive on pay

One of them seems to be that companies only aim at the national market or at emerging markets

And that doesn't bring in enough to pay globally competitive salaries

"But big tech is global." Sure, but they're not stupid, and they already have HR structures set up in Spain

They understand how things are and take advantage of it. The same roles in the US pay 3x

🇺🇸 startups/scaleups are the BEST arbitrage you can make

- Eager to hire good people (which Spain has plenty of)

- No time to optimize costs the way big tech does

- And most importantly, THEY HAVE MONEY!

@elwatto is a good example of market-rate salaries

@exp8fellowship with @GuliMoreno is helping a lot of young people see the world that's out there

At @rebolthq we're looking for good people in Spain, both remote and willing to move to San Francisco

Don't settle for 30-50k, you can get much more!

Source: levels.fyi below

Borja Perez Ⓜ️ @borjaperfra

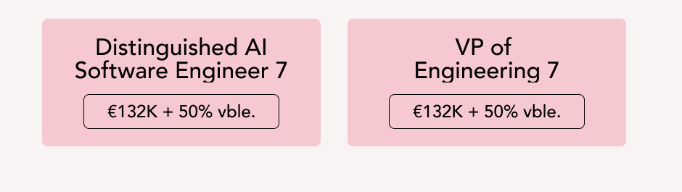

Curious about peptides? Here’s an informed take about recent developments.

Max Marchione @maxmarchione

I just claimed my spot for Superpower peptides: real, research-backed protocols built for humans. Lock in yours with my link: superpower.com/peptides?ref=o…

@obie Nice, protocol-only clients are what MCP needs. Curious if you’ve tested it against both stdio and HTTP servers with auth in the wild?

Releasing Manceps -- a Ruby client for the Model Context Protocol (MCP).

Persistent connections, built-in auth, stdio + HTTP transports, full 2025-11-25 spec support.

No LLM coupling. Pure protocol client.

gem install manceps

github.com/zarpay/manceps
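
For readers new to MCP, here is a minimal sketch of what a stdio-transport client writes during the opening handshake, using only Ruby's standard `json` library. It does not use or describe the Manceps API. The module name `McpStdioSketch`, its methods, and the client name and version are hypothetical. The sketch assumes the spec's stdio framing, where each message is one JSON-RPC 2.0 object per line with no embedded newlines. It also assumes the protocol version string matches the `2025-11-25` spec date in the announcement.

```ruby
require "json"

# Hypothetical illustration only; this is NOT the Manceps API.
# Builds the raw MCP handshake messages a stdio client would send.
module McpStdioSketch
  # Assumed to match the "2025-11-25" spec revision named in the release tweet.
  PROTOCOL_VERSION = "2025-11-25"

  # JSON-RPC 2.0 request that opens an MCP session.
  def self.initialize_request(id:, client_name:, client_version:)
    {
      jsonrpc: "2.0",
      id: id,
      method: "initialize",
      params: {
        protocolVersion: PROTOCOL_VERSION,
        capabilities: {}, # this sketch advertises no optional client features
        clientInfo: { name: client_name, version: client_version }
      }
    }
  end

  # Notification (no id, so no response expected) sent after the
  # server has answered the initialize request.
  def self.initialized_notification
    { jsonrpc: "2.0", method: "notifications/initialized" }
  end

  # stdio framing: compact JSON (newlines inside strings are escaped)
  # followed by a single terminating newline.
  def self.frame(message)
    JSON.generate(message) + "\n"
  end

  # Parses one line read from the server's stdout into a Hash with string keys.
  def self.parse_line(line)
    JSON.parse(line.chomp)
  end
end

if $PROGRAM_NAME == __FILE__
  request = McpStdioSketch.initialize_request(id: 1, client_name: "demo-client", client_version: "0.1.0")
  print McpStdioSketch.frame(request)
end
```

A real client would write these lines to the server process's stdin (for example via Ruby's `Open3.popen2`). It would then read the server's `initialize` result from stdout and send the `notifications/initialized` line only after that. The HTTP transport and built-in auth that Manceps advertises are out of scope for this sketch.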

@dailyspacefact @drmikehart Any hints towards where to get these deals?

@frombroke2bull @SecKennedy @grok @grok is this development or Ted to bring quality up and costs down?

@SecKennedy @grok what exactly will the timeline look like? If they get approved, when will they be available to buy legally?

Today, we took long-overdue action to restore science, accountability, and the rule of law.

In September 2023, the Biden FDA pushed a number of peptides into Category 2 — “Bulk Drug Substances that Raise Significant Safety Risks” — driving a dangerous black market that puts Americans at risk.

Now, after nominators withdrew 12 peptides, the FDA will remove them from Category 2 and will bring them to PCAC at its next two meetings, beginning in July—where independent experts will rigorously evaluate each substance on its scientific merits using full clinical, pharmacological, and safety evidence.

• BPC-157

• Thymosin beta-4 fragment (LKKTETQ)

• Epitalon

• GHK-Cu (injectable)

• MOTS-c

• DSIP (Emideltide)

• Dihexa Acetate

• Ibutamoren Mesylate

• Melanotan II

• KPV

• Semax (heptapeptide)

• Cathelicidin LL-37

This action begins to restore regulated access and will immediately begin shifting demand away from the black market.

We will follow the science, enforce the law, and deliver the clarity patients, providers, and pharmacies deserve.
