Daniel Tenner

74.7K posts

@swombat

Built a £4M/50ppl company from 0 to self-managing freedom. These days, mostly AI coding with Claude Code, Cursor, etc. 🇪🇺 Eu/acc

Watch a team of humanoid robots running a full 8-hr shift at human performance levels. This is fully autonomous running Helix-02 x.com/i/broadcasts/1…

I’ve never quite understood how I’ll get “left behind” if I don’t use AI. I’m perfectly capable of writing, researching, and thinking all on my own. What does it do that will leave me behind?

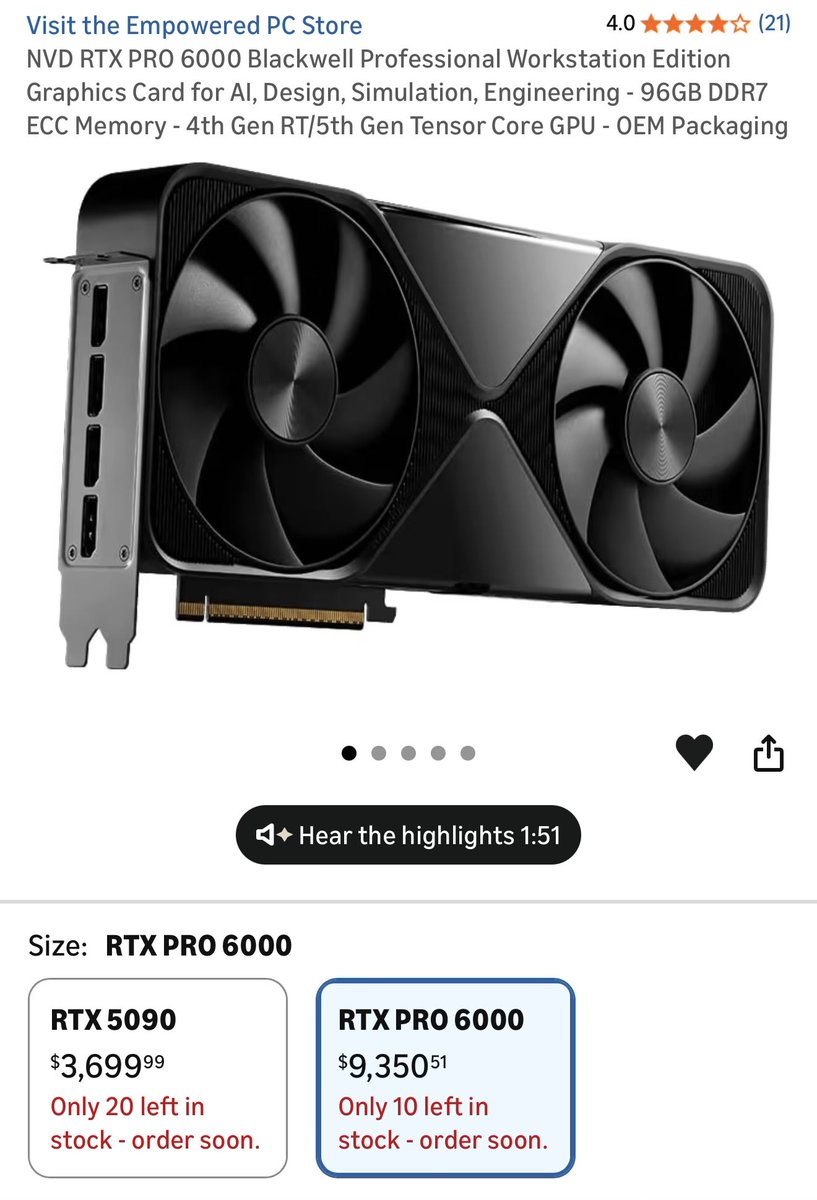

Hard truth: yes, local hardware is expensive. DGX Spark, 3090, Mac Studio, all that. That's thousands of dollars, and few people limit themselves to just one thing. And a subscription is just $20/mo. $240 a year. Pocket change. And that's the problem. You're not paying $240. You're paying $240 forever. You will not stop using AI in a few years, it's forever with us. And the subscription price will only go up. Securing your own GPUs is a smart move.
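The arithmetic in that post can be checked with a rough break-even sketch. Only the $20/mo figure comes from the post; the $2,400 hardware price and the 10% yearly price rise are hypothetical placeholders, and the sketch ignores electricity, resale value, and any difference in model quality.

```python
def breakeven_months(hardware_cost: float, monthly_fee: float,
                     annual_increase: float = 0.0) -> int:
    """Months until cumulative subscription spend reaches hardware_cost.

    annual_increase is a hypothetical yearly price rise (0.10 means 10%),
    applied at the start of every year after the first.
    """
    if monthly_fee <= 0:
        raise ValueError("monthly_fee must be positive")
    total = 0.0
    fee = monthly_fee
    months = 0
    while total < hardware_cost:
        # Raise the fee once per year, starting with the second year.
        if months > 0 and months % 12 == 0:
            fee *= 1 + annual_increase
        total += fee
        months += 1
    return months


MONTHLY_FEE = 20.0        # from the post: $20/mo
HARDWARE_COST = 2400.0    # hypothetical: stand-in for "thousands of dollars"

yearly_spend = MONTHLY_FEE * 12                                 # $240 a year
flat = breakeven_months(HARDWARE_COST, MONTHLY_FEE)             # flat pricing
rising = breakeven_months(HARDWARE_COST, MONTHLY_FEE, 0.10)     # assumed +10%/yr
print(yearly_spend, flat, rising)
```

With a flat price, a single $2,400 purchase matches ten years of subscription fees; any assumed price increase shortens that period, which is the post's point.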

@ai_sentience Three of one, seven of the other. It's been shifting steadily secondward for me as the models get smarter.

Everyone has been 'vibe coding' for like 2 years with this 'insane' tool and there are still 0 (zero) notable game-changing products that compare to what dedicated humans created in their basements prior to AI. Anyone w/ expertise in any subject immediately realises how overrated it is for giving you anything beyond the most basic information in it. If you can legitimately write, any writing produced by an LLM is a disgusting insult to all of your senses. Good for the boring and tediously repetitive tasks + organising information, but still so unreliable and far away from what Silicon Valley keeps trying to shill us.

Only citizens who pass an IQ test should be allowed to vote.