Ge Ya (Olga) Luo

22 posts

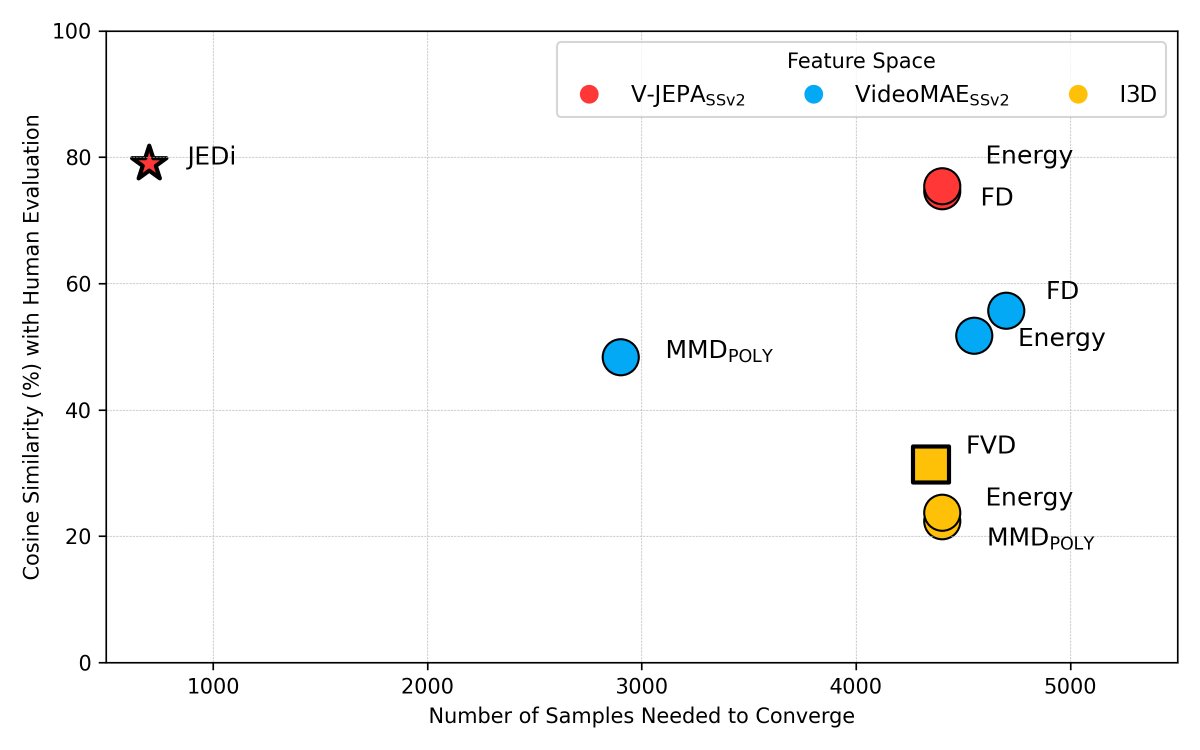

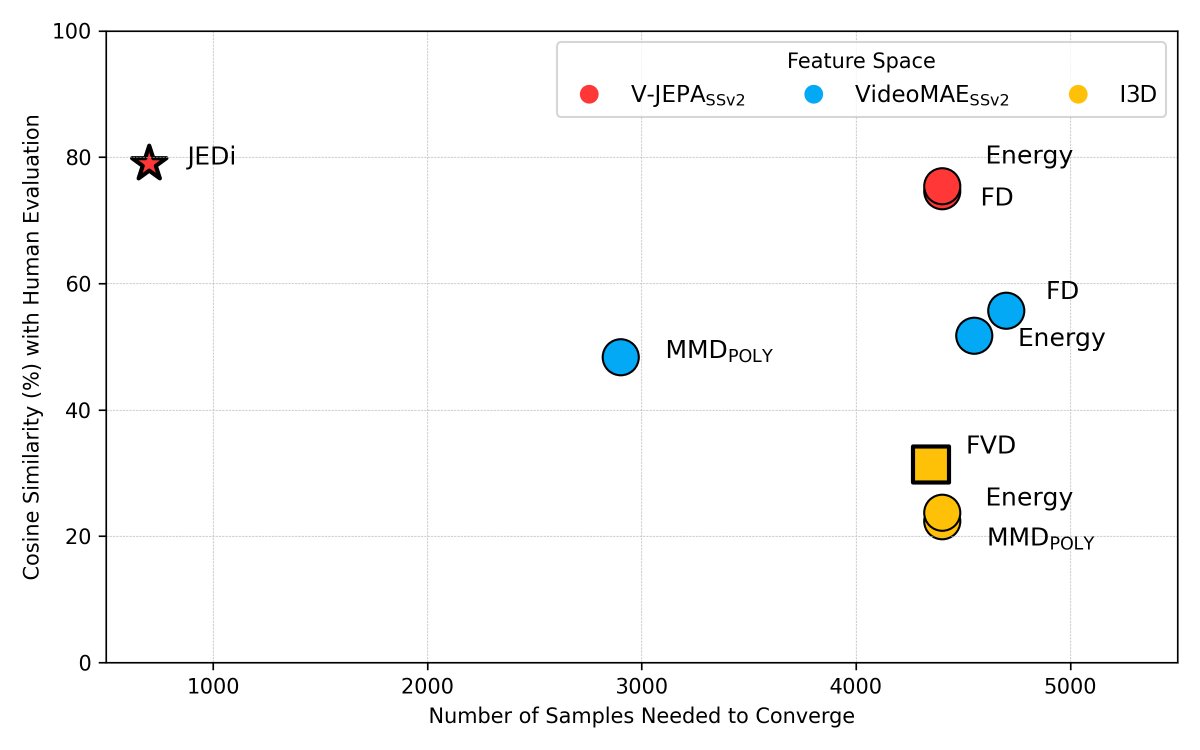

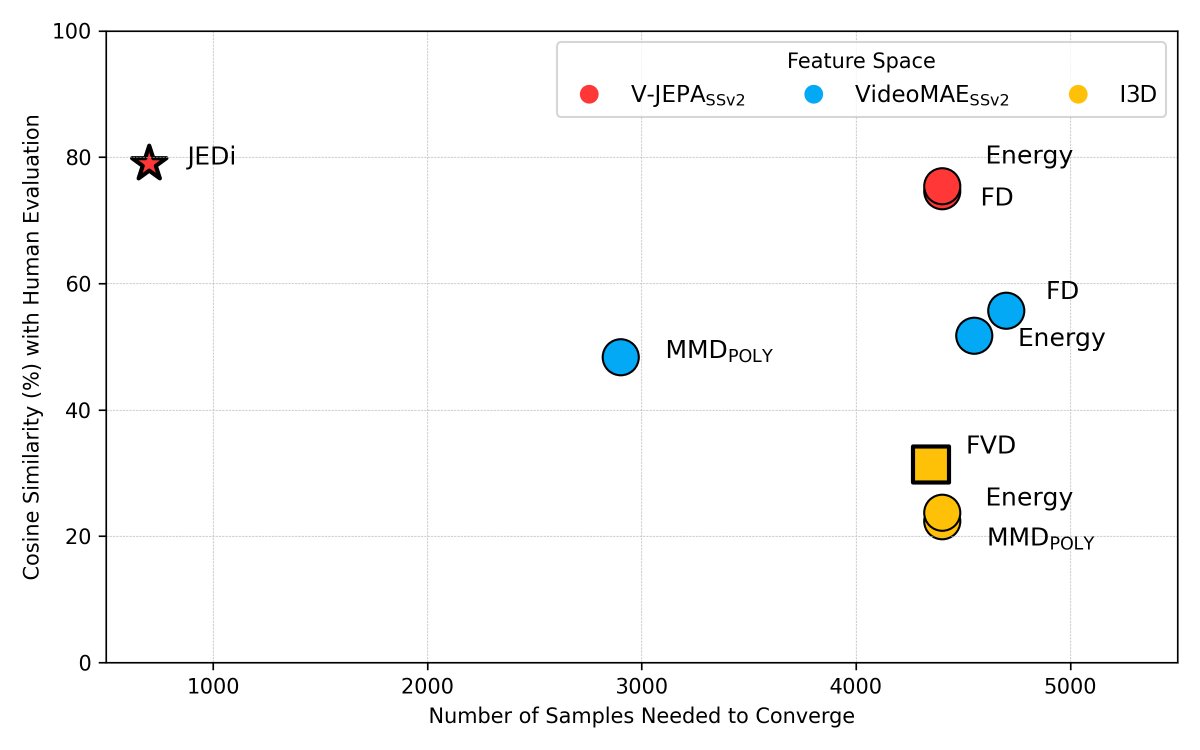

Though video generative models have made impressive progress, their automatic evaluation metrics are still lagging behind! Glad to see analysis and advances in video generation evaluation.

📷 Meet our student community! Interested in joining Mila? Our annual supervision request process for admission in the fall of 2025 is starting on October 15, 2024. More information here mila.quebec/en/prospective…

Did you miss the recent Auroras? No problem! ✨🎆 Super excited to share AURORA, a *general* image editing model + high-quality data that improves where prev work fails the most: performing *action or movement* edits, i.e. a kind of world model setup. Insights/Details ⬇️

So, this is what we were up to for a while :) Building SOTA foundation models for media -- text-to-video, video editing, personalized videos, video-to-audio. One of the most exciting projects I got to tech lead during my time at Meta!