Saba

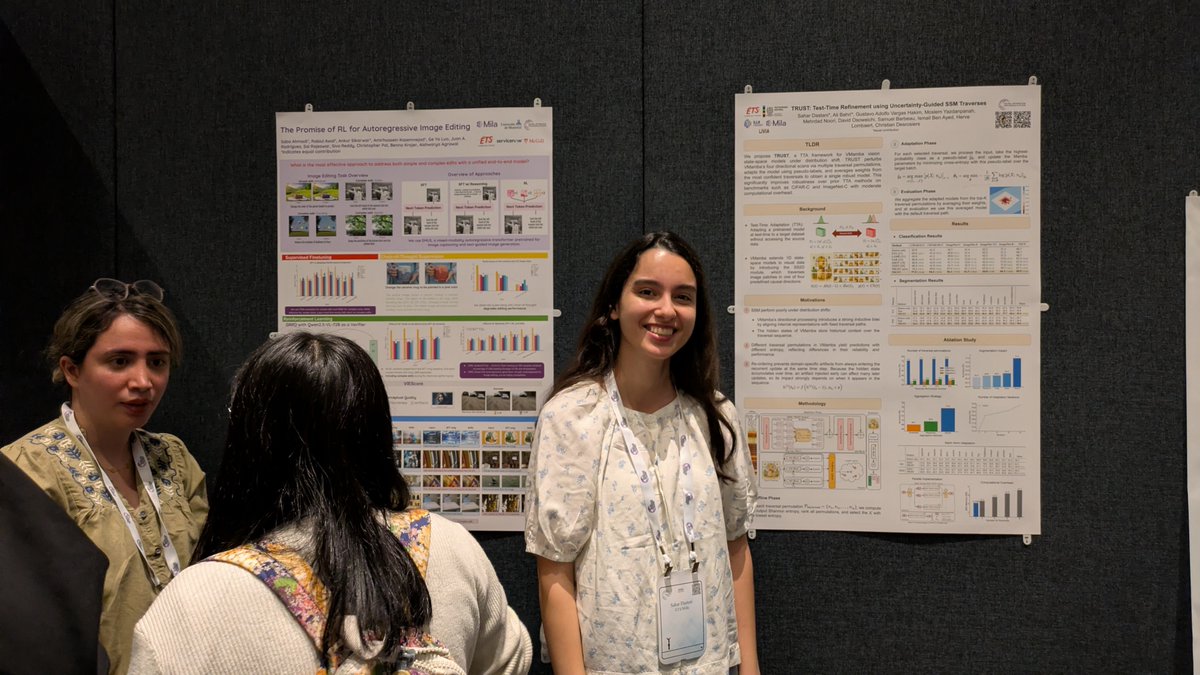

🔥 New work on AR-only image editing with RL! AR models usually trail diffusion, but our AR+RL (EARL) shows they can outperform the best diffusion baselines. We dive deep: SFT → RL → reasoning, showing a full pipeline for AR image editing. Details in the thread 👇

Congratulations to Siva Reddy (@sivareddyg), Core Academic Member at Mila, who has received the prestigious Outstanding Early Career Computer Science Researcher Award from @CSCan_InfoCan, the leading organization for the computer science community in Canada. mila.quebec/en/news/siva-r…

I am quite excited to share that our efforts in organizing and running "The VQA series of challenges" have been recognized with the 2025 Mark Everingham Prize -- thecvf.com/?page_id=529 for "stimulating a new strand of vision and language research". Thank you to the PAMI TC committee! I feel quite fortunate to have contributed to the VQA effort. Thank you @deviparikh @DhruvBatra_ for giving me this opportunity and for being awesome mentors! And thanks to my then colleague and now husband @yashgoyal_ for being my pillar of support throughout my research career! Last but not least, thank you @ayshrv for leading the organization of the last 3 VQA challenges! It was fun working with you! The vision-language landscape has evolved quite a bit since we first published our VQA paper! It's exciting to see how vision-language models are mainstream now! Back in those days, this line of work was a bit niche :) So, quite fortunate to have been a part of this exciting development!

I’m happy to share that our paper "Controlling Multimodal LLMs via Reward-guided Decoding" has been accepted to #ICCV2025! 🎉 w/ @proceduralia, @koustuvsinha, @adri_romsor, @michal_drozdzal, and @aagrawalAA 🔗 Read more: arxiv.org/abs/2508.11616 🧵 Here's what we did: