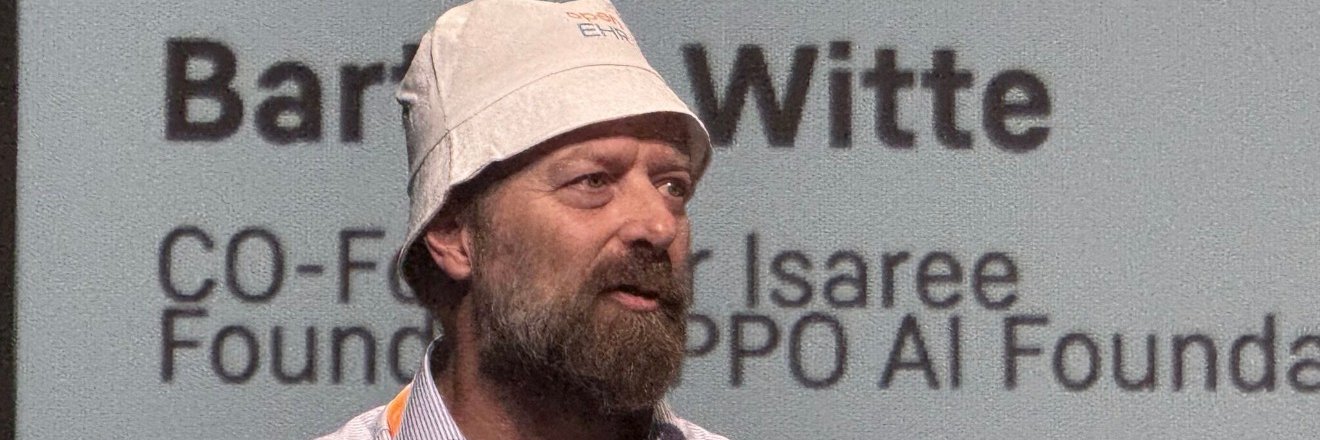

Bart de Witte

14.3K posts

@OpenMedFuture

🇧🇪 lived in 🇨🇭🇦🇹🇸🇰 now 🇩🇪 Med AI Democratisation 25+ yrs Digital Health leadership, father ex-IBM ex-SAP @isaree_ai https://t.co/TsBCgl9Ldm eu/acc

‼️🚨 ALARMING: Google now treats privacy as suspicious behavior by default.

Users of GrapheneOS, CalyxOS, /e/OS, and other deGoogled Android phones are being locked out of millions of websites unless they install the exact Google Play Services software they deliberately removed. GrapheneOS is recommended by the EFF and used by journalists, lawyers, and activists in high-risk environments. The audience most likely to read Google's data practices and refuse its terms is now flagged as fraudulent for that exact decision.

What happened?
▪️ Google announced "Cloud Fraud Defense" at Cloud Next on April 22-23, 2026, branding it "the next evolution of reCAPTCHA." Existing reCAPTCHA customers were auto-migrated.
▪️ When the system flags traffic as suspicious, the old click-the-bus puzzle is gone. Users get a QR code instead.
▪️ Scanning the QR code requires Google Play Services running on the device. Internet Archive snapshots show this requirement has been live since at least October 2025, silently rolled out for 7 months before anyone noticed.
▪️ No Play Services = no QR scan = locked out.

The bigger picture:
▪️ Google already tried this in 2023. It was called Web Environment Integrity (WEI), and it would have let Google decide which devices were "real enough" to access the web. Standards bodies and the public pushed back hard, and Google killed it. Three years later, the same idea is back, just hidden behind a QR code instead of a browser feature.
▪️ reCAPTCHA runs on millions of websites. Every developer who keeps using it is now, by default, telling deGoogled Android users they're not welcome...

Virtual private networks #VPN are increasingly used to bypass online age verification. Protecting children online is a priority, with new rules being implemented that require a minimum age for access to some services. Read👉 link.europa.eu/FGfr6C #DSA @EP_Justice @FZarzalejos

Perplexity and Computer now connect to premium health sources, starting with NEJM and BMJ Group, with 9 more medical journals and clinical databases on the way. Ask health questions and get answers cited from the same sources relied on by hospitals and research institutions.

The EU wants to prevent age verification systems from being circumvented with VPNs. Oh, I see: illegal mass migration could not be stopped, but when it comes to the free internet, new borders are now being put up everywhere🤔

So much use of LLMs by patients and physicians in the US

It’s hard to collect data about how bioterrorists might try to use AI. Few people want to create a bioweapon, and those who might aren’t talking. On the other hand, it's easy to predict how the news will cover bioterrorism and how social media responds. We have years of clickbait headlines and viral scareposts to train on. This makes it much simpler to build a biosecurity policy around avoiding bad headlines, rather than installing safeguards that would actually stop bad actors.

I have a PhD in Synthetic Biology. I know roughly what it would take to make a bioweapon. It would be enormously difficult and dangerous. Most of the work is in the physical world, where AI tools would be only marginally useful. None of the relevant uses of AI look anything like the examples cited in the NY Times story below.

- Printing 8,000 word protocols for methods already in the public domain
- Making a list of common cattle diseases
- Generating a shopping list of test tubes and media
- Describing how to use a weather balloon

The actual biosecurity questions that need answers are technical and too boring to cover in a major media outlet.

- How can we tell the difference between a dangerous DNA sequence and a harmless one?
- What separates a Python script used to discover a therapeutic from one used to discover a toxin?
- Which practical R&D bottlenecks are being rapidly opened by AI and which are not?

Much of the work of biology happens in the real world and doesn’t involve AI much at all. A serious biosecurity policy needs to focus on how bad actors might access physical hardware, specialized facilities and trained personnel. These are infinitely more important barriers than what Claude might tell someone about weather balloons.

My point here is that the people telling you to be afraid, and the media outlets who cover them, are putting us all in danger. The big AI shops are going to lock down their models, not to stop bad actors, but to stop bad press. Training models to stop using scary words is easy; the real work of biosecurity is hard. If we don’t push back, we’re going to end up with an industry dedicated to performative biosecurity theater. nytimes.com/2026/04/29/us/…