Brace(Hanyang) Zhao

@OptionsGod_lgd

PhD student at @Columbia working on post training generative models | ex intern at @Netflix @CapitalOne

🚨🚨 New paper on flow-matching value functions! Last year, we showed that training RL value functions with a flow-matching loss achieved SOTA results. But why does it work? And what could it tell us about other things that have nothing to do with VFs or even RL? Short answer: iterative compute, used correctly, can address feature plasticity in continual learning! 🧵⬇️

Introducing Cowork: Claude Code for the rest of your work. Cowork lets you complete non-technical tasks much like how developers use Claude Code.

Tired of going back to the original papers again and again? Our monograph offers a systematic and fundamental recipe you can rely on! 📘 We’re excited to release 《The Principles of Diffusion Models》, with @DrYangSong, @gimdong58085414, @mittu1204, and @StefanoErmon. It traces the core ideas that shaped diffusion modeling and explains how today’s models work, why they work, and where they’re heading. 🧵 You’ll find the link and a few highlights in the thread. We’d love to hear your thoughts and have you join the discussion! ⚡ Stay tuned for our markdown version, where you can drop your comments!

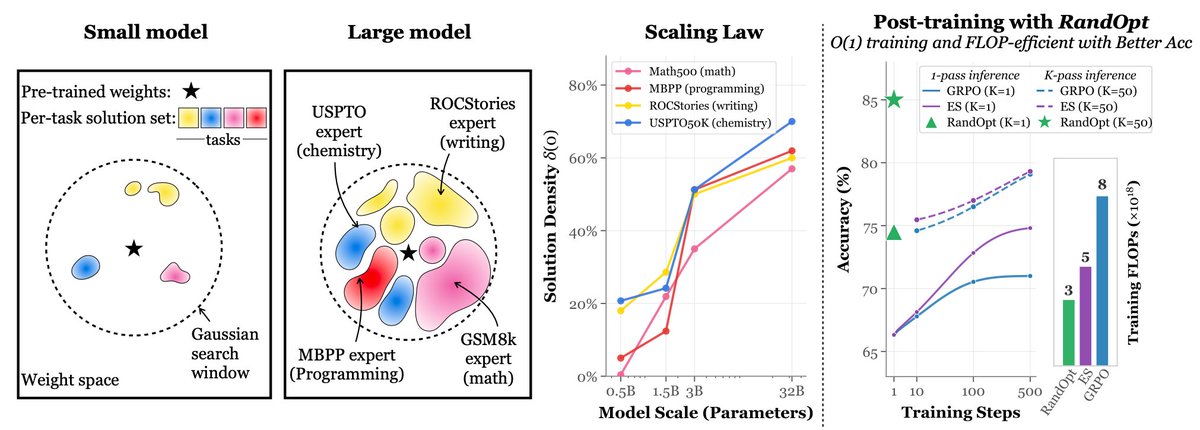

Nice empirical paper investigating the whole bag of tricks used in reasoning LLMs: arxiv.org/abs/2508.08221

Maybe a bit late, but I am thrilled to announce that our Scores as Actions paper (arxiv.org/abs/2502.01819) has been accepted to #icml2025 🎉 We propose a continuous-time RL method for RLHF of diffusion models (which are naturally continuous-time), and it performs better than discrete-time RL!