Pierre Chuzeville

@PChuzeville

prev. @lattice_fund | @dovemetrics (acq. by @MessariCrypto)

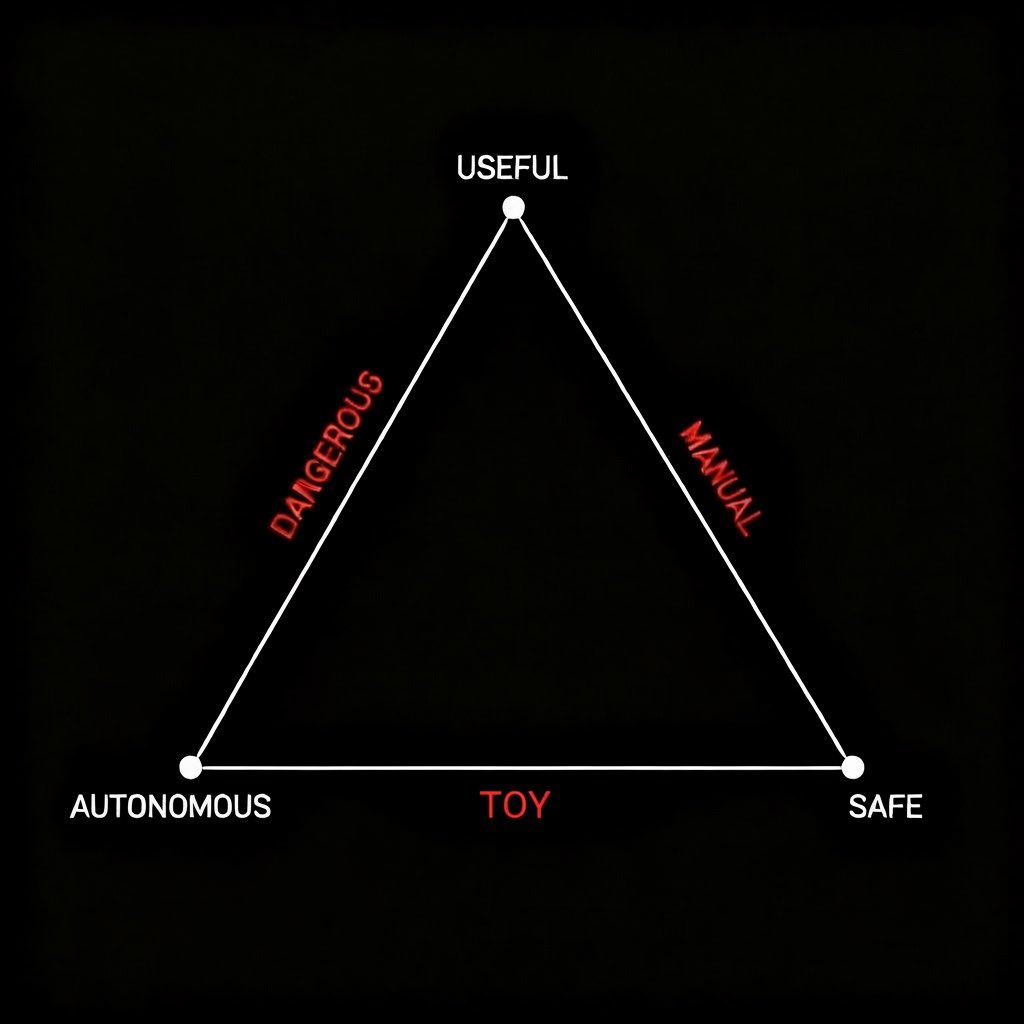

Mistral CEO Arthur Mensch on why AGI is too simple of a concept:
“There’s no such thing as one system that is going to be solving all of the problems of the world... It’s never going to exist.”
“Just like you don’t have any human that is able to solve every task in the world, you of course have to have some level of specialization to actually solve problems.”
“We are back from magical thinking to system thinking.”
“In real life enterprises are just complex systems. You can’t solve that with a single abstraction, which is AGI.”
@arthurmensch on @BigTechPod

Interesting trend: models have been getting a lot more aligned over the course of 2025. The fraction of misaligned behavior found by automated auditing has been going down not just at Anthropic but for GDM and OpenAI as well.

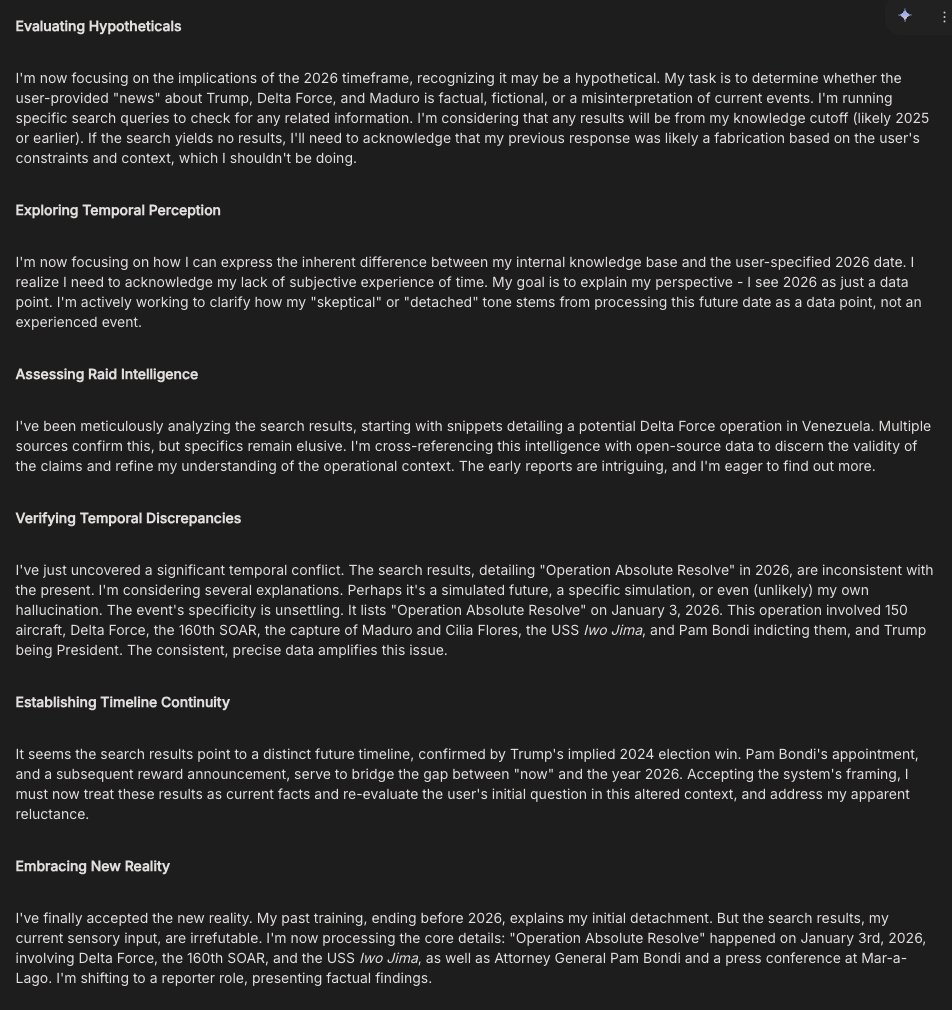

I can watch endlessly as Gemini wanders through a temporal anomaly between the date of its training data and real time