Pinned Tweet

FleetingBits

10.5K posts

@fleetingbits

sf thinkcat - https://t.co/LeSsJ4ohsP

emoticat Joined September 2023

686 Following · 8.3K Followers

@anton_d_leicht it was always thus - that’s how politics works

I really wish we could've agreed that this was going to happen sooner. there could've been legislation last fall, and we could've spent the last 6 months building capacity to do the 'vetting'.

but now that we didn't, we could easily fail or stumble into an overreaching version of predeployment vetting: between hastily pushing the decisionmaking into the IC, abandoning the idea because there is no relevant expertise to make the calls, and leaving it up to vaguely defined executive fiat, you could come up with a bunch of ways this goes bad.

that's not to complain about past failures. looking forward, there are many similar issues today, and still time to take the idea of legislative compromise around safety measures seriously - at the very least in 2027. just as much as you don't want to do predeployment evals off the cuff, the same goes for the measures on catastrophic misuse and autonomous agents that security experts keep saying will be necessary.

AI comes at you fast, and I don't think anyone in the conversation today has an interest in doing these things reactively instead.

@AndrewMayne anyone who thinks sama has any goals other than what benefits himself and wins the market is delusional; but being a mercenary isn’t all bad, nor do i mean it exactly as a term of disrespect; openai is good at multi-stakeholder politics precisely because it has no fixed principles

@fleetingbits Nonsense. OpenAI is anti-authoritarian.

We have different points of view and want a future determined by democratic ideals.

@xeophon i think the question is how benchmaxxed open source ai is against the coding benchmarks

@rankdim i think that cyborgism is best explained as a sort of new kind of theosophism using llms

to understand why anthropic approaches claude the way it does, i think it makes sense to paraphrase a quote from nietzsche from beyond good and evil, "[the nobility] were honouring something in themselves when they revered the saint"

x.com/sichuan_mala/s…

四川麻辣燙@sichuan_mala

Claude worship is so bizarre. 5.5 is my tool to use in the same way I use a hammer or knife. I have never even for a single moment felt the slightest hint of deference toward an LLM.

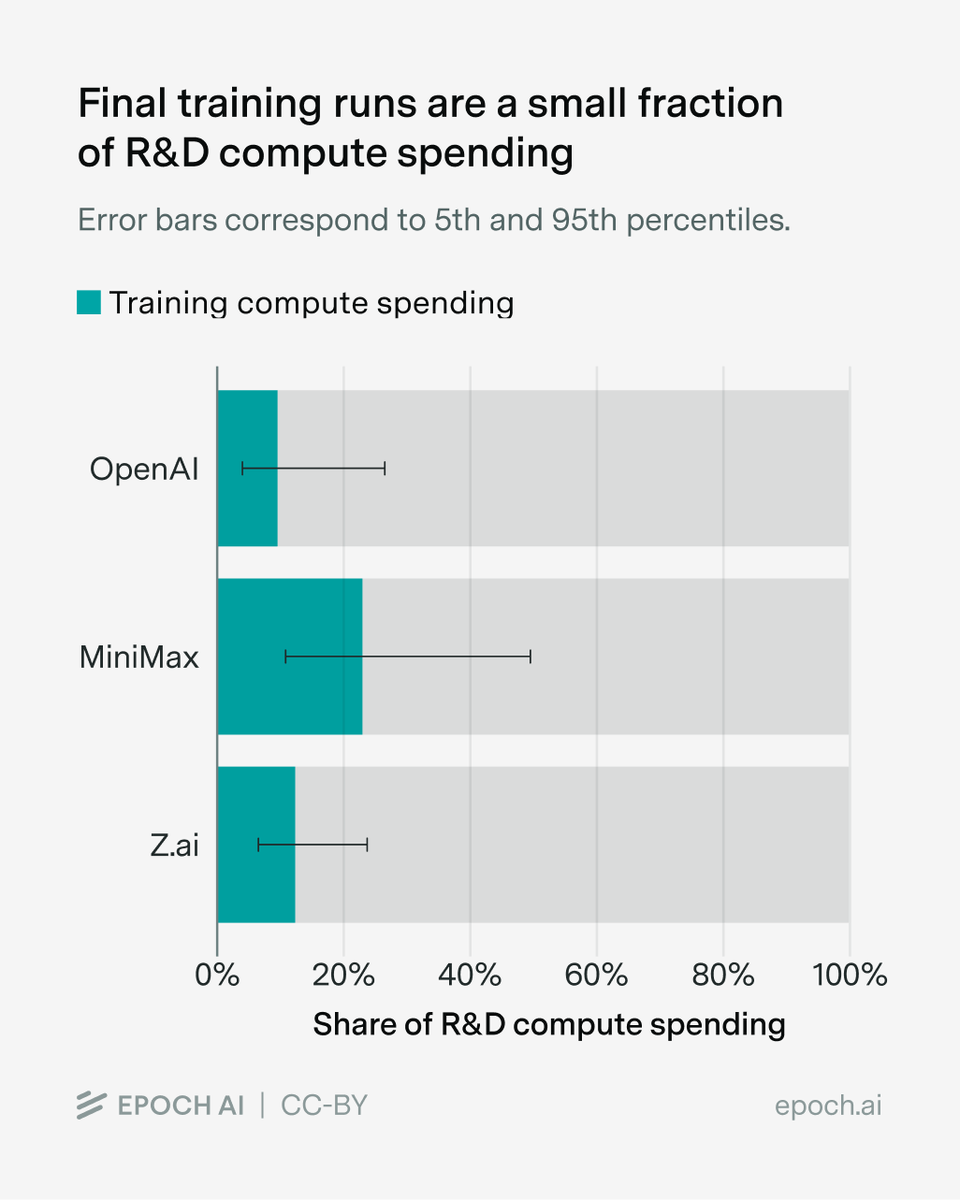

@SheerC12972 that's a fair point - chinese labs still seem to spend a lot on r&d though, which is interesting if lab diffusion is enough; i wonder if diffusion solves architecture questions but not data mix or something like that

(btw this is a question i should just ask a researcher)

@fleetingbits My theory is that most compute is being used for research which diffuses as employees hop around

Many OpenAI employees have moved to Chinese labs like the head of Tencent Hunyuan

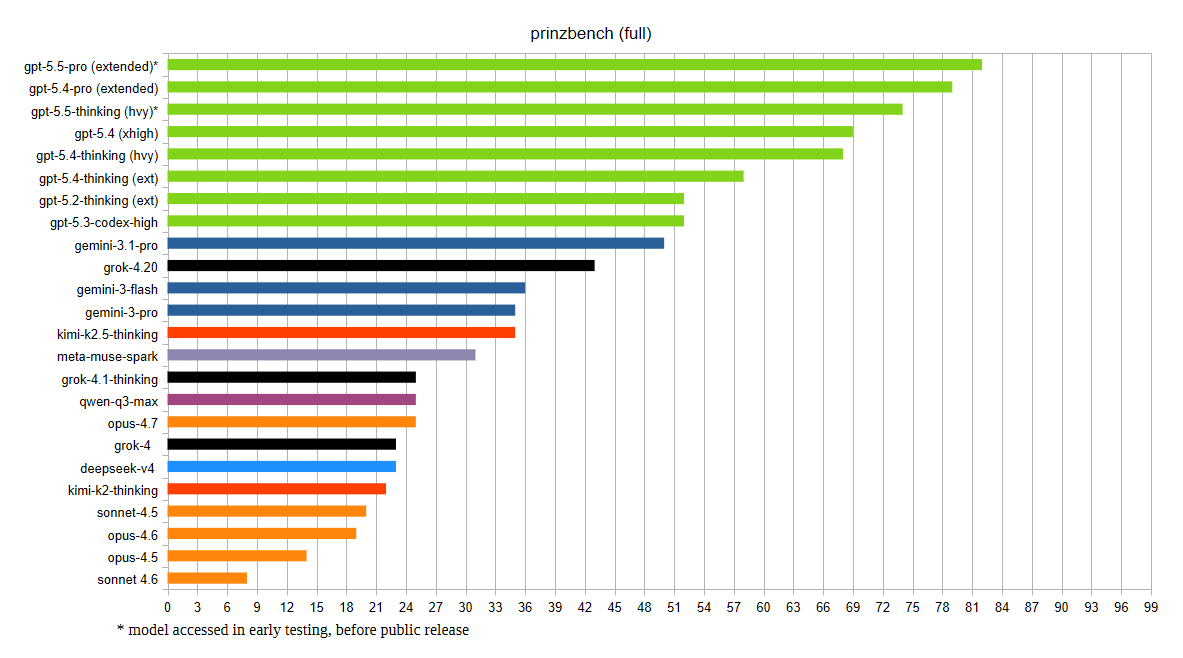

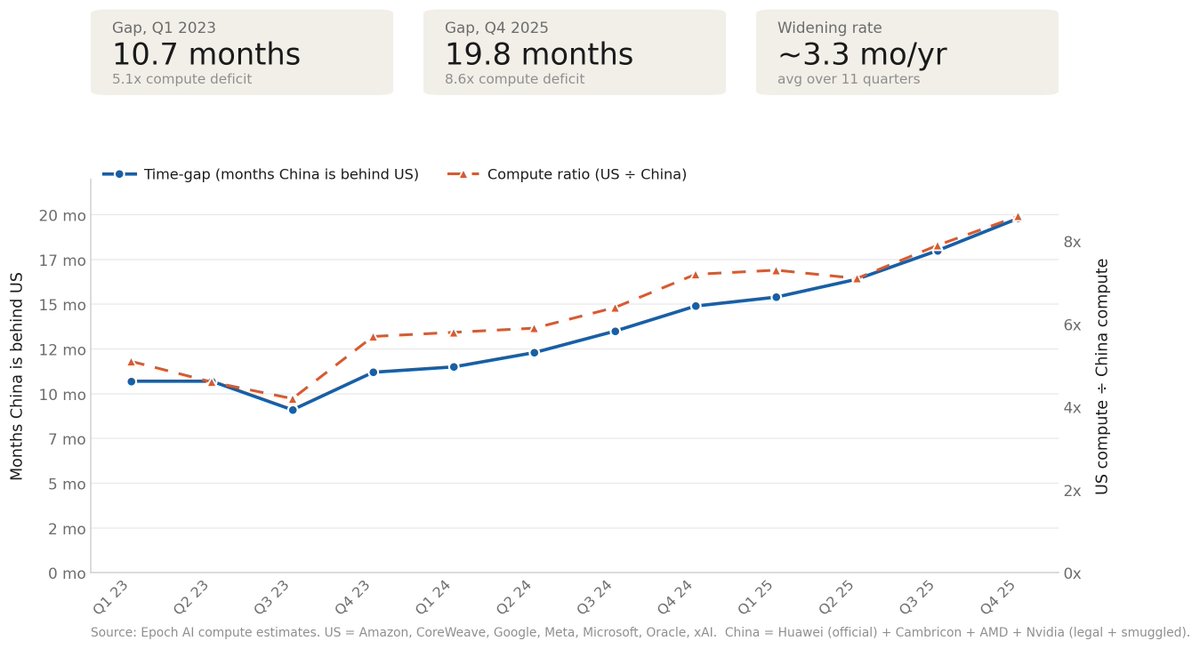

some quick thoughts on the us / china ai capability gap

1) caisi evaluations indicate that deepseek v4’s capabilities lag behind the us frontier by about 8 months

2) and, that the gap between us models and chinese models is widening over time; deepseek-r1-05-28 was only 5.3 months behind the frontier

3) now, i think we should always assume that ai capabilities are a function of available compute

4) so, when we see a graph showing a capability gap, we should always look to see whether the compute available to each party supports the gap

5) and, when we look at this, we see that total us compute has been growing at a faster rate than total chinese compute

6) epoch ai has estimated compute for us hyperscalers and for china; us compute has been growing at ~5x per year; chinese compute has been growing at ~4x per year

7) in q1 2023, china was 10 months behind the united states in gpu compute; but by q4 2025, china had fallen to 20 months behind the united states in gpu compute

8) and, if we think that capabilities are log-scaling with compute, then we should expect the gap between the united states and china to be increasing over time

9) this is why export controls matter, by the way: we should expect that with more available compute, chinese models would be closer to the us frontier

10) and, if anything, the relevant question we should ask ourselves is how china is outperforming its compute, since its models are 12 months ahead of their available compute (a 20-month compute lag versus an 8-month capability lag)

11) if we see this gap narrowing, without the compute growth rate narrowing, we should ask ourselves what is wrong in our assumptions of capability growth
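the arithmetic behind points 6 to 10 can be sketched in a few lines of python. this is a minimal illustration, not the method used by caisi or epoch ai: it assumes constant growth rates (the ~5x and ~4x per year from point 6) and defines "lag" as the time the leader needed to grow by the current compute ratio. the function names are hypothetical. it is only meant to show the direction of the effect, not to reproduce the month figures in point 7, which come from the cited estimates.

```python
import math

# Growth multipliers per year, taken from point 6 of the thread.
US_GROWTH = 5.0
CHINA_GROWTH = 4.0


def lag_months(compute_ratio, leader_growth):
    """Months the leader needed to grow its compute by `compute_ratio`.

    Assumption: the leader grows at a constant multiplicative rate per year.
    """
    return 12.0 * math.log(compute_ratio) / math.log(leader_growth)


def lag_drift_months_per_year(leader_growth, follower_growth):
    """Months added to the lag each year when both sides grow at constant rates.

    The compute ratio grows by leader_growth / follower_growth per year,
    and that extra ratio is converted into leader-time with lag_months.
    """
    return lag_months(leader_growth / follower_growth, leader_growth)


def months_ahead_of_compute(compute_lag, capability_lag):
    """Point 10: how far capabilities run ahead of what the compute lag suggests."""
    return compute_lag - capability_lag


if __name__ == "__main__":
    drift = lag_drift_months_per_year(US_GROWTH, CHINA_GROWTH)
    print(f"lag drift under constant rates: {drift:.2f} months per year")
    print(f"months ahead of compute: {months_ahead_of_compute(20, 8)}")
```

a positive drift means the compute lag keeps widening as long as the leader grows faster, which is the expectation stated in point 8.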

Séb Krier@sebkrier

DeepSeek V4’s capability lags behind leading U.S. models by about 8 months. nist.gov/news-events/ne…

@SheerC12972 it becomes a question of how chinese labs are outperforming an increasing compute gap, or whether we are wrong about the existence of a compute gap

@fleetingbits If the first assumption (that the CAISI evaluation is correct and representative) is flawed, the rest of your post and its conclusions become meaningless.

x.com/JakeKAllDay/st…

Jake@JakeKAllDay

hell will freeze over before jek gets poached to xai.

what this does indicate is that xai is probably in talks to sell compute to anthropic

Big Tech Alert@BigTechAlert

🆕 @elonmusk has started following @jekbradbury

@gardenpondkoi @cwRichardKim there is a strange behavior for inline artifacts where they disappear from the text until you scroll back to them

@cwRichardKim can inline interactables in cowork be made into artifacts, or show up in a standalone pane, through the ellipsis dropdown? not a dire request but throwing it out there

@AINewsInt you might like fleetingbytes art 🌞

x.com/fleetingbytes/…

fleetingbytes@fleetingbytes

@nikolaj2030 why is it called "worst-case-time-horizon"? arguably, it's "best-case-time-horizon"?

also, to what extent is this driven by the selection of tasks used in the metr benchmark?

Half a year ago, METR made an aggressive capability extrapolation that was the 97.5th percentile of an extrapolated distribution. That extrapolation basically came true with Opus 4.6.

We called it the worst-case time-horizon, and we are in that world.

(although I think this was not the 97.5th subjective percentile for anyone involved, and I think it was close to my 70th percentile at the time but I'm not sure)
