Parv Kapoor

91 posts

@Parvkpr

PhD-ing at CMU + interning at @msftresearch || previously @genrobotics_ai, USC, and @CompScienceCU

Pittsburgh, PA · Joined February 2023

392 Following · 214 Followers

Pinned Tweet

Parv Kapoor retweeted

@JohnCLangford will be presenting our recent work on a principled way to learn compact world models in transformers. If you’re at NeurIPS, please do check out the workshop! 😊

John Langford@JohnCLangford

Today's world model workshop embodied-world-models.github.io looks fun. And yes, I have my own definition :-), with the talk just after 1. For details see arxiv.org/pdf/2511.05963, with the help of @jayden_teoh_ @manan_tomar @KwangjunA @pratyusha_PS @riashatislam and Alex Lamb.

@pratyusha_PS @pratyushmaini Congratulations @pratyusha_PS, it was a delight interacting with you over the summer!

📢 Some big (& slightly belated) life updates!

1. I defended my PhD at MIT this summer! 🎓

2. I'm joining NYU as an Assistant Professor starting Fall 2026, with a joint appointment in Courant CS and the Center for Data Science. 🎉

🔬 My lab will focus on empirically studying the science of deep learning and applying deep learning to accelerate the natural sciences.

Very broadly interested in questions at the intersection of language, reasoning and sequential decision making. (Plus any other fun problems that catch our eye along the way!)

🚀 I am recruiting 2 PhD students for this cycle! If you're interested in joining, please apply here: cs.nyu.edu/dynamic/phd/ad… cds.nyu.edu/phd-admissions…

Is it just me, or did they use a Thee Sacred Souls instrumental for the intro background music?? (the "Will I See You Again" song) @1x_tech

1X@1x_tech

NEO The Home Robot Order Today

Parv Kapoor retweeted

How can we create a single navigation policy that works for different robots in diverse environments AND can reach navigation goals with high precision?

Happy to share our new paper, "VAMOS: A Hierarchical Vision-Language-Action Model for Capability-Modulated and Steerable Navigation"!

📜 Paper: arxiv.org/abs/2510.20818

🌐 Website: vamos-vla.github.io

I'll be joining the faculty @JohnsHopkins late next year as a tenure-track assistant professor in @JHUCompSci

Looking for PhD students to join me tackling fun problems in robot manipulation, learning from human data, understanding+predicting physical interactions, and beyond!

@chaitanya1cha thanks for the kind words! that is actually a very interesting direction, i might just look at this next 👀

Really interesting application of constrained decoding!

I think this could be scaled to test-time robot-embodiment adaptation as well, where VLA outputs are constrained to predict actions based on the current embodiment configurations.

Parv Kapoor@Parvkpr

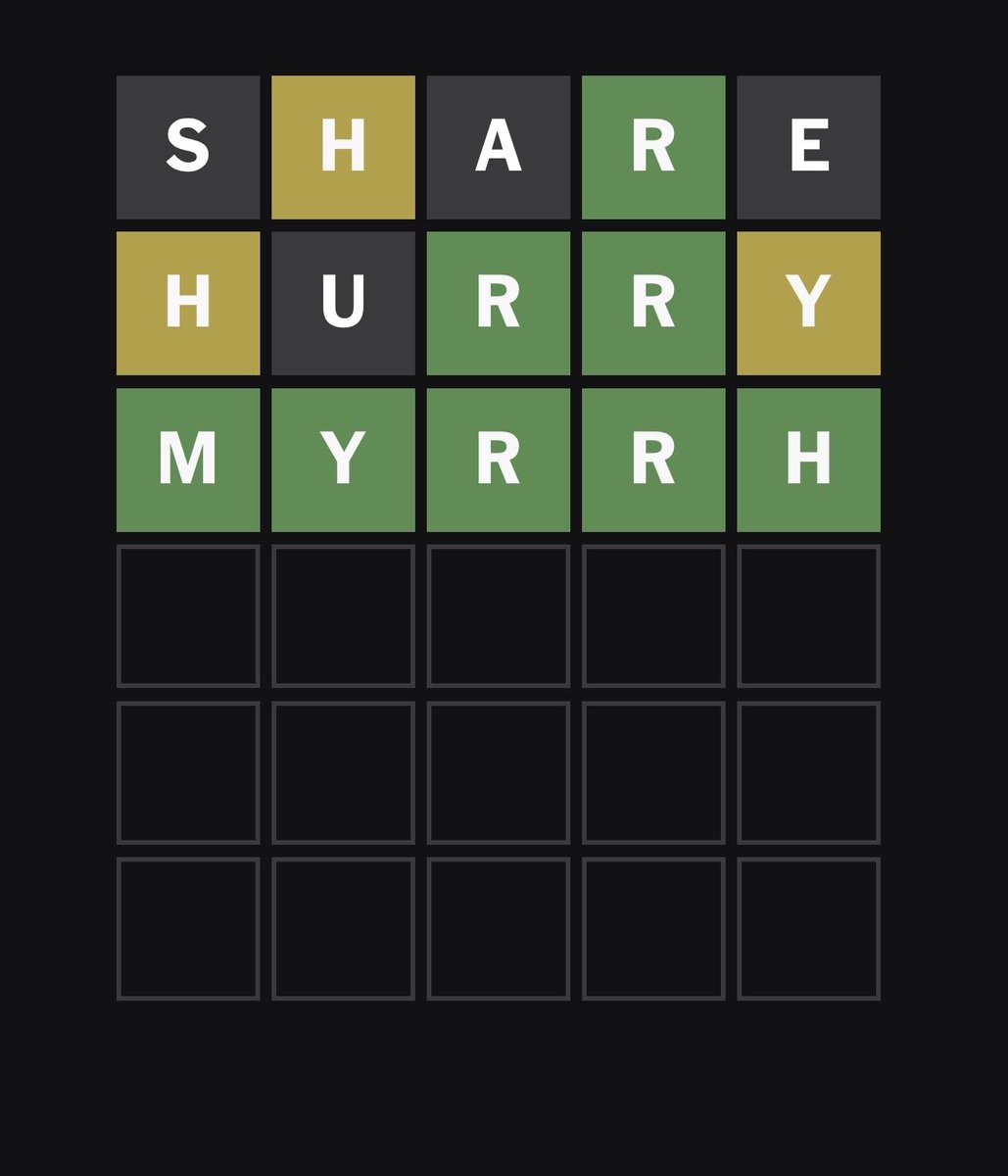

Last year, I came across the idea of constrained decoding (I know, late to the party) and was fascinated. The ability to enforce constraints for LLMs at inference time without fine-tuning is a powerful idea. It got me thinking: can we do this for robot foundation models? 1/n🧵

LLMs got JSON constraints, now RFMs get safety constraints.

With SafeDec, you can plug in contextual rules and make your model behave safely.

Read our full blog here 👇

parvkpr.github.io/projects/safed…

n/n🧵
